Participant fatigue is not a new problem, but it is getting dramatically worse. The average knowledge worker now receives 4.7 research requests per month -- up from 1.2 a decade ago. Panel quality is declining across every major provider. Response rates for B2B research have dropped below 5% for cold outreach. And the participants who do show up are increasingly likely to straight-line through surveys, give one-word interview answers, and mentally check out before you reach your most important questions.

The standard response has been to make research shorter. Cut the interview from 60 minutes to 30. Reduce the survey from 40 questions to 15. Pay more for less time. But compression is not a solution to fatigue -- it is a capitulation. You get less insight, not better engagement.

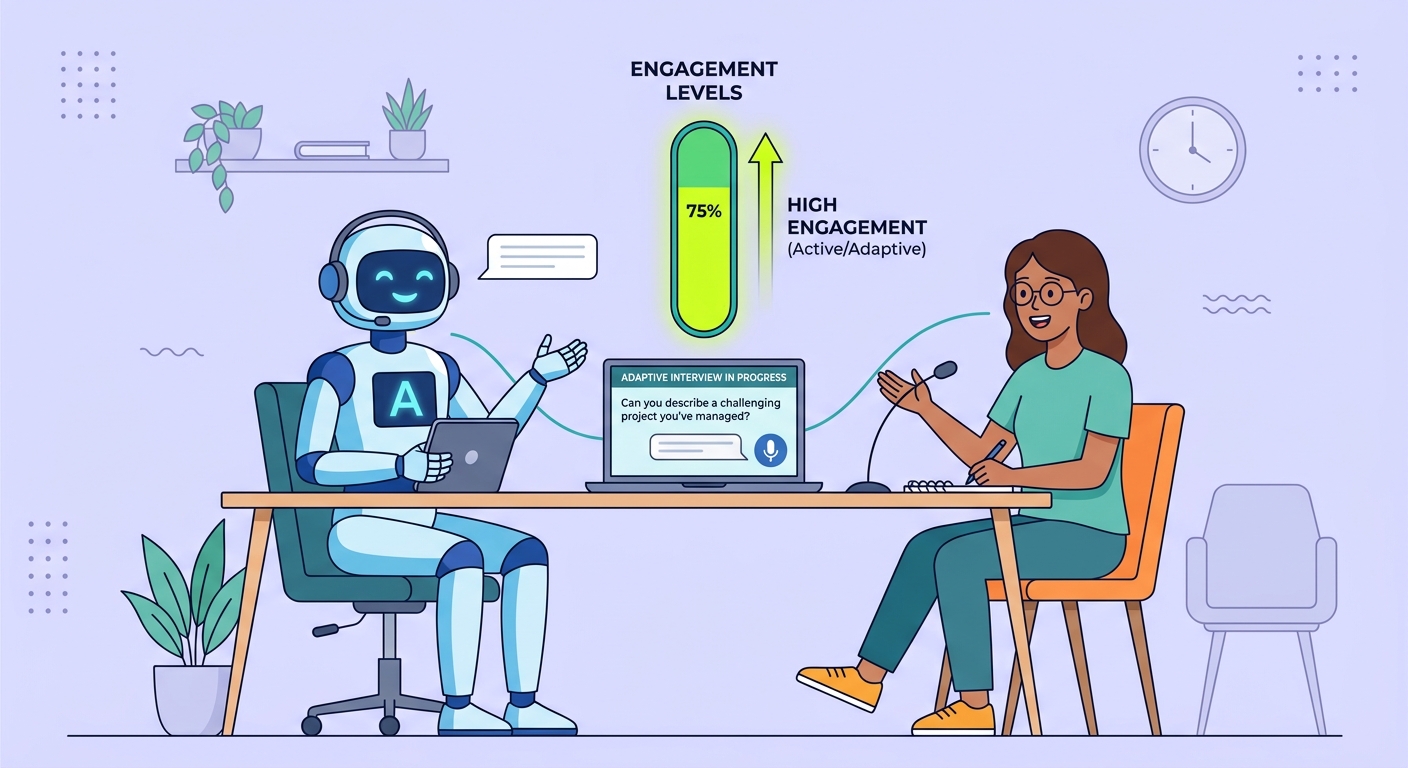

AI-adaptive interviews represent a fundamentally different approach. Instead of designing a fixed protocol and hoping participants stay engaged through all of it, adaptive systems dynamically adjust the conversation based on real-time signals of participant engagement, knowledge depth, and emotional state. The result is not a shorter interview. It is a smarter one.

Why Participant Fatigue Is an Engagement Problem, Not a Time Problem

The dominant mental model for participant fatigue is simple: people have a limited attention budget, and research that exceeds that budget produces bad data. This model predicts that shorter research always produces better data. It is wrong.

Cognitive psychology research on flow states and intrinsic motivation tells a different story. People routinely sustain deep engagement for hours on tasks that match their skill level, provide clear feedback, and maintain an appropriate challenge gradient. Video games, compelling conversations, and absorbing work all demonstrate that human attention is not a fixed resource that depletes linearly with time.

Participant fatigue is better understood as an engagement failure. It occurs when the research interaction becomes boring, confusing, repetitive, or irrelevant to the participant's own experience. A 90-minute interview where every question feels relevant and the conversation flows naturally can yield better data in minute 85 than a 20-minute interview where the participant checked out at minute 8 because the questions were generic.

This reframing has profound implications for research design. The goal is not to minimize time. It is to maximize engagement-per-minute. And that requires understanding what drives engagement loss and designing systems that counteract it in real time.

The Four Drivers of Engagement Loss

Research participants disengage for four primary reasons, and each requires a different intervention.

Irrelevance. Questions that do not match the participant's experience trigger immediate disengagement. A participant who uses your product exclusively for reporting should not spend ten minutes answering questions about the data import workflow they have never touched. Fixed protocols cannot avoid this without extensive pre-screening that itself causes fatigue.

Repetition. When a participant has thoroughly explained their perspective on a topic, asking the same question from a different angle feels disrespectful of their time. Traditional interview guides often include redundant questions as a reliability check, but participants experience them as evidence that the interviewer is not listening.

Cognitive mismatch. Questions that are too simple bore expert participants. Questions that are too complex frustrate novices. A fixed protocol calibrated for the average participant fits almost no one: it is too basic for experts and too demanding for novices, much as monitoring systems tuned for average-case behavior miss the signals that matter.

Emotional disconnect. Research interactions that feel transactional -- read question, receive answer, read next question -- fail to create the psychological safety necessary for honest, detailed responses. Participants who feel unheard give surface-level answers.

How AI Adaptive Interviews Address Each Driver

AI-adaptive interview systems use real-time natural language understanding to detect signs of each of these four drivers and modify the conversation dynamically.

Relevance routing. Based on early responses, the system identifies which topics and features are relevant to this specific participant and routes the conversation accordingly. A participant who mentions they primarily use the analytics dashboard gets deeper questions about data visualization and reporting. A participant who focuses on collaboration features gets questions about team workflows and permissions. The interview guide is not a fixed list but a decision tree that the AI navigates based on participant context.

This works much like the memory architectures that make AI interactions stateful: the system builds a running model of this participant's experience and uses it to select the most informative next question, rather than proceeding through a generic sequence.
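To make relevance routing concrete, here is a minimal sketch. It assumes a keyword-overlap stand-in for the natural language understanding step, and every name in it (`Topic`, `ParticipantModel`, `next_topic`, the cue words) is hypothetical rather than taken from any particular product.

```python
import re
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class Topic:
    name: str
    keywords: set      # hypothetical cue words signalling relevance
    priority: int = 1  # higher means more important to the research team


@dataclass
class ParticipantModel:
    """Running model of what this participant has shown experience with."""
    mentions: dict = field(default_factory=dict)
    covered: set = field(default_factory=set)

    def observe(self, answer: str, topics: list) -> None:
        # Keyword overlap is an assumption standing in for real NLU.
        words = set(re.findall(r"[a-z]+", answer.lower()))
        for topic in topics:
            hits = len(words & topic.keywords)
            if hits:
                self.mentions[topic.name] = self.mentions.get(topic.name, 0) + hits


def next_topic(model: ParticipantModel, topics: list) -> Optional[Topic]:
    """Pick the uncovered topic with the strongest evidence of relevance."""
    candidates = [
        t for t in topics
        if t.name not in model.covered and model.mentions.get(t.name, 0) > 0
    ]
    if not candidates:
        return None  # a real system might fall back to plain priority order
    return max(candidates, key=lambda t: (model.mentions[t.name], t.priority))


topics = [
    Topic("reporting", {"dashboard", "reports", "charts"}, priority=2),
    Topic("data_import", {"import", "csv", "upload"}, priority=3),
    Topic("collaboration", {"team", "share", "permissions"}, priority=1),
]
model = ParticipantModel()
model.observe("I mostly live in the analytics dashboard and export charts weekly.", topics)
chosen = next_topic(model, topics)
```

Here the participant never mentions importing data, so the data import topic is never selected even though the research team gave it the highest priority. Whether priority or observed relevance should win is a design choice; this sketch lets relevance lead and uses priority only as a tie-breaker.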

Redundancy detection. When a participant has already provided a comprehensive answer to a topic, the system recognizes this and skips related questions that would feel repetitive. If a participant's answer to "How do you typically start your workday with the product?" already covers their workflow, feature usage, and pain points, there is no need to ask those as separate follow-up questions. The AI extracts the relevant information and moves to genuinely new territory.
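One way to picture redundancy detection is as slot coverage: each planned question targets one or more pieces of information, and a question is skipped once all of its targets have already been supplied. The sketch below assumes hand-written cue words in place of a real language model, and the slot names, cues, and questions are all illustrative.

```python
import re

# Hypothetical cue words that suggest an answer touched an information slot.
SLOT_CUES = {
    "workflow": {"first", "then", "start", "open"},
    "feature_usage": {"dashboard", "export", "filter"},
    "pain_points": {"slow", "annoying", "frustrating", "confusing"},
}

# Each planned follow-up lists the slots it is meant to fill.
QUESTIONS = [
    ("Which features do you use most?", {"feature_usage"}),
    ("What frustrates you about the product?", {"pain_points"}),
    ("How do you share results with your team?", {"collaboration"}),
]


def covered_slots(answer: str) -> set:
    """Return the slots for which the answer contains at least one cue word."""
    words = set(re.findall(r"[a-z]+", answer.lower()))
    return {slot for slot, cues in SLOT_CUES.items() if words & cues}


def remaining_questions(answer: str, questions=QUESTIONS) -> list:
    """Keep only questions with at least one slot the answer did not cover."""
    covered = covered_slots(answer)
    return [text for text, slots in questions if not slots <= covered]


answer = "I start by opening the dashboard, then export a report, which is slow."
todo = remaining_questions(answer)
```

With this answer, only the collaboration question survives, because the workday answer already supplied workflow, feature usage, and pain points.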

Complexity calibration. The system assesses each participant's domain expertise from their vocabulary, response depth, and conceptual sophistication, then adjusts question complexity accordingly. Expert users receive nuanced questions about edge cases and architectural tradeoffs. Novice users receive concrete questions about specific tasks and outcomes. Both participants feel the interview is pitched at their level, which sustains engagement.
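As a rough illustration of complexity calibration, the sketch below scores expertise from two of the signals named above, vocabulary and response depth, and picks a question variant accordingly. The term list, weights, caps, and threshold are all assumptions chosen for readability, not tuned values.

```python
import re

# Hypothetical domain vocabulary; a real system would learn or curate this.
DOMAIN_TERMS = {"schema", "latency", "api", "pipeline", "sso", "webhook"}


def expertise_score(answers: list) -> float:
    """Combine domain-term density and average answer length into a 0..1 score."""
    words = [w for a in answers for w in re.findall(r"[a-z]+", a.lower())]
    if not words:
        return 0.0
    term_density = sum(w in DOMAIN_TERMS for w in words) / len(words)
    avg_length = len(words) / len(answers)
    # Illustrative weights: density saturates at 10%, length at 40 words.
    return 0.7 * min(term_density / 0.10, 1.0) + 0.3 * min(avg_length / 40, 1.0)


def pick_variant(score: float, variants: dict, threshold: float = 0.5) -> str:
    """Choose the expert or novice phrasing of the same underlying question."""
    return variants["expert"] if score >= threshold else variants["novice"]


variants = {
    "expert": "Which edge cases forced you to work around the product's architecture?",
    "novice": "Can you walk me through the last task you completed in the product?",
}
novice_answers = ["I click the button and it makes a chart."]
expert_answers = [
    "We hit the API through a webhook pipeline, but latency spikes when the schema changes."
]
```

Both variants target the same research topic, so the answers remain comparable at the topic level even though the wording differs.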

Conversational naturalness. Unlike rigid survey instruments, adaptive AI interviews maintain a conversational flow that includes acknowledgment of previous answers, natural transitions between topics, and appropriate follow-up on unexpected but valuable tangents. This creates the engagement dynamics of a skilled human interviewer while maintaining the consistency and scalability that human moderators struggle to achieve without introducing bias.

The Data Quality Evidence

The engagement benefits of adaptive interviews are not theoretical. Early implementations show measurable improvements in data quality metrics.

Response length -- a proxy for engagement depth -- increases by 40-60% in adaptive interviews compared to fixed protocols of the same total duration. Participants elaborate more when questions feel relevant and the conversation feels responsive.

Informational uniqueness -- the proportion of a participant's responses that contain novel information not captured from other participants -- increases by approximately 25%. This is because adaptive routing pushes each conversation into the territory most unique to that participant rather than covering the same generic ground with everyone.
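The article does not say how informational uniqueness is computed. One plausible reading, sketched here as an assumption, is that each participant's responses are first coded into a set of findings, and uniqueness is the share of those findings that no other participant produced. The function name and the sample codes are hypothetical.

```python
def informational_uniqueness(codes_by_participant: dict) -> dict:
    """For each participant, the fraction of their coded findings seen in no one else."""
    result = {}
    for pid, codes in codes_by_participant.items():
        # Union of every other participant's findings.
        others = set().union(
            *(c for p, c in codes_by_participant.items() if p != pid)
        )
        result[pid] = len(codes - others) / len(codes) if codes else 0.0
    return result


coded = {
    "p1": {"slow_export", "missing_filters", "sso_setup", "api_limits"},
    "p2": {"slow_export", "missing_filters"},
    "p3": {"slow_export", "pricing_confusion"},
}
scores = informational_uniqueness(coded)
```

In this toy data, `p1` and `p3` each score 0.5 and `p2` scores 0.0, since everything `p2` said was also said by someone else.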

Participant satisfaction scores, measured post-interview, consistently rate adaptive interviews as more respectful of the participant's time and more interesting, and participants are more likely to agree to future research. In an environment where participant recruitment is increasingly difficult and expensive, this last point alone justifies the investment.

Perhaps most importantly, actionable insight yield -- the number of product-relevant findings that research teams extract per interview -- increases by 30-50%. The effect compounds: better engagement produces richer data, which in turn supports more useful analysis. This mirrors what the industry is seeing more broadly, where AI adoption at scale creates qualitatively different outcomes, not just incremental improvements.

Implementation Considerations

Adaptive AI interviews are not a drop-in replacement for existing research workflows. They require rethinking several aspects of research design.

Topic mapping, not question writing. Instead of writing a linear interview guide, researchers define a topic map: the universe of things they want to learn, organized by priority, relevance conditions, and depth targets. The AI uses this map to construct a unique interview path for each participant. This requires more upfront research design effort but produces dramatically better results.
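A topic map of this kind could be represented as a small data structure. The sketch below is one possible shape, assuming each topic carries a priority, a relevance condition, and a depth target as described above. The `TopicNode` fields, the `plan_path` function, and the question budget are illustrative names, not an established format.

```python
from dataclasses import dataclass
from typing import Callable


def always_relevant(profile: dict) -> bool:
    return True


@dataclass
class TopicNode:
    name: str
    priority: int       # 1 is most important
    depth_target: int   # how many questions to aim for on this topic
    relevant_if: Callable[[dict], bool] = always_relevant


def plan_path(topic_map: list, profile: dict, question_budget: int) -> list:
    """Build a per-participant path of (topic, depth) pairs within a question budget."""
    path = []
    for topic in sorted(topic_map, key=lambda t: t.priority):
        if not topic.relevant_if(profile):
            continue  # skip topics this participant has no experience with
        depth = min(topic.depth_target, question_budget)
        if depth == 0:
            break  # budget exhausted
        path.append((topic.name, depth))
        question_budget -= depth
    return path


topic_map = [
    TopicNode("onboarding", priority=2, depth_target=2),
    TopicNode("reporting", 1, 3, lambda p: "reporting" in p["features"]),
    TopicNode("data_import", 1, 3, lambda p: "import" in p["features"]),
]
profile = {"features": {"reporting"}}
path = plan_path(topic_map, profile, question_budget=4)
```

For a participant who only uses reporting, the plan spends three questions on reporting and the remaining one on onboarding, and never raises data import.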

Analysis-ready structuring. Because each participant answers different questions, traditional analysis approaches that compare responses to the same question across participants break down. Adaptive systems need to tag and structure responses by topic and subtopic so that analysis can aggregate across the varied conversation paths. This is a data architecture challenge, not a research methodology challenge.
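A minimal sketch of what analysis-ready structuring might look like follows. It assumes every response is stored with a topic and subtopic tag so that analysis can group by tag instead of by question. The `TaggedResponse` record and helper names are hypothetical.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class TaggedResponse:
    participant_id: str
    topic: str
    subtopic: str
    text: str


def group_by_topic(responses: list) -> dict:
    """Group responses by (topic, subtopic), regardless of which question elicited them."""
    grouped = defaultdict(list)
    for r in responses:
        grouped[(r.topic, r.subtopic)].append(r)
    return dict(grouped)


def coverage(responses: list) -> dict:
    """Count distinct participants who spoke to each (topic, subtopic)."""
    return {
        key: len({r.participant_id for r in rs})
        for key, rs in group_by_topic(responses).items()
    }


responses = [
    TaggedResponse("p1", "reporting", "export", "Exports take minutes on big dashboards."),
    TaggedResponse("p2", "reporting", "export", "I export to CSV every Friday."),
    TaggedResponse("p2", "collaboration", "permissions", "Guests see too much."),
    TaggedResponse("p1", "reporting", "export", "PDF export drops the legend."),
]
counts = coverage(responses)
```

Coverage counts like these also show where the adaptive paths left a topic thin, which is useful when deciding whether a finding rests on enough participants.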

Calibration and testing. Adaptive systems need to be calibrated against human interviewer performance to ensure they maintain research quality standards. This means running parallel studies where the same participants are interviewed by both the AI system and a skilled human moderator, then comparing data quality metrics. Initial calibration is time-intensive but is a one-time cost per research domain.
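For the parallel study, a simple starting point is a paired comparison: for each participant interviewed under both conditions, take the difference in a chosen data quality metric and summarize those differences. The sketch below assumes the metric is already computed per participant. The function name and sample numbers are illustrative, and a real study would add a significance test and account for order effects.

```python
from statistics import mean, stdev


def paired_difference(ai_scores: dict, human_scores: dict) -> dict:
    """Summarize AI-minus-human metric differences for participants seen by both."""
    common = sorted(ai_scores.keys() & human_scores.keys())
    if not common:
        raise ValueError("no participant was interviewed under both conditions")
    diffs = [ai_scores[p] - human_scores[p] for p in common]
    return {
        "n": len(diffs),
        "mean_diff": mean(diffs),
        "sd_diff": stdev(diffs) if len(diffs) > 1 else 0.0,
    }


# Hypothetical metric: findings extracted per interview.
ai = {"p1": 12, "p2": 9, "p3": 11}
human = {"p1": 10, "p2": 10, "p3": 8, "p4": 7}
summary = paired_difference(ai, human)
```

Participants who appear in only one condition, such as `p4` here, are dropped, because the comparison is only meaningful within the same person.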

Ethical considerations. Adaptive systems that detect emotional state raise important questions about participant consent and data use. Participants should know that the system is adapting to their responses and engagement signals. Transparency about the AI's role is not just ethically required -- it is practically beneficial, as participants who understand the system tend to engage more authentically.

The Future of Participant-Centric Research

The participant fatigue crisis is a symptom of a research industry that has historically designed for researcher convenience rather than participant experience. Fixed protocols exist because they are easy for researchers to write, administer, and analyze. They are not optimized for the participant's cognitive experience.

AI-adaptive interviews flip this dynamic. The complexity moves from the participant to the system. The participant has a natural, engaging conversation. The system handles the complexity of routing, adaptation, redundancy detection, and structured data extraction behind the scenes.

This is not a marginal improvement. It is a structural shift in how qualitative research operates. As participant pools become more fatigued and harder to recruit, the research teams that adopt adaptive approaches will have a systematic advantage in both data quality and research throughput.

The question is not whether participant-adaptive research will become the standard. It is whether your team will adopt it before your participant access degrades to the point where fixed-protocol research produces data too poor to act on.