Cross-cultural qualitative research is broken. Not in the dramatic, sky-is-falling sense, but in the quiet, systemic way that produces insights nobody trusts enough to act on.

Here is the pattern I see repeatedly: A Fortune 500 brand wants to understand Hispanic consumers. They commission a study. A research firm recruits 30 participants across three cities, runs focus groups in English and Spanish, transcribes everything, and delivers a deck eight weeks later. The insights are surface-level. The cultural nuance is flattened. And the CMO quietly shelves the report because it tells them what they already assumed.

This is not a people problem. The researchers are talented. The participants are genuine. The methodology is textbook. The problem is structural. Traditional qualitative research was built for cultural homogeneity, and we have been bolting on multilingual and multicultural capabilities as afterthoughts ever since.

AI does not fix this automatically. But the right AI-powered tools, deployed with cultural intentionality, fundamentally change what is possible. Let me lay out exactly how.

The Real Cost of Cultural Blind Spots in Qualitative Research

Before we talk about solutions, let us be honest about the problem.

The U.S. Census Bureau projects that by 2045, the United States will become majority-minority. Hispanic and Latino consumers already represent $2.8 trillion in purchasing power. Asian American purchasing power exceeds $1.3 trillion. Black consumers drive cultural trends that shape mainstream markets.

Yet most qualitative research still treats these populations as segments to be studied rather than communities to be understood. The methodological gaps are real:

Language as a barrier, not a lens. Most multilingual research treats translation as a logistics problem. Get the discussion guide into Spanish. Hire a simultaneous interpreter. Translate the transcripts back to English. At every step, meaning leaks. Idioms flatten. Emotional texture disappears. The participant who code-switches between English and Spanish mid-sentence — which is how millions of bicultural Americans actually talk — becomes a data quality problem instead of a rich signal.

Coding frameworks built on dominant-culture assumptions. When a research team in New York develops a codebook for analyzing consumer attitudes toward financial services, they bring implicit assumptions about individualism, risk tolerance, and family decision-making that may not map onto collectivist cultural frameworks. The codes themselves become instruments of cultural bias, filtering out exactly the insights the study was designed to capture.

Sample sizes that mock statistical reality. Qualitative research already works with small samples. Cross-cultural qual research subdivides those small samples further — by language, generation, acculturation level, geography. You end up with cell sizes of four or five people carrying the representational weight of entire cultural communities. Nobody says this out loud, but everyone in the room knows the insights are fragile.

Timelines that kill relevance. A properly executed cross-cultural qualitative study with in-language moderation, cultural consultation, back-translation of materials, and nuanced analysis takes 10 to 16 weeks. In a market where consumer sentiment shifts monthly, that timeline turns insights into history.
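
To make the sample-size point above concrete, here is a rough arithmetic sketch in Python. The 30-participant total comes from the example study in the introduction. The split dimensions and their level counts (two languages, three cities, three generations) are illustrative assumptions, not figures from any real study, and the helper name `average_cell_size` is hypothetical.

```python
from math import prod

def average_cell_size(total_participants: int, levels_per_dimension: dict[str, int]) -> float:
    """Average participants per cell when the sample is split evenly
    across every combination of the given dimensions."""
    cells = prod(levels_per_dimension.values())
    return total_participants / cells

# Assumed design: 30 participants, split by language and city.
design = {"language": 2, "city": 3}
print(average_cell_size(30, design))            # 5.0

# Adding one more cut (three generations) triples the number of cells.
design["generation"] = 3
print(round(average_cell_size(30, design), 2))  # 1.67
```

Under these assumptions, two cuts already produce the five-person cells described above, and a third cut leaves fewer than two people per cell.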

These are not new complaints. What is new is that we finally have tools capable of addressing them at a structural level.

How AI Actually Transforms Multilingual Qualitative Analysis

Let me be specific about what AI can and cannot do here, because the hype cycle around AI in research has produced more confusion than clarity.

What AI Does Well in Cross-Cultural Research

Real-time multilingual transcription and analysis. Modern AI transcription handles Spanish, Mandarin, Hindi, Tagalog, Arabic, and dozens of other languages with accuracy rates that now match or exceed human transcriptionists for most use cases. More importantly, AI can process code-switching — those mid-sentence language shifts that are the hallmark of bicultural speech — without treating it as noise. Auto-transcription in the participant's language of choice means you capture the actual words people use, not a translator's approximation of them.

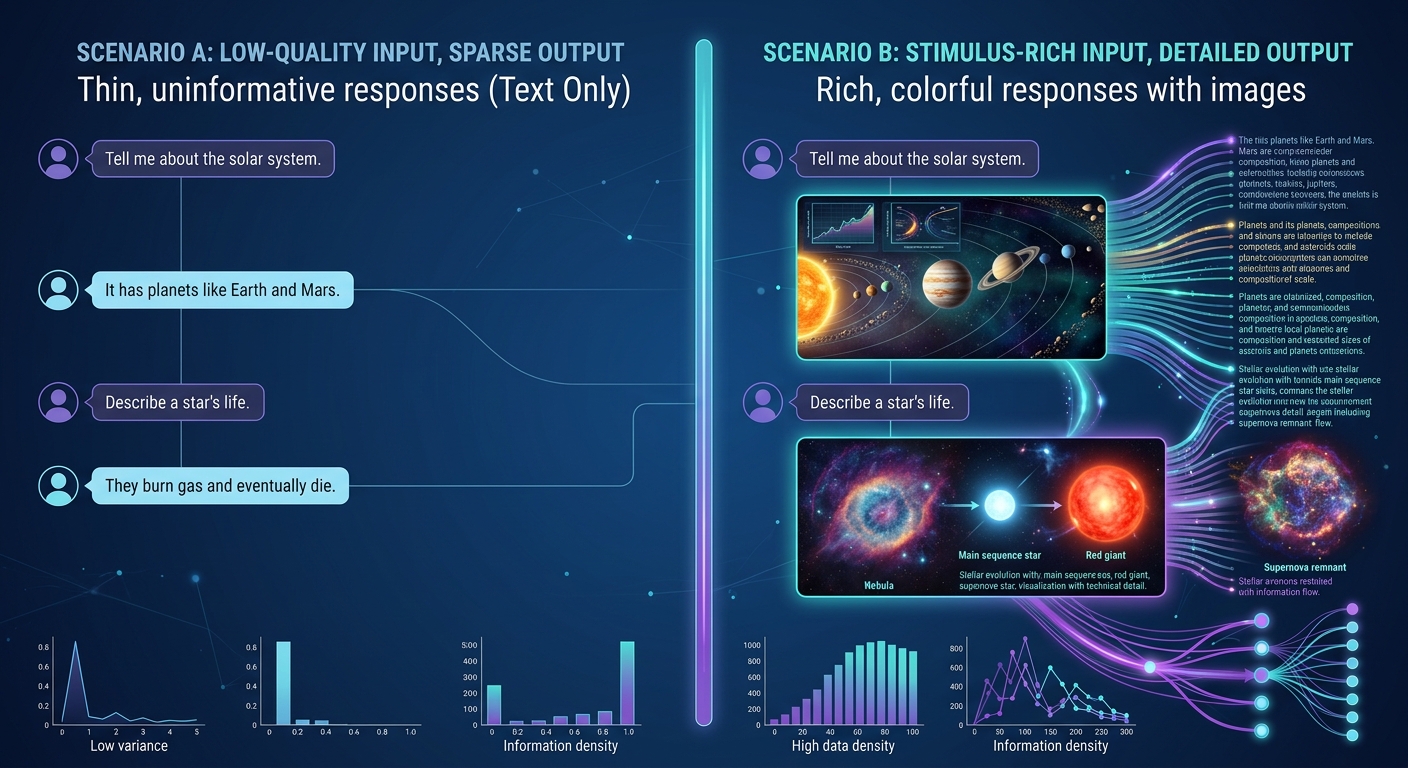

Semantic analysis that crosses language boundaries. This is where things get genuinely powerful. Traditional cross-cultural analysis requires bilingual analysts who can identify thematic parallels across languages. AI-powered theme identification can surface conceptual clusters across Spanish, English, and Spanglish transcripts simultaneously, identifying when a participant in Miami saying "mi familia decide junta" and a participant in Chicago saying "we always talk it over as a family" are expressing the same underlying value — without requiring a human analyst to manually connect those dots.

Scale without sacrificing depth. The fundamental tension in qualitative research has always been depth versus breadth. You can go deep with a few people or wide with many, but rarely both. AI changes this equation. When your platform can process and code hundreds of open-ended responses in minutes rather than weeks, you can run cross-cultural qualitative studies with sample sizes that actually support the cultural segmentation you need. The same principles we outlined for analyzing open-ended survey responses at scale apply with even more force in multilingual contexts.

Multiple analytical lenses applied simultaneously. Qualz offers 14 distinct analytical frameworks that can be applied to the same dataset. In cross-cultural research, this is transformative. You can analyze the same set of interviews through a sentiment lens, a cultural values lens, a behavioral lens, and a linguistic lens without re-coding the data each time. The cultural nuances that one framework misses, another catches.
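
As a minimal sketch of the cross-language semantic grouping described above, the Python below clusters utterances by cosine similarity. It assumes some multilingual embedding model has already turned each utterance into a vector. The three-dimensional vectors here are made-up placeholders, the 0.8 threshold is arbitrary, and the third utterance is invented for contrast. This is one simple way to group by meaning, not a description of how any particular platform does it.

```python
from math import sqrt

def cosine(a: list[float], b: list[float]) -> float:
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = sqrt(sum(x * x for x in a)) * sqrt(sum(y * y for y in b))
    return dot / norm

def group_by_similarity(items: dict[str, list[float]], threshold: float = 0.8) -> list[list[str]]:
    """Greedy grouping: an utterance joins the first group whose seed
    utterance it resembles closely enough, else it starts a new group."""
    groups: list[list[str]] = []
    for text, vec in items.items():
        for group in groups:
            if cosine(vec, items[group[0]]) >= threshold:
                group.append(text)
                break
        else:
            groups.append([text])
    return groups

# Placeholder vectors standing in for real multilingual embeddings.
utterances = {
    "mi familia decide junta": [0.9, 0.1, 0.2],
    "we always talk it over as a family": [0.85, 0.15, 0.25],
    "I just pick whatever is cheapest": [0.1, 0.9, 0.3],
}
for group in group_by_similarity(utterances):
    print(group)
```

With these placeholder vectors, the Spanish and English family-decision quotes land in one group and the price-driven quote in another. A bicultural analyst would still need to confirm that the grouping reflects a shared value rather than a surface resemblance.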

What AI Cannot Do (And Should Not Try)

AI cannot replace cultural expertise. Full stop. An AI model trained primarily on English-language data carries the cultural assumptions of that training data. It can process multilingual input, but it does not inherently understand that the concept of "convenience" means something fundamentally different in a time-scarce dual-income Anglo household versus a multigenerational Latino household where cooking is a communal activity. Human cultural consultants, community researchers, and bicultural analysts remain essential. AI amplifies their expertise — it does not substitute for it.

AI cannot validate its own cultural blind spots. Every model has them. The responsible approach is to build human review into the analytical pipeline, not to trust algorithmic output as culturally neutral. This is one reason we advocate strongly for research democratization done right — putting AI tools in the hands of diverse research teams who can catch what the algorithms miss.

A Practical Framework for AI-Powered Cross-Cultural Qualitative Research

Theory is nice. Frameworks ship. Here is a five-phase model that research teams can implement now.

Phase 1: Culturally Grounded Study Design

Before a single question is written, the research design must account for cultural context. This means:

Community-informed research questions. Do not start with what the brand wants to know. Start with what the community considers meaningful. This often requires pre-research — informal conversations, cultural advisory input, or literature review — to ensure your research questions are not imposing external frameworks on lived experience.

In-language instrument development. Discussion guides and survey instruments should be developed in the target language by native speakers, not translated from English. The difference is substantial. A question developed in Spanish will probe cultural constructs that a translated English question will miss entirely.

Methodology matched to cultural communication norms. Not every culture communicates insight the same way. Some populations are more comfortable in group settings. Others require one-on-one environments to share honestly. Some cultures privilege narrative and storytelling; others favor direct response. Your methodology should flex to the culture, not the other way around.

Phase 2: Multilingual Data Collection at Scale

This is where AI-powered platforms create the most immediate value.

Asynchronous, in-language participation. AI-moderated interviews and surveys allow participants to engage in their preferred language, on their own schedule, from their own environment. This eliminates the logistical nightmare of scheduling in-person multilingual focus groups and dramatically expands geographic reach.

AI-moderated depth interviews. AI interview capabilities allow for adaptive, conversational data collection that follows up on culturally specific responses in real time. When a participant mentions a concept that warrants deeper exploration, the AI probe follows the thread — in the participant's language. This produces richer data than a static survey while maintaining the scale advantages of digital collection.

Mixed-methods integration. The most robust cross-cultural insights come from combining qualitative depth with quantitative breadth. Platforms that support mixed methods research integration allow teams to move fluidly between structured and unstructured data collection within the same study, triangulating cultural insights across methodologies.

Phase 3: AI-Assisted Multilingual Analysis

This phase is where traditional cross-cultural research bottlenecks most severely, and where AI delivers the greatest time-to-insight improvement.

Parallel multilingual coding. Rather than translating all data into English before coding — which strips cultural context — AI can code transcripts in their original language and then map codes across languages at the conceptual level. This preserves linguistic nuance while enabling cross-cultural comparison.

Culturally calibrated sentiment analysis. Sentiment expression varies dramatically across cultures. Directness in German communication does not signal the same emotional valence as directness in Japanese communication. AI sentiment models that account for cultural communication norms produce more accurate readings than culture-blind sentiment scoring. We explored the broader implications in our earlier discussion of how sentiment analysis meets qualitative research, and cultural calibration adds another critical layer.

Theme identification across cultural contexts. AI-powered theme identification can surface both universal themes (concepts that appear across all cultural segments) and culture-specific themes (concepts that emerge only within particular cultural groups). This dual-level analysis is exactly what cross-cultural research is supposed to deliver, and it traditionally requires weeks of manual comparative coding.
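
The parallel-coding and dual-level theme ideas in this phase can be sketched in a few lines of Python. Everything named here is hypothetical: the in-language codes, the concept labels in `CONCEPT_MAP`, and the two segment names are invented for illustration. In practice the mapping would come from AI-assisted coding reviewed by bicultural analysts.

```python
# Hypothetical mapping from in-language codes to shared concept labels.
CONCEPT_MAP = {
    "decisión familiar": "family_decision_making",
    "family decides together": "family_decision_making",
    "confianza en el banco": "institutional_trust",
    "trust in banks": "institutional_trust",
    "remesas": "remittances",
}

def themes_by_segment(coded: dict[str, list[str]]) -> dict[str, set[str]]:
    """Translate each segment's in-language codes into concept-level themes."""
    return {seg: {CONCEPT_MAP[c] for c in codes} for seg, codes in coded.items()}

def split_themes(themes: dict[str, set[str]]) -> tuple[set[str], dict[str, set[str]]]:
    """Return (themes present in every segment, themes unique to each segment)."""
    universal = set.intersection(*themes.values())
    specific: dict[str, set[str]] = {}
    for seg, seg_themes in themes.items():
        others = set().union(*(t for s, t in themes.items() if s != seg))
        specific[seg] = seg_themes - others
    return universal, specific

# Codes stay in the language the participant used.
coded = {
    "spanish_dominant": ["decisión familiar", "confianza en el banco", "remesas"],
    "english_dominant": ["family decides together", "trust in banks"],
}
universal, specific = split_themes(themes_by_segment(coded))
print(sorted(universal))  # ['family_decision_making', 'institutional_trust']
print(specific)           # {'spanish_dominant': {'remittances'}, 'english_dominant': set()}
```

Keeping the original-language code as the dictionary key means the verbatim wording is never discarded. Only the comparison happens at the concept level.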

Phase 4: Cultural Synthesis and Validation

This is where human expertise becomes non-negotiable.

Bicultural analyst review. Every AI-generated cross-cultural analysis should be reviewed by analysts who are culturally fluent in the populations studied. Not just bilingual — bicultural. They understand the unstated assumptions, the social dynamics, and the contextual meaning that even sophisticated AI can miss.

Community validation. For high-stakes research, consider sharing preliminary findings with cultural advisors or community representatives before finalizing. This is not about political correctness — it is about data quality. If your findings do not resonate with people who live the experience you are studying, your analysis has a blind spot.

Triangulation across methods and sources. Cross-cultural insights are strongest when they emerge from multiple data sources. Survey responses, interview transcripts, behavioral data, and observational notes should all point in the same direction. When they diverge, that divergence itself is a finding worth investigating.

Phase 5: Culturally Intelligent Reporting

The deliverable matters as much as the methodology. Cross-cultural research reports fail when they flatten cultural differences into a single narrative.

Segment-specific insight decks. Produce separate, detailed analyses for each cultural segment alongside the cross-cultural comparison. Stakeholders working on Hispanic marketing need depth on that community, not just a summary table comparing five segments.

Language-specific evidence. Include verbatim quotes in the original language with contextual translations (not just literal translations). A quote in Spanish, accompanied by an explanation of why those specific words matter, carries more weight than an English paraphrase that strips the cultural texture.

Actionable cultural frameworks. Do not just describe differences. Provide frameworks that stakeholders can use to make decisions. What does this mean for product design? For messaging? For channel strategy? For pricing? Cultural insights that do not connect to business decisions are academic exercises.

Synthetic Participants in Cross-Cultural Research: Opportunities and Limits

One of the most interesting developments in AI-powered research is the emergence of synthetic participants — AI-generated respondents that can simulate the perspectives of specific demographic and psychographic profiles.

For cross-cultural research, synthetic participants offer several potential applications:

Hypothesis generation and study design. Before investing in recruitment and fieldwork across multiple cultural segments, synthetic participants can help researchers pressure-test their discussion guides, identify potential cultural blind spots in their research design, and generate hypotheses to explore with real participants.

Hard-to-reach population sampling. Some cultural segments are genuinely difficult to recruit — recent immigrants who distrust institutional research, ultra-high-net-worth individuals from specific cultural backgrounds, or diaspora populations spread across multiple countries. Synthetic participants can supplement (not replace) real participant data for these segments.

Rapid competitive and market scanning. When you need a quick read on how a concept might land across cultural segments — not definitive research, but directional input — synthetic participants can provide that signal in hours rather than weeks.

The critical limitation: Synthetic participants are modeled on training data that reflects published, digitized cultural expression. Voices that are scarce in that data are scarce in the simulation too, which often means the most marginalized and culturally distinct perspectives are exactly the ones synthetic participants capture least accurately. Use them for hypothesis generation. Use real people for validation.

Compliance and Ethics in Cross-Cultural AI Research

Cross-cultural research conducted through AI platforms raises specific compliance considerations that teams must address proactively.

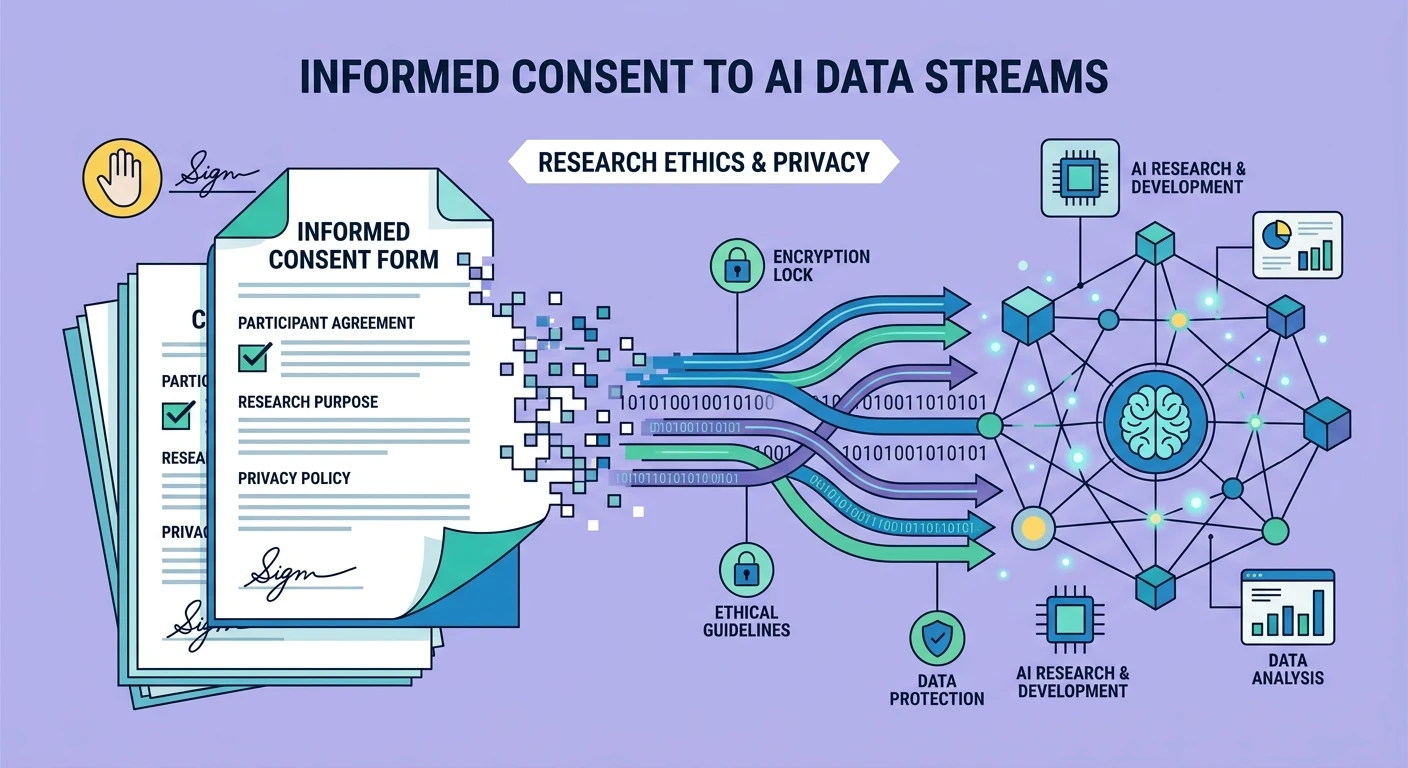

GDPR and data sovereignty. When researching European populations — including diaspora communities — GDPR applies. AI platforms must handle multilingual PII detection, consent management across languages, and data processing documentation that satisfies regulatory requirements in every relevant jurisdiction. This becomes particularly complex when a single study spans EU and non-EU participants.

Cultural data sensitivity. Some cultural communities have specific sensitivities around data collection that go beyond legal compliance. Indigenous communities may have protocols around who can access cultural knowledge. Religious communities may have restrictions on how certain topics are discussed or recorded. Ethical cross-cultural research requires understanding these norms and building them into the platform configuration, not just the research protocol.

Informed consent across literacy levels. Consent forms written in legal English and translated into other languages are not truly informed consent for participants with limited literacy or limited experience with institutional research. AI platforms can support multimedia consent processes — video explanations, audio walkthroughs, interactive Q&A — that make informed consent genuinely informed across educational and cultural contexts.

Bias transparency. Researchers have an ethical obligation to document the limitations of AI-powered analysis in cross-cultural contexts. Every report should include a methodology section that honestly addresses which cultural nuances the AI may have missed and what human review was conducted to mitigate those gaps.

The Business Case for AI-Powered Cross-Cultural Research

Let me close with the economics, because methodology improvements that do not pencil out do not get adopted.

Traditional cross-cultural qualitative research is expensive because every step multiplies. Three cultural segments means three sets of recruiters, three moderators, three translators, three rounds of analysis. A typical cross-cultural qual study with 60-90 participants across three segments runs $150,000 to $300,000 and takes 12-16 weeks.

AI-powered cross-cultural research does not eliminate every cost, but it fundamentally changes the cost structure:

Recruitment: Similar costs, though AI-moderated async participation expands the eligible pool and reduces scheduling overhead.

Moderation: AI moderation at near-zero marginal cost per interview versus $200-500 per hour for bilingual human moderators. For a 90-participant study, this alone saves $50,000-100,000.

Translation and transcription: Near-zero marginal cost versus $2-5 per minute of audio across languages.

Analysis: AI coding and theme identification in hours versus weeks of manual bilingual coding. A conservative estimate puts the time savings at 60-70%, with corresponding cost reduction.

Timeline: 3-5 weeks end-to-end versus 12-16 weeks. The time savings alone may be worth more than the direct cost savings, particularly for brands operating in fast-moving consumer markets.

The total cost reduction is typically 40-60% for equivalent scope, with the option to invest some of those savings into larger sample sizes, additional cultural segments, or more sophisticated analytical frameworks.
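
For readers who want to check the arithmetic, the sketch below works through the figures quoted in this section. It uses only numbers stated above. The one assumption, flagged in the comments, is how the ends of the moderation-savings range pair with the ends of the hourly-rate range.

```python
# Figures quoted in this section.
baseline_cost = (150_000, 300_000)      # traditional study, USD
reduction = (0.40, 0.60)                # typical total cost reduction
participants = 90
moderator_rate = (200, 500)             # USD per hour, bilingual moderator
moderation_savings = (50_000, 100_000)  # USD

# Cost after the reduction: lowest when the cheapest study gets the
# largest cut, highest when the priciest study gets the smallest cut.
ai_cost_low = baseline_cost[0] * (1 - reduction[1])
ai_cost_high = baseline_cost[1] * (1 - reduction[0])
print(f"AI-assisted study: ${ai_cost_low:,.0f} to ${ai_cost_high:,.0f}")
# AI-assisted study: $60,000 to $180,000

# Billed moderator hours per participant implied by the savings figure.
# Assumption: the low savings figure pairs with the low hourly rate and
# the high savings figure with the high hourly rate.
hours_low = moderation_savings[1] / (participants * moderator_rate[1])
hours_high = moderation_savings[0] / (participants * moderator_rate[0])
print(f"Implied moderator hours per participant: {hours_low:.1f} to {hours_high:.1f}")
# Implied moderator hours per participant: 2.2 to 2.8
```

Under that pairing, the moderation savings correspond to roughly two to three billed moderator hours per participant. That would be consistent with billing for preparation and debrief time as well as the session itself, though the figures above do not break this down.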

Moving Forward

Cross-cultural qualitative research is not a niche capability anymore. It is a baseline requirement for any organization that wants to understand the markets it serves. The question is whether your research infrastructure is built for cultural intelligence or still treating multilingual, multicultural populations as edge cases.

AI does not solve the culture problem. People solve the culture problem. AI solves the scale, speed, and consistency problems that have made cross-cultural qual research prohibitively expensive and painfully slow for most organizations.

The firms that figure this out first — the ones that combine genuine cultural expertise with AI-powered analytical infrastructure — will produce insights their competitors cannot match. Not because their researchers are smarter, but because their tools let those researchers work at the scale and speed the market demands.

The research industry is at an inflection point. Stakeholder engagement in the AI era demands tools that can handle the full complexity of human cultural expression, not just the English-speaking subset of it. The technology is finally here. The question is who will use it first.