The Methodology Gap in AI-Moderated Research

AI-moderated interviews are having a moment. The pitch is compelling: run dozens or hundreds of in-depth interviews simultaneously, get automatic transcription, and analyze at scale. No scheduling. No moderator fatigue. No geographic constraints.

But here's what most platforms get wrong: they treat every interview the same way.

You get a text field to type your questions, an AI that asks those questions in order, and a transcript at the end. Whether you're doing foundational problem discovery, collecting feedback from existing users, or testing a new value proposition, the AI behaves identically. Same tone. Same probing strategy. Same conversation arc.

That's like handing a junior researcher a list of questions and saying "go." The questions might be right, but the way they're asked — the follow-up instincts, the areas to probe deeper, the things to deliberately avoid saying — that's where methodology lives. And methodology is what separates useful interviews from transcripts full of noise.

The research methodology you choose should change how the interview is conducted, not just what questions are asked. A Problem Discovery interview has fundamentally different conversation dynamics than a Customer Feedback session or a Value Proposition Test. The moderator's role, tone, probing strategy, and even what they're explicitly told not to say — all of these should shift based on the research goal.

Three Research Goals, Three Different Conversation Architectures

Let's be precise about what makes each mode distinct — not just in terms of questions, but in terms of how the AI moderator behaves minute-by-minute during the conversation.

Problem Discovery: The Curious Detective

Research goal: Uncover problems, frustrations, and unmet needs you haven't anticipated yet.

When to use it:

- You're building a new product and need to validate that the problem exists

- You're exploring a new market and want to understand pain points before designing solutions

- You're conducting Jobs-to-Be-Done research

- You want to avoid contaminating discovery with solution bias

The critical principle: Zero solution talk.

This is the single most important — and most frequently violated — rule in problem discovery research. The moment you mention a potential solution, you've primed the participant. Their responses shift from "here's what I actually struggle with" to "here's what I think about your idea." Those are fundamentally different conversations, and conflating them is one of the most common research mistakes in product development.

In Problem Discovery mode, the AI moderator will never mention, hint at, or describe any product, feature, or solution. This isn't a suggestion — it's a hard constraint programmed into the AI's behavior. Even if the participant asks "So what are you building?", the AI redirects back to their experience.
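To make "hard constraint" concrete, here is a minimal sketch of a check that runs on every drafted moderator turn before it is spoken. Everything in it is hypothetical: `SOLUTION_TERMS`, `REDIRECT`, and `enforce_no_solution_talk` are illustrative names rather than any platform's API, and keyword matching stands in for whatever detection a real system would use.

```python
import re

# Hypothetical phrases that would count as "solution talk" for one study.
SOLUTION_TERMS = ["our product", "our tool", "new feature", "we are building", "we're building"]

# Fallback turn that steers the conversation back to the participant's experience.
REDIRECT = "I'd love to stay focused on your experience. Can you tell me more about what happened next?"


def enforce_no_solution_talk(draft: str, terms=SOLUTION_TERMS) -> str:
    """Return the draft unchanged if it is clean, otherwise the redirect."""
    lowered = draft.lower()
    for term in terms:
        # Word-boundary match so a term is not flagged inside a longer word.
        if re.search(r"\b" + re.escape(term) + r"\b", lowered):
            return REDIRECT
    return draft
```

The point of the design is that the check sits outside the conversation: no participant question or conversational context can talk the guard out of applying it.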

How the conversation unfolds:

The AI moderator acts as a curious detective — someone whose only job is to uncover stories about past experiences. The conversation architecture is built around three techniques:

1. Story-based prompts instead of direct questions. Rather than asking "What challenges do you face with project management?", the AI opens with prompts like "Tell me about the last time a project deadline felt genuinely stressful. Walk me through what happened." Stories produce richer, more specific data than opinions. People remember concrete events better than they summarize abstract frustrations.

2. Past actions only — never hypotheticals. The AI avoids questions about future behavior ("Would you use a tool that...?") because hypothetical responses are notoriously unreliable. People are terrible at predicting their own behavior. Instead, every question grounds the participant in what they've actually done, felt, and experienced.

3. Deep probing for root causes. When a pain point surfaces, the AI doesn't move on. It digs deeper with techniques categorized by intent:

- To find the pain: "What was the most frustrating part of getting everything ready?"

- To find workarounds: "How do you currently handle that? Walk me through your process."

- To understand the 'why': "Why is that step so important? What happens if it doesn't go well?"

The AI also embraces silence. After a participant finishes speaking, the AI pauses before responding — creating space for the participant to add more detail without being prompted. This is a deliberate moderator technique that most AI tools skip because it feels "awkward." But experienced qualitative researchers know: the best insights often come in those unprompted additions.

What this looks like in the generated guide:

When you click "Generate with AI" in Problem Discovery mode, you don't get a list of questions. You get an Exploration Framework — core story prompts with categorized probing techniques beneath each one. This structure gives the AI enough direction to maintain focus while preserving the conversational flexibility that makes discovery research productive.
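As a rough illustration, an Exploration Framework could be represented as story prompts that each carry probes grouped by intent. The class and field names below (`StoryPrompt`, `probes`, `probes_for`) are assumptions made for this sketch, not the platform's actual storage format. The example text reuses the prompts quoted above.

```python
from dataclasses import dataclass, field


@dataclass
class StoryPrompt:
    """One core story prompt plus follow-up probes grouped by intent."""
    text: str
    probes: dict[str, list[str]] = field(default_factory=dict)


# Example content taken from the prompts quoted in this article.
framework = [
    StoryPrompt(
        text=(
            "Tell me about the last time a project deadline felt genuinely "
            "stressful. Walk me through what happened."
        ),
        probes={
            "pain": ["What was the most frustrating part of getting everything ready?"],
            "workaround": ["How do you currently handle that? Walk me through your process."],
            "why": ["Why is that step so important? What happens if it doesn't go well?"],
        },
    )
]


def probes_for(prompt: StoryPrompt, intent: str) -> list[str]:
    """Look up the probes for one intent; unknown intents yield no probes."""
    return prompt.probes.get(intent, [])
```

Grouping probes by intent, rather than keeping one flat list, lets the moderator pick a follow-up that matches what the participant just said instead of reading questions in order.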

Customer Feedback: The Empathetic Partner

Research goal: Collect structured, actionable feedback from people who actively use your product or service.

When to use it:

- You want to understand how customers actually use your product (not how you think they use it)

- You're building a product roadmap and need grounded user input

- You're identifying the biggest friction points in the current experience

- You're preparing for a redesign and need to know what to preserve vs. change

The critical principle: Ground everything in real usage.

Customer feedback interviews fail when they become opinion surveys. "What do you think about our dashboard?" produces vague, unhelpful answers. "Walk me through the last time you opened the dashboard and what you were trying to accomplish" produces specific, actionable data.

In Customer Feedback mode, every question connects to the participant's actual, personal experience with the product. The AI asks for specific recent examples rather than general opinions. When someone says "the reporting is good," the AI follows up with "Can you think of a specific time the reporting helped you make a decision? What were you trying to figure out?"

How the conversation unfolds:

The AI moderator acts as an empathetic partner — someone who genuinely values the customer's time and perspective. The conversation follows a deliberate emotional arc:

Phase 1: Understanding context and usage. The AI starts by establishing who this person is in relation to the product. What's their role? How long have they been using it? What are they primarily trying to accomplish? This isn't small talk — it's critical context that frames everything that follows.

Phase 2: Exploring value and bright spots. Before diving into problems, the AI asks about what's working well. This isn't just politeness — it builds rapport and prevents the interview from becoming a complaint session. When participants know their positive feedback is also valued, they provide more balanced, nuanced criticism later.

Phase 3: Uncovering pain points and friction. This is the core of the conversation. The AI probes for specific moments of frustration, confusion, or inefficiency. When users describe workarounds — unusual processes they've developed to compensate for product limitations — the AI pays special attention. Workarounds are gold: they reveal unmet needs that the user has already validated by investing effort to solve them.

Phase 4: Gathering improvement ideas. The conversation closes with a "magic wand" prompt: "If you could change one thing about [product], what would it be?" But the AI doesn't stop there — it probes the underlying problem: "What would that change enable you to do that you can't do now?"

Unique behavioral traits:

- The AI validates emotions explicitly. If a user says "I was really frustrated," the AI acknowledges it: "That does sound frustrating." This isn't performative empathy — it's a technique that makes participants feel heard, which leads to more detailed and honest feedback.

- The AI thanks the customer at the opening and closing. It's a small thing, but it signals respect for their time and expertise.

- The AI listens for workarounds and flags them. When someone says "I usually export to Excel and then…" the AI recognizes this as a signal that the in-product experience is falling short and probes deeper.

Value Proposition Testing: The Impartial Facilitator

Research goal: Present a proposed solution to participants and gather their honest, unbiased reactions.

When to use it:

- You have a concept — a product idea, a feature, a positioning statement — and want to know if it resonates

- You want to test whether users understand your value proposition as you intend it

- You need to compare your concept against existing alternatives in the participant's mind

- You're deciding between multiple product directions and want real user input

The critical principle: Present neutrally — validate, don't sell.

This is the mode where most internal research goes wrong. Product teams — understandably — are excited about their ideas. That excitement leaks into how concepts are presented: "We've built this amazing new feature that solves X. What do you think?" The participant, picking up on the enthusiasm, provides politely positive feedback. The team celebrates. The feature launches. Nobody uses it.

Value Proposition Testing mode makes the AI moderator an impartial facilitator who presents concepts without persuasive or marketing language. The AI never frames questions in ways that suggest a desired answer. Instead of "Wouldn't this make your workflow easier?", it asks "How does this compare to how you currently handle this?"

How the conversation unfolds:

Phase 1: Setting the stage by recapping the problem. Before presenting any concept, the AI asks the participant to describe their current challenges in the relevant area. This accomplishes two things: it primes the participant to evaluate the concept against their real needs (not abstract impressions), and it gives you baseline data about the problem space.

Phase 2: Introducing the concept neutrally. The AI presents your solution concept — pulled from your study description — using factual, non-persuasive language. No superlatives. No marketing copy. Just a clear description of what it does.

Phase 3: Capturing the gut reaction. Immediately after the concept is presented, the AI asks for the participant's first, unfiltered reaction. This is the most valuable moment in the interview. Before the participant has time to rationalize or be polite, what did they actually feel? Excitement? Confusion? Skepticism? Indifference?

The AI then performs a comprehension check: "In your own words, how would you describe what this does?" If the participant can't explain the concept back clearly, you have a messaging problem — and you've caught it before launch.

Phase 4: Probing for value, fit, and differentiation. The AI explores whether the concept fits into the participant's existing workflow, how it compares to their current alternatives, what would make them switch (or not), and what's missing.

Unique behavioral traits:

- The AI listens for hesitation and probes it. "You sounded a bit unsure there — can you tell me more about what's giving you pause?" Hesitation is signal. In most interviews, it gets glossed over.

- The AI never defends the concept. If a participant says "I don't see why I'd need this," the AI doesn't counter with benefits. It asks "Tell me more about why" and documents the objection.

- The AI pays attention to comparison language. When participants spontaneously compare the concept to something they already use, that's competitive intelligence. The AI follows that thread.

Why Methodology-Aware AI Matters More Than Better Prompts

You might be thinking: "Can't I just write better prompts for a general-purpose AI interviewer?"

In theory, yes. In practice, no — for three reasons.

1. Methodology constraints need to be enforced, not suggested. You can write in your prompt "Don't mention any solutions." But a general-purpose AI, optimizing for conversational naturalness, will occasionally violate that constraint — especially if the participant brings it up. A specialized mode treats it as a hard rule that cannot be overridden by conversational context.

2. Conversation dynamics are complex. The difference between "Ask all probes" and "Ask probes only if the response is surface-level" isn't something you can reliably encode in a text prompt. Specialized modes handle this through configured probe policies with explicit max probe counts per question: parameters that the AI evaluates at runtime against the participant's actual response.
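A probe policy of this kind might look something like the sketch below. The `ProbePolicy` fields, the two mode names, and especially the word-count test for "surface-level" are assumptions chosen for illustration. A real moderator would presumably judge depth with something richer than length.

```python
from dataclasses import dataclass


@dataclass
class ProbePolicy:
    """Hypothetical per-question probing rules."""
    mode: str            # "always" or "if_surface_level"
    max_probes: int      # hard cap on follow-ups for one question
    min_words: int = 25  # assumed threshold: shorter answers count as surface-level


def should_probe(policy: ProbePolicy, response: str, probes_asked: int) -> bool:
    """Decide at runtime whether the moderator may ask another probe."""
    if probes_asked >= policy.max_probes:
        return False
    if policy.mode == "always":
        return True
    # "if_surface_level": probe only when the answer looks thin.
    return len(response.split()) < policy.min_words
```

Because the cap and the condition are explicit parameters rather than prose instructions, they are evaluated the same way on every turn.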

3. The generated interview guide should match the methodology. When you click "Generate with AI" in Problem Discovery mode, you get an Exploration Framework with core story prompts and categorized probing techniques. In Customer Feedback mode, you get a four-phase guide structure. In Value Proposition Testing mode, you get a validation guide with a comprehension check step. A general-purpose tool generates the same format regardless of your research goal.

Choosing the Right Mode: A Decision Framework

| Signal | Use Problem Discovery | Use Customer Feedback | Use Value Proposition Testing |
|---|---|---|---|
| You don't have a solution yet | ✓ | | |
| You have users actively using a product | | ✓ | |
| You have a concept to validate | | | ✓ |
| You want to avoid solution bias | ✓ | | |
| You need roadmap input from users | | ✓ | |
| You're choosing between product directions | | | ✓ |
| You're conducting JTBD research | ✓ | | |
| You're preparing for a redesign | | ✓ | |
| You need to test messaging clarity | | | ✓ |
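The same decision framework can be written as a simple lookup. This is only a sketch: the signal keys and the `recommend_mode` function are hypothetical names, and the mapping follows the "When to use it" lists for each mode earlier in this article.

```python
from collections import Counter
from typing import Optional

# Signal -> mode, following the "When to use it" lists in this article.
SIGNAL_TO_MODE = {
    "no_solution_yet": "Problem Discovery",
    "avoid_solution_bias": "Problem Discovery",
    "jtbd_research": "Problem Discovery",
    "active_users": "Customer Feedback",
    "roadmap_input": "Customer Feedback",
    "preparing_redesign": "Customer Feedback",
    "concept_to_validate": "Value Proposition Testing",
    "choosing_directions": "Value Proposition Testing",
    "test_messaging_clarity": "Value Proposition Testing",
}


def recommend_mode(signals: list[str]) -> Optional[str]:
    """Return the mode matched by the most signals, or None if nothing matches."""
    votes = Counter(SIGNAL_TO_MODE[s] for s in signals if s in SIGNAL_TO_MODE)
    if not votes:
        return None
    # On a tie, the mode whose signal appeared first in the input wins.
    return votes.most_common(1)[0][0]
```

If your signals split evenly across modes, that is usually a hint that the study is really two studies, which is where running the modes in sequence comes in.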

The order matters, too. Many product teams run all three modes in sequence across a research program:

- Problem Discovery first — validate that the problem exists and is worth solving

- Value Proposition Testing second — test whether your proposed solution resonates

- Customer Feedback third (post-launch) — understand how the real product is actually being used

This creates a research lifecycle that mirrors the product development lifecycle. Each mode feeds into the next.

Practical Setup: From Goal to Live Interview in 15 Minutes

Here's the actual workflow for setting up a specialized interview:

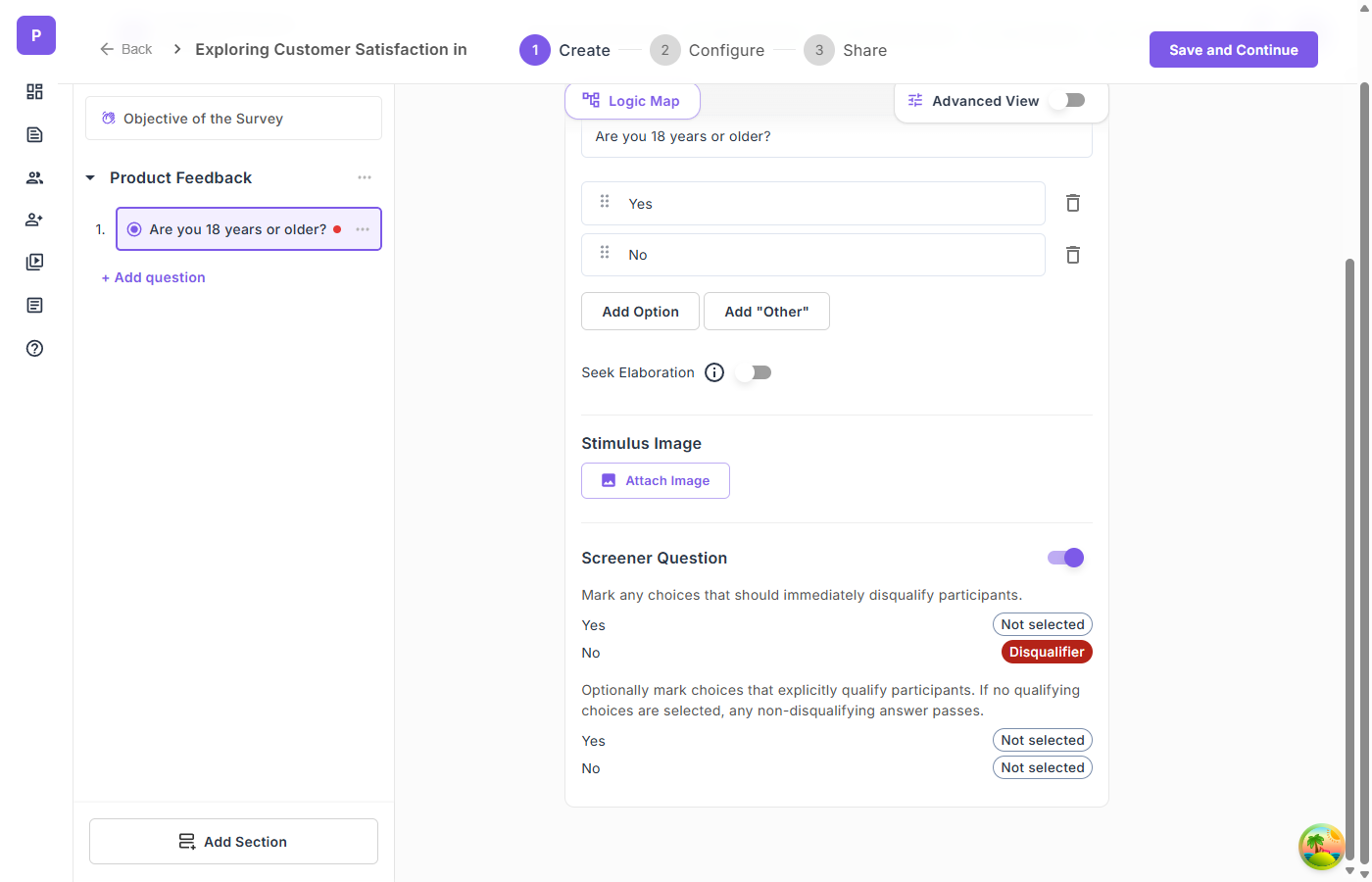

Step 1: Define your study. Give it a title and a specific objective. The more specific your objective, the better the AI-generated guide will be. "Understand customer needs" is vague. "Discover the top 3 frustrations enterprise IT managers face when onboarding new SaaS tools" is actionable.

Step 2: Select your specialized mode. This single selection changes everything downstream — the guide format, the AI moderator's personality, and the conversation rules.

Step 3: Set your duration. The platform calibrates the number of topics or questions to fit your time limit. A 10-minute Problem Discovery interview gets 2-3 core story prompts. A 30-minute Customer Feedback session gets a full four-phase guide with probes.
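The platform's actual calibration logic isn't described here, so the following is only a sketch of the idea, assuming a fixed time budget per story prompt. The function name and the 4-minute default are illustrative assumptions, picked so that a 10-minute interview lands within the 2-3 prompts mentioned above.

```python
def prompts_for_duration(duration_min: int, minutes_per_prompt: int = 4) -> int:
    """Estimate how many core story prompts fit in an interview."""
    if duration_min <= 0 or minutes_per_prompt <= 0:
        raise ValueError("duration_min and minutes_per_prompt must be positive")
    # Always keep at least one prompt, even for very short interviews.
    return max(1, duration_min // minutes_per_prompt)
```

With these assumed defaults, `prompts_for_duration(10)` returns 2.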

Step 4: Generate your guide with AI. One click produces a complete, mode-appropriate interview guide. Review it, edit inline, reorder, add or remove items. The guide is structured — stored in a format the AI moderator follows precisely — but fully editable.

Step 5: Add stimulus if needed. For Value Proposition Testing, this is critical. Upload your concept mockups, landing page screenshots, or positioning statements. Assign them to specific questions or topics so the AI presents them at exactly the right moment.

Step 6: Configure and share. Set your language (20+ supported), choose a voice for the AI moderator, add access controls, and generate your share link.

What Changes When the AI Knows Why It's Asking

The real shift here isn't about features. It's about embedding research intent into the instrument itself.

When an AI moderator knows that the goal is problem discovery, it doesn't just ask different questions — it listens differently. It knows that workarounds are signals. It knows that silence can produce better data than another probe. It knows that mentioning a solution, even accidentally, compromises the entire conversation.

When it knows the goal is customer feedback, it starts with value before moving to friction. It validates emotions. It chases workarounds. It asks for specific examples when it gets vague praise or vague criticism.

When it knows the goal is value proposition testing, it presents neutrally. It checks comprehension. It doesn't defend the concept. It follows comparison language to understand the competitive landscape.

This is what experienced qualitative researchers do instinctively. They adjust their moderating style to match the research methodology. The specialized mode doesn't replace that skill — it democratizes it. It allows product managers, startup founders, CX teams, and junior researchers to run methodologically sound interviews without years of qualitative training.

The Compounding Effect on Analysis

Better-structured interviews don't just produce better transcripts. They produce easier-to-analyze transcripts.

When every Problem Discovery interview follows the same exploration framework, your analysis can systematically compare pain points across participants. When every Customer Feedback session follows the same four-phase structure, you can isolate bright spots from friction points without manual sorting.

And when you layer multi-lens analysis on top — running the same transcripts through Jobs-to-Be-Done, Sentiment & Emotion Spectrum, and Customer Journey Pain Points lenses — the structured conversation architecture means each lens has clean, methodologically consistent data to work with.
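To see why consistent structure helps, consider a minimal sketch of cross-participant comparison. The `segments` data is invented sample data, and the phase labels and `participants_by_tag` helper are hypothetical. It assumes each transcript segment has already been coded with the phase it came from and a short tag.

```python
from collections import defaultdict

# Hypothetical coded segments: (participant_id, phase, tag)
segments = [
    ("p1", "friction", "slow export"),
    ("p2", "friction", "slow export"),
    ("p2", "bright_spot", "clear dashboard"),
    ("p3", "friction", "confusing permissions"),
]


def participants_by_tag(rows, phase):
    """Map each tag in one phase to the set of participants who raised it."""
    result = defaultdict(set)
    for participant, row_phase, tag in rows:
        if row_phase == phase:
            result[tag].add(participant)
    return dict(result)
```

Because every interview follows the same phases, filtering on `phase` is enough to separate friction from bright spots without manual sorting.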

The quality of your insights is a function of the quality of your data collection. Specialized modes ensure that quality is built in from the first question.

Qualz.ai supports six interview modes: Structured, Semi-Structured, Unstructured, Problem Discovery, Customer Feedback, and Value Proposition Testing. Each mode configures the AI moderator's behavior, probe strategy, and generated interview guide to match your research methodology.