Most qualitative research captures a single moment. You interview a user today, code the transcript, extract themes, and deliver findings. The insights are valuable, but they are frozen in time. You know what the user thinks right now. You have no idea how they got here, and you cannot predict where they are going.

This is the fundamental limitation of cross-sectional qualitative research, and every experienced UX researcher knows it. The user who raves about your onboarding flow in a week 1 interview might be quietly churning by week 8. The product manager who seems indifferent during initial research might become your strongest internal champion once they encounter the right trigger event. Single-point studies miss these trajectories entirely.

Longitudinal qualitative research — diary studies, repeated interviews, experience sampling, ethnographic tracking over weeks or months — captures what cross-sectional research cannot: how experience evolves, how mental models shift, how behavior changes in response to real-world context over time.

The problem has never been whether longitudinal qual is valuable. Most researchers agree it is. The problem is that analyzing it has been so expensive and complex that most teams never attempt it.

AI-assisted analysis changes that cost calculation.

Why Longitudinal Qualitative Research Matters More Than Ever

SaaS products are not static objects that users evaluate once and adopt forever. They are living systems that users develop relationships with over time. The user's first week bears almost no resemblance to their experience at month six. Their needs evolve, their workflows adapt, their expectations shift based on accumulated experience.

Yet most UX research programs treat users as if they exist in a perpetual present tense. We run usability tests on new features. We conduct satisfaction interviews at arbitrary intervals. We measure NPS quarterly and treat each score as if it appeared from nowhere.

This approach misses three critical dimensions that only longitudinal research can capture.

Trajectory Over Snapshot

A user who rates their experience 7 out of 10 today could be on an upward trajectory from 4 (good news) or a downward trajectory from 9 (a churn signal). The number is identical. The story is completely different. Longitudinal research captures the direction and velocity of experience change, not just the current state.
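To make the distinction concrete, here is a minimal Python sketch. It assumes each participant gives a weekly rating on a 1 to 10 scale; the sample ratings and the function name `trend_per_week` are invented for illustration. It fits a least-squares line to each participant's ratings and reports the slope, so two users who both end at 7 are separated by the direction and speed of their change.

```python
from statistics import mean

def trend_per_week(ratings):
    """Least-squares slope of ratings against week index, in points per week.

    Needs at least two ratings; a single rating has no direction.
    """
    weeks = range(len(ratings))
    w_bar, r_bar = mean(weeks), mean(ratings)
    num = sum((w - w_bar) * (r - r_bar) for w, r in zip(weeks, ratings))
    den = sum((w - w_bar) ** 2 for w in weeks)
    return num / den

# Hypothetical weekly ratings; both participants end the study at 7.
recovering = [4, 5, 6, 7]
declining = [9, 8, 8, 7]

print(trend_per_week(recovering))  # positive: experience is improving
print(trend_per_week(declining))   # negative: experience is eroding
```

A slope is only one way to summarize a trajectory. With qualitative data you would normally read it alongside the entries that explain the change.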

Trigger Events and Turning Points

Every product relationship has inflection moments — the day the user discovers a feature that changes their workflow, the frustration that makes them start evaluating competitors, the conversation with a colleague that reframes how they think about the tool. These turning points are invisible in cross-sectional research because participants cannot reliably recall or articulate them retrospectively. Diary studies and repeated interviews catch them as they happen.

Adaptation and Workaround Evolution

Users do not passively accept your product as designed. They build workarounds, develop habits, create processes that accommodate your product's limitations. These adaptations evolve over time and represent some of the most valuable product intelligence available. A workaround that persists for three months is a feature request with proven demand. A workaround that disappears after a product update tells you your improvement actually landed.

The Traditional Analysis Problem

If the case for longitudinal qualitative research is so compelling, why do so few teams do it?

The answer is analytical complexity. Consider the math of a modest longitudinal study.

You recruit 15 participants for an 8-week diary study. Each participant makes 3 entries per week. That is 360 diary entries. You also conduct brief interviews at weeks 1, 4, and 8, which adds another 45 interview transcripts. Total: 405 discrete pieces of qualitative data from 15 people over 8 weeks.

Now try to analyze that manually.

Traditional qualitative coding requires reading each entry, assigning thematic codes, and then — here is the hard part — tracking how those codes evolve within each participant over time AND identifying patterns across participants. You are not just coding themes. You are coding trajectories.

A single participant might generate 24 diary entries plus 3 interviews. To understand their experience trajectory, an analyst needs to read all 27 pieces of data in chronological order, track which themes appear and disappear, note when sentiment shifts, and identify trigger events that explain those shifts. Then they need to do that 14 more times and synthesize across all participants.

At typical manual coding rates, a study like this requires 300 to 500 analyst hours. At $150 per hour for a qualified qualitative researcher, that is $45,000 to $75,000 in analysis costs alone — for a study with just 15 participants. Most product teams have neither the budget nor the timeline to support this.
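The arithmetic behind these figures is easy to rerun for your own study. The sketch below uses only the numbers quoted above; the variable names are ours, and the hours range is the estimate from this article, not a measured benchmark.

```python
participants = 15
weeks = 8
entries_per_week = 3
interviews_per_participant = 3  # weeks 1, 4, and 8

diary_entries = participants * weeks * entries_per_week    # 360
interviews = participants * interviews_per_participant     # 45
total_items = diary_entries + interviews                   # 405

hourly_rate = 150                 # USD per analyst hour (figure from the text)
hours_low, hours_high = 300, 500  # analyst hours (estimate from the text)

cost_low = hours_low * hourly_rate    # 45,000
cost_high = hours_high * hourly_rate  # 75,000

# Analyst minutes implied per diary entry or transcript
minutes_low = hours_low * 60 / total_items
minutes_high = hours_high * 60 / total_items

print(total_items, cost_low, cost_high)
print(round(minutes_low), round(minutes_high))
```

Under those assumptions the estimate works out to roughly 44 to 74 analyst minutes per item. That range covers reading, coding, and the cross-time comparison, not reading alone.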

The result is a painful gap: everyone knows longitudinal qual is more valuable, but almost nobody can afford to do it properly. Teams compromise by running shorter studies, recruiting fewer participants, or — most commonly — simply sticking with cross-sectional research and accepting the blind spots.

How AI Transforms Longitudinal Analysis

AI does not just make longitudinal qualitative analysis faster. It makes practical a kind of analysis that is rarely feasible by hand within a normal project budget and timeline.

Consistent Coding Across Time

The most basic advantage is consistency. When a human analyst codes diary entry number 1 and diary entry number 360, they are a different analyst. They have been influenced by every entry in between. Their understanding of the codebook has drifted. Their attention has flagged. Codes that seemed important in week 1 feel routine by week 6.

AI can apply the same codebook and instructions to entry 1 and entry 360 without fatigue or wandering attention. This consistency is more than a time saver. It is methodologically critical for longitudinal research, where the whole point is to detect genuine change over time rather than artifacts of analyst drift.

Within-Participant Trajectory Mapping

This is where AI unlocks something genuinely new. For each participant, AI can construct a coded timeline that maps theme emergence, sentiment shifts, behavioral changes, and trigger events across every data point. It can identify the exact entry where a participant's language about your product shifts from exploratory to committed, or from satisfied to frustrated.

These individual trajectories become the building blocks of longitudinal insight. In traditional analysis, constructing even five detailed participant trajectories is a significant research effort. AI can construct all fifteen simultaneously.
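As a rough illustration of how such a shift can be located, the sketch below assumes an upstream coding step has already given every entry a sentiment score between -1 and 1. That scoring step, the sample scores, and the function name `find_inflection` are all assumptions for this example, not a description of any particular tool. It compares the average of the entries just before each point with the average of the entries from that point on, and returns the point where the gap is largest.

```python
from statistics import mean

def find_inflection(scores, window=3):
    """Return (index, shift) for the entry where the mean of the `window`
    entries starting at that index differs most from the mean of the
    `window` entries before it. Returns None if the timeline is too short."""
    best = None
    for i in range(window, len(scores) - window + 1):
        shift = mean(scores[i:i + window]) - mean(scores[i - window:i])
        if best is None or abs(shift) > abs(best[1]):
            best = (i, shift)
    return best

# Hypothetical per-entry sentiment for one participant, in chronological order
timeline = [0.6, 0.5, 0.7, 0.6, 0.5, -0.2, -0.4, -0.3, -0.1, 0.0]

index, shift = find_inflection(timeline)
print(index, round(shift, 2))  # entry position (0-based) and size of the shift
```

The windowed comparison is deliberately simple. It points a researcher at the entry worth rereading; it does not explain the shift.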

Cross-Participant Pattern Detection

With individual trajectories mapped, AI excels at detecting patterns across participants. Do most users experience a "valley of disillusionment" around week 3? Is there a common trigger event that precedes adoption deepening? Do different user segments follow different experience trajectories?

These cross-participant temporal patterns are hard to see in manual analysis because they require holding many timelines in working memory at once. They are precisely the kind of structured pattern recognition where AI delivers the most value.
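A small sketch shows the shape of this comparison. It assumes each participant's entries have already been reduced to one average sentiment value per week; the participant IDs, the scores, and the helper `lowest_week` are hypothetical. It finds each participant's lowest week and counts how many participants share it.

```python
from collections import Counter

def lowest_week(weekly_scores):
    """Week number (1-based) with the lowest score; the earliest week wins ties."""
    return min(range(len(weekly_scores)), key=weekly_scores.__getitem__) + 1

# Hypothetical weekly mean sentiment per participant, weeks 1 to 6
trajectories = {
    "P01": [0.7, 0.4, -0.2, 0.1, 0.3, 0.5],
    "P02": [0.6, 0.3, -0.1, 0.2, 0.4, 0.4],
    "P03": [0.5, 0.5, 0.4, 0.3, 0.1, -0.3],
    "P04": [0.8, 0.2, -0.4, 0.0, 0.2, 0.3],
}

dips = Counter(lowest_week(scores) for scores in trajectories.values())
week, count = dips.most_common(1)[0]
print(f"{count} of {len(trajectories)} participants bottom out in week {week}")
```

In this invented data three of the four participants hit their low point in week 3, while P03 follows a different path and would deserve a separate look.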

The underlying capability that makes this possible is what AI researchers call memory architecture — the ability to maintain structured representations of context across extended interactions. The same AI memory architecture patterns that power enterprise agent systems enable research tools to track participant experience across weeks and months of longitudinal data, maintaining coherence that would overwhelm human working memory.

Temporal Code Evolution

Traditional qualitative coding produces a flat output: here are the themes, here is how frequently they appear. Longitudinal coding with AI produces a dynamic output: here is how themes emerge, peak, fade, and interact over time.

For example, the code "onboarding confusion" might appear in 12 of 15 participants during week 1, decline to 3 by week 3, and spike back to 5 in week 6 when a new feature launches. This temporal code evolution tells a story that frequency counts alone cannot: onboarding is working, but feature releases are reintroducing confusion for users who thought they had things figured out.
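The counting behind a view like this is simple once entries are coded. The sketch below assumes coded entries arrive as (participant, study week, set of codes) tuples; that format, the sample data, and the function name `prevalence_by_week` are assumptions for illustration. It counts distinct participants per week, so a participant who mentions the same issue three times in one week counts once.

```python
from collections import defaultdict

def prevalence_by_week(coded_entries, code):
    """Map week -> number of distinct participants whose entries carry `code`."""
    seen = defaultdict(set)
    for participant, week, codes in coded_entries:
        if code in codes:
            seen[week].add(participant)
    return {week: len(people) for week, people in sorted(seen.items())}

# Hypothetical coded entries: (participant, study week, codes assigned)
coded_entries = [
    ("P01", 1, {"onboarding confusion"}),
    ("P01", 1, {"onboarding confusion", "pricing"}),
    ("P02", 1, {"onboarding confusion"}),
    ("P03", 1, {"pricing"}),
    ("P02", 3, {"onboarding confusion"}),
    ("P01", 6, {"onboarding confusion"}),
    ("P03", 6, {"onboarding confusion"}),
]

print(prevalence_by_week(coded_entries, "onboarding confusion"))
```

Weeks in which nobody mentions the code are simply absent from the result, which is worth remembering when you plot it.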

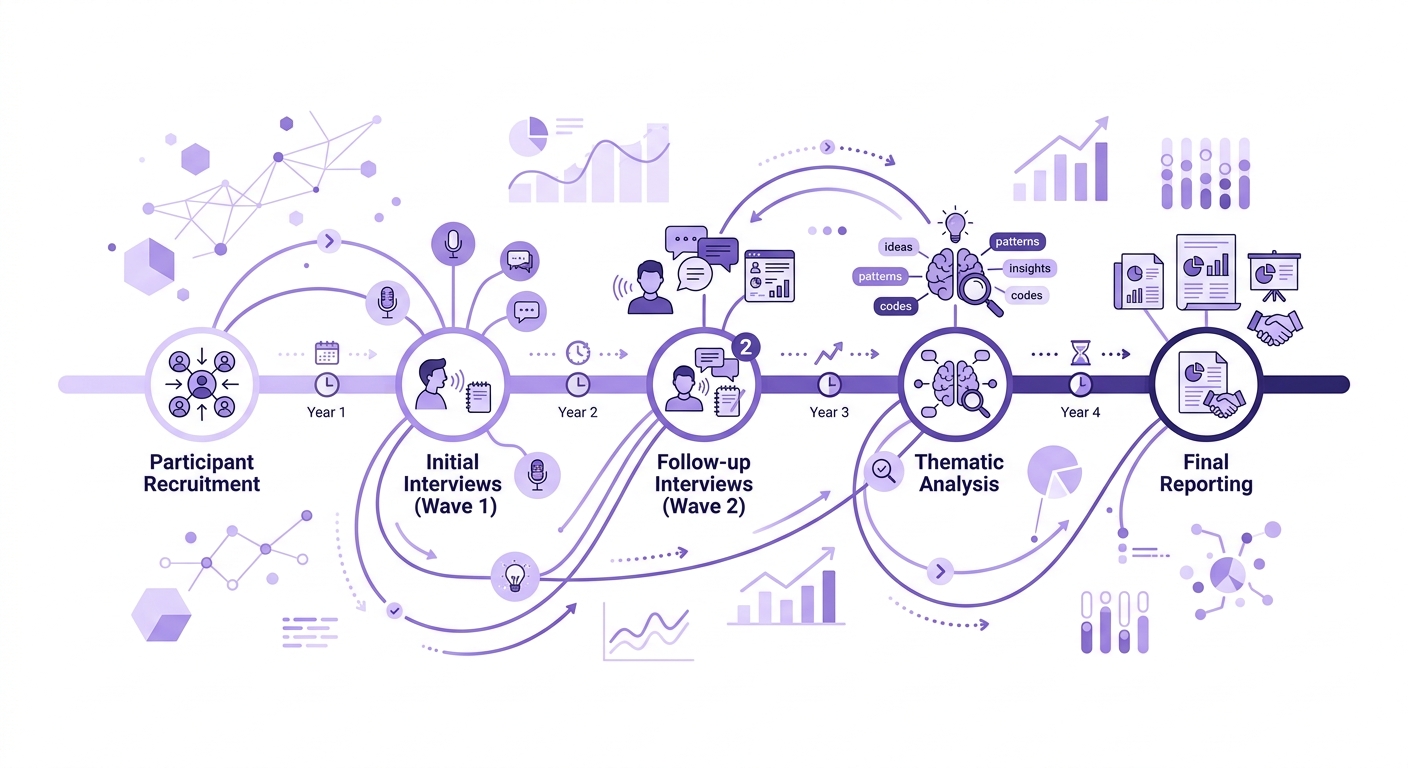

Setting Up a Longitudinal Qualitative Study With AI-Assisted Analysis

Here is a practical framework for teams ready to run their first AI-assisted longitudinal study.

Phase 1: Design for Temporal Analysis

Your study design must embed time as an analytical dimension from the start.

Choose your longitudinal method. Diary studies work well for capturing ongoing experience in context. Repeated interviews work better for exploring evolving mental models in depth. Experience sampling (brief prompted check-ins at random intervals) captures natural variation. Many studies combine methods — for example, daily diary entries supplemented by weekly interviews.

For teams new to diary studies, the operational details matter significantly. We have written extensively about running diary studies with AI-assisted analysis, covering participant recruitment, prompt design, and compliance strategies that keep response quality high over multi-week studies.

Define your temporal structure. Decide the total duration, the data collection frequency, and any milestone events you want to capture (onboarding completion, first month, feature release). These temporal markers become coding anchors during analysis.

Design prompts that surface change. The best longitudinal diary prompts explicitly ask about change: "What has shifted in how you use [product] since last week?" "Describe a moment this week when your expectations were either confirmed or contradicted." Change-oriented prompts produce data that is naturally suited to trajectory analysis.
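One way to pin down the temporal structure before fieldwork starts is to write it as data. The sketch below is a hypothetical `StudyPlan` class, not part of any specific platform; the dates and milestone weeks are invented. It records duration, entry frequency, and milestones, and converts a calendar date into a study week so that every entry can later be placed on the timeline.

```python
from dataclasses import dataclass, field
from datetime import date

@dataclass
class StudyPlan:
    start: date
    weeks: int
    entries_per_week: int
    # Milestone name -> study week in which it is expected (hypothetical values)
    milestones: dict = field(default_factory=dict)

    def study_week(self, day):
        """1-based study week for a calendar date, or None if outside the study."""
        offset = (day - self.start).days
        if offset < 0 or offset >= self.weeks * 7:
            return None
        return offset // 7 + 1

    def milestones_reached(self, day):
        """Names of milestones scheduled at or before the week containing `day`."""
        week = self.study_week(day)
        if week is None:
            return []
        return [name for name, w in self.milestones.items() if w <= week]

plan = StudyPlan(
    start=date(2025, 1, 6),
    weeks=8,
    entries_per_week=3,
    milestones={"onboarding completion": 1, "first month": 4, "feature release": 6},
)

entry_day = date(2025, 2, 4)
print(plan.study_week(entry_day))          # study week of this entry
print(plan.milestones_reached(entry_day))  # milestones already behind the participant
```

Keeping milestones in the plan means the same labels can be reused as coding anchors during analysis.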

Phase 2: Collect With Consistency

Longitudinal data quality depends on consistent collection over time. This is where many studies fail — participant fatigue, inconsistent prompting, and dropout erode the dataset.

Use structured but flexible prompts. Each diary entry should address the same core dimensions (current experience, notable events, emotional state, behavioral changes) while leaving room for emergent topics. This consistency creates comparable data points across time without constraining what participants can report.

Capture context alongside content. Prompt participants to note what was happening around their product use — workload, team changes, competing priorities. This contextual data becomes essential for interpreting experience shifts. A satisfaction decline that coincides with a product bug means something different from one that coincides with a reorganization at the participant's company.

Monitor compliance and quality in real time. Do not wait until the study ends to discover that half your participants stopped responding in week 3. Use your research platform to track entry rates and quality throughout the study. For distributed teams, remote ethnographic approaches can supplement diary data with observational context that enriches the longitudinal picture.
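A compliance check can be as small as the sketch below. It assumes the research platform can export a log of (participant, study week) pairs, one per submitted entry; the log format, the threshold of two entries, and the function name `lagging_participants` are assumptions. It lists everyone who fell below the minimum for a given week, including participants who submitted nothing.

```python
from collections import Counter

def lagging_participants(entries, participants, week, min_entries=2):
    """Participants with fewer than `min_entries` submissions in `week`."""
    counts = Counter(p for p, w in entries if w == week)
    # Counter returns 0 for participants with no entries that week.
    return sorted(p for p in participants if counts[p] < min_entries)

participants = ["P01", "P02", "P03"]

# Hypothetical submission log: one (participant, study week) pair per entry
entries = [
    ("P01", 3), ("P01", 3), ("P01", 3),
    ("P02", 3),
    ("P01", 2), ("P02", 2), ("P03", 2),
]

print(lagging_participants(entries, participants, week=3))
```

Running this at the end of each week gives you time to send a reminder before a gap becomes a dropout.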

Phase 3: AI-Powered Longitudinal Analysis

This is where the analytical approach diverges from traditional qualitative coding.

Step 1: Initial coding pass. Upload all diary entries and interview transcripts and run a standard thematic coding pass. This produces your baseline codebook — the themes present across the entire dataset regardless of time.

Step 2: Temporal coding overlay. Apply time-aware coding that tags each data point with its position in the study timeline and the participant's tenure at that point. This is the coding layer that enables trajectory analysis.

Step 3: Within-participant trajectory construction. For each participant, generate a coded timeline that shows how their themes, sentiment, and behavioral patterns evolve across all their data points. Identify inflection points where significant shifts occur.

Step 4: Cross-participant pattern analysis. Compare trajectories across participants to identify common temporal patterns. Look for shared inflection points, divergent trajectories between segments, and universal versus idiosyncratic patterns.

Step 5: Trigger event mapping. Map the contextual events (product changes, personal circumstances, team dynamics) that coincide with experience shifts. These trigger-shift pairs are your highest-value findings. They show not just that experience changes, but which events precede the change, which gives you concrete hypotheses about why it changes.
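The pairing logic in Step 5 can be sketched in a few lines. The example assumes shifts have already been identified as (participant, week, sentiment change) and context events logged as (participant or None for study-wide events, week, label); both formats, the sample data, and the function name `pair_triggers` are hypothetical. It pairs each shift with events from the same week or the week before.

```python
def pair_triggers(shifts, events, max_lag_weeks=1):
    """Pair each shift with context events for the same participant (or
    study-wide events, participant=None) that occurred in the shift week or
    up to `max_lag_weeks` earlier. This finds co-occurrence, not proof of cause."""
    pairs = []
    for participant, shift_week, delta in shifts:
        for who, event_week, label in events:
            same_person = who is None or who == participant
            in_window = 0 <= shift_week - event_week <= max_lag_weeks
            if same_person and in_window:
                pairs.append((participant, shift_week, delta, label))
    return pairs

# Hypothetical inputs
shifts = [("P01", 6, -0.9), ("P02", 3, -0.5)]
events = [
    (None, 6, "feature release"),       # affects every participant
    ("P02", 2, "team reorganization"),
    ("P01", 2, "new manager"),          # too early to pair with the week 6 shift
]

pairs = pair_triggers(shifts, events)
for pair in pairs:
    print(pair)
```

Each pair is a candidate explanation. The participant's own words in the surrounding entries are what confirm or rule it out.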

In complex longitudinal studies with multiple data streams — diary entries, interviews, behavioral logs, survey responses — the analytical challenge resembles orchestration more than simple coding. The same principles that govern multi-agent orchestration in production systems apply to coordinating multiple analytical passes across temporal qualitative data: each pass has a specific role, and their outputs must be synthesized coherently.

Phase 4: Synthesis and Reporting

Longitudinal findings demand different reporting formats than cross-sectional research.

Individual journey maps. For key participants or archetypes, produce visual timelines showing their experience trajectory with annotated inflection points. These are powerful artifacts for building stakeholder empathy.

Temporal theme maps. Show how themes rise and fall over the study period, annotated with events that explain the changes. This is your "what happened and why" view.

Segment trajectory comparisons. If different user segments follow different experience trajectories, visualize these side by side. This directly informs personalization and segmentation strategy.

Trigger-response catalogs. Document the trigger events you identified and their typical impact on experience. This becomes a predictive tool — when you see these triggers in the future, you can anticipate the experience impact.
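A trigger-response catalog is, at its simplest, a grouped summary. The sketch below assumes you have a list of (trigger label, sentiment change) observations from the analysis; the labels, the values, and the function name `build_catalog` are invented for illustration. It reports how often each trigger was observed and the average change that accompanied it.

```python
from collections import defaultdict
from statistics import mean

def build_catalog(observations):
    """Group (trigger, sentiment_delta) observations by trigger and summarize."""
    grouped = defaultdict(list)
    for trigger, delta in observations:
        grouped[trigger].append(delta)
    return {
        trigger: {"observations": len(deltas), "mean_delta": round(mean(deltas), 2)}
        for trigger, deltas in grouped.items()
    }

# Hypothetical observations from one study
observations = [
    ("feature release", -0.9),
    ("feature release", -0.5),
    ("colleague recommendation", 0.4),
]

catalog = build_catalog(observations)
for trigger, summary in catalog.items():
    print(trigger, summary)
```

With only a handful of observations per trigger, treat the averages as prompts for discussion and keep the supporting quotes next to each row.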

What Qualz.ai Brings to Longitudinal Research

We built Qualz with temporal analysis as a first-class capability, not an afterthought. The platform supports diary study management with automated participant prompting, real-time compliance monitoring, and longitudinal coding that tracks theme evolution across time.

Where Qualz particularly excels is in the synthesis phase. The platform constructs participant trajectories automatically, identifies cross-participant temporal patterns, and generates trigger-event maps that connect contextual changes to experience shifts. Researchers spend their time interpreting patterns and making strategic recommendations rather than drowning in manual coding of hundreds of diary entries.

For teams running their first longitudinal study, Qualz provides templated study designs for common longitudinal methods — 4-week onboarding diary studies, 12-week adoption tracking, feature release impact studies — so you do not have to design the temporal structure from scratch.

When to Choose Longitudinal Over Cross-Sectional

Not every research question requires longitudinal data. Here is when the investment pays off:

Onboarding and adoption research. If you want to understand how users move from novice to proficient, you need to watch it happen over time. Cross-sectional interviews with users at different tenure levels are a poor substitute — they introduce survivorship bias and cannot capture the causal chain of events.

Churn investigation. Users who churn can rarely articulate the full trajectory that led to their decision. The frustrations accumulated gradually. The alternatives entered their awareness incrementally. A longitudinal study catches the degradation as it happens.

Feature impact assessment. A/B tests tell you whether a feature changed a metric. Longitudinal qualitative research tells you how and why — the behavioral adaptations, the workflow changes, the mental model shifts that the metric reflects.

Experience design for long-term engagement. If your product depends on sustained engagement — think enterprise tools, health platforms, educational products — understanding the long-term experience trajectory is essential. What sustains engagement past the novelty period? What triggers re-engagement after dormancy?

Getting Started With Your First Longitudinal Study

Start small. A 4-week diary study with 10 participants, collecting 3 entries per week, gives you 120 data points — enough to reveal meaningful temporal patterns without overwhelming your team.

Choose a research question that is inherently temporal: How does the new user experience evolve during the first month? How do users adapt their workflows after a major feature release? What triggers the transition from casual to power user?

Design your diary prompts to surface change. Collect consistently. And let AI handle the analytical complexity that has historically made longitudinal qualitative research a luxury only the largest research teams could afford.

The richest insights in qualitative research live in the spaces between time points — in the trajectories, the turning points, the gradual shifts that no single interview can capture. AI makes those spaces accessible.

Ready to run your first longitudinal qualitative study? Book a demo to see how Qualz.ai helps teams track experience over time with AI-powered analysis.