The regulatory landscape for drug development has shifted. Patient-reported outcomes (PROs) are no longer a nice-to-have supplement to clinical endpoints. They are becoming a requirement.

The FDA's Patient-Focused Drug Development (PFDD) initiative, the EMA's increasing emphasis on patient preference studies, and the growing expectations of Health Technology Assessment (HTA) bodies worldwide all point in the same direction: regulators want to hear from patients in their own words about what matters to them — symptom burden, treatment impact, quality of life, and the lived experience of disease.

This creates a significant operational challenge. The qualitative research methods traditionally used to capture patient voice — in-depth interviews, focus groups, concept elicitation studies — were designed for small samples. They are labor-intensive, expensive, and slow. Running 20 to 30 interviews for a single concept elicitation study can take months and cost hundreds of thousands of dollars.

Meanwhile, the evidence requirements keep expanding. Regulators want patient input at every stage of the drug development lifecycle, from early discovery through post-market surveillance. The gap between what is being asked for and what current methods can deliver is widening.

AI-moderated interviews offer a path through this gap — not by replacing human judgment, but by enabling qualitative rigor at a scale that traditional methods simply cannot achieve.

The Regulatory Push for Patient Voice

The shift toward patient-centricity in drug development is not new, but it has accelerated dramatically in recent years.

FDA Patient-Focused Drug Development

The FDA's PFDD program, whose methodological guidance series was mandated by the 21st Century Cures Act, has produced four guidance documents outlining how patient experience data should be collected, analyzed, and submitted. The core message is clear: clinical trial endpoints alone do not capture what matters most to patients.

PFDD Guidance 1 and 2 emphasize the importance of collecting qualitative data on disease symptoms and daily impacts directly from patients. Guidance 3 addresses the selection and development of clinical outcome assessments (COAs), many of which require qualitative concept elicitation as a foundational step. Guidance 4 covers how to incorporate COAs into endpoints for regulatory decision-making.

The practical implication is that sponsors developing new therapies need qualitative evidence — interview transcripts, thematic analyses, conceptual frameworks — demonstrating that their endpoints reflect what patients actually experience and care about.

EMA and Global Alignment

The EMA has followed a parallel trajectory. Its Patient Engagement Framework and the Regulatory Science Strategy to 2025 both call for systematic incorporation of patient perspectives into benefit-risk assessment. The ICH E8(R1) guideline on general considerations for clinical studies explicitly recognizes the importance of patient input in study design.

HTA bodies are equally demanding. NICE in the UK, G-BA in Germany, and HAS in France increasingly expect patient experience evidence as part of value dossiers. The days when a well-designed RCT alone could secure market access are fading.

What This Means in Practice

For clinical research organizations and pharmaceutical companies, the message translates into concrete requirements:

- Concept elicitation studies before endpoint selection, involving qualitative interviews with patients to identify relevant symptoms and impacts

- Cognitive debriefing studies to validate that PRO instruments are understood as intended

- Patient preference studies exploring willingness to accept treatment trade-offs

- Treatment burden assessments capturing the full impact of therapies on daily life

- Post-market qualitative surveillance monitoring real-world patient experience after launch

Each of these requires structured qualitative data collection from patient populations that are often geographically dispersed, medically fragile, and difficult to recruit.

Why Traditional PRO Methods Are Falling Short

The qualitative methods used in patient-centered outcomes research were developed in an era when a study involving 30 patients was considered comprehensive. Those methods — one-on-one semi-structured interviews and moderated focus groups — produce rich data. But they carry significant limitations when applied to the scale and pace that modern regulatory requirements demand.

The Scale Problem

A typical concept elicitation study involves interviews with 15 to 30 patients, conducted in three to five waves until thematic saturation is reached. Each interview runs 60 to 90 minutes, is conducted by a trained qualitative researcher, and must be transcribed and coded.

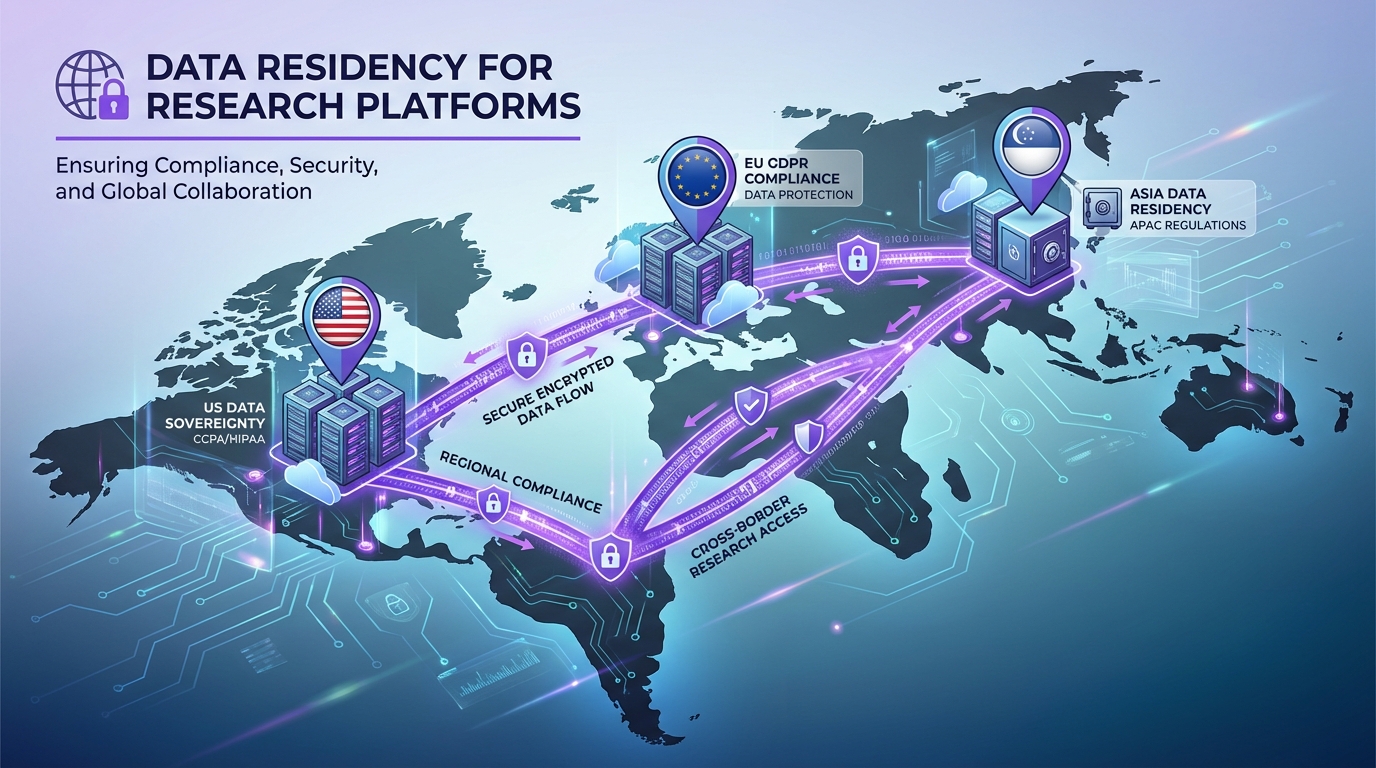

For a global submission, you may need to replicate this across multiple countries, languages, and cultural contexts. A single PRO development program can require 100 or more patient interviews before you have sufficient evidence for regulatory review.

At traditional research rates — one interviewer conducting two to three interviews per day, followed by hours of transcription and analysis — this timeline extends to months. And that is for a single study in a single indication.
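A back-of-envelope sketch of that timeline, using the interview counts and daily rates quoted above. The six hours of transcription and coding per interview is an illustrative assumption, not a figure from any study, and the function names are hypothetical.

```python
import math

def interviewer_days(n_interviews: int, per_day: float) -> int:
    """Working days one interviewer needs to complete all interviews."""
    return math.ceil(n_interviews / per_day)

def analysis_days(n_interviews: int, hours_each: float,
                  hours_per_day: float = 8.0) -> int:
    """Working days of transcription and coding (hours_each is assumed)."""
    return math.ceil(n_interviews * hours_each / hours_per_day)

# 100 interviews, as in a global PRO development program
fieldwork_fast = interviewer_days(100, 3)      # three interviews per day
fieldwork_slow = interviewer_days(100, 2)      # two interviews per day
# Assumption: 6 hours of transcription and coding per interview
desk_work = analysis_days(100, hours_each=6)

print(fieldwork_fast, fieldwork_slow, desk_work)  # 34 50 75
```

Under these assumptions, a single researcher would need roughly 110 to 125 working days for fieldwork and desk work combined, which is consistent with a timeline measured in months.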

The Consistency Problem

When multiple interviewers conduct qualitative interviews across sites and countries, consistency becomes a serious concern. Each interviewer brings their own style, their own follow-up instincts, and their own unconscious biases.

Regulatory reviewers know this. FDA reviewers have raised questions about inter-interviewer variability in PRO development submissions. When your concept elicitation study involves eight different interviewers across four countries, demonstrating that the same constructs were explored with the same depth and neutrality is difficult.

Moderator variability is a well-documented challenge in qualitative research. In clinical contexts, where the evidence directly informs regulatory decisions, the stakes are considerably higher.

The Cost Problem

Qualitative patient research is expensive. A single concept elicitation study can cost $200,000 to $500,000, depending on the indication, patient population, and geographic scope. A full PRO development program — including concept elicitation, item generation, cognitive debriefing, and psychometric validation — routinely exceeds $1 million.

These costs create perverse incentives. Companies cut corners on sample sizes, limit geographic diversity, or skip qualitative work entirely and rely on existing instruments that may not capture what matters most to their specific patient population.

The result is weaker evidence packages, longer regulatory review cycles, and ultimately less patient-centered drug development.

The Access Problem

Many patient populations are difficult to reach through traditional research methods. Patients with rare diseases are geographically scattered. Patients with severe conditions may be too ill to travel to a research site. Patients with cognitive impairments, pediatric populations, and caregivers all present unique recruitment and logistical challenges.

Traditional qualitative research requires patients to be available at a specific time, in a specific place (or on a specific video call), for a 60- to 90-minute conversation with a stranger. For many of the patients whose voices most need to be heard, this is an unreasonable ask.

How AI Interviews Maintain Rigor at Scale

AI-moderated interviews address these limitations not by simplifying the research, but by removing the operational bottlenecks that constrain it.

Consistent Protocol Execution

An AI interviewer follows the same discussion guide, with the same probing logic, for every single participant. The hundredth interview is conducted with the same rigor as the first.

This is not rigidity — a well-designed AI interview adapts its follow-up questions based on participant responses, exploring themes in depth when they emerge and moving on when a topic has been adequately covered. But the adaptation follows explicit, auditable rules rather than an individual interviewer's in-the-moment judgment.

For regulatory submissions, this consistency is a significant advantage. You can demonstrate exactly what was asked, why follow-up questions were triggered, and how the protocol ensured comprehensive coverage of the conceptual framework. Every interaction is automatically logged with complete audit trails suitable for regulatory review.
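To make "explicit, auditable rules" concrete, here is a minimal sketch of rule-based probing. Everything in it is hypothetical: the rule IDs, trigger terms, and follow-up wording are invented for illustration, and a production system would likely use something more robust than substring matching. The point is only that each follow-up can be traced back to a named rule.

```python
from dataclasses import dataclass
from typing import List, Optional, Tuple

@dataclass(frozen=True)
class ProbeRule:
    rule_id: str                    # identifier recorded when the rule fires
    trigger_terms: Tuple[str, ...]  # words that trigger the follow-up
    follow_up: str                  # the probe to ask

# Hypothetical rules taken from an imagined discussion guide
RULES = [
    ProbeRule("R1-fatigue", ("tired", "fatigue", "exhausted"),
              "How does that tiredness affect your day-to-day activities?"),
    ProbeRule("R2-sleep", ("sleep", "insomnia"),
              "Can you tell me more about how your sleep has changed?"),
]

def next_probe(response: str, fired: List[str]) -> Optional[Tuple[str, str]]:
    """Return (rule_id, follow_up) for the first unused rule whose trigger
    term appears in the response, or None if no rule applies."""
    text = response.lower()
    for rule in RULES:
        if rule.rule_id in fired:
            continue  # each probe is asked at most once per interview
        if any(term in text for term in rule.trigger_terms):
            fired.append(rule.rule_id)
            return rule.rule_id, rule.follow_up
    return None  # nothing matched: move on to the next guide topic

fired: List[str] = []
answer = "I feel exhausted most afternoons and my sleep is poor"
first = next_probe(answer, fired)
second = next_probe(answer, fired)
third = next_probe(answer, fired)
print(first[0], second[0], third)  # R1-fatigue R2-sleep None
```

Because `fired` records which rules triggered and in what order, the same list doubles as the explanation of why each follow-up was asked.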

Parallel Data Collection

An AI interviewer can conduct hundreds of interviews simultaneously. A concept elicitation study that would take months with human interviewers can be completed in days.

This is not just a speed advantage. It enables research designs that were previously impractical:

- Large-sample qualitative studies with 200 or more participants, providing robust evidence of thematic saturation

- Multi-country studies conducted simultaneously rather than sequentially, eliminating the timeline penalty of global research

- Rapid-cycle studies that can be repeated quarterly or even monthly to track evolving patient experiences

- Subgroup analyses with sufficient qualitative depth to compare experiences across disease severity levels, treatment regimens, or demographic groups

Patient-Friendly Participation

AI-moderated interviews can be completed asynchronously, on the patient's schedule, from their home. A patient with chronic fatigue can participate in short sessions across multiple days. A caregiver can respond during the brief windows when their care responsibilities allow. A patient in a rural area with no access to a research center can participate with nothing more than a smartphone.

This flexibility need not compromise data quality. Longitudinal qualitative research designs, where patients contribute observations over days or weeks rather than in a single intensive session, can actually produce richer data by capturing experiences as they unfold rather than relying on retrospective recall.

Automated Thematic Analysis

Qualitative coding is one of the most time-consuming steps in PRO research. A single concept elicitation study can generate hundreds of pages of transcripts that must be systematically coded, categorized, and analyzed.

AI-powered thematic analysis can process this volume in hours rather than weeks, identifying themes, tracking saturation curves, and generating conceptual frameworks that map directly to the constructs regulators care about.
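As an illustration of what tracking a saturation curve involves, the sketch below counts how many previously unseen codes each successive interview contributes. The stopping rule used here (three consecutive interviews with no new codes) is an assumption chosen for the example; studies define saturation in different ways, and the codes are invented.

```python
def saturation_curve(coded_interviews):
    """Number of previously unseen codes contributed by each interview, in order."""
    seen = set()
    new_counts = []
    for codes in coded_interviews:
        fresh = set(codes) - seen
        new_counts.append(len(fresh))
        seen |= fresh
    return new_counts

def saturation_point(new_counts, window=3):
    """1-based index of the first interview that starts `window` consecutive
    interviews with no new codes, or None if that never happens."""
    for i in range(len(new_counts) - window + 1):
        if all(count == 0 for count in new_counts[i:i + window]):
            return i + 1
    return None

# Invented codes for six interviews, listed in the order they were conducted
interviews = [
    {"fatigue", "pain"},
    {"pain", "sleep disruption"},
    {"fatigue", "anxiety"},
    {"pain"},
    {"sleep disruption", "fatigue"},
    {"anxiety"},
]
curve = saturation_curve(interviews)
print(curve, saturation_point(curve))  # [2, 1, 1, 0, 0, 0] 4
```

With larger samples the same calculation can be run per country or per subgroup, which shows whether saturation was reached everywhere or only in the pooled data.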

Crucially, this analysis can be made reproducible. With the model version, prompts, and coding parameters held fixed, rerunning the analysis should yield the same results. Change a coding parameter and you can immediately see how it affects the thematic structure. This level of analytical transparency is difficult to achieve with manual coding, where inter-rater reliability is an ongoing challenge.
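Inter-rater reliability is usually reported as an agreement statistic. The sketch below computes Cohen's kappa, one common choice, for two coders who each assigned a single code to the same ten excerpts. The text above does not name a specific statistic, so treat this as one option; the labels are invented. The same function could compare two runs of an automated coder.

```python
from collections import Counter

def cohens_kappa(rater_a, rater_b):
    """Cohen's kappa for two raters who each assigned one label per item."""
    if len(rater_a) != len(rater_b) or not rater_a:
        raise ValueError("ratings must be non-empty and of equal length")
    n = len(rater_a)
    # Observed agreement: share of items given the same label by both raters
    observed = sum(a == b for a, b in zip(rater_a, rater_b)) / n
    # Chance agreement, from each rater's marginal label frequencies
    freq_a, freq_b = Counter(rater_a), Counter(rater_b)
    expected = sum(freq_a[label] * freq_b[label] for label in freq_a) / (n * n)
    if expected == 1.0:
        return 1.0  # both raters used one identical label throughout
    return (observed - expected) / (1.0 - expected)

coder_1 = ["pain"] * 5 + ["fatigue"] * 5
coder_2 = ["pain"] * 4 + ["fatigue"] * 5 + ["pain"]
kappa = cohens_kappa(coder_1, coder_2)
print(round(kappa, 2))  # 0.6 (80% raw agreement, 50% expected by chance)
```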

Use Cases in Clinical Research

The applications span the full drug development lifecycle.

Treatment Burden Assessment

Understanding the full burden of treatment — not just side effects, but the daily impact of medication regimens, monitoring requirements, dietary restrictions, and healthcare visits — is increasingly central to regulatory and HTA assessments.

AI interviews can systematically explore treatment burden across large patient samples, capturing the specific ways that therapy interferes with work, relationships, sleep, finances, and daily activities. Because the interviews follow a consistent protocol, the resulting data supports quantitative burden scores alongside rich qualitative narratives.

Symptom Concept Elicitation

Identifying the symptoms and impacts that matter most to patients is the foundation of meaningful endpoint selection. AI-moderated concept elicitation interviews can reach saturation more reliably by including larger, more diverse patient samples.

When a concept elicitation study includes 200 patients rather than 25, you can be far more confident that you have captured the full range of relevant symptoms, including those experienced by subpopulations that a smaller sample might miss.
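A simple probability sketch shows why sample size matters here. Assume, purely for illustration, that a symptom affects 5% of the patient population and that participants are sampled independently. The chance that at least one participant has the symptom is then 1 - (1 - p)^n. Real recruitment is rarely a random sample, so these numbers are a guide rather than a guarantee.

```python
def detection_probability(prevalence: float, n: int) -> float:
    """Probability that at least one of n independently sampled participants
    has a symptom with the given prevalence."""
    return 1.0 - (1.0 - prevalence) ** n

# Assumed prevalence of 5%; sample sizes from the comparison above
p_small = detection_probability(0.05, 25)
p_large = detection_probability(0.05, 200)
print(f"n=25: {p_small:.2f}, n=200: {p_large:.4f}")
```

Under these assumptions a 25-patient study has roughly a 72% chance of including anyone with the symptom at all, while a 200-patient study is all but certain to.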

Cognitive Debriefing at Scale

Cognitive debriefing — testing whether patients understand and interpret PRO instrument items as intended — is a critical validation step. Traditional debriefing studies involve 10 to 15 patients per language version.

AI-moderated debriefing interviews can test comprehension across larger samples, identifying interpretation issues that small studies might miss. They can also be conducted simultaneously across multiple languages, dramatically accelerating the timeline for international instrument validation.

Real-World Patient Experience Monitoring

Post-launch, pharmaceutical companies need ongoing evidence about how patients experience their therapies in routine clinical practice. This real-world evidence supports label expansion, HTA resubmissions, and pharmacovigilance.

AI interviews enable continuous qualitative surveillance — regular check-ins with patient panels that track evolving experiences over months or years. This produces a living evidence base that grows richer over time, rather than a single snapshot from a one-time study.

Care Experience Research

Health systems and integrated delivery networks increasingly conduct qualitative research to understand the patient experience across care pathways. AI interviews can gather structured narratives from thousands of patients about their experiences with specific services, transitions of care, or chronic disease management programs.

The cost efficiency of AI-powered approaches makes it feasible to conduct this research at a scale that would be prohibitively expensive with traditional methods — and to repeat it frequently enough to drive continuous improvement.

Maintaining Regulatory-Quality Standards

Scale without rigor is not useful in regulated environments. The value of AI-moderated interviews in clinical research depends on meeting the same quality standards that regulators expect from traditional qualitative methods.

Protocol Documentation

Every AI-moderated interview is fully documented: the discussion guide, the probing logic, the decision rules for follow-up questions, and the complete transcript of every interaction. This documentation package provides regulators with full visibility into the data collection process.

Audit Trails

Regulatory submissions require evidence that data was collected systematically and without bias. AI interviews generate automatic audit trails that document every question asked, every response received, and every algorithmic decision made during the interview. This level of documentation exceeds what is typically available for human-moderated interviews.
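One common way to make an audit trail tamper-evident is to chain entries together with hashes, so that editing any earlier entry invalidates the chain. The sketch below is a generic illustration of that idea using Python's standard library. It is not a description of how any particular product stores its logs, and the event types and field names are hypothetical. Timestamps are left out to keep the example deterministic.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry

def _digest(body: dict) -> str:
    """SHA-256 over a canonical JSON encoding of the entry body."""
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode("utf-8")).hexdigest()

def append_event(log: list, event_type: str, payload: dict) -> dict:
    """Append an entry whose hash covers its content and the previous hash."""
    prev_hash = log[-1]["hash"] if log else GENESIS
    body = {"seq": len(log), "type": event_type, "payload": payload, "prev": prev_hash}
    entry = dict(body, hash=_digest(body))
    log.append(entry)
    return entry

def verify(log: list) -> bool:
    """Recompute every hash and check that each entry links to the one before."""
    prev_hash = GENESIS
    for i, entry in enumerate(log):
        body = {k: v for k, v in entry.items() if k != "hash"}
        if entry["seq"] != i or entry["prev"] != prev_hash:
            return False
        if _digest(body) != entry["hash"]:
            return False
        prev_hash = entry["hash"]
    return True

log = []
append_event(log, "question", {"text": "What symptoms bother you most?"})
append_event(log, "response", {"text": "Mostly the fatigue."})
append_event(log, "probe_decision", {"rule_id": "R1-fatigue"})
intact = verify(log)

log[1]["payload"]["text"] = "edited after the fact"  # simulate tampering
still_valid = verify(log)
print(intact, still_valid)  # True False
```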

Data Quality Controls

AI systems can apply real-time quality checks during interviews — identifying responses that are too brief for meaningful analysis, flagging potential misunderstandings, and ensuring that all required topics are adequately explored before the interview concludes.
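Two of these checks are simple enough to sketch: flagging responses that are too brief, and confirming that every required topic was covered before the interview ends. The eight-word threshold, the topic names, and the turn structure are all assumptions made for this example.

```python
MIN_WORDS = 8  # assumed threshold for a response worth analysing

def brief_responses(turns, min_words=MIN_WORDS):
    """Indices of participant responses shorter than min_words."""
    return [i for i, turn in enumerate(turns)
            if len(turn["response"].split()) < min_words]

def uncovered_topics(turns, required_topics):
    """Required topics that no turn has addressed yet, in guide order."""
    covered = {turn["topic"] for turn in turns}
    return [topic for topic in required_topics if topic not in covered]

# Hypothetical interview state after two turns
turns = [
    {"topic": "symptoms",
     "response": "Mostly joint pain in the mornings, and it eases a little by lunchtime."},
    {"topic": "daily_impact", "response": "It's fine."},
]
required = ["symptoms", "daily_impact", "treatment_burden"]

needs_probe = brief_responses(turns)
remaining = uncovered_topics(turns, required)
print(needs_probe, remaining)  # [1] ['treatment_burden']
```

In this example the interviewer would re-probe the second answer and would not close the session until treatment burden had been discussed.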

Human Oversight

AI-moderated interviews do not eliminate the need for human expertise. Qualitative researchers design the discussion guides, define the conceptual frameworks, review the thematic analyses, and make the interpretive judgments that inform regulatory submissions. The AI handles execution at scale; humans provide the scientific judgment.

A New Paradigm for Patient-Centered Evidence

The convergence of regulatory demand for patient voice and AI's ability to conduct qualitative research at scale represents a genuine shift in how clinical evidence is generated.

This is not about replacing the depth of qualitative research with shallow automation. It is about removing the operational constraints that have forced patient-centered research to remain small, slow, and expensive — precisely at the moment when the demand for that research is expanding dramatically.

Pharmaceutical companies that adopt AI-moderated patient interviews will be able to build richer evidence packages, reach more diverse patient populations, and respond to regulatory requests faster. More importantly, they will be able to make the patient voice a genuine input to drug development decisions, rather than a checkbox exercise constrained by budget and timeline.

The patients whose experiences inform these decisions deserve to be heard — not a carefully selected 25, but the hundreds or thousands whose collective voice can genuinely represent the diversity of disease experience. AI makes that possible.

Ready to bring the patient voice into your research at scale? Book a demo to see how Qualz.ai helps clinical research teams gather rich qualitative patient data — with the rigor regulators expect and the scale your studies demand.