The Democratization Dilemma

Every growing product org hits the same inflection point: research demand outpaces research capacity. Product managers need user insights for every sprint. Designers want validation before every major decision. Growth teams want to understand churn signals. And your research team — if you have one — is drowning.

The instinct is obvious: let everyone do research. Give product managers interview templates. Train designers on usability testing. Democratize the whole thing.

Here's the problem: most democratization efforts produce one of two outcomes. Either the research quality degrades so badly that teams are making decisions on flawed data (worse than no data), or researchers become bottleneck gatekeepers who slow everything down trying to maintain standards.

Neither works. But there's a middle path that does.

Why Untrained Research Is Dangerous

Before we solve this, let's be honest about why "just let everyone do interviews" fails.

Leading questions corrupt data silently. A product manager who's spent three months building a feature will unconsciously frame questions to validate their work. "Don't you think this new dashboard makes reporting easier?" isn't research — it's confirmation theater. The PM walks away feeling validated. The data is worthless.

Sample bias compounds. Non-researchers tend to recruit the easiest participants — existing power users, friendly customers, internal stakeholders. The insights from these conversations systematically over-represent satisfied, engaged users and under-represent the silent majority who churned, struggled, or never activated.

Analysis without training produces narratives, not insights. Reading five interview transcripts and pulling out quotes that support your hypothesis isn't qualitative analysis. It's cherry-picking disguised as research. Real thematic analysis requires systematic coding, pattern recognition across the full dataset, and active effort to find disconfirming evidence.

Ethical risks multiply. Informed consent, data handling, participant well-being — these aren't bureaucratic checkboxes. A non-researcher might inadvertently pressure a participant, mishandle sensitive disclosures, or make promises about product changes they can't keep.

The research community's instinct to protect quality is correct. Its typical solution, hoarding all research behind a centralized team, is not.

The Tiered Research Model

The framework that actually works is a tiered system that matches research complexity to researcher skill level, with guardrails that scale.

Tier 1: Self-Service (Anyone Can Do It)

These are structured, low-risk research activities with built-in guardrails:

- Templated surveys with pre-validated question banks

- Unmoderated usability tests on existing features using scripted tasks

- Customer feedback synthesis from support tickets, reviews, NPS comments

- Competitor experience reviews using standardized evaluation frameworks

The key: these activities use pre-designed research instruments that eliminate most ways a non-researcher can introduce bias. The questions are already written. The analysis framework is already defined. The guardrails are structural, not behavioral.

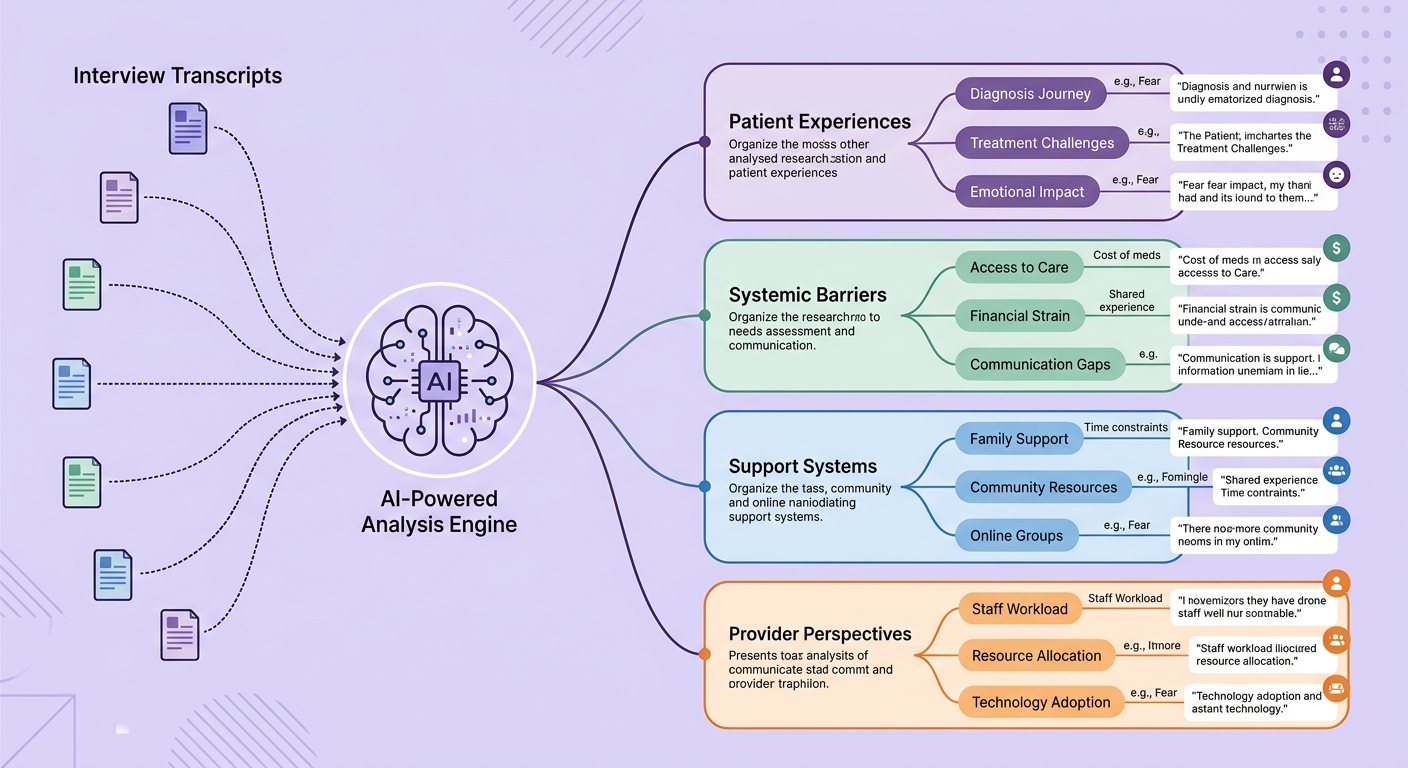

This is where AI-powered research platforms create massive leverage. When the platform handles question design, response analysis, and theme extraction, the skill floor drops dramatically. A product manager using an AI-assisted survey platform with smart branching logic and automated thematic coding can produce genuinely useful insights without formal research training.

Tier 2: Guided Research (Trained + Supported)

These require some training and researcher oversight:

- Moderated user interviews following researcher-approved discussion guides

- Concept testing with structured evaluation criteria

- Diary studies with researcher-designed protocols

- A/B test interpretation beyond basic metrics

For Tier 2, the research team's role shifts from doing to enabling. They design the research instruments, train PMs and designers on effective interview techniques, review discussion guides before sessions, and spot-check recordings for quality.

The training isn't a one-time workshop. It's ongoing coaching with feedback loops. After each study, a researcher reviews a sample of sessions and provides specific feedback: "In minute 12, you asked a leading question — here's how to reframe it." This is how competence actually builds.

Tier 3: Expert-Only (Researchers Lead)

Some research should never be democratized:

- Foundational/generative research exploring new problem spaces

- Sensitive topics (health, finances, vulnerable populations)

- Strategic research informing company-level decisions

- Longitudinal studies requiring methodological consistency

- Cross-functional synthesis connecting insights across multiple studies

These require the full weight of research expertise — study design, sophisticated sampling, advanced analysis methods, and the judgment to know when findings are robust enough to act on versus when they need more investigation.
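
To make the tier boundaries concrete, here is a minimal sketch of how an intake form might route a request to a tier. The method names, the `StudyRequest` fields, and the rule that anything unrecognized escalates to Tier 3 are illustrative assumptions, not a standard; adapt them to your own intake process.

```python
from dataclasses import dataclass
from enum import IntEnum


class Tier(IntEnum):
    SELF_SERVICE = 1
    GUIDED = 2
    EXPERT_ONLY = 3


# Hypothetical method labels, loosely mirroring the tier lists above.
TIER_1_METHODS = {
    "templated_survey",
    "unmoderated_usability_test",
    "feedback_synthesis",
    "competitor_review",
}
TIER_2_METHODS = {
    "moderated_interview",
    "concept_test",
    "diary_study",
    "ab_test_interpretation",
}


@dataclass
class StudyRequest:
    method: str
    sensitive_topic: bool = False  # health, finances, vulnerable populations
    company_level: bool = False    # informs a company-level decision
    generative: bool = False       # explores a new problem space


def assign_tier(request: StudyRequest) -> Tier:
    """Route a request to the lowest tier that can safely handle it."""
    # Risk flags override the method: these always go to researchers.
    if request.sensitive_topic or request.company_level or request.generative:
        return Tier.EXPERT_ONLY
    if request.method in TIER_1_METHODS:
        return Tier.SELF_SERVICE
    if request.method in TIER_2_METHODS:
        return Tier.GUIDED
    # Assumption: unknown methods escalate rather than default to self-service.
    return Tier.EXPERT_ONLY
```

The design choice worth noting is the order of the checks: risk is evaluated before method, so a templated survey about a health condition still lands with the research team.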

Building the Infrastructure

The tiered model only works if you build the infrastructure to support it.

Research ops is the force multiplier. Someone needs to own participant recruitment pipelines, research tooling, template libraries, quality metrics, and the training program. This is research operations, and it's one of the most underused roles in product organizations. One good ResearchOps person can multiply your org's research capacity far beyond what another individual researcher would add.

Centralized insight repositories prevent redundancy. Teams doing decentralized research will unknowingly duplicate each other's work unless insights are stored, tagged, and searchable. When a PM can search "onboarding friction" and find three studies from the past quarter, they either build on that foundation or realize the question is already answered.
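
As a rough illustration of "stored, tagged, and searchable," the sketch below models a repository as an in-memory list with a keyword search. The `Insight` fields and the `InsightRepository` class are hypothetical; a real repository would sit on a database or a dedicated tool, but the shape of the query is the same.

```python
from __future__ import annotations

from dataclasses import dataclass
from datetime import date


@dataclass
class Insight:
    title: str
    summary: str
    tags: set[str]
    study_date: date


class InsightRepository:
    """Toy in-memory store; stands in for whatever tool your org uses."""

    def __init__(self) -> None:
        self._insights: list[Insight] = []

    def add(self, insight: Insight) -> None:
        self._insights.append(insight)

    def search(self, query: str, since: date | None = None) -> list[Insight]:
        """Case-insensitive match on tags, title, or summary; newest first."""
        q = query.lower()
        hits = []
        for insight in self._insights:
            if since is not None and insight.study_date < since:
                continue
            in_tags = any(q == tag.lower() for tag in insight.tags)
            in_text = q in insight.title.lower() or q in insight.summary.lower()
            if in_tags or in_text:
                hits.append(insight)
        return sorted(hits, key=lambda i: i.study_date, reverse=True)
```

A PM would call something like `repo.search("onboarding friction", since=date(2026, 1, 1))` before writing a new research request.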

Quality dashboards keep everyone honest. Track metrics such as the percentage of studies using approved templates, participant diversity scores, time from research to decision, and researcher satisfaction with democratized study quality. What gets measured gets managed.
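
Two of these metrics are simple enough to compute from a study log. The sketch below assumes a hypothetical `StudyRecord` with a template flag and two dates; the field names are placeholders, and diversity scores and satisfaction ratings are left out because they depend on how your org defines them.

```python
from dataclasses import dataclass
from datetime import date
from statistics import median
from typing import Optional


@dataclass
class StudyRecord:
    used_approved_template: bool
    completed_on: date
    decision_on: Optional[date] = None  # None if no decision has been made yet


def template_adoption_rate(studies: list[StudyRecord]) -> float:
    """Share of studies (0.0 to 1.0) that used an approved template."""
    if not studies:
        return 0.0
    return sum(s.used_approved_template for s in studies) / len(studies)


def median_days_to_decision(studies: list[StudyRecord]) -> Optional[float]:
    """Median days from study completion to decision, ignoring open studies."""
    gaps = [
        (s.decision_on - s.completed_on).days
        for s in studies
        if s.decision_on is not None
    ]
    return median(gaps) if gaps else None
```

Using the median rather than the mean keeps one long-stalled decision from distorting the dashboard.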

Ethical review stays centralized. Even Tier 1 research should pass through a lightweight ethical review — automated where possible, human-reviewed for anything involving sensitive data or vulnerable populations. This is non-negotiable and shouldn't be democratized.

The AI Acceleration Layer

Here's where this gets interesting for 2026. AI is collapsing the skill gap between Tier 1 and Tier 2 research.

Modern AI research tools can:

- Detect leading questions in discussion guides before they reach participants

- Monitor interviews in real time and flag when a moderator is introducing bias

- Automate thematic analysis across hundreds of responses with consistent coding that doesn't degrade with volume

- Generate research briefs from raw data that surface both confirming and disconfirming evidence

- Recommend sample sizes and recruitment criteria based on research questions

This doesn't replace researcher judgment. It augments everyone else's capability while freeing researchers to focus on Tier 3 work — the strategic, complex, high-stakes research that actually requires deep expertise.
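
To show what "detect leading questions" means at its simplest, here is a deliberately naive keyword heuristic. It is not how any particular AI research tool works; the pattern list is an assumption made for illustration, and a production system would rely on a language model rather than regular expressions. It does catch the dashboard example from earlier in this piece.

```python
import re

# Hypothetical phrasings that tend to signal a leading question.
LEADING_PATTERNS = [
    r"\bdon'?t you (think|agree|feel)\b",
    r"\bwouldn'?t you (say|agree)\b",
    r"\bisn'?t (it|this|that)\b",
    r"\bhow much (do|did) you (like|love|enjoy)\b",
]
_COMPILED = [re.compile(p, re.IGNORECASE) for p in LEADING_PATTERNS]


def flag_leading_questions(questions: list[str]) -> list[str]:
    """Return the questions that match any known leading pattern."""
    flagged = []
    for question in questions:
        # Normalize curly apostrophes so "Don’t" and "Don't" match alike.
        normalized = question.replace("\u2019", "'")
        if any(pattern.search(normalized) for pattern in _COMPILED):
            flagged.append(question)
    return flagged


guide = [
    "Don't you think this new dashboard makes reporting easier?",
    "Walk me through the last time you built a report.",
]
for question in flag_leading_questions(guide):
    print("Review before use:", question)
```

Even a crude check like this, run on a discussion guide before a session, gives a Tier 2 interviewer a prompt to reframe the question as an open one.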

The organizations winning at research democratization in 2026 aren't choosing between quality and scale. They're using AI-powered research infrastructure to get both. And the gap between companies that figure this out and those still debating whether PMs should be "allowed" to talk to customers is widening fast.

Making the Shift

If you're a research leader reading this, here's your playbook:

- Audit current demand. Map every research request from the past quarter. Categorize by tier. You'll likely find 60-70% is Tier 1, 20-25% is Tier 2, and only 10-15% truly requires Tier 3 expertise.

- Build Tier 1 first. Create templated surveys, usability test scripts, and analysis frameworks. Deploy an AI-assisted platform that handles the mechanical work. Get three PM teams piloting within 30 days.

- Launch a Tier 2 training cohort. Pick 5-8 motivated PMs and designers. Run a 4-week coaching program with live practice and feedback. These become your "research champions" who mentor others.

- Protect Tier 3 capacity. If your researchers are spending 70% of their time on Tier 1 work, they have no capacity for the strategic research that actually moves the business. Democratization isn't about doing less research — it's about doing more of the right research.

- Measure and iterate. Track research volume, quality, and impact across all tiers. Adjust the tier boundaries based on what you learn.
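
The audit in the first step of the playbook comes down to counting. As a small sketch, assuming each request from the past quarter has already been labeled with a tier number by hand, the share per tier is:

```python
from collections import Counter


def tier_shares(request_tiers: list[int]) -> dict[int, float]:
    """Map each tier to its share of all requests (0.0 to 1.0)."""
    counts = Counter(request_tiers)
    total = sum(counts.values())
    if total == 0:
        return {}
    return {tier: counts[tier] / total for tier in sorted(counts)}


# Illustrative labels only: 13 Tier 1, 5 Tier 2, and 2 Tier 3 requests.
quarter = [1] * 13 + [2] * 5 + [3] * 2
for tier, share in tier_shares(quarter).items():
    print(f"Tier {tier}: {share:.0%}")
```

The sample list is made up to resemble the split described above; your own numbers are the point of the exercise, and they set how much Tier 1 infrastructure to build first.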

The companies that build research as an organizational capability — not just a team function — will have a structural advantage in understanding their users. And understanding users remains the single highest-leverage activity in product development.

Research democratization isn't about lowering the bar. It's about building more bars at different heights and giving everyone the right ladder.