The False Dichotomy: Full Study or Nothing

Product teams live in a constant tension: ship fast or research first. The problem is not that teams do not value research -- it is that the perceived cost of "doing research" feels incompatible with the sprint cycle. When the only research model you know involves a two-week recruitment phase, eight one-hour interviews, a week of analysis, and a polished report, it is no wonder product managers default to gut instinct.

But this is a false dichotomy. Between "full formal study" and "guessing" lies a rich landscape of lightweight validation methods that can stress-test critical assumptions in days rather than weeks.

The teams shipping the best products are not choosing between speed and evidence. They are matching the weight of their research method to the weight of the decision.

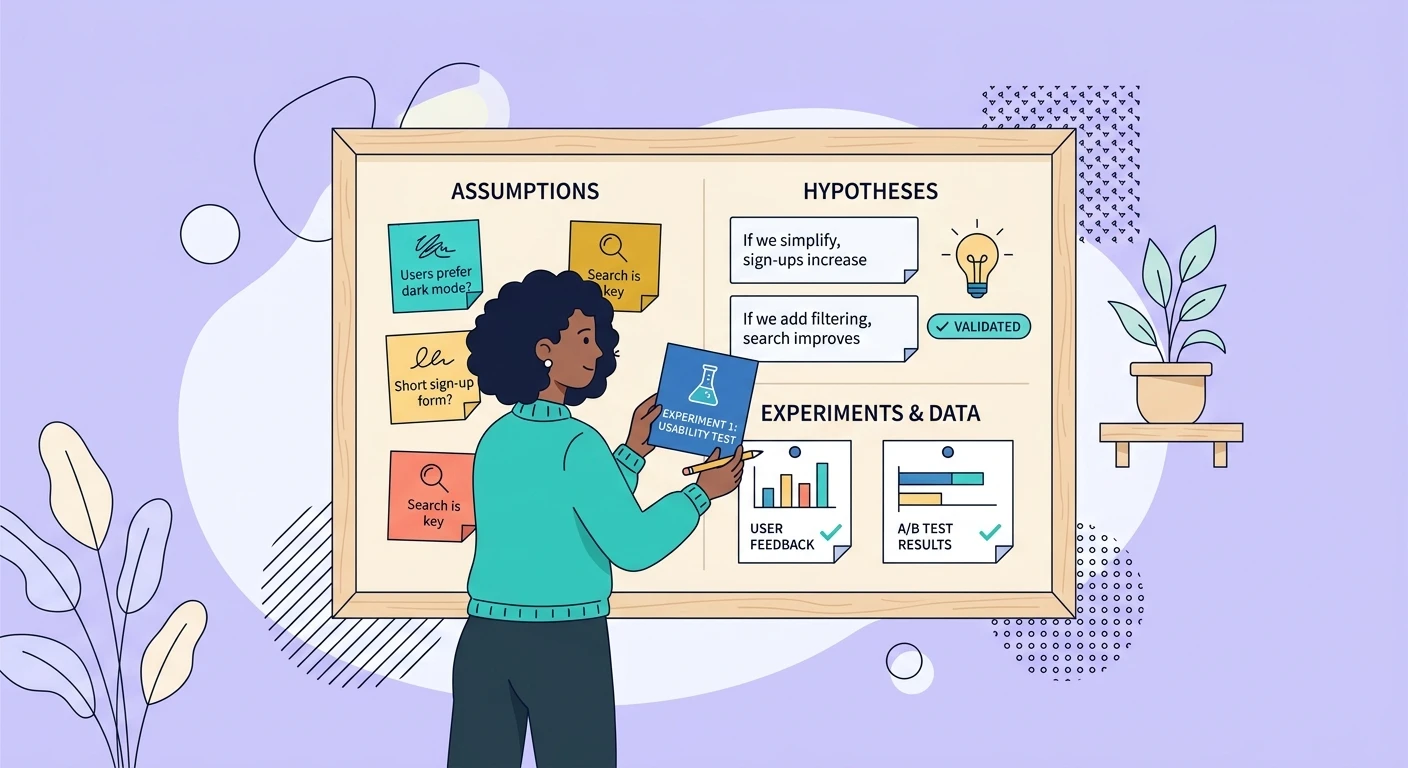

The Assumption Stack: What Actually Needs Validation

Before choosing a method, clarify what you are actually trying to validate. Most product assumptions fall into four categories:

Existence assumptions -- "This problem exists for our users." These are the cheapest to validate because evidence is usually already sitting in support tickets, session recordings, and feedback channels. The signal-to-noise problem in customer feedback is real, but even noisy data can confirm or deny existence.

Magnitude assumptions -- "This problem is painful enough to change behavior." These are harder to validate passively: they require some form of user contact, though not necessarily a full interview.

Solution assumptions -- "Our proposed solution actually addresses the pain." This is where most teams over-invest in fidelity too early. You do not need a pixel-perfect prototype to test whether your solution concept resonates.

Willingness assumptions -- "Users will adopt this solution given the switching cost." The trickiest to validate without a live product, but not impossible.

Five Lightweight Validation Techniques

1. The Five-Conversation Sprint

Forget the 12-15 participant samples common in formal qualitative studies. For assumption validation, five focused 15-minute conversations are often enough to surface the critical signal. This is not a study -- it is a pulse check.

The key: extreme focus. One assumption per sprint. Three questions maximum. No discussion guide longer than a sticky note.

Recruit from people who already use, or recently used, your product: recent support contacts, power users, churned accounts. Skip the formal recruitment process. A Slack message, an in-app intercept, or a personal email can often get you five conversations within 48 hours.

As research on interview design shows, the quality of your questions matters more than the number of your participants.

2. The Existing Data Audit

Before talking to anyone, exhaust what you already know. Most organizations are sitting on mountains of unanalyzed qualitative data:

- Customer support transcripts from the last 90 days

- Sales call recordings mentioning the feature area

- App store reviews and G2 feedback

- Community forum threads

- Previous research studies that touched adjacent topics

An afternoon of systematic review often validates (or kills) assumptions before you recruit a single participant. The challenge is analyzing this data at scale without losing nuance, but modern AI-assisted analysis tools can make this feasible in hours rather than weeks.
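To make a first pass through this data concrete, here is a minimal Python sketch of a keyword tally over exported text (support transcripts, reviews, forum posts). It is a deliberately crude stand-in, not the AI-assisted analysis described above: it only counts how many documents mention each theme, so it can suggest whether a problem shows up at all, not why. The theme names and keywords in `THEMES` are hypothetical placeholders; swap in terms tied to the assumption you are testing.

```python
import re
from collections import Counter

# Hypothetical themes and keywords; replace with terms tied to your assumption.
THEMES = {
    "export": ["export", "csv", "download"],
    "permissions": ["permission", "access", "role"],
}


def tally_themes(documents, themes):
    """Count how many documents mention each theme at least once.

    A word matches a keyword if it starts with that keyword, so
    "permission" also catches "permissions".
    """
    counts = Counter()
    for doc in documents:
        words = re.findall(r"[a-z]+", doc.lower())
        for theme, keywords in themes.items():
            if any(word.startswith(k) for word in words for k in keywords):
                counts[theme] += 1
    return counts


if __name__ == "__main__":
    # Hypothetical ticket snippets, not real data.
    tickets = [
        "Can't export to CSV from the dashboard",
        "Need admin permissions for my team",
        "Export button is missing on mobile",
    ]
    for theme, n in tally_themes(tickets, THEMES).most_common():
        print(f"{theme}: {n} of {len(tickets)} documents")
```

Prefix matching is a trade-off: it catches plurals but also produces false positives (for example, "access" matches "accessory"), which is one reason to read a sample of the matched documents before trusting the counts.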

3. The Concept Value Test

Skip the prototype. Write a one-paragraph description of the proposed solution -- what it does, who it is for, what problem it solves. Show it to ten target users and ask two questions: "Would this solve a real problem for you?" and "What would you stop using if this existed?"

The second question is the killer. It forces concrete tradeoff thinking rather than hypothetical enthusiasm. If nobody can name something they would stop using, your concept has a demand problem regardless of how well you execute it.

4. The Behavioral Proxy

Instead of asking people what they would do, observe what they already do. Behavioral proxies use existing actions as evidence for assumptions:

- If you assume users want feature X, check how many have built workarounds (spreadsheets, integrations, manual processes)

- If you assume a workflow is painful, measure abandonment rates at specific steps

- If you assume willingness to pay, look at what adjacent tools users already pay for

This connects to the broader principle that product analytics without qualitative context leads teams astray -- but the reverse is also true. Behavioral data without qualitative interpretation is ambiguous. Use it to generate hypotheses, then validate with a quick conversation.
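For the abandonment-rate proxy, the arithmetic is simple: at each step, take the share of users who reached it but did not reach the next one. The following is a minimal Python sketch, assuming you can export the number of users who reached each step of a workflow, in order. The step names and counts are hypothetical, not real data. The step with the highest drop-off is a hypothesis to take into a conversation, not a conclusion.

```python
def step_abandonment(funnel):
    """Return (step, drop_off_rate) for each step except the last.

    `funnel` is an ordered list of (step_name, users_who_reached_step).
    Drop-off at a step is the fraction of users who reached it
    but did not reach the next step.
    """
    rates = []
    for (step, reached), (_, reached_next) in zip(funnel, funnel[1:]):
        rate = (reached - reached_next) / reached if reached else 0.0
        rates.append((step, rate))
    return rates


def worst_step(funnel):
    """Return the (step, rate) pair with the highest drop-off."""
    return max(step_abandonment(funnel), key=lambda pair: pair[1])


if __name__ == "__main__":
    # Hypothetical counts for a four-step setup flow.
    funnel = [
        ("start", 1000),
        ("details", 800),
        ("payment", 400),
        ("confirm", 380),
    ]
    for step, rate in step_abandonment(funnel):
        print(f"{step}: {rate:.0%} drop off before the next step")
    print("Ask users about:", worst_step(funnel)[0])
```

Here the "details" step loses half its users, so that is where a quick follow-up conversation would start.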

5. The Pre-Mortem Interview

Gather your team and ask: "It is six months from now and this feature completely failed. Why?" Collect the failure scenarios. Then spend one day talking to 3-5 users specifically about the top failure scenario.

This inverts the typical research question from "will this work?" to "what would make this fail?" -- which tends to surface risks that optimistic feature discussions miss.

Knowing When Lightweight Is Not Enough

These methods have limits. They work for assumption validation -- confirming that you are pointed in the right direction. They do not replace full studies when:

- The decision is irreversible or extremely expensive to reverse

- You are entering a completely new market where your intuitions are unreliable

- Regulatory or safety concerns demand rigorous evidence

- Stakeholders require formal documentation for buy-in

The skill is matching method weight to decision weight. A feature toggle that can be reverted in an hour does not need the same evidence threshold as a platform migration that will take eighteen months. The principles of research triangulation apply -- multiple lightweight signals can provide confidence that a single method cannot.

Building the Validation Habit

The goal is not to replace formal research but to make evidence-gathering a reflexive habit rather than a formal event. When validation takes two days instead of six weeks, it stops being a blocker and starts being a default.

The most effective product teams we see have made this shift: research is not something you commission. It is something you do, continuously, in proportion to the uncertainty you face. Every assumption gets some level of scrutiny. The question is never "should we validate?" but "how lightweight can we make this validation while still getting a useful signal?"

That question, asked consistently, is worth more than any single study.