Every Channel Is Screaming. Almost None of It Is Useful.

The modern product team has more customer feedback than any team in history. Support tickets stream in at hundreds per day. NPS surveys generate thousands of open-ended comments per quarter. Sales calls are recorded and transcribed. Social media mentions ping Slack channels in real time. App store reviews accumulate relentlessly. Community forums buzz with feature requests.

And yet, product decisions still get made on gut feel, executive opinion, and whichever customer yelled loudest last week.

The problem is not a lack of feedback. It is a signal-to-noise ratio that has collapsed. When everything is feedback, nothing is insight. Teams that try to "listen to all customer voices equally" end up paralyzed by contradictory signals, chasing edge cases, or building for the vocal minority while the silent majority churns.

Why Volume Destroys Signal

Feedback channels have fundamentally different signal properties. A support ticket is written by someone with an immediate problem -- it captures frustration at a specific moment, not strategic product direction. An NPS comment is shaped by the recency effect and the artificial constraint of a text box that follows a numeric score. A sales call objection reflects the prospect's negotiating position, not necessarily their actual decision criteria. A social media post optimizes for engagement and social signaling, not accuracy.

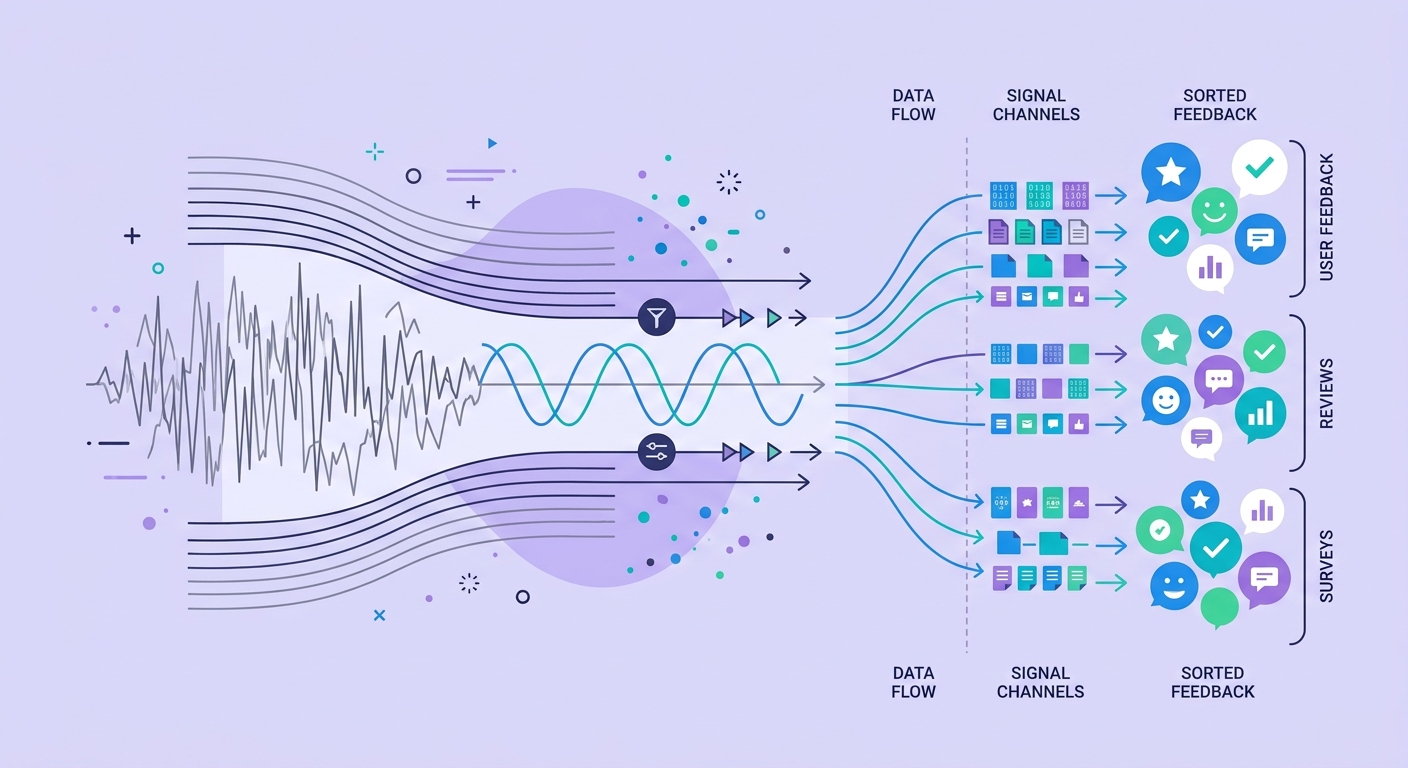

Treating all of these as equivalent "voice of customer" data is like treating all electromagnetic radiation as visible light. You need different instruments to detect different signals, and you need to understand what each channel actually measures versus what you wish it measured.

The teams that excel at turning interview transcripts into roadmap decisions understand this implicitly. They know that raw feedback is input, not output. The transformation from feedback to insight requires deliberate, structured processing.

The Five Failure Modes

1. The Loudest Voice Wins

Enterprise customers with direct access to the executive team get features prioritized regardless of whether the need is widespread. A single passionate user on Twitter triggers a roadmap change that affects thousands of other users. The customer who writes a 2,000-word email gets attention that the hundred customers who silently churned never will.

2. Channel Bias Masquerades as Consensus

When 80% of your feedback comes from support tickets, your roadmap optimizes for reducing support load rather than driving growth or retention. When sales calls dominate the input, you build for prospects rather than existing customers. The channel you listen to most shapes the product you build, regardless of whether that channel represents your most valuable users.

3. Recency Overwhelms Frequency

The feedback that arrived today feels more urgent than the pattern that has been accumulating for six months. A sudden spike in complaints about a minor bug overshadows the slow-building frustration with a fundamental workflow problem. Teams respond to spikes when they should respond to trends.

4. Feature Requests Replace Problem Statements

Customers describe solutions, not problems. "I need an export to CSV button" is not an insight -- it is one possible solution to an unstated problem that might be better solved five other ways. When teams take feature requests at face value, they build a product that is a patchwork of customer-designed solutions rather than a coherent experience. Understanding the real cost of unanalyzed qualitative data means recognizing that raw requests are not insights.

5. Sentiment Scores Hide Complexity

Aggregate sentiment scores -- whether from NPS, CSAT, or AI-generated sentiment analysis -- create the illusion of understanding. A sentiment score of 0.7 tells you nothing about why, what to do about it, or whether the underlying issues are one big problem or fifty small ones. As we explored in our analysis of why sentiment alone misses the story, the qualitative context behind the number is where actionable insight lives.

Building a Systematic Triage Framework

Layer 1: Channel-Appropriate Extraction

Different channels require different extraction methods. Support tickets should be mined for recurring friction patterns, not individual feature requests. NPS comments should be clustered by theme across score bands, not read individually. Sales call recordings should be analyzed for objection patterns across deal stages. Social media should be monitored for brand perception shifts, not individual complaints.

The key is matching your extraction method to what each channel actually measures well.
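
As a rough illustration, the channel-to-method pairing above can be written down as an explicit lookup so that no channel is processed with a default method. This is a minimal sketch: the channel keys, the `ExtractionPlan` type, and the `plan_for` helper are hypothetical names chosen for this example, and the plan text simply restates the pairings described above.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class ExtractionPlan:
    mine_for: str                   # what to extract from the channel
    not_for: Optional[str] = None   # what to avoid extracting, if stated


# Hypothetical channel keys; the values restate the pairings in the text.
EXTRACTION_PLANS = {
    "support_ticket": ExtractionPlan(
        "recurring friction patterns", "individual feature requests"),
    "nps_comment": ExtractionPlan(
        "themes clustered across score bands", "comments read individually"),
    "sales_call": ExtractionPlan(
        "objection patterns across deal stages"),
    "social_media": ExtractionPlan(
        "brand perception shifts", "individual complaints"),
}


def plan_for(channel: str) -> ExtractionPlan:
    """Return the plan for a channel, failing loudly on unknown channels."""
    if channel not in EXTRACTION_PLANS:
        # A new channel should get a deliberate plan, not a silent default.
        raise KeyError(f"No extraction plan defined for channel: {channel}")
    return EXTRACTION_PLANS[channel]
```

Raising on an unknown channel is a design choice in this sketch: it forces someone to decide what a new channel measures before its feedback enters the pipeline.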

Layer 2: Problem Decomposition

Every piece of feedback -- regardless of channel -- should be decomposed into the underlying problem statement before entering your insight system. "I need CSV export" becomes "I need to share data with stakeholders who don't have product access." "Your onboarding is confusing" becomes "I couldn't accomplish my first task within my first session."

This decomposition step is the one most teams skip, and it is where most signal is lost. It requires either trained human analysts or AI-powered qualitative analysis sophisticated enough to look past surface statements.
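
One way to make the decomposition step hard to skip is to treat the problem statement as a required field before anything enters the insight system. The sketch below assumes a hypothetical `FeedbackItem` record and `admit` function; the decomposition itself is still done by an analyst or a model, and the code only enforces that it has happened.

```python
from dataclasses import dataclass
from typing import List, Optional, Tuple


@dataclass
class FeedbackItem:
    channel: str
    raw_text: str
    # Filled in by an analyst or model; None means "not yet decomposed".
    problem_statement: Optional[str] = None


def admit(items: List[FeedbackItem]) -> Tuple[List[FeedbackItem], List[FeedbackItem]]:
    """Split items into (ready, pending) based on whether they are decomposed."""
    ready = [i for i in items if i.problem_statement]
    pending = [i for i in items if not i.problem_statement]
    return ready, pending


# Illustrative items; the first two pairings come from the examples above.
items = [
    FeedbackItem(
        "support_ticket", "I need CSV export",
        "I need to share data with stakeholders who don't have product access"),
    FeedbackItem(
        "nps_comment", "Your onboarding is confusing",
        "I couldn't accomplish my first task within my first session"),
    FeedbackItem("social_media", "Please add dark mode"),  # not decomposed yet
]

ready, pending = admit(items)
```

Here `ready` holds the two decomposed items and `pending` holds the raw feature request, which goes back for decomposition rather than onto the roadmap.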

Layer 3: Frequency-Weighted Prioritization

Once problems are extracted and decomposed, weight them by frequency across channels, impact on retention and revenue, strategic alignment, and effort to address. The critical word is "across channels." A problem that surfaces in support tickets AND sales calls AND NPS comments AND exit interviews is far more likely to be real and important than one that dominates a single channel.
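
To make the weighting concrete, here is a minimal scoring sketch. The formula is an assumption made for illustration, not a prescribed method: it multiplies the number of distinct channels a problem appears in by impact and strategic alignment, then divides by effort. The `Problem` type, the 0-to-1 scales, and all numbers in the example are hypothetical.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class Problem:
    problem_id: str
    mentions_by_channel: Dict[str, int]  # channel name -> mention count
    impact: float      # assumed 0..1 estimate of retention/revenue impact
    alignment: float   # assumed 0..1 estimate of strategic alignment
    effort: float      # assumed relative effort, must be > 0


def channel_breadth(problem: Problem) -> int:
    """Number of distinct channels in which the problem actually appears."""
    return sum(1 for count in problem.mentions_by_channel.values() if count > 0)


def priority(problem: Problem) -> float:
    """Illustrative score: breadth across channels outweighs raw volume."""
    return channel_breadth(problem) * problem.impact * problem.alignment / problem.effort


def rank(problems: List[Problem]) -> List[Problem]:
    """Return problems ordered from highest to lowest priority."""
    return sorted(problems, key=priority, reverse=True)


# Hypothetical data: one broad problem versus one loud single-channel problem.
workflow = Problem(
    "workflow_friction",
    {"support_ticket": 340, "sales_call": 12, "nps_comment": 28, "exit_interview": 5},
    impact=0.8, alignment=0.9, effort=4.0)
minor_bug = Problem(
    "minor_bug", {"support_ticket": 900},
    impact=0.3, alignment=0.5, effort=1.0)

ranked = rank([minor_bug, workflow])
```

With these made-up inputs, the four-channel workflow problem ranks above the bug that produced far more tickets in a single channel, which is the behavior the "across channels" emphasis calls for. Teams would want to tune or replace the formula to match their own definitions of impact and effort.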

Layer 4: Validation Through Deliberate Research

Feedback triage identifies hypotheses. It does not validate them. The final layer is deliberate, targeted research to validate the most promising signals before they become roadmap items. This is where structured interviews, usability testing, and prototype validation earn their keep -- not as the primary discovery mechanism, but as the confirmation mechanism for signals already identified through systematic feedback analysis.

The Role of AI in Feedback Triage

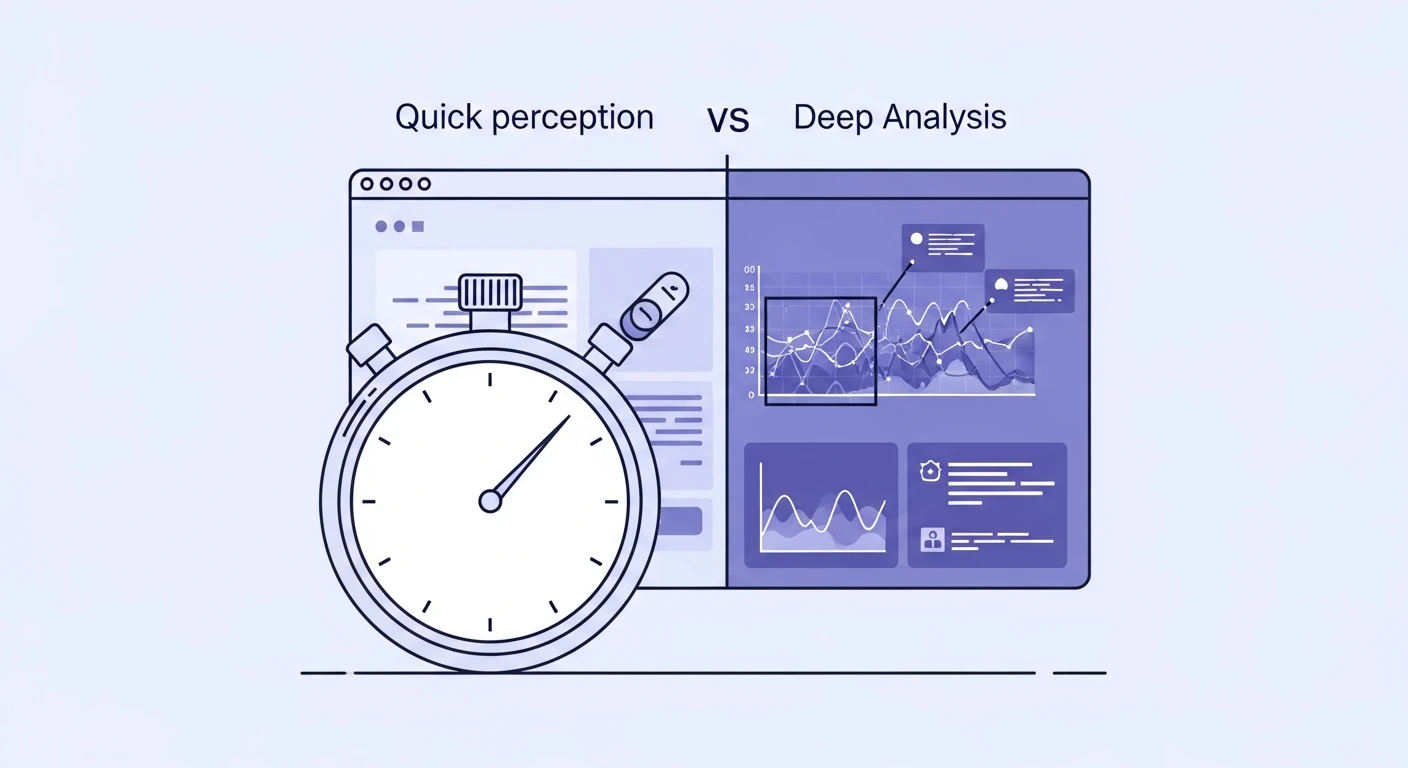

AI-powered analysis is transformative here precisely because volume is what makes manual triage impossible. No human team can read 10,000 support tickets per month and extract thematic patterns with consistency. No analyst can listen to 200 sales calls per quarter and identify objection frequency distributions.

But AI analysis works only when it is structured correctly. Dumping feedback into a sentiment classifier and reading the output is not triage -- it is automation of the wrong process. The AI needs to be configured for problem extraction, cross-channel pattern matching, and frequency analysis, not summarization.

The teams getting this right treat AI as the pattern-detection layer and humans as the interpretation and decision layer. The AI identifies that 340 support tickets and 28 NPS comments this month reference the same underlying workflow friction. The human decides what to do about it.
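
The counting half of that division of labor is simple once each item has been tagged with an underlying problem. The sketch below assumes the tagging has already been done upstream (by a model or an analyst) and that feedback arrives as `(problem_id, channel)` pairs; the function names and the `min_channels` threshold are hypothetical.

```python
from collections import Counter, defaultdict
from typing import Dict, Iterable, Tuple


def counts_by_problem(tagged: Iterable[Tuple[str, str]]) -> Dict[str, Counter]:
    """Count mentions per channel for each problem_id."""
    table: Dict[str, Counter] = defaultdict(Counter)
    for problem_id, channel in tagged:
        table[problem_id][channel] += 1
    return table


def cross_channel(table: Dict[str, Counter], min_channels: int = 2) -> Dict[str, Dict[str, int]]:
    """Keep only problems that surface in at least min_channels channels."""
    return {
        problem_id: dict(per_channel)
        for problem_id, per_channel in table.items()
        if len(per_channel) >= min_channels
    }


# Illustrative input mirroring the example counts in the text above.
tagged = (
    [("workflow_friction", "support_ticket")] * 340
    + [("workflow_friction", "nps_comment")] * 28
    + [("dark_mode", "social_media")] * 3
)

surfaced = cross_channel(counts_by_problem(tagged))
```

In this example only `workflow_friction` survives the filter, with its per-channel counts attached. What to do about it remains a human decision.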

From Noise to Roadmap

The goal is not to listen to more feedback. It is to listen better. A team that processes 10% of its feedback through a rigorous triage framework will make better product decisions than a team that vaguely absorbs 100% of its feedback through miscellaneous Slack channels and quarterly NPS reviews.

Start by auditing your current feedback channels. What does each one actually measure? Who is represented and who is missing? What extraction method is appropriate for each? Then build the decomposition layer that transforms surface feedback into underlying problems. Then count.

The insights you need are already in your data. They are buried under noise. The teams that win are not the ones with the most feedback -- they are the ones with the best filters.