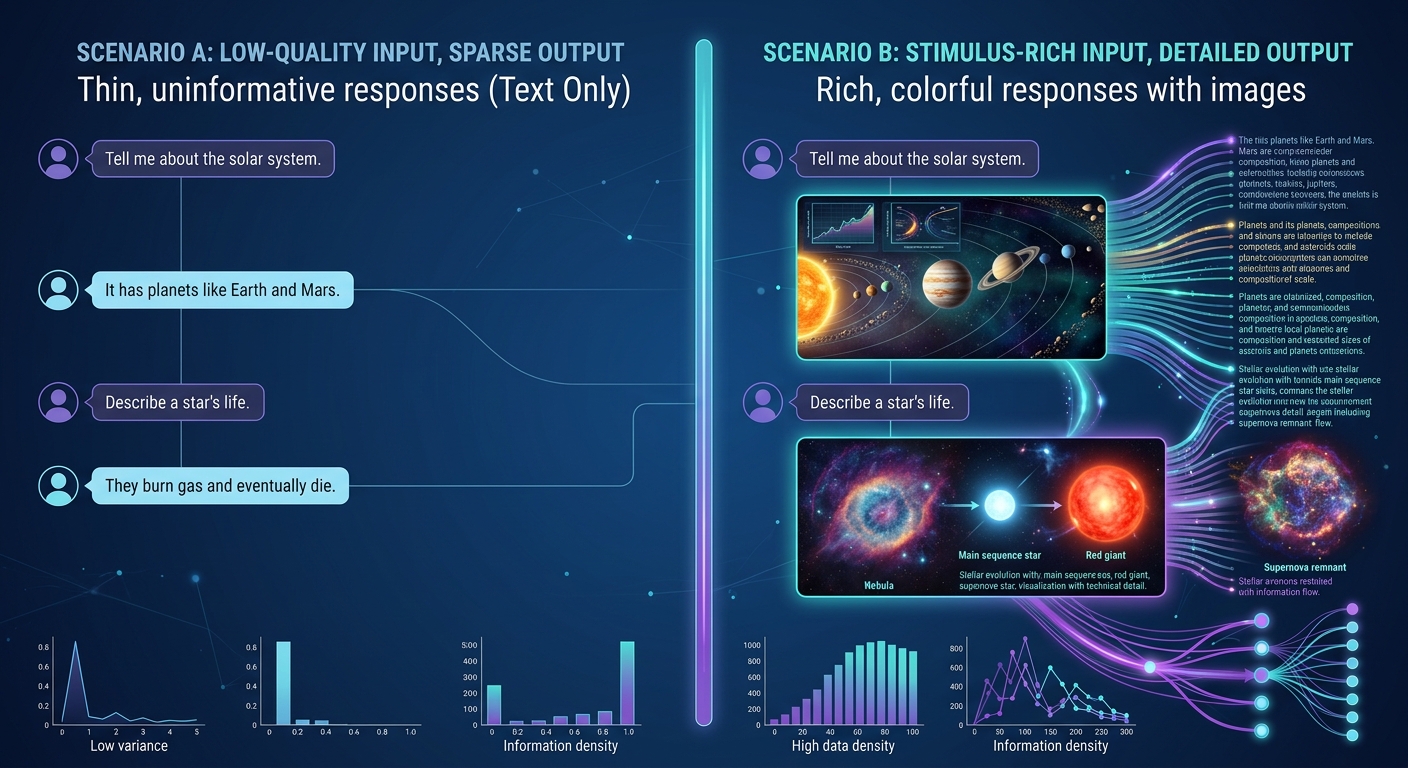

Program evaluators and nonprofit leaders face the same impossible math. Your funder wants you to interview 80 stakeholders across four regions. You have two staff members who can conduct interviews. Each interview takes 45 minutes plus 30 minutes of scheduling, prep, and notes. That is 100 hours of staff time (80 × 75 minutes) before a single transcript is coded.

So you compromise. You interview 15 people instead of 80. You run three focus groups instead of individual conversations. You send a survey with a couple of open-ended questions and hope people write more than two sentences. The funder gets a report. The report says what 15 people think. Nobody mentions the 65 voices that were never heard.

This is not a quality problem. It is a structural constraint that has shaped — and limited — stakeholder engagement in the nonprofit and evaluation sector for decades. AI-moderated interviews remove that constraint entirely.

The Scale Problem Is Not About Technology

Before AI interviews, the stakeholder engagement bottleneck was always human capacity. Not willingness, not methodology, not participant access — just the raw hours required to have meaningful conversations with enough people to produce credible findings.

Consider the real numbers behind a typical program evaluation:

Recruitment and scheduling: For every completed interview, expect 3-5 scheduling attempts. For 80 interviews, that is 240-400 emails or phone calls. A coordinator spending 10 minutes per attempt is looking at 40-67 hours just on logistics.

Conducting interviews: A skilled interviewer can do 3-4 quality interviews per day before fatigue degrades their performance. Eighty interviews at 3 per day is 27 interview days — over five work weeks of nothing but interviews.

Note-taking and transcription: Even with recording, someone needs to review and clean transcripts, note contextual observations, and flag key moments. Budget 30 minutes per interview minimum. That is another 40 hours.

Analysis: Coding 80 transcripts manually takes 160-320 hours depending on depth. Most evaluation teams do not have this capacity, which is why the hidden cost of unanalyzed qualitative data remains one of the sector's biggest blind spots.

Total: 480-825 hours for a single evaluation cycle. At roughly 160 working hours per month, that is 3-5 months of full-time work for one person, or about six to ten weeks for a two-person team with nothing else on its plate. No wonder most evaluations settle for 15-20 interviews and call it representative.
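The itemized steps above can be added up directly. The sketch below uses only the figures quoted in this section, plus one assumption of its own: that each interview day counts as a full 8-hour workday. Under that assumption the listed steps come to roughly 456 to 643 hours, so the stated total of 480-825 hours presumably also covers work that is not itemized here.

```python
import math

# Figures quoted in the text above.
INTERVIEWS = 80
ATTEMPTS_PER_INTERVIEW = (3, 5)    # scheduling attempts per completed interview
MINUTES_PER_ATTEMPT = 10
INTERVIEWS_PER_DAY = 3
NOTES_MINUTES_PER_INTERVIEW = 30
CODING_HOURS = (160, 320)          # manual coding of 80 transcripts

# Assumption (not from the text): an interview day is a full 8-hour workday.
HOURS_PER_INTERVIEW_DAY = 8


def estimate_hours():
    """Return (low_hours, high_hours, interview_days) for the itemized steps."""
    logistics_low, logistics_high = (
        INTERVIEWS * attempts * MINUTES_PER_ATTEMPT / 60
        for attempts in ATTEMPTS_PER_INTERVIEW
    )
    interview_days = math.ceil(INTERVIEWS / INTERVIEWS_PER_DAY)
    interviewing = interview_days * HOURS_PER_INTERVIEW_DAY
    notes = INTERVIEWS * NOTES_MINUTES_PER_INTERVIEW / 60

    low = logistics_low + interviewing + notes + CODING_HOURS[0]
    high = logistics_high + interviewing + notes + CODING_HOURS[1]
    return low, high, interview_days


low, high, days = estimate_hours()
print(f"{days} interview days, {low:.0f}-{high:.0f} hours for the itemized steps")
```

Changing `HOURS_PER_INTERVIEW_DAY` or the per-interview minutes is the quickest way to adapt the estimate to your own team.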

How AI-Moderated Interviews Change the Math

AI-moderated interviews do not replace human judgment in evaluation design. They replace the mechanical bottleneck that prevents evaluators from talking to enough people.

Here is what changes:

Interviews Run 24/7 Without Staff Time

An AI-moderated interview is available whenever the participant is ready. A community health worker finishing a 12-hour shift at 10 PM can complete their interview that night. A parent juggling childcare can respond during nap time. A program participant in a different time zone does not need to coordinate across schedules.

This is not a minor convenience improvement. For the populations that nonprofits serve — shift workers, caregivers, people in crisis, rural communities — scheduling a 45-minute phone call during business hours is a genuine barrier to participation. Asynchronous AI interviews remove that barrier completely.

The evaluation team's role shifts from conducting interviews to designing them. You write the interview guide, configure the follow-up logic, set the parameters for probing — and then the system handles execution across all 80 participants simultaneously, not sequentially.

Adaptive Follow-ups Maintain Depth

The most common objection to AI interviews is that they cannot match the depth of a skilled human interviewer. This was true three years ago. It is not true now.

Modern AI-moderated interviews adapt in real time based on participant responses. When someone mentions an unexpected challenge, the system probes deeper. When an answer is vague, it asks for specifics. When a participant raises a topic that connects to an earlier response, the system makes the connection and follows up.
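To make those three behaviors concrete, here is a deliberately simple, rule-based sketch. It is not how any particular platform works; production systems presumably rely on language models rather than keyword rules. The keyword list, the word-count threshold, and the names `InterviewState` and `choose_follow_up` are all hypothetical and exist only for illustration.

```python
from dataclasses import dataclass, field
from typing import Dict, Optional, Set

# Hypothetical values, chosen only for illustration.
CHALLENGE_WORDS = {"problem", "challenge", "difficult", "struggle", "barrier"}
MIN_WORDS_FOR_SPECIFIC_ANSWER = 12


@dataclass
class InterviewState:
    """Maps each topic to the question under which it first came up."""
    topics_seen: Dict[str, str] = field(default_factory=dict)


def tokenize(text: str) -> Set[str]:
    """Lowercase the words and strip surrounding punctuation."""
    return {word.strip(".,!?;:").lower() for word in text.split()}


def choose_follow_up(question_id: str, answer: str, topics: Set[str],
                     state: InterviewState) -> Optional[str]:
    """Pick one follow-up prompt, or None if the answer needs no probing."""
    words = tokenize(answer)
    mentioned = sorted(words & topics)
    # Topics that were first raised under a different, earlier question.
    linked = [t for t in mentioned
              if t in state.topics_seen and state.topics_seen[t] != question_id]
    # Remember newly raised topics for later questions.
    for topic in mentioned:
        state.topics_seen.setdefault(topic, question_id)

    if words & CHALLENGE_WORDS:          # a challenge was mentioned: probe deeper
        return "You mentioned a difficulty. Can you walk me through what happened?"
    if len(answer.split()) < MIN_WORDS_FOR_SPECIFIC_ANSWER:   # vague answer
        return "Could you give a specific example?"
    if linked:                           # connects to an earlier response
        return f"You brought up {linked[0]} earlier as well. How does it connect to this?"
    return None
```

Even in this toy form, the design point is visible: the evaluator controls the rules and the topic list, and the same logic then runs for every participant.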

This adaptive capability means that AI-moderated interviews with 80 participants produce richer data than 15 human-moderated interviews using the same guide. That is not because the AI is a better interviewer than a human, but because 80 conversations surface patterns that 15 cannot.
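A rough way to see why sample size matters: suppose a view is held by 5% of stakeholders (an illustrative figure, not one from this article) and participants are sampled independently at random. The chance of hearing that view at least once is 1 - (1 - p)^n. The function name below is hypothetical, and convenience samples will not behave this neatly.

```python
def chance_of_hearing(prevalence: float, interviews: int) -> float:
    """Probability that at least one of `interviews` randomly sampled
    participants holds a view shared by `prevalence` of the population."""
    return 1.0 - (1.0 - prevalence) ** interviews


for n in (15, 80):
    # Prints "15 0.54" and then "80 0.98".
    print(n, round(chance_of_hearing(0.05, n), 2))
```

Under these assumptions, 15 interviews are close to a coin flip for catching a 5% perspective even once, while 80 interviews make it very likely.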

The depth-versus-scale tradeoff that has defined qualitative evaluation for decades is no longer a tradeoff. You get both.

Consistency Across Every Conversation

Human interviewers drift. The twentieth interview does not follow the same probing pattern as the fifth. Interviewer fatigue, rapport differences, and unconscious bias mean that two participants answering the same question may receive very different follow-up experiences.

AI-moderated interviews apply the same follow-up logic to every participant. The probing depth is consistent. The question sequencing is consistent. The analytical framework is consistent. When you compare responses across 80 participants, you are comparing apples to apples because every participant experienced the same conversational structure.

This consistency is not just methodologically important — it is essential for the kind of stakeholder analysis that produces strategic intelligence rather than anecdotal impressions.

Participants Control Their Own Experience

In traditional interviews, the participant adapts to the interviewer's schedule, pace, and communication style. AI-moderated interviews flip this dynamic.

Participants choose when to engage. They can pause and return later. They can take time to think before responding — something that live interviews rarely allow without awkward silence. They can participate in their preferred language. And for sensitive topics, the absence of a human interviewer can actually increase honesty.

For nonprofits working with vulnerable populations (survivors of trauma, people experiencing housing instability, youth in foster care), this participant-controlled dynamic is not a nice-to-have. It is an ethical improvement over traditional methods that force participants to disclose sensitive information in real time to a stranger. Research shows that anonymous AI interviews unlock more candid community feedback than face-to-face alternatives.

What This Looks Like in Practice

Impact Measurement at Scale

A workforce development nonprofit serves 1,200 participants annually across three cities. Their funder requires annual outcome evaluation with qualitative evidence of program impact.

Before AI interviews: The evaluation team interviews 20 participants (selected for convenience, not representativeness), runs two focus groups per city, and supplements with a post-program survey. The qualitative findings section of their report draws on about 30 voices. The funder accepts it because everyone knows qualitative evaluation at scale is expensive.

With AI interviews: The same team deploys an AI-moderated interview to all 1,200 participants. Four hundred complete it — a 33% response rate that would be exceptional for a survey and unthinkable for traditional interviews. The qualitative findings draw on 400 voices across all three cities, all demographic groups, and all program tracks. Cross-site comparisons become possible for the first time. The funder does not just accept the report — they use it to make allocation decisions because the evidence base is credible.

The evaluation team spent the same amount of time. The difference is that their time went to designing the evaluation and interpreting results instead of conducting and transcribing interviews.

Multi-Stakeholder Engagement

A foundation funding education initiatives needs input from teachers, administrators, parents, and students across 15 school districts. Traditional approach: hire an external evaluation firm for $150,000-$300,000, wait 6 months for the report, receive findings based on 40-60 interviews.

With AI-moderated interviews, the foundation's internal team designs four stakeholder-specific interview guides. Each guide adapts its language, framing, and follow-up logic to the audience — teacher interviews probe pedagogical practice, parent interviews focus on student experience at home, student interviews use age-appropriate language and shorter format.

The system runs all four interview streams simultaneously across all 15 districts. Within three weeks, the foundation has qualitative data from 500+ stakeholders. That is more voices than the external firm would have reached in six months, and the external firm would have cost five times as much.

Longitudinal Program Tracking

One of the most powerful applications is ongoing stakeholder engagement rather than point-in-time evaluation. A community development organization can deploy AI interviews quarterly, tracking how participant experiences, needs, and outcomes evolve over time.

This kind of longitudinal qualitative data has been nearly impossible to collect at scale. The operational cost of conducting 200 interviews every quarter would consume most organizations' entire evaluation budget. But when the marginal cost of an additional AI interview is near zero, longitudinal engagement becomes feasible — and the resulting data tells a story that no single evaluation snapshot can match.

From Data Collection to Actionable Analysis

Collecting 400 stakeholder interviews is only valuable if you can analyze them. This is where the combination of AI-moderated interviews and AI-powered analysis creates compounding value.

When stakeholder interviews are conducted through an AI platform like Qualz, the transcripts flow directly into analysis. There is no transcription step, no data cleaning, no manual import into a separate analysis tool. The same platform that collected the data can apply multi-lens analysis — examining the same stakeholder responses through frameworks like Jobs-to-Be-Done, Sentiment and Emotion Spectrum, Stakeholder Equity Audit, and Narrative Arc.

Qualz offers 14 specialized analytical lenses that can be applied individually or in combination. For stakeholder engagement specifically, the Stakeholder Equity Audit lens reveals whose voices are centered and whose are marginalized — critical for organizations committed to equitable evaluation practice. The Framing and Discourse lens surfaces how different stakeholder groups talk about the same issues using different language and mental models.

The result is not just a collection of interview transcripts. It is a structured, multi-dimensional analysis of stakeholder perspectives that would take a team of analysts months to produce manually — delivered in hours.

Addressing the Skeptics

"AI cannot build rapport like a human interviewer."

Correct. AI interviews are not trying to replicate the rapport-based interview. They serve a different function: enabling consistent, deep conversations at a scale that human interviewers cannot reach. The question is not whether an AI interview is as good as the best human interviewer on their best day. The question is whether 400 AI interviews produce better evaluation evidence than 20 human interviews. The answer is unambiguously yes.

"Our stakeholders will not engage with an AI."

Completion rates consistently exceed expectations. Most organizations see 30-50% completion rates for AI interviews, compared to 10-20% response rates for evaluation surveys. Participants engage because the format is convenient, the conversation adapts to their responses, and they feel heard — the system acknowledges what they have said and asks relevant follow-ups rather than marching through a fixed question list.
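For planning purposes, the quoted rate ranges translate into expected completions with simple arithmetic. The sketch below applies them to the 1,200-participant program described earlier and to a target of 80 completed interviews. The function names are hypothetical, and the rates are the ranges quoted above, not guarantees for any particular population.

```python
import math
from typing import Tuple

AI_INTERVIEW_RATES = (0.30, 0.50)   # completion-rate range quoted above
SURVEY_RATES = (0.10, 0.20)         # response-rate range quoted above


def expected_completions(invited: int, rates: Tuple[float, float]) -> Tuple[int, int]:
    """Low and high number of completions for a given number of invitations."""
    low, high = rates
    return round(invited * low), round(invited * high)


def invitations_needed(target: int, rates: Tuple[float, float]) -> Tuple[int, int]:
    """Fewest and most invitations needed to reach `target` completions."""
    low, high = rates
    return math.ceil(target / high), math.ceil(target / low)


print(expected_completions(1200, AI_INTERVIEW_RATES))  # (360, 600)
print(expected_completions(1200, SURVEY_RATES))        # (120, 240)
print(invitations_needed(80, AI_INTERVIEW_RATES))      # (160, 267)
```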

"We will lose the nuance."

You gain nuance. Fifteen interviews give you themes. Four hundred interviews give you themes, sub-themes, contradictions, minority perspectives, regional variations, demographic patterns, and outlier cases. Nuance comes from breadth as much as depth — and AI interviews give you both.

Getting Started

If your organization needs to engage stakeholders at a scale that your current team cannot support — whether for program evaluation, impact measurement, strategic planning, or community engagement — AI-moderated interviews are not a future possibility. They are available now.

The organizations that adopt this approach first will set the standard for what credible stakeholder engagement looks like. The ones that wait will eventually be asked by their funders why they are still making decisions based on 20 interviews when their peers are drawing on 400.

Book an information session to see how AI-moderated interviews work with your specific stakeholder engagement needs. Bring your most challenging evaluation design problem — the one where you know you need more voices but cannot figure out how to hear them all. That is exactly the problem this solves.