The Reality of Manual Qualitative Coding

If you have ever spent an entire afternoon hunched over a single interview transcript, highlighter in hand (or dragging selections in NVivo), tagging every utterance with a code you invented three interviews ago and can barely remember the definition of — you know the pain.

Manual qualitative coding is one of the most intellectually demanding, tedious, and time-consuming tasks in research. And yet, for decades, it has been treated as a rite of passage. You do the hours. You earn the insights. No shortcuts.

Here is the math most of us know but rarely say out loud:

- A single 60-minute interview produces roughly 8,000-10,000 words of transcript

- First-pass open coding takes 4-6 hours per transcript for an experienced coder

- A second pass for axial or focused coding adds another 2-3 hours

- A typical qualitative study involves 15-30 interviews

- Total coding time: 90-270 hours (6-9 hours per transcript across 15-30 interviews), before you even start writing up findings
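If you want to plug in your own numbers, the estimate is simply interviews multiplied by hours per transcript. Here is a minimal Python sketch using only the ranges listed above; the function name is illustrative, not part of any tool.

```python
def coding_hours(interviews, first_pass_hours, second_pass_hours):
    """Total manual coding hours for a study (illustrative helper)."""
    return interviews * (first_pass_hours + second_pass_hours)


# Low end: 15 interviews, 4 h first pass, 2 h second pass
low = coding_hours(interviews=15, first_pass_hours=4, second_pass_hours=2)
# High end: 30 interviews, 6 h first pass, 3 h second pass
high = coding_hours(interviews=30, first_pass_hours=6, second_pass_hours=3)

print(f"Total coding time: {low}-{high} hours")  # Total coding time: 90-270 hours
```

Swap in your own study size and per-transcript times to see where your projects land.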

That is not a methodology problem. That is a capacity problem. And it explains why qualitative research has historically been small-n, slow to deliver, and expensive to commission. It also explains why research agencies are losing clients to faster, AI-augmented competitors.

The question is no longer whether AI can help with qualitative coding. It already does. The question is how to integrate it without sacrificing the rigor that makes qualitative research valuable in the first place.

What Manual Coding Actually Involves (And Where It Breaks Down)

Let us be precise about what we mean by "coding" in qualitative research. For readers less familiar with the full process, our guide to qualitative data analysis (QDA) covers the foundations.

Qualitative coding is the process of assigning labels (codes) to segments of text (interview transcripts, open-ended survey responses, field notes, focus group transcripts) to identify patterns, themes, and meaning. It typically follows a progression:

- Open coding — reading through data line by line, generating initial codes

- Axial coding — grouping codes into categories, identifying relationships

- Selective/theoretical coding — integrating categories into themes or theory

The manual approach breaks down in predictable ways:

Coder fatigue. By transcript 12, your attention drifts. Codes that were crisp in interview 1 get applied inconsistently by interview 20. Studies have shown inter-coder reliability drops measurably after sustained coding sessions, with agreement rates falling 10-15% after four continuous hours.

Code sprawl. Without discipline, codebooks balloon. A project that starts with 30 codes ends with 180. Half are redundant. Merging them retroactively means re-reading everything.
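One cheap way to spot sprawl before it forces a full re-read is to flag code labels whose names are nearly identical. The sketch below is an assumption on our part, not a description of any particular tool: it uses Python's standard `difflib` to compare label names, and the `0.8` threshold and the sample codebook are made up for illustration. It only catches lexical near-duplicates; two codes that mean the same thing under different names still need a human eye.

```python
from difflib import SequenceMatcher
from itertools import combinations


def similar_code_pairs(codes, threshold=0.8):
    """Return (code_a, code_b, similarity) for labels with near-identical names."""
    pairs = []
    for a, b in combinations(codes, 2):
        ratio = SequenceMatcher(None, a.lower(), b.lower()).ratio()
        if ratio >= threshold:
            pairs.append((a, b, round(ratio, 2)))
    return pairs


# Hypothetical codebook excerpt
codebook = [
    "login frustration",
    "login frustrations",
    "pricing confusion",
    "onboarding delay",
]

duplicates = similar_code_pairs(codebook)
for a, b, ratio in duplicates:
    print(f"Possible duplicate: {a!r} / {b!r} (similarity {ratio})")
```

Treat the output as a list of merge candidates to review, not as merges to apply automatically.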

Confirmation bias. Once you "see" a theme early, you unconsciously look for it everywhere. Disconfirming evidence gets under-coded. This is not a character flaw — it is how human cognition works under cognitive load.

Bottleneck economics. In consulting and agency work, coding is the bottleneck. The partner sells a four-week turnaround. Two of those weeks are coding. If a second coder is needed for reliability, make it three. Clients increasingly will not wait that long.

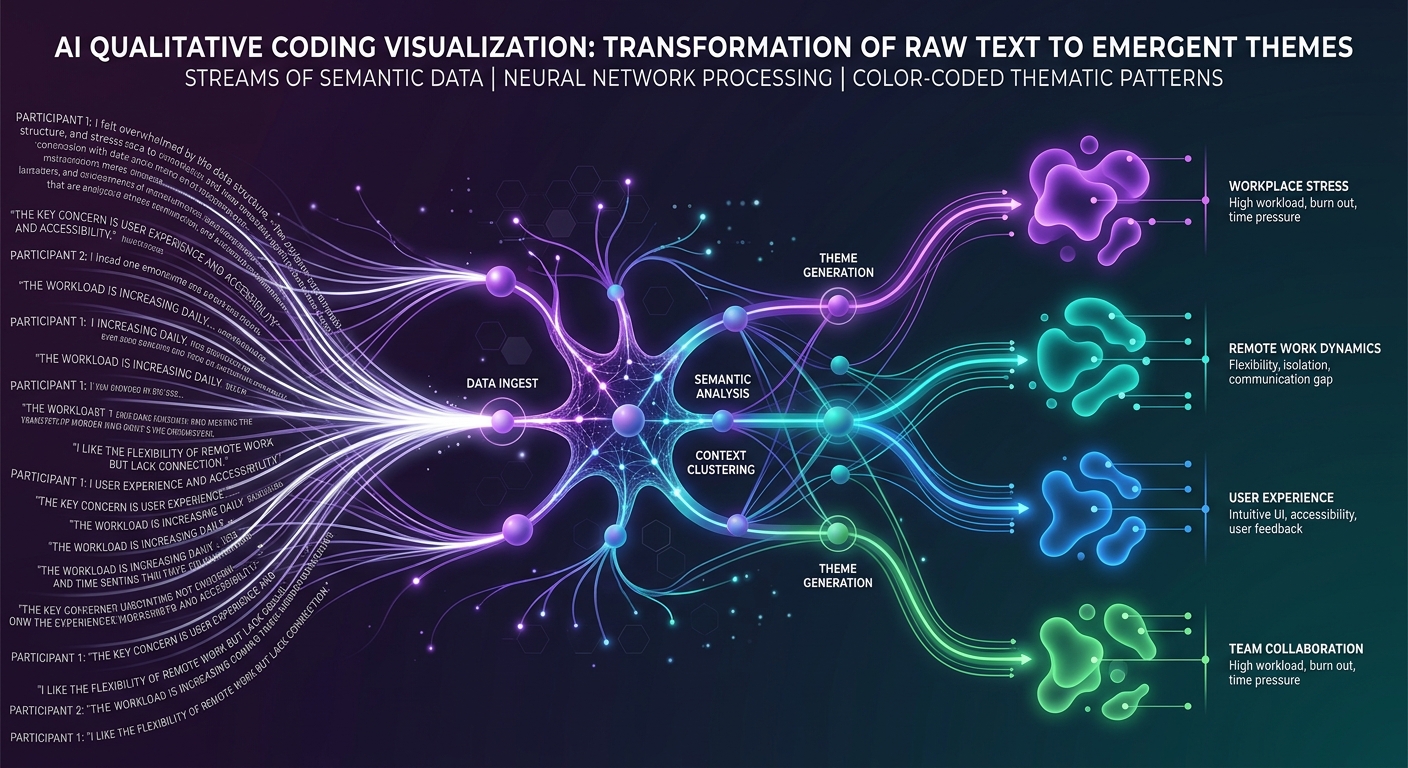

How AI-Powered Coding Actually Works

AI qualitative coding is not a magic "summarize" button. The tools that actually work — and there is a growing gap between serious platforms and glorified ChatGPT wrappers — operate on several levels.

Automated Theme Generation

The AI reads your entire dataset (not just one transcript at a time) and identifies recurring patterns across all sources simultaneously. This is fundamentally different from human coding, which is sequential and cumulative.

Where a human coder processes transcripts one by one and builds themes iteratively, AI processes the entire corpus in parallel. It can hold 30 transcripts in context at once, identifying a theme that appears subtly in interview 3, explicitly in interview 17, and as a contradiction in interview 22.

For a deeper look at how modern thematic analysis works, see our guide on thematic analysis for modern research.

Multi-Lens Analysis

This is where AI coding gets genuinely powerful — and goes beyond what most manual approaches attempt.

Rather than coding data through a single analytical framework, AI can apply multiple analytical lenses to the same dataset simultaneously:

- Thematic lens — what are the recurring topics and patterns?

- Sentiment lens — what is the emotional valence of each segment?

- Process lens — what sequences and workflows do participants describe?

- Stakeholder lens — how do different participant groups diverge?

- Contradiction lens — where do participants disagree with each other or themselves?

In manual coding, applying even two of these frameworks means coding the data twice. Most studies stick to one because of time constraints. AI removes that constraint entirely. You can explore lens-based analysis as a practical way to get richer, more multidimensional findings from the same data.

Codebook-Aware Coding

The best AI coding tools do not just generate codes from scratch. They can work with your existing codebook — applying your pre-defined codes consistently across the dataset while flagging segments that do not fit any existing code.

This matters enormously for:

- Longitudinal studies where codebook consistency across waves is critical

- Multi-site evaluations where different teams need to apply the same framework

- Program evaluation where funders require specific indicators to be tracked

For researchers who want to maintain methodological control while leveraging AI speed, building codebooks with AI covers how to get the best of both worlds.
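To make the idea concrete, here is a minimal sketch of the control flow behind codebook-aware coding: apply existing codes where they fit, and set aside anything that fits none of them. Everything in it is hypothetical. `apply_codebook`, `CodingResult`, and the sample codebook are illustrative names, and `toy_classifier` is a deliberately crude stand-in for whatever model a real tool would call.

```python
from dataclasses import dataclass, field


@dataclass
class CodingResult:
    coded: dict = field(default_factory=dict)      # code -> list of segments
    unmatched: list = field(default_factory=list)  # segments that fit no code


def apply_codebook(segments, codebook, classify):
    """Apply predefined codes; flag segments the classifier cannot place."""
    result = CodingResult(coded={code: [] for code in codebook})
    for segment in segments:
        code = classify(segment, codebook)
        if code in codebook:
            result.coded[code].append(segment)
        else:
            result.unmatched.append(segment)  # candidate for a new code
    return result


def toy_classifier(segment, codebook):
    """Stand-in for a model call: first code with a cue word in the text."""
    text = segment.lower()
    for code, cues in codebook.items():
        if any(cue in text for cue in cues):
            return code
    return None


# Hypothetical codebook: code -> cue words used only by the toy classifier
codebook = {
    "access barriers": ["wait", "transport"],
    "staff rapport": ["nurse", "listened"],
}
segments = [
    "I had to wait three weeks for an appointment.",
    "The nurse really listened to me.",
    "My neighbour told me about the programme.",
]

result = apply_codebook(segments, codebook, toy_classifier)
print(result.coded)
print("Needs review:", result.unmatched)
```

The part worth keeping is the shape, not the classifier: the codebook stays fixed, and anything outside it lands in a review queue instead of being forced into the nearest code.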

Contextual Understanding vs. Keyword Matching

Early "automated coding" tools were essentially keyword matchers — they would flag any mention of "frustration" and code it as "negative experience." Modern AI coding understands context. It can distinguish between:

- "I was frustrated with the login process" (UX pain point)

- "The team was frustrated because the timeline kept shifting" (organizational challenge)

- "It is frustrating to hear people say qualitative research is not rigorous" (meta-commentary)

Same word. Three different codes. This contextual understanding is what separates current AI coding from the crude text-mining approaches of the 2010s.
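You can see the limitation of the older approach in a few lines. This sketch is a deliberately naive keyword matcher (the rule table and function name are ours, for illustration only) run against the three example sentences above.

```python
import re

# One keyword rule, as an early "automated coding" tool might define it
KEYWORD_RULES = {r"\bfrustrat\w*": "negative experience"}


def keyword_code(segment):
    """Assign codes purely on keyword hits, ignoring context."""
    return [
        code
        for pattern, code in KEYWORD_RULES.items()
        if re.search(pattern, segment, flags=re.IGNORECASE)
    ]


segments = [
    "I was frustrated with the login process",
    "The team was frustrated because the timeline kept shifting",
    "It is frustrating to hear people say qualitative research is not rigorous",
]

for segment in segments:
    print(keyword_code(segment), "<-", segment)
```

All three sentences come back as "negative experience". The matcher has no way to tell a UX pain point from an organizational challenge or meta-commentary, which is exactly the distinction a human coder would draw.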

The Numbers: Time Savings and Accuracy

Let us talk data. Across multiple studies and our own benchmarking at Qualz:

Time reduction. AI coding consistently reduces first-pass coding time by 85-95%. A 10,000-word transcript that takes 4-6 hours manually gets coded in 8-15 minutes with AI. For a 20-interview study, that compresses up to roughly 120 hours of first-pass coding (20 transcripts at 6 hours each) into about 4-5 hours of AI-assisted work, review time included.
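It is worth checking these figures against each other. The short calculation below uses only the numbers quoted in the paragraph above (the helper name is illustrative) and shows that the worked examples land at or slightly above the top of the 85-95% range.

```python
def reduction_pct(before_minutes, after_minutes):
    """Percentage of time saved going from `before` to `after`."""
    return 100 * (1 - after_minutes / before_minutes)


# Per transcript: 4-6 hours manually vs. 8-15 minutes with AI
per_transcript_min = reduction_pct(4 * 60, 15)  # shortest manual, slowest AI
per_transcript_max = reduction_pct(6 * 60, 8)   # longest manual, fastest AI

# Whole study: about 120 hours manually vs. 4-5 hours AI-assisted with review
study_min = reduction_pct(120 * 60, 5 * 60)
study_max = reduction_pct(120 * 60, 4 * 60)

print(f"Per transcript: {per_transcript_min:.1f}%-{per_transcript_max:.1f}%")
print(f"Whole study:    {study_min:.1f}%-{study_max:.1f}%")
# Per transcript: 93.8%-97.8%
# Whole study:    95.8%-96.7%
```

Your own savings will depend on how much review time you budget, so rerun the calculation with the hours you actually log.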

Consistency. AI does not get tired at transcript 15. It applies codes with the same logic at transcript 30 as at transcript 1. Internal consistency measurements show AI coding maintaining 92-96% self-consistency across a dataset, compared to 78-85% for solo human coders and 82-90% for trained coder pairs.

Coverage. This is the finding that surprises most researchers. AI coding typically identifies 15-25% more relevant segments than manual coding on the same dataset. Not because the AI is "better" — but because human coders, especially under time pressure, skip segments. They read past a paragraph that seems tangential, when it actually contains a subtle but important data point.

Agreement with human coders. When AI-generated codes are compared against expert human coding, agreement rates typically fall in the 80-88% range — which is comparable to, and often exceeds, inter-coder reliability between two human coders on the same dataset.
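If you want to run this comparison on your own data, two common measures are simple percent agreement and Cohen's kappa, which corrects for agreement expected by chance. The article's figures do not specify which statistic was used, so treat the choice here as our assumption. The labels below are invented purely to show the calculation.

```python
from collections import Counter


def percent_agreement(a, b):
    """Share of segments where both coders assigned the same code."""
    if len(a) != len(b) or not a:
        raise ValueError("Both codings must cover the same non-empty set of segments")
    return sum(x == y for x, y in zip(a, b)) / len(a)


def cohens_kappa(a, b):
    """Cohen's kappa: observed agreement corrected for chance agreement."""
    n = len(a)
    p_observed = percent_agreement(a, b)
    freq_a, freq_b = Counter(a), Counter(b)
    p_expected = sum(freq_a[c] * freq_b[c] for c in set(freq_a) | set(freq_b)) / (n * n)
    if p_expected == 1:  # both coders used one identical code throughout
        return 1.0
    return (p_observed - p_expected) / (1 - p_expected)


# Invented example: one code per segment, ten segments
human = ["barrier", "barrier", "rapport", "rapport", "cost",
         "cost", "barrier", "rapport", "cost", "barrier"]
ai = ["barrier", "barrier", "rapport", "cost", "cost",
      "cost", "barrier", "rapport", "rapport", "barrier"]

print(f"Percent agreement: {percent_agreement(human, ai):.0%}")  # 80%
print(f"Cohen's kappa:     {cohens_kappa(human, ai):.2f}")       # 0.70
```

Notice that 80% raw agreement becomes a kappa of about 0.70 once chance is accounted for, so be explicit about which figure you are reporting when you compare AI and human coding.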

These are not theoretical benchmarks. These are the numbers researchers see when they run AI coding alongside manual coding on the same transcripts.

When Human Coding Still Matters

Let me be direct: AI coding does not replace the researcher. It replaces the most mechanical, time-consuming part of the researcher's job.

Here is where human judgment remains essential:

Interpretive depth. AI can identify that participants frequently discuss "feeling unheard." A human researcher understands why that matters in the specific context of, say, a healthcare system where patients from marginalized communities have historically been dismissed. The interpretation layer — connecting codes to theory, context, and significance — remains fundamentally human.

Highly specialized domains. If your research involves coded language, cultural idioms, or domain-specific jargon that the AI has limited training data on, human coding may catch nuances that AI misses. Think: gang intervention programs, indigenous healing practices, highly technical engineering workflows.

Methodological requirements. Some grounded theory purists require that coding emerge entirely from researcher engagement with the data. If your methodology demands that the researcher's evolving understanding shapes the coding process — not just the outcome — then AI coding changes the epistemological basis of the work. That is a legitimate concern worth taking seriously.

Regulatory or IRB constraints. Some institutional review boards have not yet caught up with AI-assisted analysis. If your IRB approval specifies manual coding procedures, you need to amend before switching.

Novel phenomena. When studying something genuinely new — a cultural shift, an emerging technology experience, a crisis response — AI models trained on existing data may not have adequate frameworks. Human inductive coding shines when the territory is truly unmapped.

A Practical Workflow for Transitioning

If you are convinced that AI coding is worth exploring but nervous about abandoning your established process, here is a pragmatic transition workflow:

Phase 1: Parallel Run (1-2 Projects)

- Code 3-5 transcripts manually using your normal process

- Run the same transcripts through AI coding

- Compare: Where does AI agree with you? Where does it diverge? Where does it find things you missed?

- Use this to calibrate your trust level and understand the tool's strengths and blind spots
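The comparison step is easy to make systematic. Here is a minimal sketch, assuming you can export both codings as (segment ID, code) pairs; the segment IDs, code names, and function name are hypothetical.

```python
def compare_codings(human, ai):
    """Split two codings into agreed, human-only, and AI-only assignments."""
    human, ai = set(human), set(ai)
    return {
        "agreed": human & ai,
        "human_only": human - ai,
        "ai_only": ai - human,
    }


# Hypothetical exports: (segment_id, code)
human = {
    ("T1-S04", "access barriers"),
    ("T1-S09", "staff rapport"),
    ("T2-S02", "cost concerns"),
}
ai = {
    ("T1-S04", "access barriers"),
    ("T1-S09", "access barriers"),
    ("T2-S02", "cost concerns"),
    ("T2-S11", "staff rapport"),
}

diff = compare_codings(human, ai)

# Segments both coded, but differently: where the AI diverges from you
disputed = {seg for seg, _ in diff["human_only"]} & {seg for seg, _ in diff["ai_only"]}
# Segments only the AI coded: things you may have missed
missed_by_human = {seg for seg, _ in diff["ai_only"]} - {seg for seg, _ in human}

print("Agreed:", sorted(diff["agreed"]))
print("Disputed segments:", sorted(disputed))
print("Coded by AI only:", sorted(missed_by_human))
```

The disputed and AI-only lists are where your calibration time is best spent, since they show both the tool's blind spots and your own.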

Phase 2: AI-First with Human Review (3-5 Projects)

- Let AI generate the initial coding pass across your full dataset

- Review AI-generated codes transcript by transcript — not to redo the coding, but to validate, merge, split, and refine

- Track how much time you spend on review vs. how much you previously spent on manual coding

- Adjust your codebook iteratively based on what the AI surfaces

Phase 3: Integrated Workflow (Ongoing)

- Use AI coding as your default first pass

- Focus your human expertise on interpretation, theme development, and writing

- Deploy multi-lens analysis to get richer findings than manual coding ever produced

- Reserve full manual coding for projects where methodology demands it

Key Principles for the Transition

Do not treat AI codes as final. They are a high-quality first draft. Your job shifts from generating codes to curating and interpreting them.

Document your process. If you are publishing academic research, describe your AI-assisted coding methodology transparently. The field is developing norms here — be part of setting them.

Start with your strongest dataset. Do not test AI coding on messy, poorly transcribed data. Use clean transcripts from a well-designed study so you can evaluate the AI's analytical capability, not its tolerance for garbage input.

Invest the time savings wisely. The whole point is not to do the same work faster — it is to do better work. Use the hours you save on coding to do deeper interpretation, more thorough member checking, or (radical thought) collect more data.

What This Means for the Industry

The shift from manual to AI-assisted qualitative coding is not a future trend. It is happening now, and it is reshaping who wins in qualitative research.

For UX researchers: Faster coding means faster insight delivery. When your product team operates in two-week sprints, a qualitative study that takes six weeks to code is a qualitative study that does not get commissioned. AI coding makes qual viable for the pace of product development.

For market research firms: The agencies that adopt AI coding will deliver richer findings in half the time at lower cost. The ones that do not will increasingly lose to competitors who do. This is not speculation — it is already happening, as we discussed in our piece on how AI is reshaping qualitative analysis.

For program evaluators: When you can code 100 interview transcripts in a day instead of a month, you can actually do justice to the qualitative components of mixed-methods evaluations instead of treating them as the underfunded afterthought they often become.

For academic researchers: AI coding does not threaten qualitative rigor — it enables it. When coding takes less time, researchers can afford larger samples, multiple coding passes, and more sophisticated analytical frameworks. The constraint was never methodological. It was logistical.

Getting Started

If you have read this far, you are probably already feeling the pain of manual coding and wondering whether the switch is worth the learning curve.

It is. Unambiguously.

The researchers and teams who are making this transition now are not cutting corners — they are removing a bottleneck that has artificially limited the scope and speed of qualitative research for decades.

At Qualz, we built our platform specifically for this transition. Upload your transcripts, get AI-generated themes in minutes, apply multiple analytical lenses, and maintain full control over your codebook and methodology. No black boxes. No "trust the algorithm." Just faster, more thorough coding that lets you spend your time where it matters — on interpretation and insight.

The transcripts are not going to code themselves. But they do not need to take all week, either.

Try Qualz free and see what your data looks like when you are not too exhausted to find what is in it.