Healthcare has a measurement paradox. No industry collects more structured feedback from the people it serves. Between CAHPS, HCAHPS, Press Ganey, NPS, and dozens of proprietary patient satisfaction instruments, the average hospital system processes tens of thousands of survey responses every quarter. The dashboards are full. The scores are tracked to the decimal point. Executive bonuses are tied to them.

And yet, when a quality director sits down to figure out why their hospital's communication scores dropped three points this quarter, they are often guessing.

This is not a failure of effort. It is a structural limitation of quantitative patient feedback. Survey scores are designed to measure what patients experienced and how they rated it. They were never designed to explain why those experiences happened, what patients actually felt in the moment, or what specific changes would make a meaningful difference.

That explanatory layer, the qualitative understanding of patient experience, has historically been too expensive, too slow, and too difficult to scale for most healthcare organizations. The result is an industry that spends billions measuring patient experience and, by comparison, almost nothing understanding it.

That is starting to change.

The CAHPS Ceiling: What Survey Scores Cannot Tell You

The Consumer Assessment of Healthcare Providers and Systems (CAHPS) survey program, funded and overseen by the Agency for Healthcare Research and Quality (AHRQ), has been the gold standard for patient experience measurement since the 1990s. HCAHPS, the hospital-specific version, was implemented nationally in 2006, and since 2007 hospitals paid under Medicare's Inpatient Prospective Payment System have had to collect and submit HCAHPS data to receive their full annual payment update. Today, virtually every hospital in the United States collects and reports HCAHPS data.

The surveys have genuine value. They provide standardized, comparable metrics across institutions. They create accountability. They have driven real improvements in measurable aspects of patient experience, particularly around communication basics and discharge processes.

But CAHPS and its derivatives share a fundamental limitation: they are closed-ended instruments measuring predefined dimensions of care. A patient can tell you they rate nurse communication as a 3 out of 4. The form gives them no way to tell you that the real problem was that night shift nurses seemed rushed and never introduced themselves, that the call button took 22 minutes to get answered on Tuesday, or that the one nurse who did sit down and explain their medication made them feel safe for the first time since admission.

That context — the specific, situated, emotionally rich detail of lived patient experience — is precisely what healthcare organizations need to drive meaningful improvement. And it is precisely what structured surveys cannot capture.

The Score Plateau Problem

Many healthcare systems have hit what industry consultants call the "CAHPS ceiling." After years of targeted improvement efforts, scores in key domains have plateaued. Communication composites hover in the high 70s to low 80s nationally. Responsiveness scores remain stubbornly lower. And the variation between top-performing and median institutions has narrowed considerably.

When scores plateau, the lever for improvement is no longer broad operational changes. It is understanding the granular, context-specific drivers of patient experience within your particular institution, patient population, and care environment. That requires qualitative inquiry — open-ended conversations where patients describe their experiences in their own words, at their own pace, with the freedom to surface issues that no survey designer anticipated.

The Financial Stakes

This is not an academic concern. Under the CMS Hospital Value-Based Purchasing (VBP) program, HCAHPS scores directly affect Medicare reimbursement. The Person and Community Engagement domain — driven entirely by HCAHPS — accounts for 25% of a hospital's VBP score. For a large hospital system, the difference between the 50th and 75th percentile on HCAHPS can represent millions of dollars in annual reimbursement.
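To make the weighting concrete, here is a minimal sketch of how a 25% domain weight feeds a total score. It assumes four equally weighted domains and uses invented domain scores; it illustrates the arithmetic only and is not the CMS scoring methodology.

```python
# Illustrative only: the three non-HCAHPS domain names, the equal weights for
# them, and every score below are assumptions made for this example.
DOMAIN_WEIGHTS = {
    "person_and_community_engagement": 0.25,  # HCAHPS-driven, 25% per the text
    "clinical_outcomes": 0.25,                # assumed
    "safety": 0.25,                           # assumed
    "efficiency_and_cost_reduction": 0.25,    # assumed
}


def total_score(domain_scores: dict[str, float]) -> float:
    """Weighted sum of domain scores, each on a 0-100 scale."""
    return sum(DOMAIN_WEIGHTS[name] * score for name, score in domain_scores.items())


baseline = {
    "person_and_community_engagement": 40.0,
    "clinical_outcomes": 60.0,
    "safety": 55.0,
    "efficiency_and_cost_reduction": 50.0,
}

# Same hospital, with only the HCAHPS-driven domain improved by 20 points.
improved = dict(baseline, person_and_community_engagement=60.0)

gain = total_score(improved) - total_score(baseline)
print(f"Baseline {total_score(baseline)}, improved {total_score(improved)}, gain {gain}")
```

Under these assumptions, a 20-point move in the HCAHPS-driven domain shifts the total by 5 points, which is why a plateau in that one domain matters financially.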

When that much revenue depends on moving scores that have plateaued, healthcare leaders need more than another dashboard refresh. They need to understand the patient experience at a depth that structured measurement cannot provide.

What Qualitative Patient Intelligence Actually Looks Like

Qualitative research in healthcare is not new. Patient advisory councils, focus groups, bedside interviews, and ethnographic observation have been used in health services research for decades. The problem has never been the value of the approach — it has been the practicality.

A traditional qualitative patient experience study might involve:

- Recruiting 20-30 patients from a target population

- Conducting 60-90 minute semi-structured interviews with each

- Transcribing recordings (often 4-6 hours of transcription per hour of interview)

- Coding transcripts through multiple analysis passes

- Synthesizing themes and producing a findings report

The entire process typically takes 3-6 months and costs $50,000-$150,000 depending on the scope. For a health system trying to understand a specific service line issue or prepare for a CMS survey, that timeline and budget are often prohibitive.
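The effort behind those figures is easier to see when the ranges are multiplied out. The sketch below uses only the numbers quoted above; the function name is hypothetical, and the per-interview cost simply pairs the cheapest study with the most patients and the most expensive with the fewest, so it gives outer bounds rather than typical values.

```python
def transcription_hours(patients: int, interview_minutes: int, hours_per_audio_hour: int) -> float:
    """Hours of transcription work for a study of the given size."""
    audio_hours = patients * interview_minutes / 60
    return audio_hours * hours_per_audio_hour


# Smallest study in the quoted ranges: 20 patients, 60 minutes, 4 hours per audio hour.
low_effort = transcription_hours(20, 60, 4)    # 20 audio hours -> 80 hours
# Largest study in the quoted ranges: 30 patients, 90 minutes, 6 hours per audio hour.
high_effort = transcription_hours(30, 90, 6)   # 45 audio hours -> 270 hours

# Outer bounds on cost per completed interview.
lowest_cost_per_interview = 50_000 / 30
highest_cost_per_interview = 150_000 / 20

print(f"Transcription alone: {low_effort:.0f} to {high_effort:.0f} hours")
print(f"Cost per interview: ${lowest_cost_per_interview:,.0f} to ${highest_cost_per_interview:,.0f}")
```

Transcription is only one of the five steps, yet on these numbers it already consumes between two and almost seven working weeks of one person's time.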

The result is that qualitative patient intelligence has been reserved for major research initiatives or accreditation preparations. It has almost never been integrated into the continuous improvement cycles where it would have the most operational impact.

What Changes When You Can Do Qual at Scale

Imagine a different model. Instead of a twice-a-decade qualitative study, imagine being able to conduct in-depth patient conversations continuously — 50, 100, 500 interviews per month — with automated analysis that surfaces themes, patterns, and actionable insights in near real time.

At that scale, qualitative patient intelligence stops being a research project and becomes an operational capability:

- Service recovery in context. When a patient describes a negative experience in their own words, you understand not just that they were dissatisfied but the specific sequence of events, staff interactions, and environmental factors that drove the experience. Service recovery can be targeted and specific rather than generic.

- Root cause identification. Quantitative data tells you that discharge communication scores dropped in Q3. Qualitative data tells you that patients on the cardiac unit are confused because three different staff members give them different medication instructions, and none of them sits down to check understanding. That is an actionable finding.

- Equity and access insights. Structured surveys often mask disparities in patient experience across demographic groups. Open-ended conversations surface the specific barriers, cultural factors, and communication gaps that drive differential experiences — insights that are invisible in aggregate scores.

- Staff experience connection. Patients frequently describe interactions that reveal staff burnout, workflow problems, or resource constraints. These observations provide a patient-perspective window into operational and workforce issues that staff satisfaction surveys may not capture.

- Regulatory and accreditation preparation. CMS surveys and Joint Commission reviews increasingly look for evidence that organizations listen to patients in meaningful ways. A continuous qualitative intelligence program provides that evidence and the improvement actions that follow from it.

Why Healthcare Could Not Scale Qual Before

The barriers to qualitative research at scale in healthcare have been both economic and structural.

Cost and Time

Traditional qualitative research requires skilled human moderators — typically master's or doctoral-level researchers with training in health services research, qualitative methodology, and often clinical knowledge. These professionals command $150-$300 per hour, and a single study can consume hundreds of person-hours between interview conduct, transcription, analysis, and reporting.

For context, that budget could fund several thousand additional CAHPS surveys. When healthcare leaders are forced to choose between broad quantitative coverage and narrow qualitative depth, breadth usually wins.

Patient Population Complexity

Healthcare serves populations with extraordinary diversity in health literacy, cognitive capacity, emotional state, language, and cultural background. A post-surgical patient on opioid pain medication presents differently in an interview than a healthy adult in a consumer research study. Patients with limited English proficiency, cognitive impairment, or health anxiety require adapted interview approaches.

This complexity increases the skill requirements for human moderators and limits the number of researchers who can competently conduct patient interviews across diverse populations.

Privacy and Compliance

Patient experience data intersects directly with protected health information (PHI). Any qualitative research program involving patient conversations must navigate HIPAA requirements, IRB oversight (in academic medical centers), and institutional privacy policies. The compliance burden has historically made qualitative programs organizationally complex to initiate and sustain.

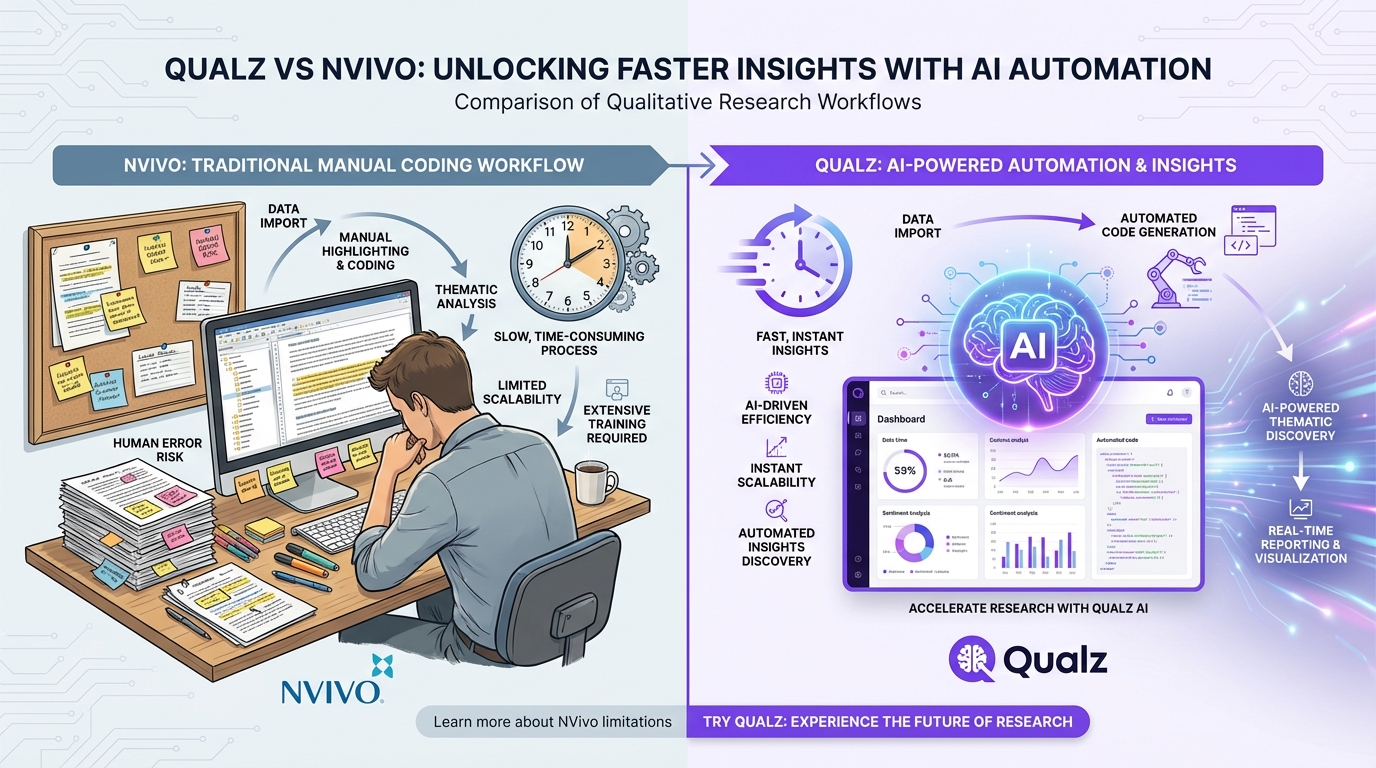

Analysis Bottleneck

Even when organizations manage to conduct qualitative patient interviews, the analysis bottleneck often defeats the purpose. A study producing 30 hours of interview recordings generates hundreds of pages of transcripts. Manual qualitative coding — the systematic process of identifying themes, patterns, and relationships in textual data — is painstaking. A single experienced coder can typically analyze 3-5 transcripts per day.
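A rough calculation shows how quickly that adds up. This sketch assumes the 30 hours of recordings come from interviews of 60 to 90 minutes each, and that each analysis pass takes as long as the first; the three-pass scenario is an assumption for illustration, not a figure from any study.

```python
import math


def coding_days(recording_hours: float, interview_hours: float,
                transcripts_per_day: int, passes: int = 1) -> int:
    """Working days for one coder to code every transcript `passes` times."""
    transcripts = recording_hours / interview_hours
    return math.ceil(transcripts * passes / transcripts_per_day)


# Best case: 90-minute interviews (20 transcripts) at 5 transcripts per day.
best_case = coding_days(30, 1.5, 5)             # 4 days
# Worst case: 60-minute interviews (30 transcripts) at 3 transcripts per day.
worst_case = coding_days(30, 1.0, 3)            # 10 days
# Worst case again, with three analysis passes (assumed).
worst_case_three_passes = coding_days(30, 1.0, 3, passes=3)  # 30 days

print(best_case, worst_case, worst_case_three_passes)
```

Even before synthesis and reporting, a single coder working through multiple passes can spend a month or more on one modest study.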

By the time findings are synthesized and reported, the operational window for action has often passed. The insights are valid but stale.

How AI-Moderated Interviews Change the Equation

AI is not going to replace the need for qualitative expertise in healthcare. What it does is remove the bottlenecks that have kept qualitative patient intelligence from operating at the scale and speed that healthcare operations require.

Conducting Interviews at Scale

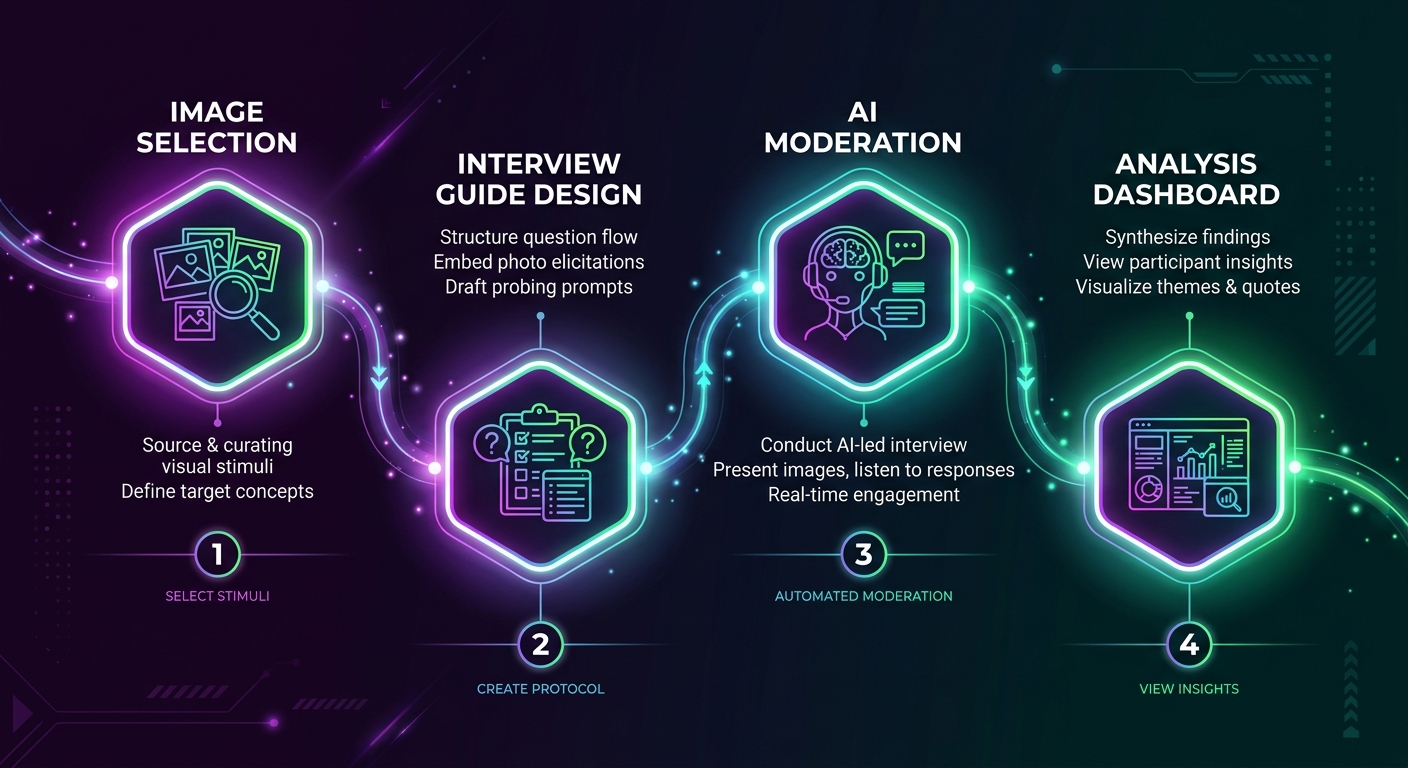

AI-moderated interviews can conduct hundreds of patient conversations simultaneously. Each conversation follows a research-designed discussion guide while adapting to the patient's responses, probing on themes that emerge, and maintaining the conversational depth that makes qualitative research valuable.

Unlike human moderators, AI interviewers do not fatigue and do not unconsciously signal preferred answers through body language or tone, two sources of the moderator bias that decades of research have documented. They also apply the discussion guide consistently from interview to interview: the fiftieth conversation is as attentive and methodologically consistent as the first.

For healthcare organizations, this means moving from a model where 20 patient interviews represent a significant research investment to one where 200 patient interviews are a routine monthly capability.

Real-Time Analysis and Theme Detection

The analysis bottleneck eases dramatically when AI handles both the interview conduct and the initial analysis pass. Themes, sentiment patterns, and emerging issues can be surfaced as they develop rather than weeks or months after data collection.

This speed transforms qualitative intelligence from a retrospective research output to a real-time operational input. A patient experience team can identify a developing service issue — say, confusion about a new discharge process — within days of implementation rather than discovering it in the next quarterly CAHPS report.
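As a minimal sketch of what "surfacing a developing issue" can mean in practice, the example below compares how often each theme appears from one week to the next and flags sharp increases. It assumes an upstream step (human or automated) has already assigned theme labels to each interview; the labels, weeks, threshold, and function names are all hypothetical, and this is not a description of how any particular platform works.

```python
from collections import Counter

# Hypothetical coded interviews: (ISO week, themes assigned to one interview).
interviews = [
    ("2024-W01", {"wait_time"}),
    ("2024-W01", {"nurse_communication"}),
    ("2024-W01", {"wait_time", "food"}),
    ("2024-W01", {"discharge_confusion"}),
    ("2024-W02", {"discharge_confusion", "wait_time"}),
    ("2024-W02", {"discharge_confusion"}),
    ("2024-W02", {"discharge_confusion", "nurse_communication"}),
    ("2024-W02", {"food"}),
]


def theme_share(records, week):
    """Fraction of that week's interviews in which each theme appears."""
    week_themes = [themes for w, themes in records if w == week]
    counts = Counter(theme for themes in week_themes for theme in themes)
    return {theme: n / len(week_themes) for theme, n in counts.items()}


def emerging_themes(records, prev_week, this_week, min_jump=0.25):
    """Themes whose share rose by at least `min_jump` between the two weeks."""
    before = theme_share(records, prev_week)
    after = theme_share(records, this_week)
    return sorted(
        theme for theme, share in after.items()
        if share - before.get(theme, 0.0) >= min_jump
    )


flagged = emerging_themes(interviews, "2024-W01", "2024-W02")
print(flagged)  # ['discharge_confusion']
```

In this toy data, discharge confusion rises from one in four interviews to three in four, so it is the only theme flagged. A real program would need larger samples and a threshold tuned to its own interview volume.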

Privacy-Compliant by Design

Modern AI platforms can be designed with healthcare compliance built into the architecture. Conversations can be conducted without collecting unnecessary PHI. Data can be processed and stored in HIPAA-compliant environments. Audit trails can document exactly how patient data was collected, processed, and analyzed, a level of traceability that most manual qualitative research programs do not provide.
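One way an audit trail can be made tamper-evident is to chain each entry to the hash of the one before it. The sketch below is a generic illustration of that idea using Python's standard library; the field names, actors, and record IDs are hypothetical, and it is neither a statement of what HIPAA requires nor a description of any vendor's implementation.

```python
import hashlib
import json

FIELDS = ("actor", "action", "record_id", "prev_hash")


def _digest(entry: dict) -> str:
    """SHA-256 over the entry's fields in a stable order."""
    body = {key: entry[key] for key in FIELDS}
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode()).hexdigest()


def append_event(log: list, actor: str, action: str, record_id: str) -> None:
    """Add an entry that records the hash of the previous entry."""
    entry = {
        "actor": actor,
        "action": action,
        "record_id": record_id,  # an opaque ID, deliberately not PHI
        "prev_hash": log[-1]["hash"] if log else "",
    }
    entry["hash"] = _digest(entry)
    log.append(entry)


def verify(log: list) -> bool:
    """True if no entry was altered, removed, or reordered."""
    prev_hash = ""
    for entry in log:
        if entry["prev_hash"] != prev_hash or _digest(entry) != entry["hash"]:
            return False
        prev_hash = entry["hash"]
    return True


audit_log = []
append_event(audit_log, "interview_service", "collected", "conv-001")
append_event(audit_log, "analysis_service", "themes_extracted", "conv-001")
append_event(audit_log, "analyst_1", "viewed_summary", "conv-001")
chain_ok = verify(audit_log)
print(chain_ok)
```

Because each entry depends on the one before it, editing or deleting any earlier event causes verification to fail, which is the property an auditor cares about.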

Reducing Moderator Bias in Sensitive Contexts

Healthcare conversations touch on deeply personal topics — pain management, mental health, end-of-life care, reproductive health, substance use. These are exactly the domains where social desirability bias is strongest in human-moderated interviews. Patients underreport pain, overstate medication compliance, and minimize mental health symptoms when speaking with someone they perceive as a healthcare professional.

AI-moderated interviews can reduce this dynamic. Research on computer-administered interviewing suggests that people disclose more on sensitive topics when they believe no human is listening or judging, which can yield more candid and clinically useful feedback.

Accessibility and Reach

AI-moderated interviews can be conducted asynchronously — patients can participate when and where it works for them, not when a researcher is available. For post-discharge patients, rural populations, patients with mobility limitations, or caregivers who cannot schedule a fixed appointment, this flexibility dramatically expands who can participate in qualitative research.

The technology also supports multiple languages without requiring multilingual moderators, addressing one of the most persistent equity gaps in patient experience research.

Building a Qualitative Patient Intelligence Program

For healthcare organizations considering this approach, the path forward is not about replacing quantitative measurement. It is about complementing it.

Start Where CAHPS Falls Short

Identify the domains where your quantitative scores have plateaued or where scores alone do not explain the variation you observe. Common starting points include:

- Care transitions. Discharge and handoff experiences are consistently among the lowest-scoring CAHPS domains and the most complex to improve.

- Communication gaps. When "doctor communication" scores are high but "understanding medications" scores are low, qualitative research can identify the specific disconnect.

- Equity concerns. If you see score disparities across patient demographics, qualitative inquiry can surface the specific experiential differences driving those gaps.
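The "communication gaps" starting point above can be turned into a simple screening rule. This is a minimal sketch with invented unit names and scores; the two thresholds are assumptions that each organization would set for itself.

```python
from dataclasses import dataclass


@dataclass
class UnitScores:
    unit: str
    doctor_communication: float        # hypothetical score, 0-100
    understanding_medications: float   # hypothetical score, 0-100


def qualitative_candidates(units, high=80.0, min_gap=15.0):
    """Units where doctor communication is high but medication understanding lags."""
    return [
        u.unit for u in units
        if u.doctor_communication >= high
        and u.doctor_communication - u.understanding_medications >= min_gap
    ]


units = [
    UnitScores("cardiac", 84.0, 62.0),       # high score, 22-point gap
    UnitScores("orthopedics", 82.0, 75.0),   # high score, small gap
    UnitScores("med_surg", 74.0, 55.0),      # large gap, but communication not high
]

candidates = qualitative_candidates(units)
print(candidates)  # ['cardiac']
```

The rule only says where to look. Explaining why the cardiac unit shows the disconnect is the job of the patient conversations themselves.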

Integrate With Existing Workflows

Qualitative patient intelligence should feed the same improvement cycles that your quantitative data feeds. Connect findings to your existing patient experience committee, department-level improvement teams, and quality reporting structures. The goal is not a parallel track — it is a richer evidence base for the improvement work already underway.

Think Continuous, Not Episodic

The real value of AI-enabled qualitative research is the ability to run it continuously. Rather than a single large study, consider an always-on program that conducts a steady stream of patient conversations across service lines, with ongoing analysis that surfaces emerging themes and tracks whether improvement actions are having their intended effect.

Do Not Build This Yourself

Healthcare IT teams are already stretched thin. Building a custom qualitative research platform — with the natural language processing, compliance infrastructure, qualitative analysis methodology, and ongoing model management it requires — is a significant engineering undertaking that diverts resources from core capabilities. Purpose-built platforms like Qualz.ai exist specifically for this use case, with the compliance, methodology, and scale requirements already addressed.

The Shift From Measurement to Understanding

Healthcare's relationship with patient experience data is at an inflection point. The measurement infrastructure is mature. The financial incentives are aligned. The regulatory environment increasingly demands evidence of patient-centered improvement. What has been missing is the bridge between knowing your scores and understanding your patients.

Qualitative patient intelligence — the systematic, scalable collection and analysis of patients' own descriptions of their care experiences — provides that bridge. And for the first time, AI makes it possible to build this capability at a scale and cost that works within healthcare operational realities.

The organizations that figure this out first will not just improve their CAHPS scores. They will understand why those scores move, develop targeted interventions based on genuine patient insight, and build a continuous feedback loop between patient experience and care delivery improvement.

The scores are a starting point. The conversation is where the understanding begins.
