Product analytics can tell you that 37% of users abandon your onboarding flow at step three. What it cannot tell you is why. Maybe the form is confusing. Maybe users don't see the value yet. Maybe they got a Slack notification and never came back. The number is precise, but the insight is hollow.

This is the fundamental tension every product team faces: quantitative data shows you what is happening at scale, while qualitative research reveals the motivations, frustrations, and decision-making processes behind those numbers. Used in isolation, each method has blind spots. Used together — strategically, not haphazardly — they produce the kind of compounding insight that separates teams shipping features nobody wants from teams building products people cannot live without.

The problem is that most product teams default to one mode. Engineering-heavy teams lean on analytics. Design-led teams lean on interviews. And the decision about when to combine methods is usually made by whoever shouts loudest in the planning meeting, not by any systematic framework.

This post provides that framework. Whether you're a product manager trying to prioritize customer problems for growth, a researcher embedded in an agile team, or a founder making bets with limited runway, you'll walk away knowing exactly when to go qual, when to go quant, and when the answer is both.

The Integration Decision Matrix

Not every research question requires both methods. The decision depends on three variables: the maturity of your understanding, the type of decision you're making, and the cost of being wrong.

When Quantitative Alone Is Sufficient

Go quant-only when you have a well-defined hypothesis and need to measure magnitude, frequency, or statistical significance across a large population.

Best for:

- A/B testing UI changes where the variable is isolated

- Measuring feature adoption rates post-launch

- Tracking NPS, CSAT, or retention metrics over time

- Validating pricing sensitivity across segments

The key qualifier: you already understand the problem space well enough to ask precise questions. If you're measuring something you don't understand, you'll get precise answers to the wrong questions.
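For the A/B testing case, "statistical significance" usually comes down to a test like the one below. This is a minimal sketch of a two-proportion z-test using only the Python standard library. The function name and the conversion counts in the usage note are illustrative assumptions, and the test assumes independent users and samples large enough for the normal approximation to hold.

```python
import math


def two_proportion_z_test(conv_a: int, n_a: int, conv_b: int, n_b: int) -> tuple[float, float]:
    """Compare the conversion rates of variants A and B.

    Returns (z, p_value) for the two-sided null hypothesis that both
    variants share the same underlying conversion rate.
    """
    p_a = conv_a / n_a
    p_b = conv_b / n_b
    # Pooled rate under the null hypothesis that A and B are identical.
    pooled = (conv_a + conv_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if se == 0:
        raise ValueError("all-or-nothing conversions; the z-test is undefined")
    z = (p_b - p_a) / se
    # Two-sided p-value: P(|Z| >= |z|) for a standard normal Z equals erfc(|z| / sqrt(2)).
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value
```

For example, `two_proportion_z_test(200, 2000, 250, 2000)` (10% vs. 12.5% conversion on hypothetical traffic) gives z of about 2.5 and p of about 0.012. The test tells you the difference is unlikely to be noise. It says nothing about why users preferred B.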

When Qualitative Alone Is Sufficient

Go qual-only when you're exploring a new problem space, trying to understand user mental models, or need to generate hypotheses rather than test them.

Best for:

- Early discovery before building anything

- Understanding why users churn, not just that they churn

- Exploring emotional responses to brand positioning

- Mapping workflows and pain points in enterprise contexts

As researchers have noted in work on continuous discovery versus project-based research, qualitative methods shine when you need to surface what you don't know you don't know. The danger is stopping here when you need to know how far those insights generalize across your user base.

When You Need Both

Combine methods when the stakes are high, the problem is complex, or you need to move from "we think we understand" to "we know, and here's the evidence."

Signals that mixed methods are necessary:

- Your quantitative data shows a pattern but you cannot explain it

- Your qualitative insights are compelling but leadership wants numbers

- You're making a high-cost, hard-to-reverse decision (pricing, market entry, major feature bet)

- You need to generalize findings from a small sample to a larger population

- Different data sources are telling conflicting stories

Five Integration Patterns That Work

Mixed-methods research isn't one thing. It's a family of approaches, each suited to different situations. Here are the five patterns product teams use most effectively.

Pattern 1: Exploratory Sequential (Qual → Quant)

Start with qualitative research to discover themes, then use quantitative methods to test those themes at scale.

Example: You conduct 15 user interviews and discover that mid-market SaaS buyers consistently mention "time to first value" as their primary evaluation criterion. You then run a survey of 500 prospects to quantify how this criterion ranks against price, features, and support quality.

This pattern is ideal for product teams in the discovery phase who need to move from insight to evidence. It's the most natural fit for teams practicing research democratization, where researchers generate the initial insights and product managers validate them quantitatively.
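Before running the quant step, it helps to know how precise a 500-person survey can be. This is a minimal sketch of the standard margin-of-error formula for a proportion, such as the share of prospects who rank "time to first value" first. The function names are hypothetical, and the formula assumes a simple random sample, which an opt-in prospect survey usually is not. Treat the result as a floor on the real uncertainty.

```python
import math


def margin_of_error(n: int, p: float = 0.5, z: float = 1.96) -> float:
    """Half-width of a ~95% confidence interval for a proportion (normal approximation).

    p=0.5 is the worst case, so it yields the widest (most conservative) margin.
    """
    return z * math.sqrt(p * (1 - p) / n)


def required_sample_size(margin: float, p: float = 0.5, z: float = 1.96) -> int:
    """Smallest n whose margin of error is at most `margin`, by inverting the formula above."""
    return math.ceil(z ** 2 * p * (1 - p) / margin ** 2)
```

With n = 500, `margin_of_error(500)` is about 0.044, or roughly plus or minus 4.4 percentage points. Going the other way, `required_sample_size(0.05)` returns 385, which is why surveys in the high hundreds are a common target for plus-or-minus 5-point precision.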

Pattern 2: Explanatory Sequential (Quant → Qual)

Start with quantitative data to identify patterns, then use qualitative methods to explain those patterns.

Example: Your product analytics show that users who complete three specific actions in the first week have 4x higher 90-day retention. You run interviews with both high-retention and low-retention users to understand what drives that behavior — and discover it's not the features themselves but a specific aha moment during onboarding.

This is the pattern for teams swimming in data but starving for understanding. When dashboards raise more questions than they answer, qualitative follow-up provides the narrative that makes numbers actionable.
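The quant half of this pattern is often a simple cohort comparison, and the same split tells you whom to interview. This is a minimal sketch that assumes you can export each user's week-one actions and a day-90 retention flag. `KEY_ACTIONS`, the event names, and the `User` record are hypothetical stand-ins for your own analytics schema.

```python
from dataclasses import dataclass, field
from typing import Optional

# Hypothetical event names standing in for the "three specific actions".
KEY_ACTIONS = frozenset({"create_project", "invite_teammate", "connect_integration"})


@dataclass
class User:
    user_id: str
    week1_actions: set[str] = field(default_factory=set)  # actions done in the first 7 days
    retained_day_90: bool = False


def completed_key_actions(user: User) -> bool:
    # Set containment: did the user do every key action in week one?
    return KEY_ACTIONS <= user.week1_actions


def retention_by_cohort(users: list[User]) -> dict[bool, Optional[float]]:
    """90-day retention for users who did (True) / did not (False) complete all key actions."""
    cohorts: dict[bool, list[User]] = {True: [], False: []}
    for user in users:
        cohorts[completed_key_actions(user)].append(user)
    return {
        completed: (sum(u.retained_day_90 for u in group) / len(group) if group else None)
        for completed, group in cohorts.items()
    }


def interview_pool(users: list[User], completed: bool, retained: bool) -> list[str]:
    """IDs of users in one cell of the cohort x outcome grid, for interview recruitment."""
    return [
        u.user_id
        for u in users
        if completed_key_actions(u) == completed and u.retained_day_90 == retained
    ]
```

The ratio of the two rates is the lift (the "4x"). Because that lift is a correlation, `interview_pool` lets you recruit from each cell, including users who completed the actions but still churned, so the interviews can test whether the actions themselves matter.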

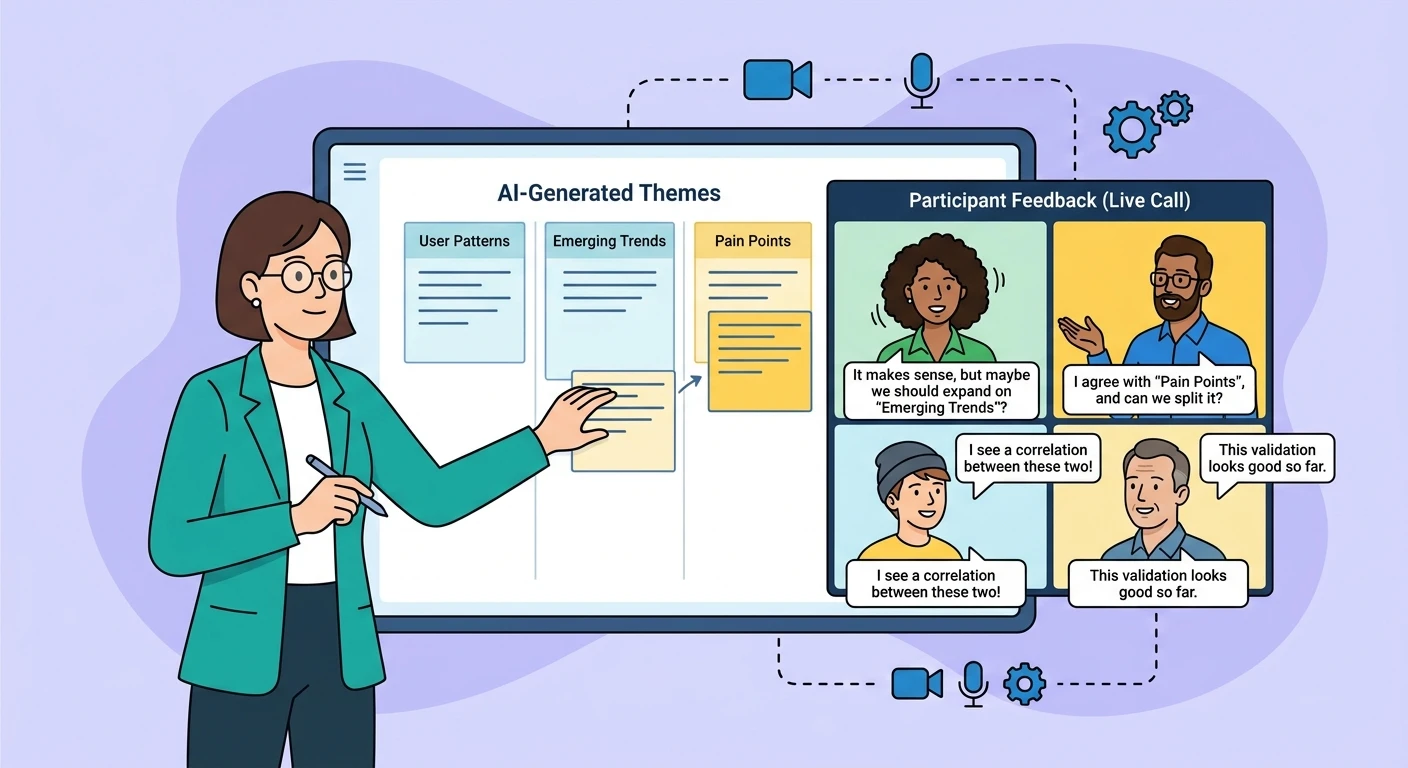

Pattern 3: Convergent Parallel (Qual + Quant Simultaneously)

Run both methods at the same time, analyze them separately, then merge the findings.

Example: While running a large-scale survey on feature satisfaction, you simultaneously conduct diary studies with 10 users to capture in-the-moment reactions. The survey tells you 62% are "satisfied" with search. The diaries reveal that "satisfied" means "I've learned the workarounds" — a very different signal.

This pattern is resource-intensive but powerful for high-stakes decisions. It's particularly valuable when you suspect that sentiment measures alone miss the story: an aggregated satisfaction score can mask the nuance that drives real behavior.
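One common way to do the merge step is a joint display: a cross-tab of survey answers against themes coded from the qualitative data, covering the participants who took part in both. This is a minimal sketch that assumes the diary participants also answered the survey and that their entries have already been coded into themes. The function name and theme labels are hypothetical.

```python
from collections import Counter, defaultdict


def joint_display(
    survey: dict[str, str],
    diary_themes: dict[str, set[str]],
) -> dict[str, Counter]:
    """Cross-tabulate survey answers against coded diary themes.

    survey maps participant_id -> survey answer (e.g. "satisfied");
    diary_themes maps participant_id -> themes coded from that person's diary.
    Only participants present in both sources contribute counts.
    """
    table: dict[str, Counter] = defaultdict(Counter)
    for pid, answer in survey.items():
        for theme in diary_themes.get(pid, set()):
            table[answer][theme] += 1
    return dict(table)
```

If the "satisfied" row is dominated by a theme like "workaround", you have found exactly the divergence that the 62% figure hides on its own.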

Pattern 4: Embedded Design

One method is nested within the other as a supporting element.

Example: You're running a randomized controlled trial of two onboarding flows. Within the trial, you embed short qualitative exit interviews with a subset of participants from each group. The trial gives you statistical power; the interviews give you the explanatory texture.

This is increasingly common in organizations building AI-native operating models, where experimentation infrastructure already exists and qualitative overlays add the human context that pure metrics miss.
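Drawing the interview subset at random within each arm keeps it from skewing toward, say, the most vocal users of one flow. This is a minimal sketch using Python's `random` module. The function name, arm labels, and per-arm quota are illustrative assumptions.

```python
import random
from collections import defaultdict
from typing import Optional


def sample_exit_interviewees(
    assignments: dict[str, str], per_arm: int, seed: Optional[int] = None
) -> dict[str, list[str]]:
    """Draw up to `per_arm` participants at random from each experiment arm.

    assignments maps participant_id -> arm name (e.g. "flow_a", "flow_b").
    Sampling within each arm keeps the interview subset balanced across
    arms; a fixed seed makes the draw reproducible for later review.
    """
    by_arm: dict[str, list[str]] = defaultdict(list)
    # Sort first so the same seed yields the same sample regardless of dict order.
    for pid, arm in sorted(assignments.items()):
        by_arm[arm].append(pid)
    rng = random.Random(seed)
    return {arm: rng.sample(ids, min(per_arm, len(ids))) for arm, ids in by_arm.items()}
```

Randomizing who gets interviewed does not make a handful of interviews statistically representative, but it does remove one systematic bias in whose voices you hear from each arm.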

Pattern 5: Multi-Phase Design

A series of qual and quant phases, each building on the last, across an extended research program.

Example: Phase 1 (qual): Discover that enterprise buyers evaluate AI tools primarily through a trust lens. Phase 2 (quant): Survey 300 buyers to quantify trust factors. Phase 3 (qual): Test messaging frameworks built on those trust factors. Phase 4 (quant): A/B test the top messaging variants in-market.

This is the pattern for strategic research programs: the kind that inform annual planning, market entry, or major pivots. It follows the same logic as context engineering in AI-driven development, where each iteration feeds what was learned back into the next cycle so that understanding compounds over time.

Common Integration Mistakes

Mistake 1: Using Qual to Confirm What Quant Already Told You

If your survey says users want faster load times and you interview users who also say they want faster load times, you haven't learned anything new. Integration should produce additive insight, not redundant validation.

Mistake 2: Treating Small Qualitative Samples as Statistically Representative

"We talked to 8 users and 6 of them said X, so 75% of our users want X." No. Qualitative research generates hypotheses. Quantitative research tests them. Conflating the two destroys the credibility of both.
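Even setting aside that interview participants are rarely a random sample, the arithmetic alone rules out the 75% claim. This is a minimal sketch computing a Wilson score interval, a standard confidence interval for a proportion that behaves better than the normal approximation at small n. The function name is hypothetical, and the interval assumes a simple random sample, which a hand-picked interview set usually is not.

```python
import math


def wilson_interval(successes: int, n: int, z: float = 1.96) -> tuple[float, float]:
    """~95% Wilson score interval for a proportion observed as successes / n."""
    if n <= 0:
        raise ValueError("n must be positive")
    p_hat = successes / n
    denom = 1 + z ** 2 / n
    # Wilson recenters the estimate toward 0.5 and widens it when n is small.
    center = (p_hat + z ** 2 / (2 * n)) / denom
    half_width = z * math.sqrt(p_hat * (1 - p_hat) / n + z ** 2 / (4 * n ** 2)) / denom
    return center - half_width, center + half_width
```

`wilson_interval(6, 8)` comes out at roughly (0.41, 0.93). Under ideal sampling, the true share could plausibly sit anywhere from about 41% to 93%. That range is the honest reading of "six of eight", and it is why the finding belongs in a hypothesis, not a headline.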

Mistake 3: Running Both Methods but Never Merging the Findings

Parallel execution without integration is just two separate studies wearing a trench coat. The value of mixed methods comes from the synthesis — the moment where qualitative context transforms quantitative patterns into strategy.

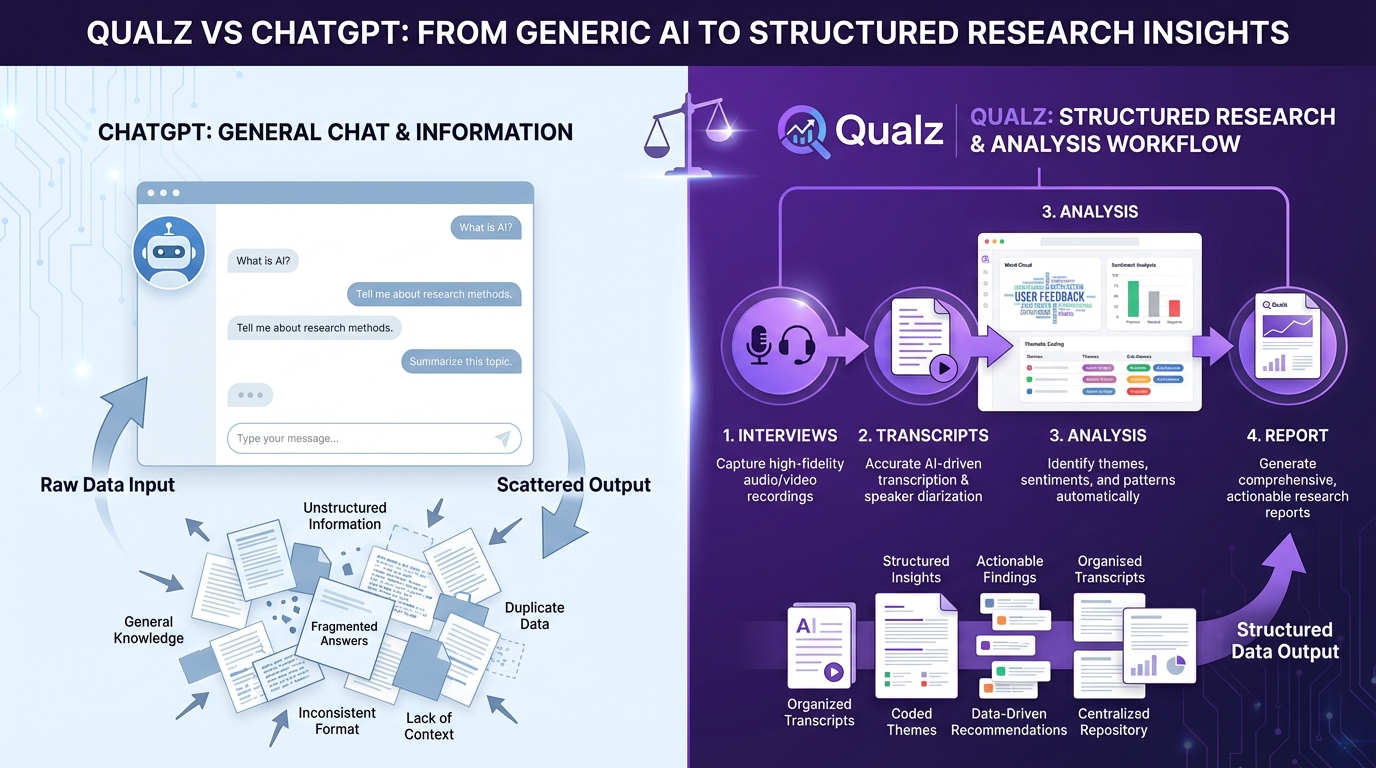

Mistake 4: Defaulting to Surveys When You Need Interviews

Surveys are not qualitative research. A survey with open-ended questions is still a survey. If you need depth, context, and the ability to follow unexpected threads, you need actual conversations — whether human-moderated or AI-assisted.

Operationalizing Mixed Methods in Agile Teams

The biggest objection to mixed-methods research in product teams isn't philosophical — it's logistical. "We don't have time for both." Here's how to make it work within sprint cycles.

The 70/20/10 Research Allocation

- 70% of research effort goes to lightweight, continuous methods (analytics, in-product surveys, automated feedback loops)

- 20% goes to focused qualitative sprints (interview batches, usability sessions) triggered by specific decisions

- 10% goes to deep mixed-methods studies for high-stakes strategic questions

This allocation ensures you're always learning without creating a research bottleneck. The insight-to-action gap closes when research cadence matches decision cadence.
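Turning the percentages into a sprint budget is simple arithmetic. The only wrinkle is rounding when hours don't divide evenly. This is a minimal sketch that assumes research effort is budgeted in whole hours. The bucket names and the largest-remainder rounding rule are illustrative choices, not part of the framework.

```python
# Percentages from the 70/20/10 split; the bucket names are illustrative labels.
ALLOCATION = {"continuous": 70, "qual_sprints": 20, "deep_mixed_methods": 10}


def allocate_hours(total_hours: int, allocation: dict[str, int] = ALLOCATION) -> dict[str, int]:
    """Split whole research hours by percentage so the parts always sum to the total."""
    if sum(allocation.values()) != 100:
        raise ValueError("percentages must sum to 100")
    # Integer arithmetic keeps the split exact: floor each share first.
    shares = {name: total_hours * pct // 100 for name, pct in allocation.items()}
    leftover = total_hours - sum(shares.values())
    # Hand any leftover hours to the buckets with the largest remainders.
    by_remainder = sorted(
        allocation, key=lambda name: total_hours * allocation[name] % 100, reverse=True
    )
    for name in by_remainder[:leftover]:
        shares[name] += 1
    return shares
```

With 40 research hours in a sprint, `allocate_hours(40)` yields 28 hours of continuous methods, 8 of focused qualitative sprints, and 4 of deep mixed-methods work.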

Practical Workflow for a Two-Week Sprint

Week 1:

- Days 1-2: Review existing quant data. Identify the pattern or question.

- Days 3-5: Run 5-8 qualitative interviews or usability sessions targeting the question.

Week 2:

- Days 1-2: Synthesize qualitative findings. Generate hypotheses.

- Days 3-4: Design and deploy a targeted survey, or run a targeted analytics query, to validate the hypotheses.

- Day 5: Merge findings into a decision brief.

This compressed cycle won't produce a doctoral thesis, but it will produce better decisions than either method alone.

Building the Infrastructure

Mixed-methods research requires shared infrastructure. At minimum:

- A unified research repository where qualitative and quantitative findings live side by side, tagged by product area, user segment, and research question

- Consistent participant panels that can be tapped for both surveys and interviews, reducing recruitment overhead

- Shared analysis frameworks so that qualitative themes map cleanly to quantitative metrics (e.g., a qualitative theme of "trust anxiety" maps to a quantitative metric of "time to complete purchase")
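A unified repository mostly comes down to one tagging scheme shared by both kinds of findings. This is a minimal in-memory sketch of that idea. The field names, the `THEME_TO_METRIC` table, and the classes are hypothetical, and a real repository would sit on a database or a dedicated research tool.

```python
from dataclasses import dataclass
from typing import Literal, Optional

# Hypothetical theme-to-metric mapping, following the "trust anxiety" example.
THEME_TO_METRIC = {"trust anxiety": "time_to_complete_purchase"}


@dataclass(frozen=True)
class Finding:
    summary: str
    method: Literal["qual", "quant"]
    product_area: str
    segment: str
    question: str
    theme: Optional[str] = None  # qualitative theme, if the finding has one


class ResearchRepository:
    """In-memory store where qual and quant findings share the same tags."""

    def __init__(self) -> None:
        self._findings: list[Finding] = []

    def add(self, finding: Finding) -> None:
        self._findings.append(finding)

    def query(self, **tags: str) -> list[Finding]:
        """Findings whose fields match every given tag; unknown field names raise AttributeError."""
        return [
            f for f in self._findings
            if all(getattr(f, key) == value for key, value in tags.items())
        ]

    def linked_metric(self, finding: Finding) -> Optional[str]:
        """Quantitative metric that tracks a finding's qualitative theme, if one is mapped."""
        return THEME_TO_METRIC.get(finding.theme) if finding.theme else None
```

Querying by `question` returns interview themes and survey results side by side, which is the precondition for synthesis. `linked_metric` makes the theme-to-metric mapping explicit rather than leaving it as tribal knowledge.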

For teams building serious research operations, the infrastructure question extends to how AI and automation can compress the cycle further. The principles behind production-grade AI architecture for enterprise systems increasingly apply to research operations as well: orchestrating multiple data sources, models, and analysis methods into a coherent pipeline.

The Strategic Case for Integration

The teams that get mixed methods right don't just make better individual decisions — they build a compounding knowledge advantage. Each study informs the next. Qualitative insights sharpen quantitative instruments. Quantitative findings focus qualitative inquiry. Over time, the team develops an increasingly accurate model of their users that no single method could produce.

As the great decoupling between headcount and output accelerates, the ability to make high-conviction decisions from integrated evidence becomes a structural competitive advantage.

The framework is simple. The discipline is hard. Start with the decision you need to make, match the method to the question, and never let one data source tell the whole story.