Every nonprofit leader knows the tension. Funders want evidence of impact. Beneficiaries deserve to be heard. Program teams need feedback loops that actually inform decisions. And the budget for all of this is somewhere between shoestring and nonexistent.

Program evaluation is not optional. It is the mechanism through which organizations learn what works, demonstrate accountability to donors, and make the case for continued or expanded funding. But the way most nonprofits approach evaluation today -- paper surveys with low response rates, occasional focus groups, retrospective interviews conducted months after a program ends -- leaves enormous gaps in understanding.

The result is an evaluation gap that costs the sector far more than the research itself would. Programs continue without course correction. Grant reports rely on output metrics because outcome data is too expensive to collect. And the voices of the people programs are designed to serve get reduced to a handful of quotes cherry-picked from a convenience sample.

AI-moderated interviews are closing this gap. Not by replacing human judgment in evaluation design, but by making it economically feasible to collect rich qualitative data from the people who matter most -- at scale, with rigor, and with the ethical safeguards that working with vulnerable populations demands.

The Evaluation Gap in the Nonprofit Sector

The scale of the problem is staggering. Foundations collectively distribute billions in grants annually, yet most grantees lack the internal capacity to conduct meaningful evaluation. A 2023 survey by the Center for Effective Philanthropy found that the majority of grantees consider evaluation important but struggle to fund it adequately. The organizations that need evaluation most -- those serving marginalized communities, piloting new interventions, operating in resource-constrained environments -- are precisely the ones that can afford it least.

Traditional evaluation methods compound the problem. A single round of in-depth qualitative interviews with twenty beneficiaries -- recruiting, scheduling, conducting, transcribing, and analyzing -- can easily cost $15,000 to $25,000 when using external evaluators. Focus groups add facility costs, moderator fees, and the logistical nightmare of coordinating schedules across populations that often lack reliable transportation or childcare.
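To make those figures concrete, here is a small back-of-the-envelope calculation of the per-interview cost they imply. It uses only the numbers quoted above; the function name is ours, and the result is an average that ignores how costs split between recruiting, transcription, and analysis.

```python
# Per-interview cost implied by the figures quoted above.
INTERVIEWS = 20
LOW_TOTAL, HIGH_TOTAL = 15_000, 25_000  # USD, taken from the text


def per_interview_cost(total_usd: float, interviews: int) -> float:
    """Return the average cost of one interview in USD."""
    if interviews <= 0:
        raise ValueError("interviews must be positive")
    return total_usd / interviews


low = per_interview_cost(LOW_TOTAL, INTERVIEWS)
high = per_interview_cost(HIGH_TOTAL, INTERVIEWS)
print(f"${low:,.0f} to ${high:,.0f} per interview")
# prints: $750 to $1,250 per interview
```

At that rate, even a modest increase in sample size quickly exceeds what a small program budget can absorb.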

Even organizations that manage to fund evaluation face a timing problem. By the time data is collected, analyzed, and synthesized into a report, the program cycle has moved on. Evaluation findings arrive too late to influence the decisions they were meant to inform.

This is not a problem of will. Program directors and executive directors understand the value of evaluation. The problem is that the tools and methods available to them were designed for research budgets that most nonprofits simply do not have.

Why Qualitative Data Matters for Impact Measurement

Before discussing the solution, it is worth understanding why qualitative data specifically -- not just more surveys -- matters for nonprofit evaluation.

Grant reporting has historically centered on quantitative outputs. How many people were served? How many workshops were delivered? What percentage completed the program? These numbers satisfy basic accountability requirements, but they tell funders almost nothing about whether the program actually changed anything in participants' lives.

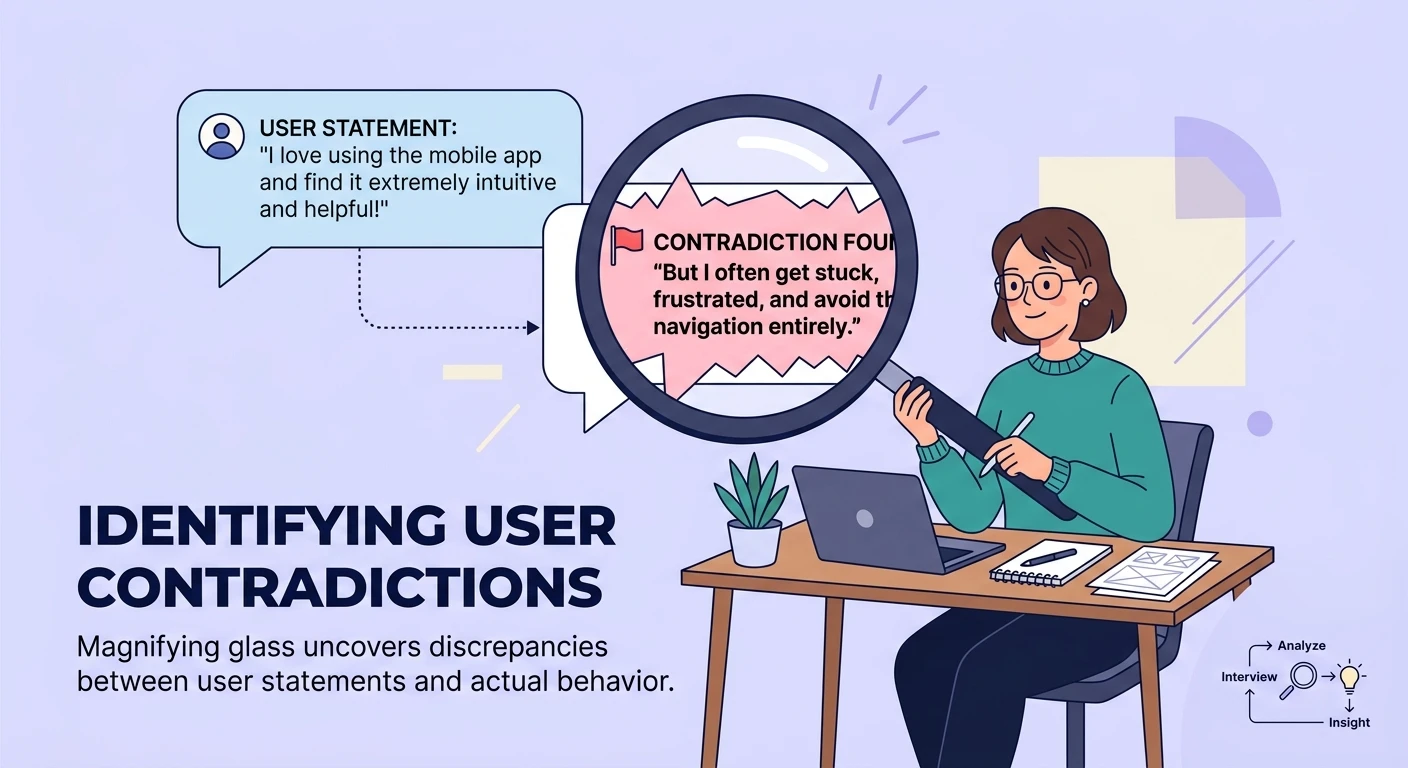

Qualitative data answers the questions that matter: How did the program change participants' daily experience? What barriers did they encounter? Which components were most valuable and which felt irrelevant? What happened after the program ended? Did the effects persist?

This is exactly what theory of change frameworks demand -- evidence that connects activities to outcomes through the mechanisms the program was designed to activate. Quantitative metrics can tell you that 80% of participants completed a job training program. Qualitative data tells you that participants who succeeded had access to peer support networks that the program had not explicitly designed but that emerged organically, and that participants who dropped out did so primarily because of scheduling conflicts with caregiving responsibilities, not because of disengagement.

That qualitative insight is what allows program teams to redesign the next cohort intelligently. And it is what allows funders to understand not just whether a program worked, but why -- and whether the model is transferable to other contexts.

The challenge has always been collecting this data at sufficient scale and depth to be credible. A handful of interviews with self-selected participants does not constitute rigorous evidence. But the cost of conducting dozens or hundreds of in-depth interviews has been prohibitive. This is where AI-moderated approaches fundamentally change the calculus.

How AI Interviews Scale Beneficiary Voice Capture

AI-moderated interviews work by conducting structured qualitative conversations with participants asynchronously. Participants engage on their own schedule, from their own devices, in their preferred language. The AI moderator follows a discussion guide designed by the evaluation team, asks follow-up questions based on participant responses, and probes for depth and specificity in the same way a skilled human interviewer would.

The economics change dramatically. Where a traditional evaluation might budget for fifteen to twenty interviews because of cost constraints, an AI-moderated approach can feasibly conduct fifty, a hundred, or more -- at a fraction of the per-interview cost. This is not about replacing depth with volume. Each individual interview still captures rich, nuanced, personal narrative. But you can now achieve both breadth and depth in a way that traditional methods could not support.

For nonprofits, this has several immediate implications.

Representative voice capture becomes feasible. Instead of hearing from the twelve beneficiaries who were easiest to reach or most willing to participate, you can systematically include participants across program sites, demographics, and experience levels. The voices that are hardest to capture in traditional evaluation -- those with transportation barriers, irregular schedules, language differences, or distrust of formal research -- become accessible through asynchronous, device-based participation.

Longitudinal feedback becomes affordable. Programs can check in with beneficiaries at multiple points -- mid-program, at completion, and months after -- without the compounding cost of scheduling and conducting repeat interviews. This transforms evaluation from a snapshot into a continuous feedback loop.

Analysis scales with collection. AI-powered thematic analysis can process dozens of interview transcripts in hours rather than weeks, identifying patterns, contradictions, and emergent themes across the full dataset. Program teams get actionable findings while the information is still timely enough to influence decisions.

Staff time is preserved. In many nonprofits, evaluation falls to program staff who are already stretched thin. AI-moderated interviews remove the scheduling, conducting, transcribing, and initial coding burden, freeing staff to focus on what humans do best: interpreting findings, designing program improvements, and building relationships with the communities they serve.
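As an illustration of the first implication above, representative voice capture, here is a minimal sketch of how an evaluation team might choose whom to invite so that every program site is heard from. The roster, the field names, and the `stratified_invites` helper are all hypothetical. The approach shown is simple equal allocation per site with a cap, not a statement of how any particular platform selects participants.

```python
import random
from collections import defaultdict


def stratified_invites(participants, key, per_group, seed=0):
    """Pick up to `per_group` participants from each stratum.

    participants: list of dicts; key: field to stratify on (e.g. "site").
    """
    rng = random.Random(seed)  # fixed seed so the draw is reproducible
    groups = defaultdict(list)
    for person in participants:
        groups[person[key]].append(person)

    invited = []
    for name in sorted(groups):
        members = groups[name]
        k = min(per_group, len(members))  # small sites contribute everyone
        invited.extend(rng.sample(members, k))
    return invited


# Hypothetical roster: three sites with uneven enrollment.
roster = (
    [{"id": f"A{i}", "site": "north"} for i in range(30)]
    + [{"id": f"B{i}", "site": "south"} for i in range(8)]
    + [{"id": f"C{i}", "site": "east"} for i in range(3)]
)

invited = stratified_invites(roster, key="site", per_group=5)
print(len(invited))  # 5 from north, 5 from south, all 3 from east
```

Capping each site at the same number keeps a large site from drowning out a small one. A team that wants results proportional to enrollment would allocate invitations differently.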

Privacy and Ethics: Getting It Right With Vulnerable Populations

Any conversation about AI and vulnerable populations requires serious engagement with ethics. Nonprofits serve people who may be experiencing housing instability, health crises, immigration uncertainty, domestic violence, substance use disorders, or poverty. The stakes of mishandling their data are not abstract.

Several principles should guide the use of AI interviews in nonprofit evaluation.

Informed consent must be genuine, not performative. Participants need to understand, in plain language and in their own language, that they are speaking with an AI system, how their data will be used, who will see it, and how it will be protected. Consent processes should be designed for the literacy level and context of the population, not copied from academic IRB templates. The principles of crisis-safe AI research with vulnerable populations apply directly here and offer deeper guidance on working with sensitive populations.

Anonymization must be robust. Qualitative data from small programs can identify participants even without names attached. AI systems should be configured to redact personally identifiable information automatically, and evaluation teams should review outputs for indirect identifiers before sharing with funders or publishing results.

Participants must have control. The ability to skip questions, pause, return later, or withdraw entirely is not optional. It is especially important when working with populations that may feel pressure to participate in order to maintain access to services. The asynchronous nature of AI interviews actually supports autonomy here -- participants are not facing a live interviewer whose social presence creates implicit pressure to continue.

Data governance must be clear. Who owns the data? Where is it stored? How long is it retained? These questions need answers before the first interview launches, not after. Organizations should ensure their data residency and compliance requirements are met, particularly when working across jurisdictions or with populations whose data carries additional legal protections.

The AI should know its limits. If a participant discloses an active crisis -- suicidal ideation, abuse, immediate safety concerns -- the system must have protocols for appropriate escalation. This is not a feature request; it is a requirement for ethical deployment in contexts where participants may be experiencing trauma.
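To ground the anonymization principle above, here is a minimal sketch of automatic redaction using regular expressions. The patterns, the `redact` function, and the sample sentence are illustrative assumptions, not a description of any product's redaction pipeline. Pattern matching of this kind catches only direct identifiers in predictable formats, which is why the human review for indirect identifiers still matters.

```python
import re

# Illustrative patterns only; real transcripts need broader coverage
# (addresses, dates of birth, case numbers) plus human review.
PATTERNS = {
    "EMAIL": re.compile(r"\b[\w.+-]+@[\w-]+(?:\.[\w-]+)+\b"),
    "PHONE": re.compile(r"\b\d{3}[-.\s]\d{3}[-.\s]\d{4}\b"),
}


def redact(text, known_names=()):
    """Replace emails, phone numbers, and listed names with placeholders."""
    for label, pattern in PATTERNS.items():
        text = pattern.sub(f"[{label}]", text)
    for name in known_names:
        # Names come from the program roster; match them case-insensitively.
        text = re.sub(rf"\b{re.escape(name)}\b", "[NAME]", text, flags=re.IGNORECASE)
    return text


sample = "I'm Maria Lopez, call 555-201-7788 or write maria.lopez@example.org."
print(redact(sample, known_names=["Maria Lopez"]))
# prints: I'm [NAME], call [PHONE] or write [EMAIL].
```

A sentence such as "I am the only night-shift nurse in the program" would pass through this function untouched, yet could identify the speaker in a small cohort.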

Real Use Cases Across the Sector

The applications span the full range of nonprofit and foundation work.

Program feedback and improvement. A workforce development nonprofit conducts AI interviews with program graduates three months after completion. The data reveals that the most valued program component was not the technical training -- which the program emphasized in grant applications -- but the peer cohort experience. This insight reshapes how the program is designed, staffed, and pitched to funders.

Needs assessment. A community foundation preparing a strategic plan uses AI interviews to hear from 200 community members across its service area. The scale makes it possible to identify needs that differ significantly across neighborhoods -- something a focus group with twenty participants would have missed entirely.

Community voice in grantmaking. A foundation incorporates AI-moderated stakeholder engagement into its grantmaking process, interviewing community members about their priorities before issuing an RFP. Grant decisions are informed not just by applicant proposals but by the expressed needs of the people those grants are meant to serve.

Funder learning. A foundation evaluating its own grantmaking strategy conducts AI interviews with current and former grantees. The anonymous feedback mechanism produces candid responses about application burden, reporting requirements, and the foundation's responsiveness -- feedback that grantees rarely share directly for fear of jeopardizing future funding.

Multi-site evaluation. A national nonprofit operating in twelve cities uses a standardized AI interview protocol to collect beneficiary feedback across all sites simultaneously. For the first time, the organization can compare experiences across sites with comparable data, identifying which local adaptations are working and which are struggling. The qualitative evidence base built through this approach supports both internal learning and external reporting.
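For the multi-site case, the comparison could be as simple as tallying coded themes per site once transcripts have been analyzed. The sketch below assumes the thematic coding has already produced (site, theme) pairs; the city names, theme labels, and helper functions are hypothetical.

```python
from collections import Counter, defaultdict

# Hypothetical coded excerpts: one (site, theme) pair per coded passage.
coded = [
    ("Denver", "peer support"), ("Denver", "scheduling conflict"),
    ("Denver", "peer support"), ("Atlanta", "scheduling conflict"),
    ("Atlanta", "scheduling conflict"), ("Atlanta", "transportation"),
]


def themes_by_site(pairs):
    """Return {site: Counter of theme mentions}."""
    table = defaultdict(Counter)
    for site, theme in pairs:
        table[site][theme] += 1
    return dict(table)


def theme_share(table, theme):
    """Share of each site's coded excerpts that mention `theme`."""
    return {
        site: counts[theme] / sum(counts.values())
        for site, counts in table.items()
    }


table = themes_by_site(coded)
shares = theme_share(table, "scheduling conflict")
for site, share in shares.items():
    print(f"{site}: {share:.0%} of coded excerpts")
```

Shares rather than raw counts make sites with different numbers of interviews comparable, though the counts still deserve a look when a site has only a few transcripts.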

From Evaluation Burden to Evaluation Culture

The deeper shift that AI-moderated interviews enable is cultural, not just operational. When evaluation is expensive and time-consuming, it becomes something organizations do to satisfy funders -- a compliance exercise performed at the end of a grant cycle. When evaluation is accessible and timely, it becomes something organizations do for themselves -- a learning practice embedded in how programs operate.

This is the difference between organizations that report on what happened and organizations that systematically learn from what happened. The cost efficiency of AI-powered approaches makes the latter possible for organizations that previously could not afford the former.

For foundation program officers evaluating grant applications, the question is no longer whether an organization has evaluation capacity. It is whether the organization is equipped to capture the voices of the people it serves in a way that is rigorous, ethical, and actionable. AI-moderated interviews lower the barrier to answering that question affirmatively.

For nonprofit leaders making the case for funding, qualitative impact data from beneficiary voices is more compelling than any logic model or output count. When a funder reads fifty beneficiary accounts describing how a program changed their trajectory -- in their own words, with the nuance and specificity that only qualitative data provides -- the case for continued investment makes itself.

Making the Shift

If your organization is ready to explore how AI-moderated interviews can strengthen your evaluation practice, start by identifying one program cycle where you currently rely on post-hoc surveys or anecdotal feedback. Design a short AI interview protocol -- five to seven core questions with follow-up probing -- and pilot it with a subset of participants. Compare the depth and richness of what you learn against your existing methods.

The gap will be immediately apparent.
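A pilot protocol of the kind described above can be written down as plain data before it goes into any tool. The sketch below is one possible shape, with a check for the five-to-seven-question range and for the presence of follow-up probes. The class names, fields, and sample questions are assumptions for illustration, not a Qualz.ai format.

```python
from dataclasses import dataclass, field


@dataclass
class Question:
    prompt: str
    probes: list[str] = field(default_factory=list)  # follow-ups the moderator may ask


@dataclass
class Protocol:
    program: str
    questions: list[Question]

    def validate(self) -> list[str]:
        """Return a list of problems; an empty list means the guide looks usable."""
        problems = []
        if not 5 <= len(self.questions) <= 7:
            problems.append(f"expected 5-7 core questions, got {len(self.questions)}")
        for number, question in enumerate(self.questions, start=1):
            if not question.probes:
                problems.append(f"question {number} has no follow-up probe")
        return problems


# Hypothetical pilot guide for a job training program.
pilot = Protocol(
    program="Job training, spring cohort",
    questions=[
        Question("What led you to join the program?", ["What else did you consider?"]),
        Question("Which part was most useful?", ["Can you give a specific example?"]),
        Question("Which part felt least relevant?", ["What would you change about it?"]),
        Question("What got in the way of taking part?", ["How did you work around that?"]),
        Question("What has changed for you since?", ["What do you think caused that?"]),
    ],
)

print(pilot.validate() or "protocol ready for pilot")
```

Keeping the guide in a reviewable form like this makes it easier for program staff and community advisors to comment on wording before any participant sees it.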

Book an information session to see how Qualz.ai can help your organization build evaluation capacity that matches your mission -- capturing the voices that matter, at the scale your programs deserve.