The Speed vs. Rigor False Dichotomy

Every qualitative researcher I talk to has the same concern about AI-assisted analysis: "But will it be rigorous?"

The question reveals an assumption that speed and rigor are opposed -- that faster analysis necessarily means shallower analysis. In manual thematic analysis, this is often true. A researcher under deadline pressure skims transcripts, codes superficially, and produces themes that are more like topic labels than genuine analytical constructs. Speed degrades quality.

But AI thematic analysis does not trade off the same way. An AI system reading 100 transcripts does not get tired at transcript 47. It does not unconsciously favor themes identified in the first few transcripts and force-fit later data into those categories. It does not lose concentration during a dense, technical passage. The failure modes of AI analysis are real, but they are different from human failure modes -- and in many cases, more manageable.

The actual risk with AI thematic analysis is not that it is less rigorous than manual coding. The risk is that teams implement it badly -- treating it as a magic button rather than a tool that requires proper configuration, validation, and human oversight. Done right, AI thematic analysis is both faster and more rigorous than the typical manual process. Here is how.

Understanding What AI Thematic Analysis Actually Does

Let me be precise about what we mean by AI thematic analysis, because the term covers a wide range of implementations with very different quality levels.

At the lowest level, you have keyword extraction and topic modeling -- statistical approaches that identify frequently co-occurring terms and cluster documents by lexical similarity. This is not thematic analysis. It is text mining with a misleading label. If your tool just outputs word clouds and topic clusters, you are not doing thematic analysis.

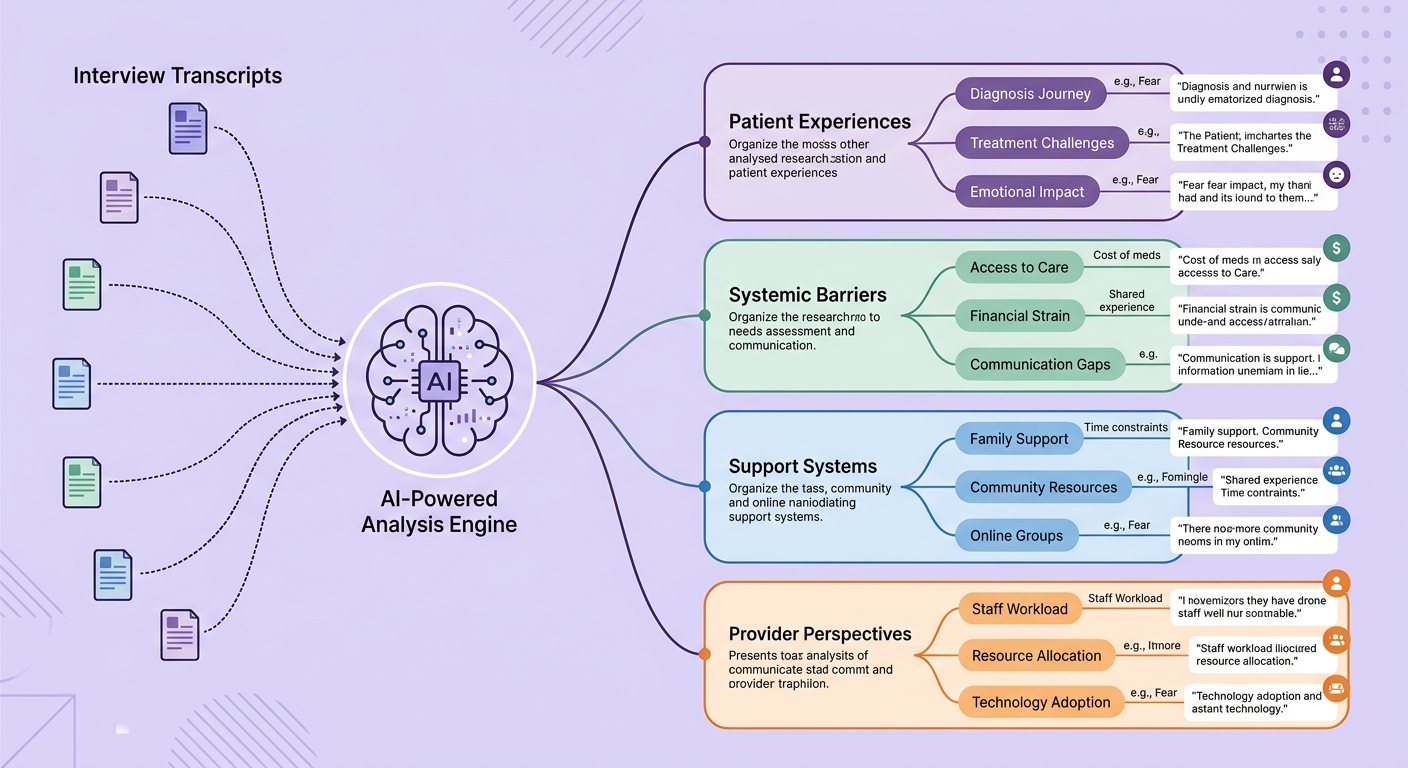

Genuine AI thematic analysis, as implemented in modern qualitative research platforms, involves several distinct operations:

Semantic coding. The AI reads each segment of text and assigns codes based on meaning, not just keywords. "I kept clicking the export button but nothing happened" and "The download feature seems completely non-functional" get the same code even though they share almost no words. This requires language understanding at a level that was simply not possible before large language models.

Theme development. Codes are grouped into higher-order themes through iterative analysis. The AI identifies patterns across codes, proposes theme structures, and can articulate what each theme captures and why certain codes belong together. This mirrors the process a researcher follows in manual affinity mapping, but at a speed and scale that changes what is practical.

Theme refinement. Initial themes are reviewed, split, merged, and redefined based on how well they fit the data. The AI can assess theme coherence -- whether all data within a theme actually belongs together -- and flag themes that may need restructuring.

Evidence mapping. Every theme is linked back to the specific data segments that support it, creating a complete audit trail from insight to evidence. This is where AI thematic analysis often surpasses manual processes -- the mapping is exhaustive and consistent, not selective.
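To make the evidence-mapping idea concrete, here is a minimal sketch of the data structure behind an audit trail: a theme holds codes, and each code holds the segments that support it. The class names, participant IDs, line numbers, and the third quote are hypothetical illustrations of mine, not the schema of any particular platform.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Segment:
    transcript_id: str
    line: int
    text: str


@dataclass
class Theme:
    name: str
    # code label -> segments that carry that code
    codes: dict[str, list[Segment]] = field(default_factory=dict)

    def add(self, code: str, segment: Segment) -> None:
        self.codes.setdefault(code, []).append(segment)

    def evidence(self) -> list[tuple[str, Segment]]:
        """Every (code, segment) pair supporting this theme, in insertion order."""
        return [(code, seg) for code, segs in self.codes.items() for seg in segs]


# Hypothetical example data; IDs and line numbers are made up.
theme = Theme("Export is unreliable")
theme.add("export_failure", Segment("P03", 112, "I kept clicking the export button but nothing happened"))
theme.add("export_failure", Segment("P11", 48, "The download feature seems completely non-functional"))
theme.add("workaround", Segment("P11", 51, "So I just take screenshots instead"))

for code, seg in theme.evidence():
    print(f"{theme.name} <- {code} <- {seg.transcript_id}:{seg.line}")
```

Because every segment is stored rather than a hand-picked few, tracing a theme back to its sources is a lookup, not a search through transcripts.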

The Rigor Framework for AI Thematic Analysis

Rigor in qualitative research is not a single thing. It is a set of criteria, and AI performs differently on each.

Credibility (truth value). Do the themes accurately represent participant experiences? This is where human oversight remains essential. The AI produces candidate themes and supporting evidence. A qualified researcher reviews them, checks whether the interpretations ring true, and validates against their contextual knowledge of the research domain. The AI accelerates the mechanical work; the human provides the interpretive judgment.

Transferability (applicability). Are findings described with enough context that readers can assess applicability to their own situation? AI actually helps here by providing more comprehensive evidence. Instead of selecting two or three illustrative quotes per theme, the AI can surface every relevant segment, giving readers a fuller picture of the data supporting each finding.

Dependability (consistency). Would the same data produce the same themes if analyzed again? This is where AI dramatically outperforms manual analysis. Run the same transcripts through the same AI analysis pipeline twice, with the same configuration, and you get highly consistent results. Run them through two different human analysts and you get inter-rater reliability numbers that would make most researchers uncomfortable. AI does not solve the philosophical question of whether themes are "discovered" or "constructed" -- but it removes most of the inconsistency that plagues manual coding.

Confirmability (neutrality). Are findings grounded in data rather than researcher assumptions? The complete audit trail that AI analysis generates -- every code linked to every supporting segment -- makes confirmability assessable in a way that is practically impossible with manual analysis at scale.

The honest assessment: AI thematic analysis is stronger on dependability and confirmability, at least comparable on transferability, and requires human partnership on credibility. That is not a limitation -- it is a productive division of labor.
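The dependability criterion above is something you can measure rather than assume. The sketch below compares two runs of the same pipeline by averaging, per segment, the Jaccard overlap of the code sets each run assigned. It assumes both runs draw on the same fixed codebook so that labels are comparable; the function name and sample data are hypothetical.

```python
def run_consistency(run_a: dict[str, set[str]], run_b: dict[str, set[str]]) -> float:
    """Mean Jaccard overlap of the code sets assigned to each segment in two runs."""
    segment_ids = run_a.keys() | run_b.keys()
    if not segment_ids:
        raise ValueError("no segments to compare")
    total = 0.0
    for seg_id in segment_ids:
        a = run_a.get(seg_id, set())
        b = run_b.get(seg_id, set())
        union = a | b
        # Two empty code sets count as full agreement.
        total += len(a & b) / len(union) if union else 1.0
    return total / len(segment_ids)


# Hypothetical output of two runs over the same three segments.
first = {"s1": {"export_failure"}, "s2": {"pricing", "trust"}, "s3": {"onboarding"}}
second = {"s1": {"export_failure"}, "s2": {"pricing"}, "s3": {"onboarding"}}
score = run_consistency(first, second)
print(f"run-to-run consistency: {score:.2f}")
```

Here two segments match exactly and one overlaps by half, so the score is about 0.83. If your analysis is inductive and the AI invents its own labels on each run, you would need to align labels before a comparison like this means anything.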

Setting Up AI Thematic Analysis for Maximum Rigor

Here is the practical workflow that gets you both speed and rigor.

Step 1: Define your analytical framework upfront. Before you run any AI analysis, document your research questions, your analytic approach (inductive or deductive?) and the epistemological position behind it, and your initial sensitizing concepts. This is no different from good manual practice -- but it matters even more with AI because the framework shapes how you configure and evaluate the AI output.
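One way to make Step 1 stick is to write the framework down as a small, validated record that is saved alongside the analysis output. This is only a sketch: the field names and the example question are my own assumptions, not a format any tool requires.

```python
import json
from dataclasses import asdict, dataclass


@dataclass(frozen=True)
class AnalysisFramework:
    research_questions: tuple[str, ...]
    approach: str  # "inductive" or "deductive"
    sensitizing_concepts: tuple[str, ...] = ()

    def __post_init__(self) -> None:
        if self.approach not in {"inductive", "deductive"}:
            raise ValueError(f"unknown approach: {self.approach!r}")
        if not self.research_questions:
            raise ValueError("at least one research question is required")


# Hypothetical study definition.
framework = AnalysisFramework(
    research_questions=("Why do users abandon the export flow?",),
    approach="inductive",
    sensitizing_concepts=("trust", "workarounds"),
)

# Serialize so the framework can be stored next to the coded data.
framework_json = json.dumps(asdict(framework), indent=2)
print(framework_json)
```

Freezing the record means the framework cannot quietly drift mid-study; a change requires creating, and therefore documenting, a new version.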

Step 2: Run initial AI coding on a subset. Take 10-15 percent of your transcripts and run the AI analysis. Review the codes generated. Are they at the right level of granularity? Are they capturing meaning or just paraphrasing? Adjust the analysis parameters -- code granularity, the level of interpretation versus description, domain-specific terminology -- based on this review.

This calibration step is critical. It is the equivalent of training a human coder, and skipping it is the number one reason AI thematic analysis produces mediocre results. As we explored in our guide to qualitative data analysis methodology, the analytical approach must match the research objective.
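Picking the calibration subset is worth doing reproducibly, so you can document exactly which transcripts shaped your configuration. A minimal sketch, assuming transcripts are identified by simple string IDs (the IDs, seed, and function name are hypothetical):

```python
import math
import random


def calibration_subset(transcript_ids: list[str], fraction: float = 0.15, seed: int = 7) -> list[str]:
    """Pick a reproducible random subset of transcripts for the calibration pass."""
    if not 0 < fraction <= 1:
        raise ValueError("fraction must be in (0, 1]")
    if not transcript_ids:
        return []
    # Round up so small studies still get at least one transcript.
    k = max(1, math.ceil(len(transcript_ids) * fraction))
    rng = random.Random(seed)  # fixed seed so the choice can be documented and repeated
    return sorted(rng.sample(transcript_ids, k))


ids = [f"T{n:02d}" for n in range(1, 41)]  # 40 hypothetical transcript IDs
subset = calibration_subset(ids, fraction=0.15)
print(len(subset), "transcripts selected for calibration")
```

For a 40-interview study, 15 percent comes to six transcripts. Pure random selection is the simplest choice; if your participants fall into distinct segments, you may prefer to pick the subset by hand so each segment is represented.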

Step 3: Full analysis with human checkpoints. Run the AI on the complete dataset, but build in review gates. After coding is complete, a researcher reviews a random sample (20-30 percent) of coded segments. After themes are generated, the researcher evaluates each theme for coherence, distinctiveness, and analytical depth. This is not reviewing every code -- it is strategic quality assurance.
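For the review gate in Step 3, a plain random sample can miss rare codes entirely. The sketch below is a variation I find useful rather than the only way to do it: it samples per code (stratified), so every code has at least one segment in the review pile. The function name, seed, and data are hypothetical.

```python
import math
import random
from collections import defaultdict


def review_sample(coded: list[tuple[str, str]], fraction: float = 0.25, seed: int = 11) -> list[tuple[str, str]]:
    """Sample (segment_id, code) pairs for review, stratified by code.

    Each code contributes ceil(fraction * n) of its n segments, so rare codes
    still get at least one segment reviewed.
    """
    by_code: dict[str, list[str]] = defaultdict(list)
    for segment_id, code in coded:
        by_code[code].append(segment_id)

    rng = random.Random(seed)
    sample: list[tuple[str, str]] = []
    for code in sorted(by_code):  # sorted for a stable, repeatable order
        segment_ids = by_code[code]
        k = math.ceil(len(segment_ids) * fraction)
        sample.extend((seg, code) for seg in rng.sample(segment_ids, k))
    return sample


# Hypothetical coding output: one common code and one rare code.
coded = [(f"s{i}", "export_failure") for i in range(20)] + [("s20", "pricing"), ("s21", "pricing")]
to_review = review_sample(coded, fraction=0.25)
print(len(to_review), "coded segments queued for review")
```

With 20 segments under one code and 2 under another, a 25 percent fraction queues five of the first and one of the second.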

Step 4: Negative case analysis. Ask the AI to identify data that contradicts or complicates each theme. This is one area where AI excels -- it does not have the confirmation bias that leads human analysts to unconsciously downplay disconfirming evidence. Negative cases either refine the themes or become findings in their own right.

Step 5: Thick description with AI support. When writing up findings, use the AI-generated evidence map to build rich, contextualized descriptions of each theme. The AI has already linked every relevant data segment, so you can select the most illustrative quotes while being confident you have not missed important evidence.

Common Pitfalls and How to Avoid Them

Pitfall 1: Treating AI output as final. The AI produces draft analysis, not finished findings. Teams that skip the human review step consistently produce themes that are descriptively accurate but analytically shallow -- they capture what people said but miss what it means.

Pitfall 2: Over-coding. AI systems can generate hundreds of codes from a modest dataset. More codes do not mean better analysis. Set granularity parameters that produce codes at the level of abstraction your research questions require. If you are doing a strategic study, you need fewer, broader codes. If you are doing a detailed UX analysis, you need more granular codes.
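A quick diagnostic for over-coding is to count how many segments support each code and list the thinly supported ones as candidates for merging. This is a sketch under my own assumptions: the threshold of three segments is arbitrary, and the code labels are hypothetical.

```python
from collections import Counter


def thin_codes(assignments: list[tuple[str, str]], min_segments: int = 3) -> list[str]:
    """Return codes supported by fewer than min_segments segments, rarest first."""
    counts = Counter(code for _segment_id, code in assignments)
    thin = [code for code, n in counts.items() if n < min_segments]
    return sorted(thin, key=lambda code: (counts[code], code))


# Hypothetical (segment_id, code) assignments.
assignments = [
    ("s1", "export_failure"), ("s2", "export_failure"), ("s3", "export_failure"),
    ("s4", "export_button_color"),
    ("s5", "pricing_confusion"), ("s6", "pricing_confusion"),
]
candidates = thin_codes(assignments)
print("merge candidates:", candidates)
```

A rare code is not automatically a bad code; a single vivid negative case can matter a great deal. Treat the list as a prompt for a researcher's judgment, not an automatic prune.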

Pitfall 3: Ignoring the audit trail. The audit trail is not just for methodological purists -- it is your quality assurance mechanism. When a stakeholder questions a finding, you should be able to trace from the theme to the supporting codes to the original data segments in minutes. This capability transforms how research findings are presented and received.

Pitfall 4: Skipping reflexivity. Even with AI analysis, the researcher's assumptions shape the output. Which transcripts you collected, what questions you asked, how you configured the analysis -- all of these embed researcher decisions. Document them. The combination of observational methods and interview analysis should inform your reflexive practice.

What the Benchmarks Show

I want to share some concrete numbers from teams that have made this transition.

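Time to insights: A 40-interview study that took a 3-person team 4 weeks to analyze manually now takes 1 researcher 3-4 days with AI thematic analysis. In researcher effort that is about 60 person-days versus 3-4 (assuming five-day weeks), so the 10x speed claim is conservative for most datasets; in elapsed time the gain is closer to 5-7x.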
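It helps to separate effort from elapsed time when quoting a speedup. The arithmetic for the study above, assuming a five-day working week (my assumption, since the figures are given in weeks and days):

```python
WORKING_DAYS_PER_WEEK = 5  # assumption: the study figures do not specify this

manual_person_days = 3 * 4 * WORKING_DAYS_PER_WEEK  # 3 people x 4 weeks
manual_elapsed_days = 4 * WORKING_DAYS_PER_WEEK     # working days on the calendar
ai_days_low, ai_days_high = 3, 4                    # 1 researcher, 3-4 days

# Each tuple is (slowest case, fastest case).
effort_speedup = (manual_person_days / ai_days_high, manual_person_days / ai_days_low)
elapsed_speedup = (manual_elapsed_days / ai_days_high, manual_elapsed_days / ai_days_low)

print(f"effort:  {effort_speedup[0]:.0f}x to {effort_speedup[1]:.0f}x")
print(f"elapsed: {elapsed_speedup[0]:.1f}x to {elapsed_speedup[1]:.1f}x")
```

This prints an effort speedup of 15x to 20x and an elapsed-time speedup of 5.0x to 6.7x. Which number matters depends on whether your constraint is headcount or the deadline.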

Inter-rater agreement: When comparing AI codes against expert human codes on the same dataset, agreement rates typically range from 78 to 85 percent. For comparison, agreement between two trained human coders on the same dataset typically ranges from 70 to 80 percent. By this measure, the AI agrees with expert coders at least as well as trained human coders agree with each other, and often better.
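If you want to run this comparison on your own data, raw percent agreement is the simplest measure, and Cohen's kappa is the usual chance-corrected companion. The sketch below assumes one code per segment and two aligned lists of labels; the labels themselves are hypothetical. The figures quoted above do not say whether they are raw or chance-corrected, so compare like with like.

```python
from collections import Counter


def percent_agreement(a: list[str], b: list[str]) -> float:
    """Share of segments where both coders assigned the same label."""
    if len(a) != len(b) or not a:
        raise ValueError("need two non-empty label lists of equal length")
    return sum(x == y for x, y in zip(a, b)) / len(a)


def cohens_kappa(a: list[str], b: list[str]) -> float:
    """Cohen's kappa: (p_o - p_e) / (1 - p_e), agreement corrected for chance."""
    p_o = percent_agreement(a, b)
    n = len(a)
    freq_a, freq_b = Counter(a), Counter(b)
    # Expected agreement if each coder labeled at random with their own label rates.
    p_e = sum(freq_a[label] * freq_b[label] for label in freq_a.keys() | freq_b.keys()) / (n * n)
    if p_e == 1.0:
        return 1.0  # both coders used a single identical label throughout
    return (p_o - p_e) / (1 - p_e)


# Hypothetical labels for the same ten segments.
human = ["bug", "bug", "pricing", "pricing", "bug", "other", "pricing", "bug", "other", "bug"]
ai = ["bug", "bug", "pricing", "bug", "bug", "other", "pricing", "bug", "pricing", "bug"]

agreement = percent_agreement(human, ai)
kappa = cohens_kappa(human, ai)
print(f"raw agreement: {agreement:.0%}, kappa: {kappa:.2f}")
```

On this toy data the coders agree on 8 of 10 segments, giving 80 percent raw agreement and a kappa of about 0.66. The gap between the two numbers is the agreement you would expect from chance alone, which is why kappa is the safer figure to report.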

Theme coverage: AI analysis consistently identifies 15-25 percent more themes from the same dataset compared to manual analysis. The additional themes are typically valid but subtle -- patterns that emerge only when you can hold the entire dataset in working memory simultaneously, which humans cannot do with large datasets.

Stakeholder confidence: This one is harder to quantify, but teams report that the comprehensive evidence mapping and consistent audit trails increase stakeholder trust in qualitative findings. When a product leader can see every data segment supporting a theme, they engage with the evidence differently than when presented with selected quotes.

As the practice of turning interview transcripts into product roadmaps becomes more systematic, the demand for this kind of rigorous, rapid analysis will only grow. The strategic value of enterprise AI systems extends naturally into qualitative research infrastructure.

The Path Forward

AI thematic analysis is not going to replace qualitative researchers. It is going to make them dramatically more productive and, paradoxically, more rigorous. The mechanical work of coding, pattern identification, and evidence mapping is where human analysts are most likely to make errors -- fatigue, confirmation bias, inconsistency. Automating that work frees researchers to focus on what humans do best: interpretation, contextualization, and the creative leap from data to insight.

The organizations that adopt this approach will not just analyze faster. They will analyze more -- tackling research questions that were previously impractical because the data volume exceeded what manual methods could handle. That expansion of what is researchable, more than the speed improvement alone, is the real transformation.

If you are a research leader considering AI thematic analysis, start small but start properly. Invest in the calibration step. Build in human review gates. Demand audit trails. The speed will take care of itself. The rigor requires intention.