The Most Expensive Person in Your Product Organization

Every product team has one. The senior leader who has "been in the industry for twenty years" and therefore "knows what users want." The VP who reviews mockups and says "users would never do that" without citing a single study. The founder who overrides research findings because the data "does not match my experience."

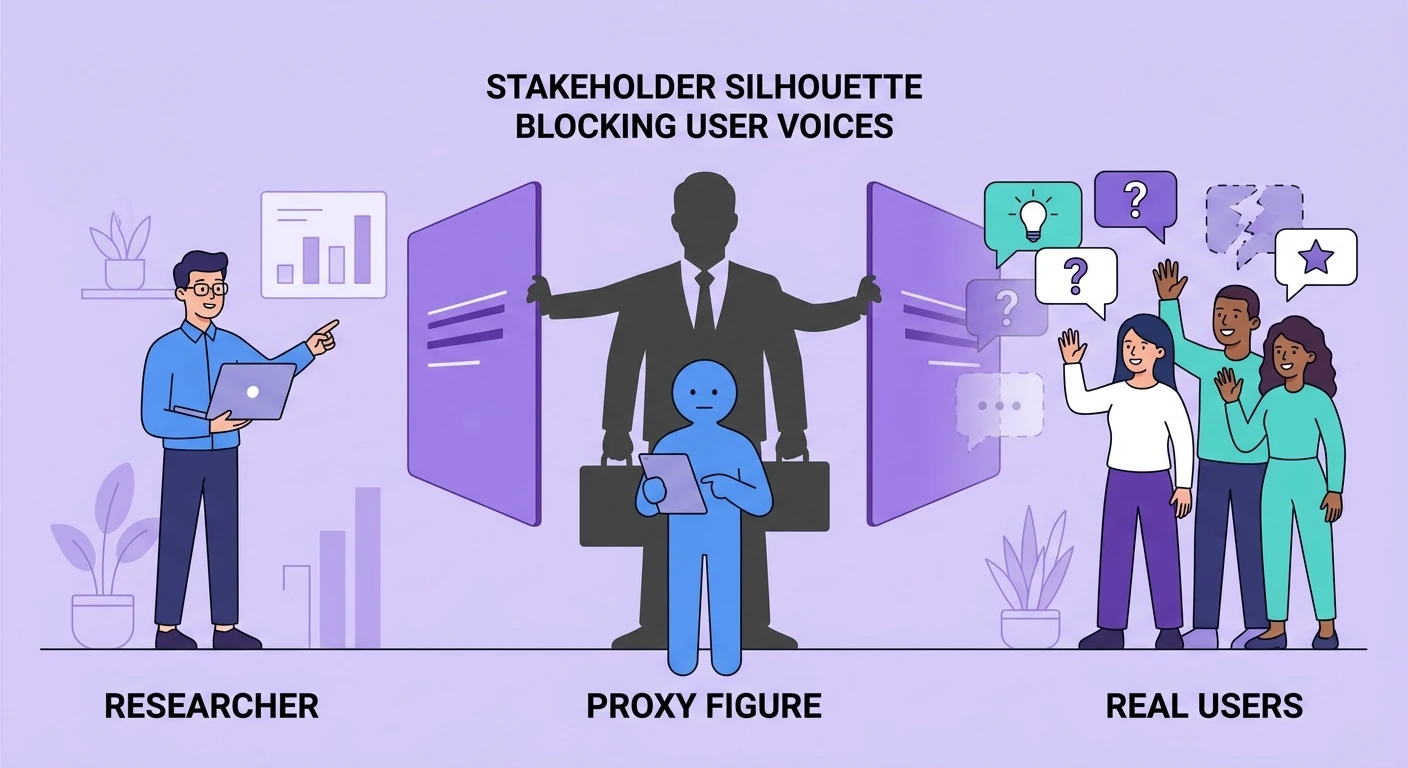

This is the proxy participant -- a stakeholder who substitutes their own judgment, preferences, and assumptions for actual user evidence. And they are the most expensive person in your product organization, not because of their salary, but because of the compounding cost of the decisions they distort.

The proxy participant problem is not about bad intentions. These leaders often have genuine domain expertise. The problem is structural: when one person's intuition consistently overrides systematic evidence, the organization loses its ability to learn from users. Every product decision filtered through a proxy participant is a decision made on a sample size of one, with all the cognitive biases, recency effects, and blind spots that entails.

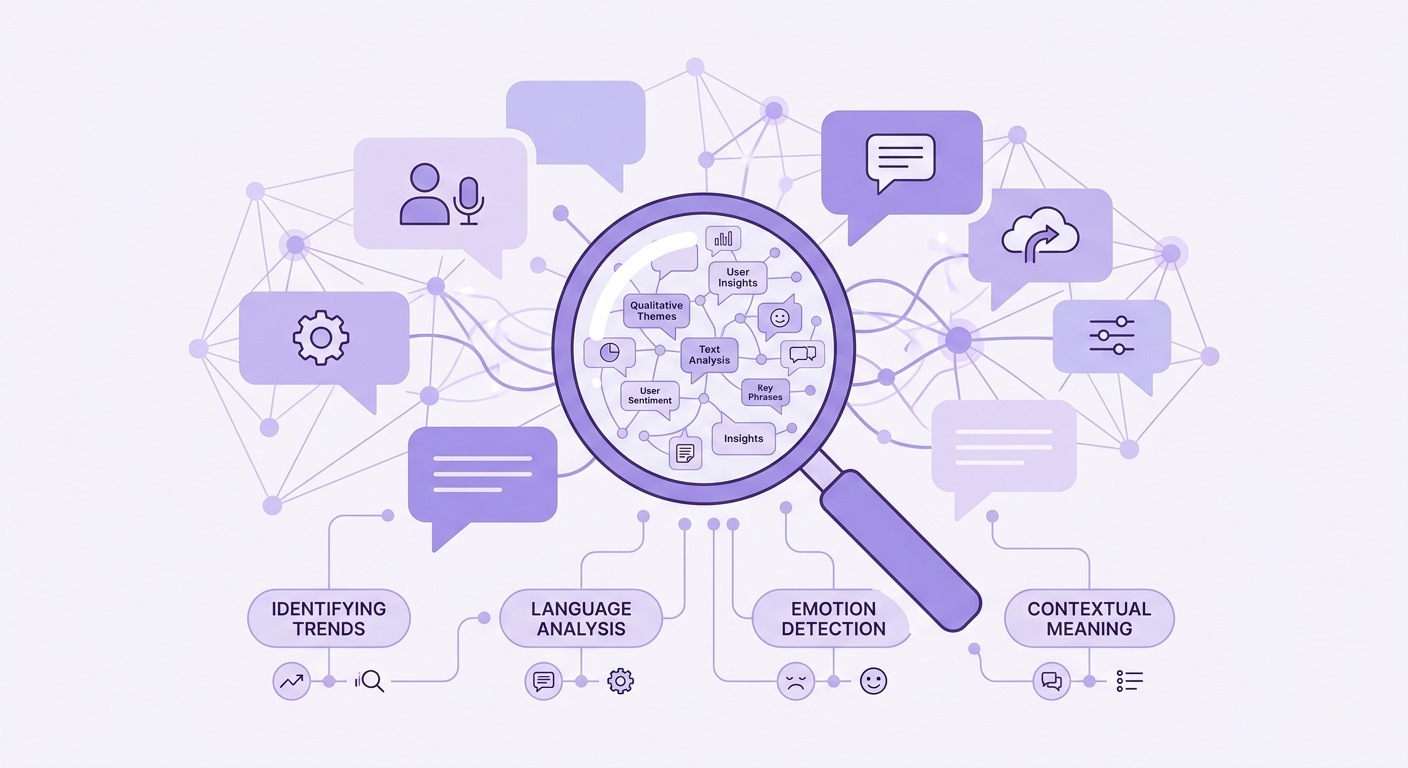

Research teams working with AI-powered qualitative analysis tools can surface patterns across dozens or hundreds of participants. A proxy participant surfaces patterns across their own mental model -- which was last updated by direct user contact sometime during the previous administration.

How Proxy Participation Manifests

Proxy participation rarely announces itself. It operates through subtle patterns that feel like normal stakeholder engagement but systematically undermine research influence.

The Preemptive Veto. Research is planned, but before it begins, a senior stakeholder declares the answer is already known. "We do not need to test this -- I talked to three customers last quarter and they all said the same thing." The study is canceled or scoped down to the point of uselessness. The three conversations -- unstructured, biased by the power dynamic of talking to the CEO, and unrepresentative of the user base -- become the evidence base for a multimillion-dollar product bet.

The Selective Citation. Research is conducted and findings are presented. The proxy participant cherry-picks the one data point that confirms their existing belief and dismisses the rest as "edge cases" or "power users who are not representative." The research team watches their carefully triangulated findings reduced to a single quote that supports a predetermined conclusion.

The Experience Override. "That is interesting, but in my experience..." This phrase is the hallmark of proxy participation. It reframes systematic evidence as merely one input alongside personal anecdote, implicitly weighting them equally -- or weighting experience more heavily because it comes from someone with organizational authority.

The Requirement Injection. The proxy participant adds requirements to the product spec that were never surfaced in research. When asked where the requirement came from, the answer is vague: "customers have been asking for this" or "I have heard this from the field." No specific research artifact is cited because none exists. The requirement came from the proxy participant's mental model of what users want.

The organizations that handle this best are ones that build research repositories teams actually use, making it harder for any individual to claim knowledge that contradicts documented evidence.

The Compounding Cost

Proxy participation does not just produce one bad decision. It degrades the entire decision-making apparatus of the product organization over time.

When stakeholder intuition consistently overrides research, researchers stop pushing back. They learn that rigorous findings will be dismissed if they conflict with leadership's mental model, so they unconsciously steer toward research that confirms what leaders already believe. This is not cynicism -- it is organizational adaptation. The research function becomes a validation machine rather than a discovery engine.

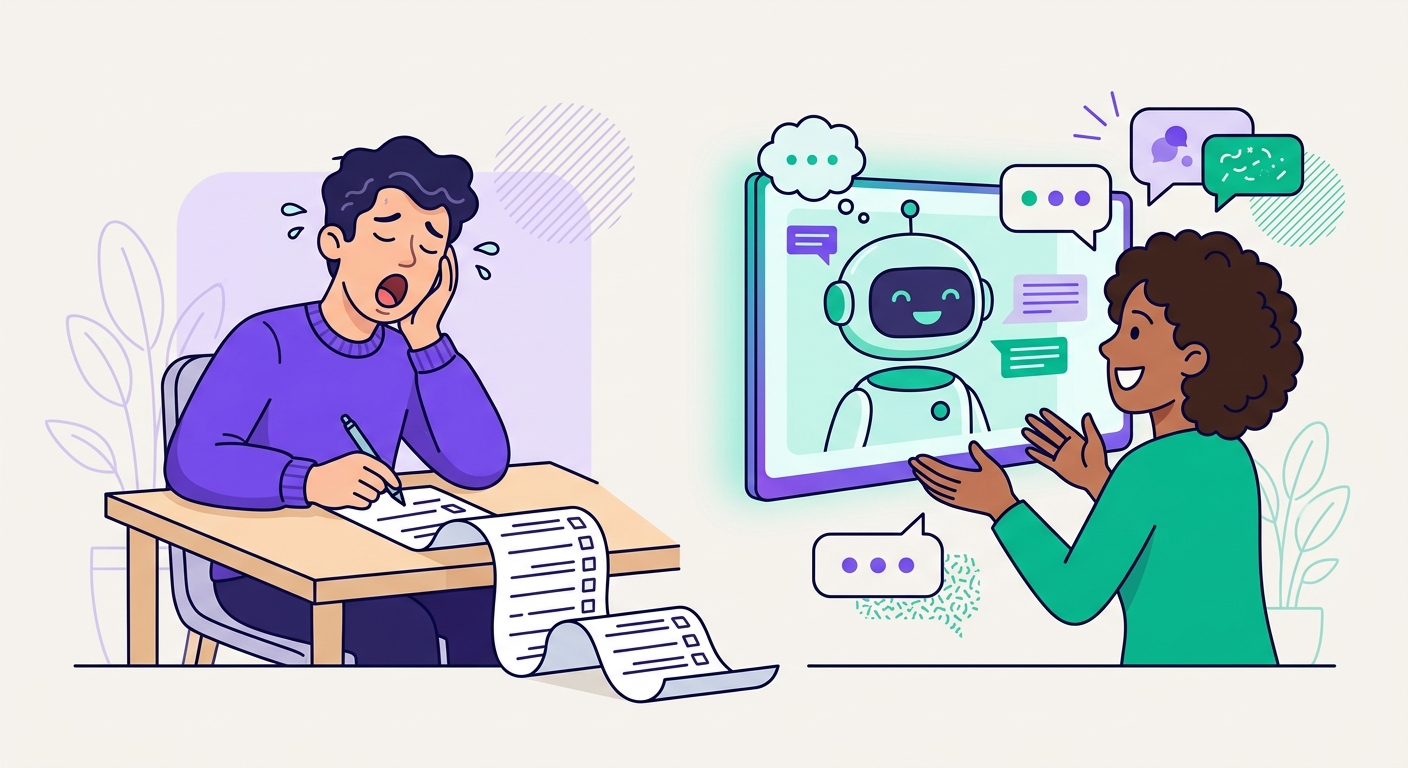

Product managers who see research overridden learn to skip it entirely. Why invest weeks in a study when the VP will override the findings in a thirty-minute review? They default to shipping what leadership wants and post-rationalizing it with selective metrics. The insight-to-action gap widens until it becomes an insight-to-action chasm, as we explored in closing the gap from interview transcripts to product roadmaps.

Engineering teams lose trust in product decisions because they can see the pattern: the spec changes after the research review, always in the direction of what the senior leader wanted before the research existed. They stop treating specs as evidence-based and start treating them as political documents. Velocity drops because conviction drops.

Measuring Proxy Participation

You cannot fix what you do not measure. Here are concrete signals that proxy participation is distorting your product decisions.

Research override rate. Track how often research recommendations are fully adopted, partially adopted, or overridden in product decisions, then look at the share that are fully adopted. A healthy full-adoption rate is above 60%. A rate below 40% indicates systematic proxy participation. A rate below 20% means your research function is performative.
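As a rough illustration, the tally can be computed from a simple log of outcomes. This is a minimal sketch, not a prescribed tool: the outcome labels and the function names `full_adoption_rate` and `interpret` are assumptions made for the example, and the band between 40% and 60% is left unclassified because the thresholds above do not cover it.

```python
from collections import Counter

# Hypothetical outcome labels: the three categories come from the text,
# but how they are recorded is an assumption of this sketch.
OUTCOMES = ("full", "partial", "overridden")


def full_adoption_rate(outcomes):
    """Share of research recommendations that were fully adopted."""
    if not outcomes:
        raise ValueError("need at least one recorded recommendation")
    counts = Counter(outcomes)
    unknown = set(counts) - set(OUTCOMES)
    if unknown:
        raise ValueError(f"unknown outcome labels: {sorted(unknown)}")
    return counts["full"] / len(outcomes)


def interpret(rate):
    """Map a full-adoption rate onto the bands described in the text."""
    if rate > 0.60:
        return "healthy"
    if rate < 0.20:
        return "performative"
    if rate < 0.40:
        return "systematic proxy participation"
    # The text does not say how to read rates from 40% to 60%.
    return "unclassified (between 40% and 60%)"


# Example: ten recommendations logged over a quarter.
quarter = ["full"] * 3 + ["partial"] * 4 + ["overridden"] * 3
rate = full_adoption_rate(quarter)
verdict = interpret(rate)
```

Keeping partial adoptions as their own label, rather than folding them into either side, preserves the three-way split the metric asks for.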

Citation asymmetry. In product review meetings, count citations of systematic research versus personal anecdotes. If anecdotes outnumber research citations by more than 2:1, proxy participation is dominant.
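The ratio is simple enough to compute by hand, but a small helper makes the edge cases explicit. The sketch below assumes someone has already counted both kinds of citation in a meeting; the function names and the handling of a meeting with zero research citations are choices made for this example.

```python
def citation_asymmetry(anecdotes, research_citations):
    """Ratio of personal anecdotes to systematic research citations."""
    if anecdotes < 0 or research_citations < 0:
        raise ValueError("counts must be non-negative")
    if research_citations == 0:
        # Assumption: with no research cited, any anecdote counts as an
        # unbounded ratio; a meeting with neither is treated as 0.
        return float("inf") if anecdotes > 0 else 0.0
    return anecdotes / research_citations


def proxy_dominant(anecdotes, research_citations, threshold=2.0):
    """True when anecdotes outnumber research citations by more than 2:1."""
    return citation_asymmetry(anecdotes, research_citations) > threshold


# Example: nine anecdotes against four research citations is 2.25:1.
flagged = proxy_dominant(9, 4)
```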

Decision traceability. For each major product decision in the last quarter, can you trace it to a specific research finding, metric, or documented user need? Or does the trail end at "leadership decision" or "strategic priority" -- euphemisms for proxy participation?
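One way to run this audit is to record a source for each decision and flag the ones whose trail ends in a euphemism or in nothing at all. This is a hedged sketch: the decision names, the source strings, and the `untraceable` helper are all hypothetical.

```python
# The two labels the text calls euphemisms, lowercased for comparison.
EUPHEMISMS = {"leadership decision", "strategic priority"}


def untraceable(decisions):
    """Return names of decisions with no evidence trail.

    `decisions` maps a decision name to its recorded source.
    A blank source or a euphemism counts as untraceable.
    """
    flagged = []
    for name, source in decisions.items():
        cleaned = source.strip().lower()
        if not cleaned or cleaned in EUPHEMISMS:
            flagged.append(name)
    return flagged


# Hypothetical audit of last quarter's decisions.
last_quarter = {
    "Redesign onboarding": "Usability study, finding 3",
    "Add export button": "Strategic priority",
    "Drop legacy plan": "",
}
gaps = untraceable(last_quarter)
```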

Researcher retention. High turnover in your research team -- especially among senior researchers -- is a lagging indicator of chronic proxy participation. Skilled researchers leave organizations where their work does not influence decisions. If your research team is a revolving door, the problem is probably not compensation.

These metrics align with broader research ops metrics that matter for measuring the health and impact of a research program.

Dismantling the Pattern

The solution is not to eliminate stakeholder input -- domain expertise is valuable. The solution is to create structural safeguards that prevent any single person's intuition from substituting for systematic evidence.

Pre-registration of hypotheses. Before any research study, require stakeholders to document their predictions. "I believe users will prefer option A because of X." When results come back, the prediction is compared against the evidence publicly. This does not embarrass anyone -- predictions are expected to be wrong sometimes. But it makes proxy participation visible by forcing stakeholders to state their assumptions explicitly rather than retrofitting them after seeing the data.

Evidence standards for product requirements. Every requirement in a product spec must cite its evidence source: a specific research study, a quantitative metric, a documented user request, or an explicit strategic bet (acknowledged as unvalidated). "I have heard this from customers" is not an acceptable citation. This does not prevent intuition-based decisions -- it labels them honestly.
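A spec template or a lightweight check can enforce this standard. The sketch below is one possible shape, under stated assumptions: the `Requirement` record, the `reference` field, and the rule that a strategic bet needs a label but no reference are illustrative choices, not part of any particular tool.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class EvidenceType(Enum):
    """The four acceptable evidence sources named in the text."""
    RESEARCH_STUDY = "research study"
    QUANT_METRIC = "quantitative metric"
    USER_REQUEST = "documented user request"
    STRATEGIC_BET = "strategic bet (unvalidated)"


@dataclass
class Requirement:
    title: str
    evidence_type: Optional[EvidenceType] = None
    reference: str = ""  # hypothetical: study name, dashboard, ticket


def uncited(requirements):
    """Return titles of requirements that fail the evidence standard."""
    failures = []
    for req in requirements:
        if req.evidence_type is None:
            failures.append(req.title)
        elif (req.evidence_type is not EvidenceType.STRATEGIC_BET
              and not req.reference.strip()):
            # Every type except an acknowledged bet must point at an artifact.
            failures.append(req.title)
    return failures


# Hypothetical spec with one vague requirement.
spec = [
    Requirement("Bulk edit", EvidenceType.RESEARCH_STUDY, "Interview round 2"),
    Requirement("Dark mode", EvidenceType.STRATEGIC_BET),
    Requirement("Offline sync"),  # "I have heard this from customers"
]
failing = uncited(spec)
```

Letting a strategic bet pass without a reference mirrors the point above: intuition-based decisions are allowed as long as they are labeled as such.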

Structured decision reviews. Replace free-form product reviews with structured frameworks that weight evidence types explicitly. Systematic research from representative samples outweighs individual customer conversations, which outweigh stakeholder intuition. Make the weighting visible.
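To make the weighting visible, a review template can rank each option by the strongest type of evidence behind it. The text gives an ordering, not numeric weights, so the sketch below uses ordinal ranks only; the key names and the `rank_options` helper are assumptions for illustration.

```python
# Ordinal ranks only: higher means stronger evidence.
EVIDENCE_RANK = {
    "systematic_research": 3,
    "customer_conversation": 2,
    "stakeholder_intuition": 1,
}


def strongest_rank(evidence_types):
    """Rank of the strongest evidence type cited, or 0 if none is cited."""
    return max((EVIDENCE_RANK[e] for e in evidence_types), default=0)


def rank_options(evidence_by_option):
    """Order options by the strongest evidence type cited for each.

    Repeating a weaker evidence type does not outrank a stronger one.
    """
    return sorted(
        evidence_by_option,
        key=lambda option: strongest_rank(evidence_by_option[option]),
        reverse=True,
    )


# Hypothetical review: two intuitions do not outweigh one study.
review = {
    "Option A": ["stakeholder_intuition", "stakeholder_intuition"],
    "Option B": ["systematic_research"],
    "Option C": ["customer_conversation"],
}
ordering = rank_options(review)
```

Using the maximum rank instead of a sum is a deliberate choice here: it prevents a pile of anecdotes from adding up to more than one representative study.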

Exposure therapy. The most effective long-term cure for proxy participation is direct, repeated exposure to real users. When senior leaders regularly observe user research sessions -- watching real people struggle with their product, hearing real frustrations in real words -- their mental models update. The gap between "what I think users want" and "what users actually need" becomes viscerally obvious.

Platforms like Qualz.ai make this exposure scalable by providing AI-analyzed interview summaries and highlight reels that leadership can consume without sitting through every full session. The goal is not to make stakeholders into researchers but to keep their mental models calibrated against current user reality.

The Organizational Test

Here is a simple diagnostic. Pick the last three major product decisions your team made. For each one, ask: would this decision have been different if the most senior stakeholder in the room had held the opposite opinion?

If the answer is yes for two or more, you have a proxy participant problem. The decisions are tracking stakeholder preference, not user evidence.

The fix is not to remove those stakeholders from the process. It is to build systems -- evidence standards, structured reviews, research repositories, decision traceability -- that make it structurally difficult for any single person's intuition to override the collective intelligence of your user base.

The best product organizations are not the ones with the smartest leaders. They are the ones where smart leaders have built systems that are smarter than any individual -- including themselves.