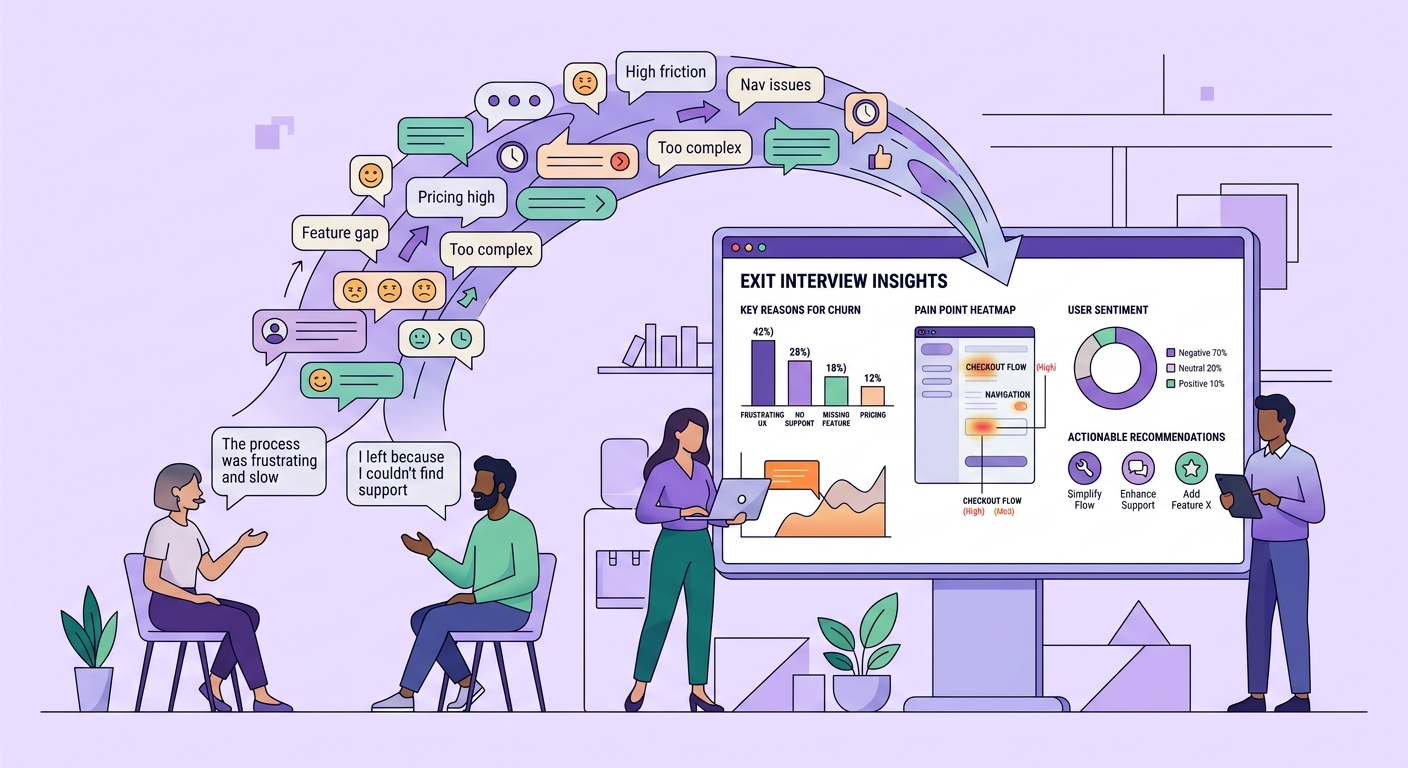

Your churn survey says customers leave because of "price." Your NPS trend is flat. Your product analytics shows declining engagement two weeks before cancellation. None of this tells you what actually happened.

Price is almost never the real reason. It is the easy answer -- the one that requires no emotional vulnerability, no detailed explanation, no confrontation. When a customer clicks "too expensive" on your cancellation survey, they are giving you the answer that ends the conversation fastest. The real story -- the compounding frustrations, the unmet expectations, the moment they started evaluating alternatives -- lives in the narrative, not the checkbox.

This is why qualitative exit interviews predict and prevent churn in ways that quantitative signals cannot. They reconstruct the story of departure, revealing not just what happened but the causal chain that led to it.

The Failure of Quantitative Churn Analysis

Every SaaS company tracks churn metrics. Monthly churn rate, net revenue retention, cohort survival curves -- the dashboards are sophisticated and the data is precise. But precision is not the same as insight.

Quantitative churn analysis excels at measuring the magnitude of the problem and identifying statistical patterns. You know that customers in the 50-200 employee segment churn at 1.4x the rate of enterprise accounts. You know that customers who do not activate feature X within 30 days have a 60% higher churn probability. You know that churn spikes in Q1.

What you do not know is why. And without the why, your retention interventions are educated guesses.

Consider what happens when a team tries to act on the finding that non-activators of feature X churn more. The obvious response is to push harder on feature X adoption -- more onboarding nudges, more in-app prompts, more CSM outreach about feature X. But what if users are not adopting feature X because it does not solve their actual problem? What if the feature was built based on assumptions about user needs that were never validated through real research? Pushing harder on a feature that misses the mark does not reduce churn -- it accelerates it by making the product feel tone-deaf.

Churn surveys have the same structural limitation. They offer predetermined categories -- price, missing features, switched to competitor, no longer needed -- and ask departing customers to select one. This data is easy to aggregate and chart. It is also systematically wrong, because it forces a complex, multi-causal decision into a single categorical variable.

In reality, churn is almost always the result of accumulated friction over time. No single event causes it. A customer who leaves "because of price" actually left because the product did not deliver enough value to justify the price, which happened because their specific use case was not well-supported, which happened because the product team prioritized features for a different segment. "Price" is the symptom. The disease is a value delivery failure that only narrative research can diagnose.
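One way to keep that chain from collapsing into a single checkbox is to record it explicitly. The sketch below is illustrative only: the `ChurnNarrative` class and its field names are hypothetical, and the example values simply restate the chain described above.

```python
from dataclasses import dataclass, field


@dataclass
class ChurnNarrative:
    """One departing customer's story (hypothetical structure, for illustration)."""

    survey_reason: str  # the checkbox answer from the cancellation survey
    # Ordered from the visible symptom down to the root cause.
    causal_chain: list[str] = field(default_factory=list)

    @property
    def root_cause(self) -> str:
        # Without an interview, the survey answer is all we have.
        return self.causal_chain[-1] if self.causal_chain else self.survey_reason


record = ChurnNarrative(
    survey_reason="price",
    causal_chain=[
        "price felt too high",
        "product did not deliver enough value to justify the price",
        "customer's specific use case was not well supported",
        "roadmap prioritized features for a different segment",
    ],
)

print("Survey says:   ", record.survey_reason)
print("Interview says:", record.root_cause)
```

Storing the whole chain, rather than only its last link, keeps both the symptom and the diagnosis available when the records are later aggregated.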

What Exit Interviews Reveal That Surveys Cannot

A well-conducted qualitative exit interview lasts 20 to 30 minutes and produces more actionable insight than months of churn dashboards. Here is what these interviews consistently uncover:

The triggering event versus the root cause. Surveys capture what finally pushed the customer to cancel. Interviews reveal the long history that preceded it. A customer who cancelled after a price increase might have been dissatisfied for six months but tolerating it. The price increase was just the permission structure to act on existing frustration. Knowing the triggering event is interesting. Knowing the root cause is actionable.

The competitive frame. When customers leave for a competitor, surveys tell you which competitor. Interviews tell you what the competitor promised that you did not deliver, how the customer found the competitor, what the evaluation process looked like, and what your product would have needed to do differently. This is strategic intelligence that shapes product roadmap, positioning, and competitive response. It connects to the broader question of how market research and user research together create a complete picture of competitive dynamics.

The organizational politics. Enterprise churn is often driven by stakeholder dynamics that are completely invisible in product data. A champion leaves. A new VP brings their preferred tools. Budget gets reallocated to a different initiative. The product works fine -- the organizational context changed. Understanding these dynamics does not help you prevent that specific churn, but it helps you build strategies (multi-stakeholder engagement, executive alignment, integration depth) that make organizational changes less likely to result in cancellation.

The expectation gap. Customers often churn because the product did not match what they expected when they bought it. This is not a product quality issue -- it is a sales and positioning issue. Interviews reveal where expectations were set incorrectly, which messages resonated during sales but disappointed during usage, and where the gap between promise and delivery is widest. This feedback loop between exit interviews and go-to-market messaging is one of the highest-leverage improvements most companies never make.

The workaround history. Before cancelling, most customers tried to make the product work. They built spreadsheet workarounds, used manual processes alongside the tool, asked support for solutions that did not exist. Interviews reveal this workaround history, which is essentially a map of product gaps drawn by the people who experienced them most acutely.

Designing Exit Interviews That Produce Insight

Not all exit interviews are created equal. A poorly designed exit interview produces the same surface-level answers as a survey, just slower. The difference is in structure, timing, and technique.

Interview within 48 hours of cancellation. Memory degrades rapidly. The customer who cancelled three weeks ago has already rationalized the decision and smoothed out the messy emotional reality. The customer who cancelled yesterday can still reconstruct the sequence of events with specificity. This mirrors what makes research interview design effective in general: recency and specificity produce richer data.

Start with the timeline, not the reason. Do not ask "why did you cancel?" That invites the same categorical answer the survey would produce. Instead, ask "take me back to when you first started thinking about whether this product was the right fit." This opens a narrative that reveals the full arc of the decision, not just the endpoint.

Follow the emotion. When a customer mentions frustration, disappointment, or surprise, go deeper. "You mentioned you were frustrated with the reporting. Tell me about a specific time that frustration came to a head." Specific stories produce specific insights. General complaints produce nothing actionable.

Ask about the alternative. "What are you using now instead?" followed by "What does it do differently?" reveals your competitive gap from the customer's perspective -- not from your feature comparison matrix, but from the lived experience of someone who evaluated both options.

Conduct at scale with AI assistance. The traditional objection to exit interviews is that they do not scale. You cannot conduct 30-minute interviews with every churning customer. But this is exactly where AI-powered interview technology changes the equation. AI-moderated exit interviews can run continuously, at scale, capturing qualitative depth from every departing customer without requiring a researcher for every conversation.

From Individual Stories to Systemic Patterns

The power of exit interviews scales with volume. A single exit interview produces a useful story. Twenty exit interviews produce systemic patterns. Fifty produce a churn taxonomy that can explain departures better than a purely statistical model, because it captures the causal mechanisms, not just the correlations.

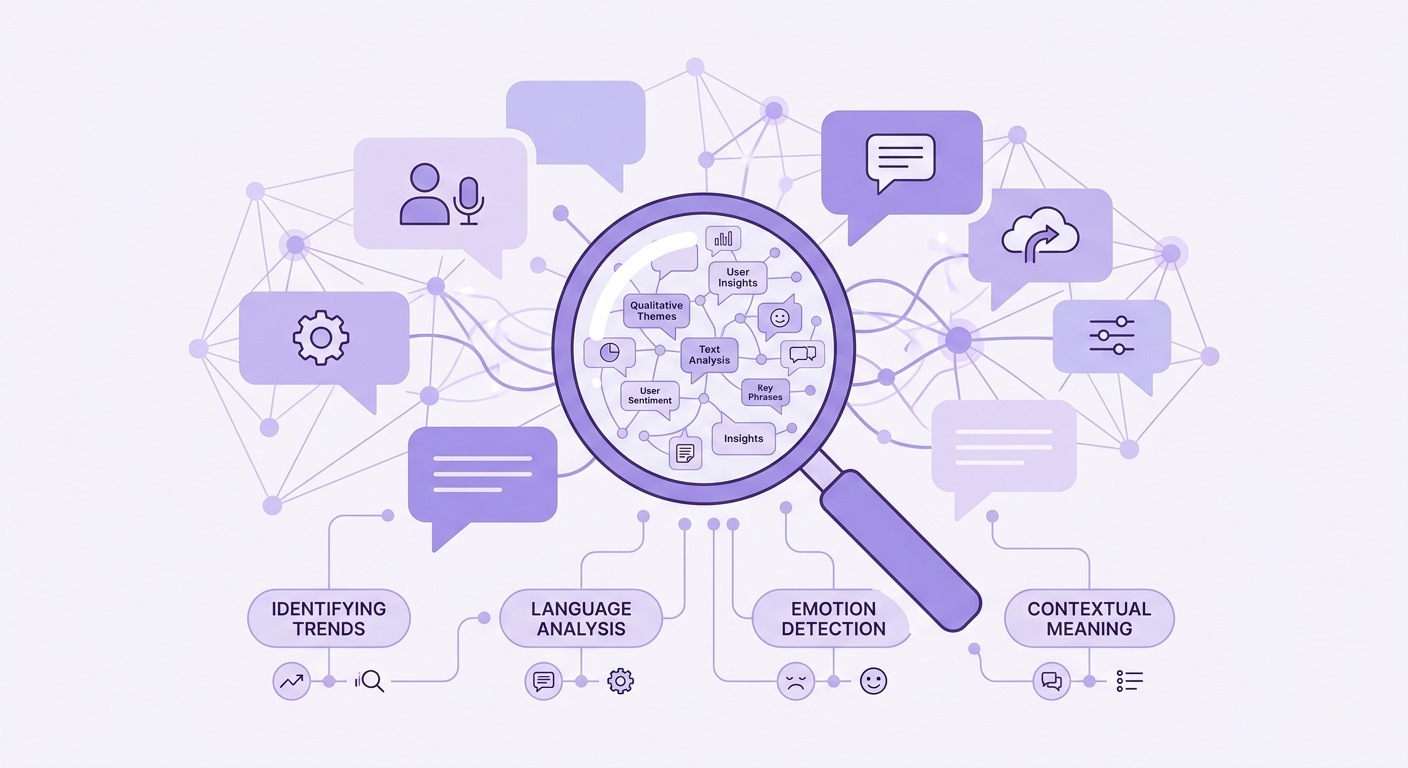

The analysis process requires synthesizing narrative data across interviews to identify recurring themes. This is where thematic analysis methods become essential. You are looking for patterns in the stories -- the same expectation gaps appearing across segments, the same competitive alternatives emerging, the same organizational triggers recurring.

Coding these interviews manually is feasible at small volumes but breaks down quickly. A team running 20 exit interviews per month generates 400 to 600 minutes of narrative data. AI-powered qualitative analysis makes this tractable, identifying thematic patterns across hundreds of interviews and surfacing the systemic issues that would take a human researcher weeks to extract.
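The volume figure follows directly from the interview length given earlier (20 to 30 minutes each). A minimal sketch of that arithmetic, with a hypothetical helper name, makes it easy to rerun for a different interview count:

```python
def monthly_interview_minutes(interviews_per_month, min_minutes=20, max_minutes=30):
    """Return the (low, high) range of recorded minutes per month.

    The 20 and 30 minute defaults come from the interview length stated
    in this article; the function name is illustrative.
    """
    return interviews_per_month * min_minutes, interviews_per_month * max_minutes


low, high = monthly_interview_minutes(20)
print(f"{low} to {high} minutes per month")  # prints "400 to 600 minutes per month"
```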

The output is not a churn report. It is a prioritized map of value delivery failures, each one backed by specific customer narratives that make the problem concrete and the solution obvious. When you present "17 of our last 50 churned customers described the same expectation gap between what sales promised about real-time analytics and what the product actually delivers," that is a different conversation than "churn increased 0.3% last quarter."
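Once interviews have been coded into themes, a statement like "17 of our last 50" is a simple tally. The sketch below assumes each interview has already been reduced to a set of theme labels; the labels and the sample data are invented for illustration and are not real findings.

```python
from collections import Counter


def theme_prevalence(coded_interviews):
    """Count how many interviews mention each theme, most common first.

    `coded_interviews` is a list with one collection of theme labels per
    interview. Each theme is counted at most once per interview.
    """
    total = len(coded_interviews)
    counts = Counter()
    for themes in coded_interviews:
        counts.update(set(themes))
    return [(theme, n, n / total) for theme, n in counts.most_common()]


# Hypothetical coded data: 50 interviews, 17 sharing one expectation gap.
interviews = (
    [{"expectation_gap_realtime_analytics"}] * 17
    + [{"champion_left"}] * 10
    + [set()] * 23
)

for theme, n, share in theme_prevalence(interviews):
    print(f"{theme}: {n} of {len(interviews)} ({share:.0%})")
```

Counting per interview rather than per mention keeps one talkative customer from inflating a theme.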

Building Exit Interviews Into Your Retention System

The teams that use exit interviews effectively do not treat them as a research project. They treat them as a continuous feedback system that feeds directly into product, sales, and customer success operations.

Integrate exit interview insights with your research repository so that patterns are visible across the organization, not locked in a research team's analysis. Route specific findings to the teams that can act on them: product gaps to the roadmap, positioning issues to marketing, onboarding failures to customer success.
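The routing described above can be as simple as a lookup table. This is a sketch under assumptions: the category keys, the `route_findings` helper, and the "research triage" fallback for uncategorized findings are all hypothetical, and only the three category-to-team pairings come from the text.

```python
from collections import defaultdict

# Finding category -> team that can act on it (pairings from the article).
ROUTES = {
    "product_gap": "product roadmap",
    "positioning_issue": "marketing",
    "onboarding_failure": "customer success",
}


def route_findings(findings, fallback="research triage"):
    """Group (category, summary) pairs by the team that should receive them."""
    queues = defaultdict(list)
    for category, summary in findings:
        # Unknown categories go to a fallback queue (an assumption, not from the article).
        queues[ROUTES.get(category, fallback)].append(summary)
    return dict(queues)


# Invented example findings.
findings = [
    ("product_gap", "No export for scheduled reports"),
    ("positioning_issue", "Sales implied real-time analytics"),
    ("onboarding_failure", "Setup stalled at data import"),
    ("pricing_confusion", "Unclear seat-based billing"),
]

for team, items in route_findings(findings).items():
    print(f"{team}: {items}")
```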

Measure the impact. Track whether the systemic issues identified through exit interviews get addressed, and whether addressing them changes churn patterns in subsequent cohorts. This closes the loop between qualitative insight and quantitative validation.
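A minimal sketch of that comparison, assuming you can count customers at the start of a period and customers lost during it for a cohort before and a cohort after a fix ships. The function names and the cohort numbers are hypothetical.

```python
def churn_rate(customers_at_start, customers_lost):
    """Fraction of a cohort that cancelled during the period."""
    if customers_at_start <= 0:
        raise ValueError("cohort must contain at least one customer")
    return customers_lost / customers_at_start


def churn_change(before, after):
    """Difference in churn rate (after minus before); negative means improvement.

    `before` and `after` are (customers_at_start, customers_lost) tuples.
    """
    return churn_rate(*after) - churn_rate(*before)


# Invented cohorts: one before the fix shipped, one after.
before_fix = (200, 16)
after_fix = (200, 10)

change = churn_change(before_fix, after_fix)
print(f"Before: {churn_rate(*before_fix):.1%}")
print(f"After:  {churn_rate(*after_fix):.1%}")
print(f"Change: {change:+.1%}")
```

A lower rate in the later cohort is consistent with the fix working, but it does not prove it: seasonality or a shift in customer mix can move the number too, so read the result alongside what the newer exit interviews say.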

The companies with the best retention metrics are not the ones with the fanciest churn prediction models. They are the ones that talk to the people who leave, listen to what they say, and build systems to act on what they learn.

Every churned customer is a case study in what your product is not delivering. Exit interviews are how you read those case studies before they become a trend.