You just landed a 30-interview impact assessment for a water sanitation program in East Africa. The client wants thematic analysis, stakeholder mapping, and a final report — in eight weeks. You are one person.

If that scenario sounds familiar, you are part of a growing cohort of independent consultants doing program evaluation, impact assessment, and social research for international development organizations, EU-funded projects, and environmental agencies. The work is intellectually demanding, the stakes are high, and the bottleneck is almost always the same: qualitative data analysis.

This post is for you. Not for the large consultancies with teams of junior analysts. Not for academic researchers with PhD students to delegate coding to. For the solo practitioner who needs to move from raw interview recordings to defensible findings — faster, without cutting corners.

The Independent Consultant's Qualitative Bottleneck

Let's be honest about the economics. A typical impact assessment engagement for an independent consultant involves:

- 10-40 semi-structured interviews with program beneficiaries, stakeholders, and implementing partners

- Transcription of recorded interviews (often multilingual)

- Qualitative coding — applying thematic codes to hundreds of pages of transcript

- Analysis and synthesis — identifying patterns, building frameworks, triangulating with quantitative data

- Reporting — producing a deliverable that meets donor standards (OECD-DAC criteria, Theory of Change alignment, etc.)

Health services research shows that manually coding a single one-hour interview transcript takes between 1.5 and 4 hours, depending on the complexity of the coding framework. For 30 interviews, you are looking at 45-120 hours of coding alone, before you even start analysis. A 2021 study comparing rapid and traditional qualitative analysis in implementation research found that traditional in-depth manual coding consumed roughly three times as many analyst hours as structured rapid approaches.

For a solo consultant charging project-based fees, those hours are the difference between a profitable engagement and one that pays less than minimum wage. And this is before you factor in transcription time (roughly 4-6 hours per hour of audio when done manually) or the iterative cycles of re-reading, re-coding, and refining your codebook.
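To make the arithmetic concrete, the short Python sketch below scales the per-hour ranges quoted above to a whole study. It assumes 30 interviews of exactly one hour each; the constant and function names are illustrative, not taken from any tool.

```python
# Back-of-envelope effort estimate using the ranges quoted in the text.
# Assumption: every interview runs one hour; adjust AUDIO_HOURS_PER_INTERVIEW.
INTERVIEWS = 30
AUDIO_HOURS_PER_INTERVIEW = 1.0

CODING_HOURS_PER_AUDIO_HOUR = (1.5, 4.0)         # manual coding, (low, high)
TRANSCRIPTION_HOURS_PER_AUDIO_HOUR = (4.0, 6.0)  # manual transcription, (low, high)


def hours_range(per_audio_hour: tuple[float, float]) -> tuple[float, float]:
    """Scale a (low, high) hours-per-audio-hour rate to the whole study."""
    audio_hours = INTERVIEWS * AUDIO_HOURS_PER_INTERVIEW
    low, high = per_audio_hour
    return audio_hours * low, audio_hours * high


coding = hours_range(CODING_HOURS_PER_AUDIO_HOUR)
transcription = hours_range(TRANSCRIPTION_HOURS_PER_AUDIO_HOUR)
total = (coding[0] + transcription[0], coding[1] + transcription[1])

print(f"Coding:        {coding[0]:.0f}-{coding[1]:.0f} h")
print(f"Transcription: {transcription[0]:.0f}-{transcription[1]:.0f} h")
print(f"Total:         {total[0]:.0f}-{total[1]:.0f} h")
```

With those inputs it reports 45-120 hours of coding and 120-180 hours of transcription, or 165-300 hours of mechanical work before analysis and writing begin.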

The math simply does not work at scale. Which is why most independent consultants either limit the number of interviews they conduct (weakening their findings), outsource transcription and coding to research assistants (adding cost and coordination overhead), or deliver analysis that is thinner than they would like.

Why Generic AI Tools Fall Short

You have probably already tried using ChatGPT or Claude to help with qualitative analysis. Upload a transcript, ask for themes, get a response. It works — kind of.

The problems fall into four areas:

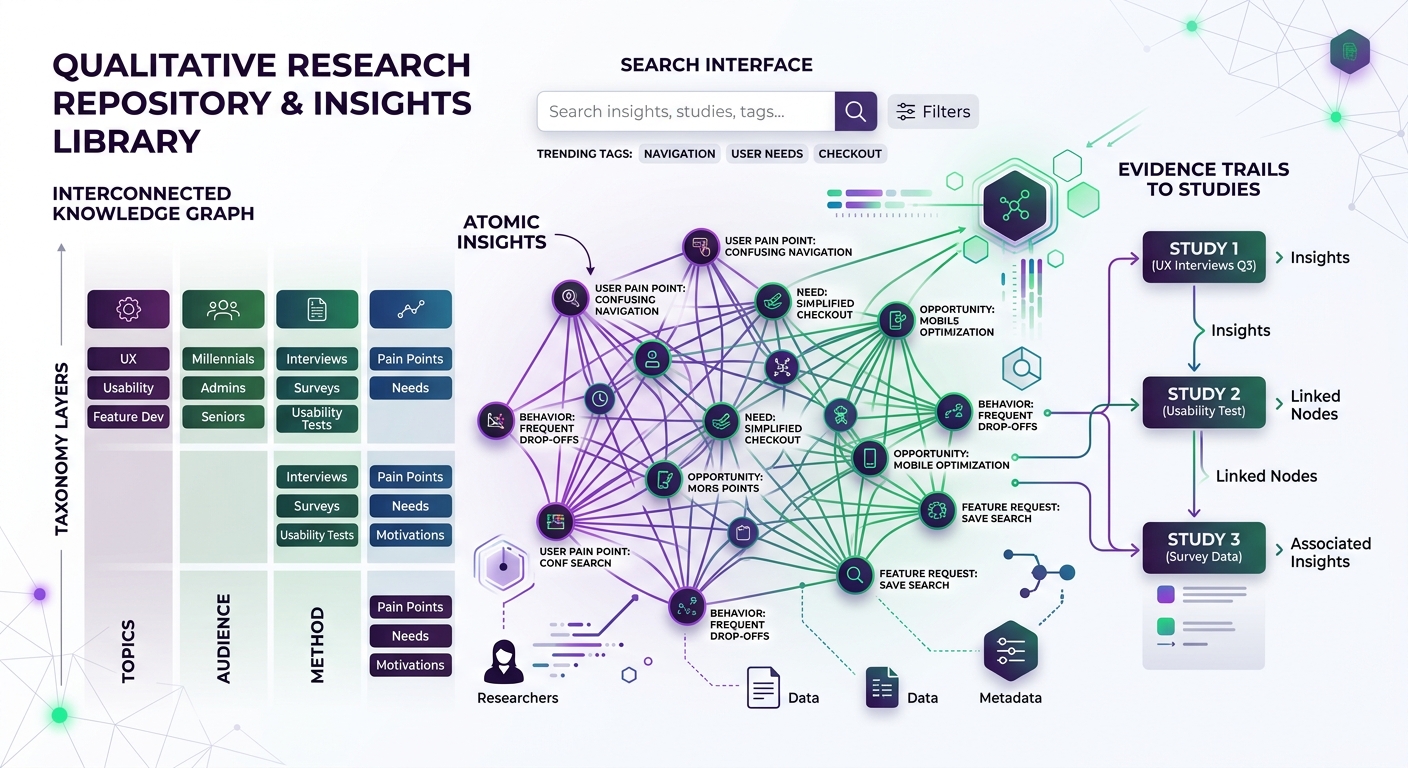

No persistent codebook. General-purpose LLMs do not maintain a structured codebook across sessions. Every time you upload a new transcript, you are starting from scratch or manually pasting your coding framework back in. For a 30-interview study, this becomes unmanageable.

No audit trail. Donors, ethics boards, and peer reviewers want to see how you got from raw data to findings. "I asked ChatGPT" is not a methodology section. You need traceable coding decisions — which segments were coded, under which themes, with what rationale.
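One lightweight way to make coding decisions traceable is to log every decision, human or AI-suggested, as an append-only JSON Lines record. The sketch below is an assumption about what such a record could contain (segment offsets, code, rationale, coder, optional confidence); the field names and sample values are hypothetical, and dedicated platforms will use their own formats.

```python
import io
import json
from dataclasses import asdict, dataclass
from datetime import datetime, timezone
from typing import Optional, TextIO


@dataclass(frozen=True)
class CodingDecision:
    """One traceable coding decision: which segment, which code, and why."""
    transcript_id: str
    start_char: int                     # segment offsets within the transcript text
    end_char: int
    code: str
    rationale: str
    coder: str                          # e.g. "ai-suggested" or the reviewer's initials
    confidence: Optional[float] = None  # None for purely human decisions
    timestamp: str = ""


def log_decision(log: TextIO, decision: CodingDecision) -> None:
    """Append a decision as one JSON line, stamping it with UTC time if unset."""
    record = asdict(decision)
    if not record["timestamp"]:
        record["timestamp"] = datetime.now(timezone.utc).isoformat()
    log.write(json.dumps(record, ensure_ascii=False) + "\n")


# Demo with an in-memory log; in practice, open("coding_audit.jsonl", "a", encoding="utf-8").
audit_log = io.StringIO()
log_decision(audit_log, CodingDecision(
    transcript_id="INT-07", start_char=1204, end_char=1391,
    code="water_access",
    rationale="Respondent describes a shorter walk to the new borehole.",
    coder="ai-suggested", confidence=0.82,
))
entries = [json.loads(line) for line in audit_log.getvalue().splitlines()]
```

Because each line is a self-contained JSON object, the log can be filtered, counted, or attached as an appendix without special software.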

No analytical framework integration. Impact assessment is not just thematic analysis. You need to work with Theory of Change frameworks, OECD-DAC evaluation criteria (relevance, coherence, effectiveness, efficiency, impact, sustainability), contribution analysis, and outcome mapping. Generic AI tools do not structure their outputs around these frameworks.

Data governance gaps. Pasting beneficiary interview transcripts into consumer AI tools raises serious questions about data processing, storage, and compliance — especially for EU-based consultants or anyone working with EU-funded projects.

This is the gap that purpose-built qualitative data analysis platforms are designed to fill.

The AI-Native Workflow: From Recording to Report

Here is what an AI-augmented impact assessment workflow looks like in practice, using tools designed specifically for qualitative research:

Step 1: Transcription and Data Preparation

Modern AI transcription handles multilingual audio with speaker diarization (identifying who said what) at accuracy rates above 95% for major languages, although lower-resource languages and noisy field recordings still need careful checking. What used to take 4-6 hours per interview hour now takes minutes.

For independent consultants working across languages — say, conducting interviews in French in West Africa, Spanish in Central America, and English in donor capitals — this is transformative. You no longer need to budget for transcription services or spend your evenings with headphones and a foot pedal.

The key requirement: your transcription pipeline needs to feed directly into your analysis environment. If you are transcribing in one tool and analyzing in another, you are creating friction and data handling risks.

Step 2: AI-Assisted Coding

This is where the real time savings happen. AI-native qualitative analysis tools can:

- Apply your codebook automatically. You define the codes — whether deductive (based on your Theory of Change or evaluation matrix) or inductive (emerging from the data). The AI applies them consistently across all transcripts, with confidence scores.

- Surface patterns you might miss. When you are manually coding interview 27 of 30, cognitive fatigue is real. AI does not get tired. It can flag connections between an offhand comment in interview 3 and a theme that emerged strongly in interview 22.

- Enable [multi-lens analysis](https://qualz.ai/blog/multi-lens-analysis-qualitative-data). Run the same dataset through different analytical frameworks — stakeholder perspective, gender lens, sustainability criteria — without re-coding from scratch.

A recent case study documented 180 hours saved when using AI-assisted coding on 200 entrepreneur interviews with predefined themes. For a solo consultant, that is not an incremental improvement: it is the difference between taking on two projects per quarter and four.

But — and this is critical — AI-assisted coding is not autonomous coding. You are reviewing, validating, and refining the AI's output. The tool accelerates the mechanical work; the analytical judgment remains yours. This is what separates rigorous AI-augmented qualitative analysis from the "just throw it at ChatGPT" approach.
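The review step can be organized so that nothing is accepted blindly while your attention still goes where it is most needed. This sketch assumes the tool exports each suggested code with a confidence score between 0 and 1; the `Suggestion` type, the 0.7 threshold, and the segment IDs are hypothetical. Confidence scores are tool-specific and not necessarily calibrated, so treat the threshold as something to tune during a pilot.

```python
from dataclasses import dataclass


@dataclass
class Suggestion:
    segment_id: str
    code: str
    confidence: float  # tool-reported score in [0, 1]; its meaning varies by tool


def review_queue(
    suggestions: list[Suggestion], threshold: float = 0.7
) -> tuple[list[Suggestion], list[Suggestion]]:
    """Split AI suggestions into close-review and spot-check piles.

    Both piles are ordered lowest confidence first. Nothing is auto-accepted:
    high-confidence suggestions still get a human spot check.
    """
    ordered = sorted(suggestions, key=lambda s: s.confidence)
    needs_review = [s for s in ordered if s.confidence < threshold]
    spot_check = [s for s in ordered if s.confidence >= threshold]
    return needs_review, spot_check


# Hypothetical suggestions exported from a coding tool.
suggestions = [
    Suggestion("INT-03:p12", "governance", 0.91),
    Suggestion("INT-22:p4", "maintenance_costs", 0.55),
    Suggestion("INT-27:p9", "gender_roles", 0.68),
]
needs_review, spot_check = review_queue(suggestions)
```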

Step 3: Thematic Analysis and Synthesis

Once your data is coded, the analysis layer is where AI tools earn their keep for impact assessment specifically:

- Frequency and co-occurrence mapping. Which themes appear together? Where do beneficiary experiences diverge from implementer perceptions?

- [Sentiment analysis](https://qualz.ai/blog/sentiment-analysis-qualitative-research) across stakeholder groups. How do community leaders feel about the program versus frontline staff versus donor representatives?

- Evidence mapping to evaluation criteria. Automatically tag coded segments to OECD-DAC criteria or your custom evaluation matrix, making it straightforward to build evidence-based findings.

- Deviant case identification. Which respondents contradict the dominant narrative? These are often the most analytically valuable data points.
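Co-occurrence counts and evidence mapping are simple tallies once segments are coded. The Python sketch below assumes each coded segment is exported as a respondent ID, a stakeholder group, and a set of codes, and that you maintain a mapping from codes to OECD-DAC criteria; all codes, groups, and mappings shown are hypothetical.

```python
from collections import Counter
from itertools import combinations

# Hypothetical coded segments: (respondent, stakeholder group, codes applied).
segments = [
    ("R01", "beneficiary", {"water_access", "time_savings"}),
    ("R02", "beneficiary", {"water_access", "maintenance_costs"}),
    ("R03", "implementer", {"water_access", "time_savings"}),
    ("R04", "implementer", {"maintenance_costs", "local_ownership"}),
]

# Hypothetical mapping from codebook codes to OECD-DAC criteria.
CODE_TO_CRITERION = {
    "water_access": "effectiveness",
    "time_savings": "impact",
    "maintenance_costs": "sustainability",
    "local_ownership": "sustainability",
}


def co_occurrence(segs):
    """Count how often each pair of codes is applied to the same segment."""
    pairs = Counter()
    for _, _, codes in segs:
        pairs.update(combinations(sorted(codes), 2))
    return pairs


def evidence_by_criterion(segs, mapping):
    """Count segments supporting each (criterion, stakeholder group) cell.

    A segment counts once per criterion even if several of its codes map there.
    """
    cells = Counter()
    for _, group, codes in segs:
        criteria = {mapping[c] for c in codes if c in mapping}
        cells.update((criterion, group) for criterion in criteria)
    return cells


pairs = co_occurrence(segments)
cells = evidence_by_criterion(segments, CODE_TO_CRITERION)
```

In this toy data, `time_savings` and `water_access` co-occur in two segments, and the effectiveness/beneficiary cell holds the most evidence. Counts indicate where to look, not what the findings are; the interpretation still comes from reading the segments.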

For stakeholder engagement analysis, AI tools can help you move beyond the classic power-interest grid toward richer, data-driven stakeholder maps that draw on what people actually said in interviews — not just your assumptions about their influence.

Step 4: Reporting and Deliverables

The final output matters enormously in consulting. Your report is your product. AI tools can:

- Generate draft narrative sections anchored to coded data, with direct quotes properly attributed

- Produce data visualizations — theme frequency charts, stakeholder maps, sentiment distributions

- Create appendices with full coding summaries that satisfy donor audit requirements

This does not mean AI writes your report. It means AI assembles the raw materials — the coded evidence, the patterns, the illustrative quotes — so you can focus on interpretation, recommendations, and the strategic narrative that is where your expertise actually lives.

GDPR and Data Governance: The Non-Negotiable for EU Consultants

If you are based in the EU, working with EU-funded projects, or processing data from EU residents, GDPR compliance is not optional. And it creates specific requirements for how you handle qualitative research data.

Under GDPR, processing interview transcripts that contain personal data makes you a data controller (or a processor, depending on your contractual relationship with the commissioning organization). This means:

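- Lawful basis for processing. You need a clear legal basis, typically consent or legitimate interests, for processing interview data through any AI tool. If transcripts reveal special category data (such as health, ethnic origin, or political opinions), you also need an Article 9 condition.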

- Data processing agreements. Any AI platform you use must have a proper DPA in place, specifying what data is processed, where, for how long, and under what security measures.

- Data residency. Where are the servers? If your AI tool processes data through US-based infrastructure without adequate safeguards, you have a compliance problem. Look for platforms offering EU data residency or processing.

- Right to erasure. Participants can request deletion of their data. Your AI tool needs to support this — not just in the frontend, but in training data and model memory.

- Data minimization. Process only what is necessary. Tools that allow you to anonymize or pseudonymize transcripts before AI processing help you comply with this principle.
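A minimal pseudonymization pass, assuming you already have a list of participant names and their variants (for example from consent forms), can run before any transcript leaves your machine. The function name and P01-style labels are illustrative. Pseudonymized data is still personal data under GDPR (Recital 26), and a name list will not catch misspellings, nicknames, place names, or job titles that identify someone, so a manual read-through is still needed.

```python
import re


def pseudonymize(text: str, people: list[list[str]]) -> tuple[str, dict[str, str]]:
    """Replace known participant names with stable labels (P01, P02, ...).

    `people` holds one list of name variants per participant. Returns the
    redacted text and the variant->label key; store the key separately from
    the transcripts, under access control.
    """
    key: dict[str, str] = {}
    for i, variants in enumerate(people, start=1):
        for variant in variants:
            key[variant] = f"P{i:02d}"
    if not key:
        return text, key
    # Try longer variants first so "Amina Otieno" wins over "Amina".
    alternatives = sorted(key, key=len, reverse=True)
    pattern = re.compile(r"\b(?:" + "|".join(map(re.escape, alternatives)) + r")\b")
    return pattern.sub(lambda m: key[m.group(0)], text), key


# Hypothetical transcript excerpt and participant list.
transcript = (
    "Amina Otieno said the committee meets monthly. "
    "Later Amina added that Joseph collects the fees."
)
redacted, key = pseudonymize(
    transcript, [["Amina Otieno", "Amina"], ["Joseph Mwangi", "Joseph"]]
)
```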

For a deep dive on navigating these requirements, see our GDPR compliance guide for qualitative research and our guide on handling sensitive data in AI-assisted research.

The practical implication: when evaluating AI tools for your practice, GDPR compliance should be a first-pass filter, not an afterthought. This is especially true if you are writing EU project proposals (Horizon Europe, Interreg, LIFE Programme) where data management plans are scrutinized during evaluation.

Budgeting AI Tools into Project Proposals

Here is a practical concern that rarely gets addressed: how do you actually budget for AI-native qualitative analysis tools when writing a project proposal?

For EU-funded projects (Horizon Europe, Interreg, etc.)

AI analysis tools typically fall under "Other goods, works, and services" or "Equipment" cost categories, depending on whether you are licensing SaaS or purchasing infrastructure. Key tips:

- Line-item the tool explicitly. Do not bury it in "miscellaneous." Evaluators want to see that your methodology is thought through. A line item for "AI-assisted qualitative data analysis platform (12-month license)" signals methodological sophistication.

- Justify it in your methodology section. Explain how AI-assisted coding improves consistency across transcripts and how you will validate its output, including checks for bias. Reference the growing body of literature on AI in evaluation methodology.

- Budget for the human hours too. AI tools reduce analysis time, but you still need to budget for researcher time on validation, interpretation, and reporting. A common mistake is over-claiming efficiency gains and under-budgeting the human layer.

- Include it in your Data Management Plan. Describe how the tool handles data, its GDPR compliance posture, and data retention/deletion policies.

For bilateral donor projects (USAID, DFID/FCDO, GIZ, etc.)

Donor procurement rules vary, but the principle is the same: technology costs are eligible when they are directly linked to project activities. Frame AI tools as methodology infrastructure, not overhead.

For private sector and foundation clients

You have more flexibility here. Build the cost into your professional fees or list it as a direct project cost. The key is demonstrating value: "This tool allows me to analyze 40 interviews with the same rigor that would typically require a three-person team."

Pricing reality check

Most AI-native qualitative analysis platforms operate on subscription models ranging from $50-300/month for individual researchers, or project-based pricing for larger datasets. Compare this to:

- Hiring a research assistant for transcription and coding: $2,000-5,000 per project

- Traditional CAQDAS licenses (NVivo, ATLAS.ti): $100-800/year, built around manual coding, with AI features (where offered) added on top

- Your own time doing manual coding: 45-120 hours at your billing rate

The ROI math is straightforward. A $200/month tool that saves you 60 hours per project pays for itself many times over.
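The ROI claim is easy to check with your own numbers. This sketch uses the $200/month fee and 60 saved hours from the text, assumes a two-month subscription to match the eight-week engagement described at the start, and uses a hypothetical $75/hour billing rate.

```python
from dataclasses import dataclass


@dataclass
class ToolROI:
    monthly_fee: float   # subscription price in USD
    months: int          # months the subscription runs for the project
    hours_saved: float   # analyst hours saved, net of time spent validating AI output
    billing_rate: float  # your hourly rate in USD (hypothetical below)

    @property
    def cost(self) -> float:
        return self.monthly_fee * self.months

    @property
    def value_of_time_saved(self) -> float:
        return self.hours_saved * self.billing_rate

    @property
    def break_even_rate(self) -> float:
        """Hourly rate at which the time saved exactly covers the subscription."""
        return self.cost / self.hours_saved


example = ToolROI(monthly_fee=200, months=2, hours_saved=60, billing_rate=75)
```

With these inputs the subscription costs $400 against $4,500 of recovered time, and it breaks even at any billing rate above roughly $6.67 per hour. The estimate is only as good as the `hours_saved` figure, which should be net of the time you spend validating AI output.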

When AI Is Not the Answer: Maintaining Methodological Integrity

Intellectual honesty requires acknowledging limitations. AI-assisted qualitative analysis is not appropriate for every situation:

Highly sensitive populations. When working with conflict-affected communities, survivors of violence, or other vulnerable groups, the ethical calculus around AI processing of their words requires extra scrutiny. Human-only analysis may be more appropriate, or at minimum, additional safeguards are needed.

Novel theoretical frameworks. If you are developing genuinely new theory from your data (pure grounded theory), AI coding trained on existing frameworks may constrain rather than support your analysis. The inductive process requires a kind of intellectual openness that current AI tools can inadvertently narrow.

Very small datasets. If you have 5-8 interviews, the setup time for configuring AI-assisted coding may not save you net time compared to careful manual analysis. The efficiency gains scale with dataset size.

When the donor requires specific methodology. Some evaluation frameworks mandate particular analytical approaches. Check your Terms of Reference before assuming AI-assisted analysis is acceptable.

For situations where you cannot interview real participants at all — whether due to access constraints, security concerns, or budget limitations — it is worth exploring when synthetic participants can supplement real data in impact assessment contexts.

Building Your AI-Augmented Practice

If you are an independent consultant ready to integrate AI tools into your impact assessment work, here is a practical roadmap:

Month 1: Evaluate and select. Try 2-3 platforms with a real (anonymized) dataset from a past project. Evaluate on: coding accuracy, framework flexibility, GDPR compliance, export capabilities, and whether the tool actually fits how you think about qualitative data. Qualz.ai's consulting solution is purpose-built for this use case.

Month 2: Pilot on a live project. Use AI-assisted coding alongside your traditional approach on one project. Compare results. This gives you confidence in the tool and produces a methodological comparison you can reference in future proposals.
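For the pilot comparison, a standard way to quantify agreement between AI-suggested and human coding is Cohen's kappa, which corrects raw percentage agreement for the agreement expected by chance. The sketch below computes it for one code applied (or not) across a set of segments; the labels are hypothetical pilot data. If you already use scikit-learn, `sklearn.metrics.cohen_kappa_score` computes the same statistic.

```python
from collections import Counter
from collections.abc import Hashable, Sequence


def cohens_kappa(rater_a: Sequence[Hashable], rater_b: Sequence[Hashable]) -> float:
    """Chance-corrected agreement between two raters labelling the same items."""
    if len(rater_a) != len(rater_b) or not rater_a:
        raise ValueError("need two non-empty label sequences of equal length")
    n = len(rater_a)
    observed = sum(a == b for a, b in zip(rater_a, rater_b)) / n
    counts_a, counts_b = Counter(rater_a), Counter(rater_b)
    # Agreement expected if each rater labelled at random with their own label frequencies.
    expected = sum(counts_a[label] * counts_b[label] for label in counts_a) / (n * n)
    if expected == 1:  # both raters used one identical label throughout
        return 1.0
    return (observed - expected) / (1 - expected)


# Hypothetical pilot: did the code "maintenance_costs" apply to each of 10 segments?
human = ["yes", "yes", "no", "no", "yes", "no", "no", "no", "yes", "no"]
ai = ["yes", "yes", "no", "yes", "yes", "no", "no", "no", "no", "no"]
kappa = cohens_kappa(human, ai)
```

Here the two coders agree on 8 of 10 segments, but because about half of that agreement would be expected by chance, kappa comes out at roughly 0.58. Computing kappa per code shows which codes the tool applies reliably and which need clearer definitions.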

Month 3: Standardize and scale. Build templates — codebook structures for common evaluation frameworks (OECD-DAC, Theory of Change, Most Significant Change), proposal language for budgeting tools, and data management plan text for GDPR compliance.
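A codebook template can be as simple as a versioned file that every project starts from, which also gives you the persistent codebook that general-purpose chat tools lack. The sketch below assumes a JSON format and hypothetical field names (`definition`, `inclusion`, `exclusion`), and it validates each code against the six OECD-DAC criteria listed earlier. Platforms have their own import formats, so expect to adapt the export.

```python
import json
from dataclasses import asdict, dataclass, field

DAC_CRITERIA = (
    "relevance", "coherence", "effectiveness",
    "efficiency", "impact", "sustainability",
)


@dataclass
class Code:
    name: str
    definition: str
    criterion: str       # must be one of DAC_CRITERIA
    inclusion: str = ""  # when to apply the code
    exclusion: str = ""  # when not to apply it


@dataclass
class Codebook:
    framework: str
    version: str
    codes: list[Code] = field(default_factory=list)

    def add(self, code: Code) -> None:
        """Add a code after checking its criterion and that its name is unique."""
        if code.criterion not in DAC_CRITERIA:
            raise ValueError(f"unknown criterion: {code.criterion!r}")
        if any(c.name == code.name for c in self.codes):
            raise ValueError(f"duplicate code: {code.name!r}")
        self.codes.append(code)

    def to_json(self) -> str:
        return json.dumps(asdict(self), indent=2)


# Hypothetical starting template for a water-point evaluation.
template = Codebook(framework="OECD-DAC", version="1.0")
template.add(Code(
    name="local_ownership",
    definition="Community members describe taking responsibility for the water point.",
    criterion="sustainability",
    inclusion="Mentions of user committees, fee collection, or local repairs.",
    exclusion="Statements only about the implementer's maintenance visits.",
))
```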

Ongoing: Stay current. The field is evolving rapidly, and the capabilities of AI tools in 2026 are fundamentally different from those available in 2024. The consultants who track these developments and integrate them early will have a structural advantage in proposal competitiveness and delivery efficiency.

The Competitive Reality

The international development evaluation market is getting more competitive. Donors are demanding more rigorous mixed-methods evaluations. Commissioning organizations want faster turnaround. And larger consultancies are already integrating AI into their workflows.

Independent consultants have always competed on expertise, relationships, and flexibility. AI tools add a fourth dimension: analytical capacity that punches above your headcount. A solo consultant with the right tools can now deliver the analytical depth that previously required a small team — while maintaining the personalized attention and domain expertise that clients choose independents for in the first place.

The consultants who figure this out first will not just survive the AI transition — they will thrive in it. Those who wait until AI-assisted qualitative analysis is standard practice will find themselves competing on price against consultants who already have the efficiency gains baked in.

The work you do matters. Impact assessment in water management, program evaluation beyond market research, environmental policy, education, health — these are domains where getting the analysis right has real consequences for real communities. AI tools are not about replacing the rigor. They are about giving you the capacity to deliver it — consistently, at scale, as a team of one.

Ready to see how AI-native qualitative analysis works for impact assessment? Explore Qualz.ai — built for consultants who need GDPR-compliant, framework-flexible qualitative analysis that scales with their practice.