The Data That Lives in the Gaps

Every experienced researcher has felt it: that moment in an interview when a participant pauses just a beat too long before answering, changes the subject unprompted, or gives an answer so carefully worded that it says more about what they are hiding than what they are sharing. These silences -- the pauses, deflections, and conspicuous absences -- are not noise. They are signal. And most research teams systematically ignore them.

The problem is structural. Our tools are designed to capture what people say. Transcription software records words. Coding frameworks categorize statements. Qualitative analysis platforms process explicit content. But the most revealing data in a user interview often lives in what is not said -- the feature nobody mentions, the workflow step that gets glossed over, the competitor name that triggers a visible hesitation.

This is not a new observation. Qualitative researchers have written about the significance of silence for decades. What is new is our ability to systematically detect and analyze these gaps at scale, and the growing recognition that teams who ignore silence are making product decisions on incomplete evidence.

Why Participants Go Silent

Silence in user interviews is not random. It follows predictable patterns tied to specific psychological and social dynamics.

Social desirability pressure. Participants want to appear competent, reasonable, and agreeable. When a question touches on a behavior they perceive as embarrassing -- using a workaround instead of the intended feature, ignoring a tool they know they should use, or struggling with something they think should be easy -- they minimize or redirect rather than admit the truth directly. The silence is not about dishonesty. It is about self-presentation.

Organizational loyalty. Enterprise users being interviewed about workplace tools often censor criticism of their employer's technology choices. They will describe frustrations with vague language ("it could be better") rather than specific complaints, because they are aware that their feedback might be traceable. This is especially pronounced in stakeholder interview contexts where participants have political considerations.

Unarticulated needs. Some silences reflect genuine inability to express a need, not reluctance. Users who have adapted to a broken workflow often cannot articulate what is wrong because the workaround has become invisible to them. They do not mention the problem because they no longer perceive it as a problem -- it is just how things work.

Topic avoidance. Participants systematically avoid topics that create cognitive dissonance. A user who chose a tool and is now dissatisfied will avoid discussing the selection process. A team lead who mandated a workflow that is not working will avoid discussing outcomes. The avoided topics are often the most strategically valuable ones for product teams.

Research on moderator bias in AI-assisted interviews suggests that some silence is also moderator-induced. Participants read social cues from interviewers and learn quickly which topics generate interest and which create discomfort. A moderator who subtly signals that they want positive feedback will get silence on negative experiences.

Detecting Silence Systematically

The challenge is moving from anecdotal awareness of silence to systematic detection. The following techniques make that shift practical.

Gap analysis across interviews. After conducting a set of interviews on the same topic, map the themes that each participant covered. The interesting finding is not where they overlap -- it is where individual participants diverge from the group pattern. If eight out of ten participants discuss onboarding but two skip it entirely, that absence is data. Those two participants may have had onboarding experiences so negative they have suppressed the memory, or so unusual that they do not recognize the standard framing.
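Here is a minimal Python sketch of this gap analysis. It assumes each interview has already been coded into a set of theme labels. The function name, the input shape, and the 70% cut-off for what counts as a "group pattern" are illustrative assumptions, not a standard method.

```python
from collections import Counter

def find_conspicuous_absences(coverage, min_share=0.7):
    """Return {theme: [participants who skipped it]} for themes most participants covered.

    coverage: {participant_id: set of themes coded in that interview}
    min_share: assumed fraction of participants needed to call a theme a group pattern
    """
    n = len(coverage)
    counts = Counter(theme for themes in coverage.values() for theme in themes)
    absences = {}
    for theme, count in counts.items():
        # An absence only means something when most of the group did discuss the theme.
        if count / n >= min_share and count < n:
            absences[theme] = sorted(p for p, themes in coverage.items() if theme not in themes)
    return absences

# Hypothetical data: ten participants, eight of whom discussed onboarding.
coverage = {f"P{i:02d}": {"onboarding", "reporting"} for i in range(1, 9)}
coverage["P09"] = {"reporting"}
coverage["P10"] = {"reporting", "billing"}
print(find_conspicuous_absences(coverage))  # {'onboarding': ['P09', 'P10']}
```

The output is a list of people to revisit, not a conclusion. The script cannot tell whether P09 and P10 suppressed a bad experience or simply had an unusual one.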

Temporal pattern analysis. Track how long participants spend on each topic relative to the time you planned for it. If your interview guide allocates equal time to three workflow stages but participants consistently rush through stage two, the speed itself is information. Rushed responses often indicate discomfort, perceived irrelevance, or a topic the participant wants to move past.
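One way to make the pacing comparison concrete is to divide the minutes actually spent on each topic by the minutes the guide planned for it. The sketch below assumes per-topic timings have already been pulled from session recordings. The function name and the 0.5 threshold are arbitrary starting points chosen for illustration.

```python
from statistics import median

def flag_rushed_topics(guide_minutes, observed, threshold=0.5):
    """Flag topics where participants consistently spend much less time than planned.

    guide_minutes: {topic: minutes the interview guide allocates}
    observed: list of {topic: minutes actually spent}, one dict per interview
    threshold: assumed cut-off; a median ratio below it counts as rushed
    """
    flagged = {}
    for topic, planned in guide_minutes.items():
        # A topic missing from an interview's timings counts as zero minutes.
        ratios = [session.get(topic, 0.0) / planned for session in observed]
        typical = median(ratios)
        if typical < threshold:
            flagged[topic] = round(typical, 2)
    return flagged

guide = {"stage 1": 10, "stage 2": 10, "stage 3": 10}
sessions = [
    {"stage 1": 11, "stage 2": 3, "stage 3": 9},
    {"stage 1": 9, "stage 2": 4, "stage 3": 12},
    {"stage 1": 12, "stage 2": 2.5, "stage 3": 10},
]
print(flag_rushed_topics(guide, sessions))  # {'stage 2': 0.3}
```

The sketch uses the median rather than the mean, so one unusually brief session cannot trigger a flag on its own. The rushing has to show up across most interviews.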

Redirect tracking. Note every instance where a participant answers a different question than the one asked. This is distinct from misunderstanding the question -- redirects are purposeful. The participant heard the question, chose not to engage with it, and steered the conversation somewhere safer. The pattern of redirects across multiple interviews reveals the topics that create the most discomfort.
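Suppose each redirect is logged as an (interview, topic) pair during note-taking. Ranking topics by how many different interviews produced a redirect is then a short aggregation. The log format, the function name, and the topic labels below are hypothetical.

```python
from collections import defaultdict

def rank_redirect_topics(redirects):
    """Rank question topics by the number of distinct interviews containing a redirect on them.

    redirects: iterable of (interview_id, topic) pairs, one per logged redirect
    """
    interviews_by_topic = defaultdict(set)
    for interview_id, topic in redirects:
        interviews_by_topic[topic].add(interview_id)
    # Counting distinct interviews keeps one evasive participant from dominating the ranking.
    return sorted(
        ((topic, len(ids)) for topic, ids in interviews_by_topic.items()),
        key=lambda pair: (-pair[1], pair[0]),
    )

log = [
    ("int-01", "vendor selection"),
    ("int-01", "vendor selection"),
    ("int-02", "vendor selection"),
    ("int-02", "pricing"),
    ("int-04", "vendor selection"),
    ("int-05", "pricing"),
    ("int-05", "team adoption"),
]
print(rank_redirect_topics(log))
# [('vendor selection', 3), ('pricing', 2), ('team adoption', 1)]
```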

Probe response analysis. When you notice a potential silence and probe deeper, track the quality of the response. Genuine engagement produces specific, detailed answers. Continued avoidance produces abstract, generic responses. "Oh, that part is fine" is a silence response. "That part works well because the auto-save catches my changes every thirty seconds and I do not have to think about it" is genuine engagement.
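A keyword heuristic can roughly approximate the engaged-versus-avoidant distinction, as in the Python sketch below. The word lists, the 12-word threshold, and the function name are assumptions chosen for illustration. A heuristic this crude only helps decide which answers to reread. It does not replace reading them.

```python
import re

# Assumed, deliberately crude word lists; tune them against your own transcripts.
GENERIC = re.compile(r"\b(fine|okay|ok|good enough|no issues|it works)\b", re.IGNORECASE)
CONCRETE = re.compile(
    r"\b(\d+|one|two|three|five|ten|thirty|seconds?|minutes?|hours?|"
    r"yesterday|last week|because|every)\b",
    re.IGNORECASE,
)

def classify_probe_response(text, min_words=12):
    """Label a follow-up answer as 'engaged' or 'possible avoidance'.

    An answer with no concrete markers (numbers, time references, a causal
    'because') that is either short or leans on generic reassurance is
    treated as possible avoidance.
    """
    concrete_hits = len(CONCRETE.findall(text))
    is_short = len(text.split()) < min_words
    is_generic = GENERIC.search(text) is not None
    if concrete_hits == 0 and (is_short or is_generic):
        return "possible avoidance"
    return "engaged"

print(classify_probe_response("Oh, that part is fine"))  # possible avoidance
print(classify_probe_response(
    "That part works well because the auto-save catches my changes "
    "every thirty seconds and I do not have to think about it"
))  # engaged
```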

Teams using AI-powered analysis can automate some of this detection. Natural language processing can flag interviews where expected topics are absent, where response lengths are unusually short for specific questions, or where hedging language spikes. The technology does not replace researcher judgment but surfaces patterns that manual review misses across large datasets.
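The sketch below illustrates the kind of flagging described above. It is not any particular platform's method. It compares answers to the same guide question across interviews, using a hand-picked hedge lexicon and arbitrary thresholds. An answer counts as short when it falls below half the median length. It counts as hedge-heavy when it contains more than 0.05 hedges per word.

```python
import re
from statistics import median

# Assumed hedge lexicon; a real study would tune it against its own transcripts.
HEDGES = re.compile(
    r"\b(maybe|i guess|sort of|kind of|i suppose|probably|not really|i think)\b",
    re.IGNORECASE,
)

def flag_unusual_answers(answers, short_ratio=0.5, hedge_rate=0.05):
    """Flag interviews whose answer to one guide question is unusually short or hedge-heavy.

    answers: {interview_id: answer text for the same guide question}
    short_ratio: answers shorter than this fraction of the median length are flagged
    hedge_rate: answers with more hedges per word than this are flagged
    """
    lengths = {iid: len(text.split()) for iid, text in answers.items()}
    typical = median(lengths.values())
    flags = {}
    for iid, text in answers.items():
        reasons = []
        if lengths[iid] < short_ratio * typical:
            reasons.append("short")
        if lengths[iid] and len(HEDGES.findall(text)) / lengths[iid] > hedge_rate:
            reasons.append("hedging")
        if reasons:
            flags[iid] = reasons
    return flags

answers = {
    "int-01": "We export the report every Friday, clean it in a spreadsheet, "
              "and send it to finance before the weekly review meeting.",
    "int-02": "Usually I pull the numbers on Monday morning, check them against "
              "the dashboard, and then share a summary with my manager.",
    "int-03": "I guess it is sort of fine, maybe.",
}
print(flag_unusual_answers(answers))  # {'int-03': ['short', 'hedging']}
```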

Decoding What Silence Means for Product Decisions

Detecting silence is only half the challenge. The harder part is interpreting what it means and translating it into actionable product intelligence.

Silence about a feature means the feature is invisible, not unimportant. When users do not mention a capability, product teams often conclude it is not valued. But absence from conversation and absence from workflow are different things. A feature that works so seamlessly it never creates friction will never come up in an interview focused on pain points. Conversely, a feature that users have given up on will also not come up -- they have mentally deleted it from their tool.

The distinction matters enormously for product strategy. The first case means the feature is working and should be maintained. The second means it has failed and should be rethought. Journey mapping with real data can help distinguish between these by overlaying usage analytics with qualitative silence patterns.
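A simple version of that overlay joins interview mention counts with a usage metric from product analytics. In the sketch below, the feature names, the usage figures, and the 30% threshold that separates the two readings are all hypothetical.

```python
def classify_unmentioned_features(mentions, usage_share, active_threshold=0.3):
    """Split features nobody mentioned into 'working silently' and 'possibly abandoned'.

    mentions: {feature: number of interviews in which it came up}
    usage_share: {feature: fraction of active accounts that used it in the period}
    active_threshold: assumed usage level separating the two readings
    """
    result = {}
    for feature, share in usage_share.items():
        if mentions.get(feature, 0) > 0:
            continue  # discussed features are analyzed through what participants said
        result[feature] = "working silently" if share >= active_threshold else "possibly abandoned"
    return result

mentions = {"export": 7, "auto-save": 0, "saved filters": 0}
usage = {"export": 0.55, "auto-save": 0.92, "saved filters": 0.04}
print(classify_unmentioned_features(mentions, usage))
# {'auto-save': 'working silently', 'saved filters': 'possibly abandoned'}
```

A single usage threshold is a blunt instrument. Its job is to suggest which follow-up to run for each feature, not to settle the question.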

Silence clusters reveal organizational dysfunction. When multiple participants from the same organization go silent on the same topic, you have found an organizational nerve. This is gold for enterprise product teams. The silence cluster tells you where the political tensions, failed initiatives, and unresolved conflicts live -- exactly the contexts where your product needs to tread carefully or can provide the most value.
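Finding silence clusters amounts to grouping avoided topics by organization. This sketch assumes each debrief records the topics a participant avoided. The organization names are placeholders, and the two-person minimum for a cluster is an assumption.

```python
from collections import defaultdict

def find_silence_clusters(records, min_participants=2):
    """Return {(organization, topic): [participants]} where several people from one org avoided a topic.

    records: iterable of (participant_id, organization, set_of_avoided_topics)
    min_participants: assumed minimum size for a cluster
    """
    avoiders = defaultdict(list)
    for participant, org, avoided in records:
        for topic in avoided:
            avoiders[(org, topic)].append(participant)
    return {
        key: sorted(people)
        for key, people in avoiders.items()
        if len(people) >= min_participants
    }

records = [
    ("P01", "Acme", {"migration project"}),
    ("P02", "Acme", {"migration project", "pricing"}),
    ("P03", "Acme", set()),
    ("P04", "Globex", {"pricing"}),
]
print(find_silence_clusters(records))  # {('Acme', 'migration project'): ['P01', 'P02']}
```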

Silence evolution over time matters more than single-point silence. In longitudinal research, tracking how silence patterns change across interviews reveals shifting attitudes. A topic that participants discussed openly six months ago but now avoid suggests a negative experience in the interim. A previously avoided topic that participants now engage with suggests resolution or acceptance. Longitudinal qualitative research methods are especially powerful for tracking these shifts.
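Across two interview waves, the shift can be measured as the change in the share of participants who engaged with each topic. The sketch assumes those shares have already been computed per wave. The topics, the figures, and the 0.3 threshold are illustrative.

```python
def compare_waves(earlier, later, min_change=0.3):
    """Compare the share of participants who discussed each topic in two interview waves.

    earlier, later: {topic: fraction of participants who engaged with the topic}
    min_change: assumed size of shift worth a closer look
    """
    shifts = {"newly avoided": [], "newly engaged": []}
    for topic in sorted(set(earlier) | set(later)):
        delta = later.get(topic, 0.0) - earlier.get(topic, 0.0)
        if delta <= -min_change:
            shifts["newly avoided"].append(topic)
        elif delta >= min_change:
            shifts["newly engaged"].append(topic)
    return shifts

wave_1 = {"reporting": 0.9, "integrations": 0.8, "permissions": 0.2}
wave_2 = {"reporting": 0.85, "integrations": 0.3, "permissions": 0.7}
print(compare_waves(wave_1, wave_2))
# {'newly avoided': ['integrations'], 'newly engaged': ['permissions']}
```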

Practical Techniques for Breaking Productive Silence

Not all silence should be broken. Some topics are genuinely irrelevant, and pushing participants to discuss them wastes interview time. But when silence is masking valuable data, these techniques help.

The third-person reframe. Instead of asking "Do you find this difficult?" ask "Some users we have spoken with find this part challenging. What has your experience been?" The third-person framing gives participants permission to share negative experiences without feeling like they are complaining.

The hypothetical future. Instead of asking about current behavior (which triggers self-presentation concerns), ask about future preferences. "If you were designing this from scratch, what would you change?" bypasses the loyalty filter because the participant is not criticizing what exists -- they are imagining what could be.

The specificity probe. When a participant gives a vague positive response ("It is fine"), ask for the last specific time they used the feature. Specific instances are harder to fabricate and often surface the friction that the general response was designed to hide.

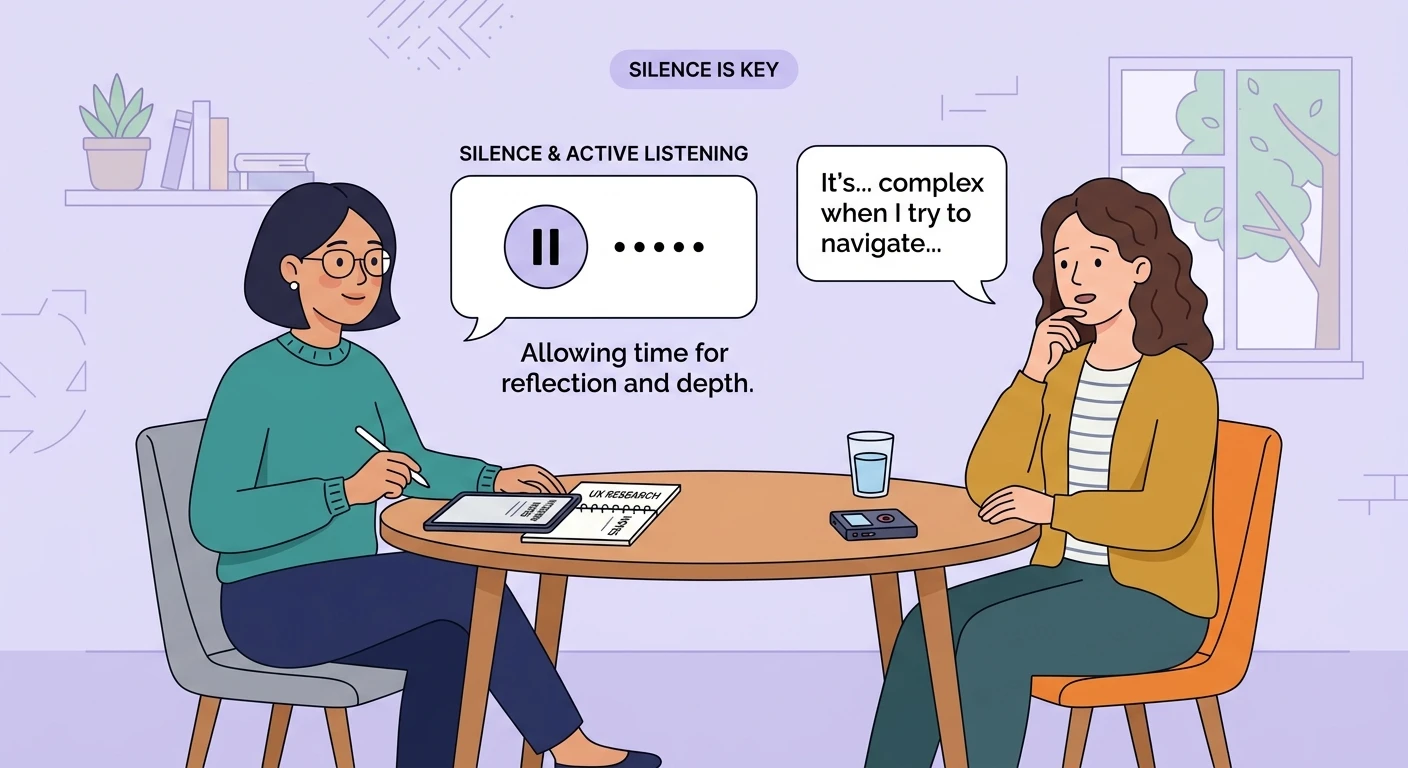

Strategic silence from the interviewer. Sometimes the most effective technique is your own silence. After a participant gives a brief answer, wait. The discomfort of silence is mutual, and participants often fill the gap with the authentic response they initially censored. This requires discipline -- most moderators rush to fill pauses -- but it is remarkably effective.

As the field moves toward asynchronous research methods, the dynamics of silence change. In written async responses, silence manifests as brevity, topic skipping, and delayed responses rather than pauses and redirections. The underlying psychology is the same, but the detection methods need to adapt.
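In async studies, the three signals above can be checked against each participant's own baseline. This sketch assumes each response records its prompt, its text (None when skipped), and the hours the participant took to reply. The `AsyncResponse` type, the function name, and both ratios are hypothetical.

```python
from dataclasses import dataclass
from statistics import median
from typing import Optional

@dataclass
class AsyncResponse:
    prompt: str
    text: Optional[str]    # None (or empty) when the participant skipped the prompt
    hours_to_reply: float  # ignored for skipped prompts

def async_silence_signals(responses, brevity_ratio=0.4, delay_ratio=3.0):
    """Flag skipped, unusually brief, or unusually delayed responses from one participant.

    Brevity and delay are measured against the participant's own medians, so a
    naturally terse writer is not flagged on every prompt. Assumes at least one
    prompt was answered.
    """
    answered = [r for r in responses if r.text]
    typical_words = median(len(r.text.split()) for r in answered)
    typical_delay = median(r.hours_to_reply for r in answered)
    signals = {}
    for r in responses:
        if not r.text:
            signals[r.prompt] = ["skipped"]
            continue
        found = []
        if len(r.text.split()) < brevity_ratio * typical_words:
            found.append("brief")
        if r.hours_to_reply > delay_ratio * typical_delay:
            found.append("delayed")
        if found:
            signals[r.prompt] = found
    return signals

responses = [
    AsyncResponse("daily workflow", "I start by checking the queue, then triage new tickets "
                  "and assign them to the team before standup.", 2.0),
    AsyncResponse("tool selection", "It was fine.", 30.0),
    AsyncResponse("reporting", "Reports go out weekly; I build them from the export and "
                  "add notes for leadership each Friday.", 3.0),
    AsyncResponse("workarounds", None, 0.0),
]
print(async_silence_signals(responses))
# {'tool selection': ['brief', 'delayed'], 'workarounds': ['skipped']}
```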

Building Silence Awareness Into Your Research Practice

The goal is not to become obsessed with what participants do not say -- that way lies overinterpretation and conspiracy thinking. The goal is to treat silence as data that deserves the same systematic attention as explicit statements.

Start by adding a "silence log" to your interview debrief process. After each interview, note: What expected topics were not discussed? Where did the participant seem to rush or redirect? Where did probe responses remain vague despite follow-up? These observations, aggregated across interviews, reveal the hidden landscape of your users' experience that no transcript alone can capture.
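A silence log needs little more than one record per interview, with a field for each debrief question. The sketch below shows one possible structure and a per-topic tally. The class, field, and function names are invented for illustration.

```python
from collections import Counter
from dataclasses import dataclass, field
from typing import Set

@dataclass
class SilenceLogEntry:
    """One debrief record; each set answers one of the three debrief questions."""
    interview_id: str
    topics_not_discussed: Set[str] = field(default_factory=set)
    rushed_or_redirected: Set[str] = field(default_factory=set)
    vague_after_probe: Set[str] = field(default_factory=set)

def aggregate_silence_log(entries):
    """Count, for each kind of silence, how many interviews logged it per topic.

    Because each field is a set, a topic counts at most once per interview.
    """
    totals = {
        "not discussed": Counter(),
        "rushed/redirected": Counter(),
        "vague after probe": Counter(),
    }
    for entry in entries:
        totals["not discussed"].update(entry.topics_not_discussed)
        totals["rushed/redirected"].update(entry.rushed_or_redirected)
        totals["vague after probe"].update(entry.vague_after_probe)
    return totals

log = [
    SilenceLogEntry("int-01", {"onboarding"}, {"permissions"}, {"permissions"}),
    SilenceLogEntry("int-02", set(), {"permissions"}, set()),
    SilenceLogEntry("int-03", {"onboarding"}, set(), {"billing"}),
]
for kind, counts in aggregate_silence_log(log).items():
    print(kind, counts.most_common())
```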

The teams that master this build products that address needs users could not articulate -- not because those needs were complex, but because the evidence was hiding in plain sight, in the spaces between words.