The VoC Paradox: More Data, Less Understanding

Nearly every enterprise has a Voice of Customer program. Most of them are broken.

Not broken in the obvious way -- they collect data, generate reports, fill dashboards. The machinery works. The problem is that the machinery produces the wrong output. NPS tells you whether customers would recommend you. It does not tell you why. CSAT surveys capture a moment in time. They miss the narrative arc of the customer experience. Open-ended feedback fields collect rich qualitative data that nobody has time to analyze, so it sits in a database, unread, while product teams make decisions based on the quantitative crumbs.

This is the VoC paradox: organizations have never had more customer data, yet product leaders consistently say they do not understand their customers well enough to make confident decisions.

The root cause is structural. Traditional VoC programs were designed around quantitative metrics because quantitative data is easy to aggregate, easy to dashboard, and easy to present to executives. Qualitative data -- the interviews, open-ended responses, support transcripts, and contextual observations that reveal the why behind customer behavior -- has always been the harder problem. It requires human analysts, takes weeks to synthesize, and produces findings that resist neat visualization.

Until now.

Why Quantitative VoC Metrics Fail Product Teams

Let me be specific about the failure modes. A product team at a mid-market SaaS company sees their NPS drop from 42 to 35. The dashboard is red. Everyone is alarmed. But what do you actually do with that information?

You can segment it: NPS dropped most among enterprise accounts in the healthcare vertical. Helpful, but still insufficient. You do not know whether the drop is driven by a recent feature change, a competitor launching a better alternative, a shift in customer expectations, or a support experience that went sideways.
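To make the segmentation step concrete, here is a minimal sketch of computing NPS per segment. It uses the standard definition (percent of promoters scoring 9-10 minus percent of detractors scoring 0-6). The segment names and responses are invented for illustration, and `nps_by_segment` is a hypothetical helper, not part of any particular VoC tool.

```python
from collections import defaultdict


def nps(scores):
    """Net Promoter Score: % promoters (9-10) minus % detractors (0-6)."""
    if not scores:
        raise ValueError("need at least one score")
    promoters = sum(1 for s in scores if s >= 9)
    detractors = sum(1 for s in scores if s <= 6)
    return 100 * (promoters - detractors) / len(scores)


def nps_by_segment(responses):
    """Group (segment, score) pairs and compute NPS for each segment."""
    grouped = defaultdict(list)
    for segment, score in responses:
        grouped[segment].append(score)
    return {segment: nps(scores) for segment, scores in grouped.items()}


# Hypothetical survey responses, invented for illustration only.
responses = [
    ("healthcare-enterprise", 3), ("healthcare-enterprise", 6),
    ("healthcare-enterprise", 9), ("healthcare-enterprise", 5),
    ("smb", 10), ("smb", 9), ("smb", 7), ("smb", 4),
]

overall = nps([score for _, score in responses])
segment_scores = nps_by_segment(responses)
print(overall, segment_scores)
```

The output locates where the score dropped. Nothing in it says what happened to those customers, which is the gap the rest of this piece is about.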

So the team sends a follow-up survey. "What could we do better?" The responses trickle in: "Make it faster." "Better reporting." "More integrations." These are symptoms, not diagnoses. They tell you the surface-level complaint but not the underlying workflow breakdown, the unmet need, or the moment of frustration that triggered the response.

This is precisely why research triangulation matters so much -- single data sources, especially quantitative ones, systematically miss the context that makes customer feedback actionable.

The quantitative VoC playbook has three fundamental limitations:

It captures reactions, not reasoning. A 1-5 scale rating tells you the verdict. It does not tell you the deliberation. When a customer rates their onboarding experience a 3, you do not know whether that means "it was fine but forgettable" or "I almost churned but your support team saved me." Those are wildly different situations that require wildly different responses.

It aggregates away the signal. The average of a thousand customer voices is not the voice of the customer. It is a summary statistic masquerading as insight. The most valuable customer feedback is often the outlier -- the power user who found a workflow hack, the churned customer who articulates exactly why they left, the prospect who explains what your competitor does better. Averages bury these signals.
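A small sketch shows how this happens. The two sets of 1-5 ratings below are invented for illustration: one customer base is uniformly lukewarm, the other is split between delighted and furious, and the averages are identical.

```python
from collections import Counter
from statistics import mean

# Hypothetical 1-5 ratings, invented for illustration only.
lukewarm = [3] * 10             # everyone thinks it is fine
polarized = [1] * 5 + [5] * 5   # half love it, half are about to leave


def distribution(ratings):
    """Count how many customers gave each rating."""
    return dict(sorted(Counter(ratings).items()))


lukewarm_mean = mean(lukewarm)
polarized_mean = mean(polarized)

print(lukewarm_mean, distribution(lukewarm))
print(polarized_mean, distribution(polarized))
```

A dashboard showing only the mean cannot distinguish these two situations, even though they call for very different responses.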

It optimizes for measurement, not understanding. VoC programs become self-referential: the goal becomes improving the score rather than understanding the customer. Teams chase NPS improvements by cherry-picking who they survey, when they survey, and how they phrase questions. The metric goes up. Customer understanding does not.

The Qualitative Data Bottleneck

Research leaders have known this for decades. The solution has always been qualitative research -- interviews, ethnographic observation, open-ended analysis. The problem was never awareness. It was scale.

A single skilled researcher can conduct maybe 4-5 in-depth interviews per day. Analyzing those interviews -- transcribing, coding, identifying themes, synthesizing insights -- takes roughly 3x the interview time. So, assuming interviews of about an hour each, a 20-interview study means roughly 20 hours of interviewing plus 60 hours of analysis: about two weeks of a senior researcher's time from start to finished report.
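The arithmetic is easy to check. This sketch assumes one-hour interviews and a 40-hour work week; both are assumptions for illustration, as is the 500-interview study used to show how the cost grows.

```python
def study_hours(num_interviews, interview_hours=1.0, analysis_multiplier=3.0):
    """Researcher hours for a study: interviewing plus ~3x that in analysis.

    interview_hours is an assumed average length, not a measured figure.
    """
    interviewing = num_interviews * interview_hours
    analysis = interviewing * analysis_multiplier
    return interviewing + analysis


HOURS_PER_WEEK = 40  # assumed full-time week

small_study_weeks = study_hours(20) / HOURS_PER_WEEK
large_study_weeks = study_hours(500) / HOURS_PER_WEEK  # hypothetical larger study

print(small_study_weeks, large_study_weeks)
```

Under these assumptions, 20 interviews cost two researcher-weeks and 500 interviews cost fifty. The effort grows linearly with the number of conversations, which is the scaling problem.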

For a company with 10,000 customers across multiple segments, geographies, and use cases, that math does not work. You cannot interview your way to comprehensive customer understanding using traditional methods. So organizations default to surveys and dashboards, accepting the quantitative limitations as the cost of scale.

This is where AI-powered qualitative analysis changes the equation entirely. Not by replacing human judgment -- by removing the bottleneck that prevented qualitative methods from operating at VoC scale.

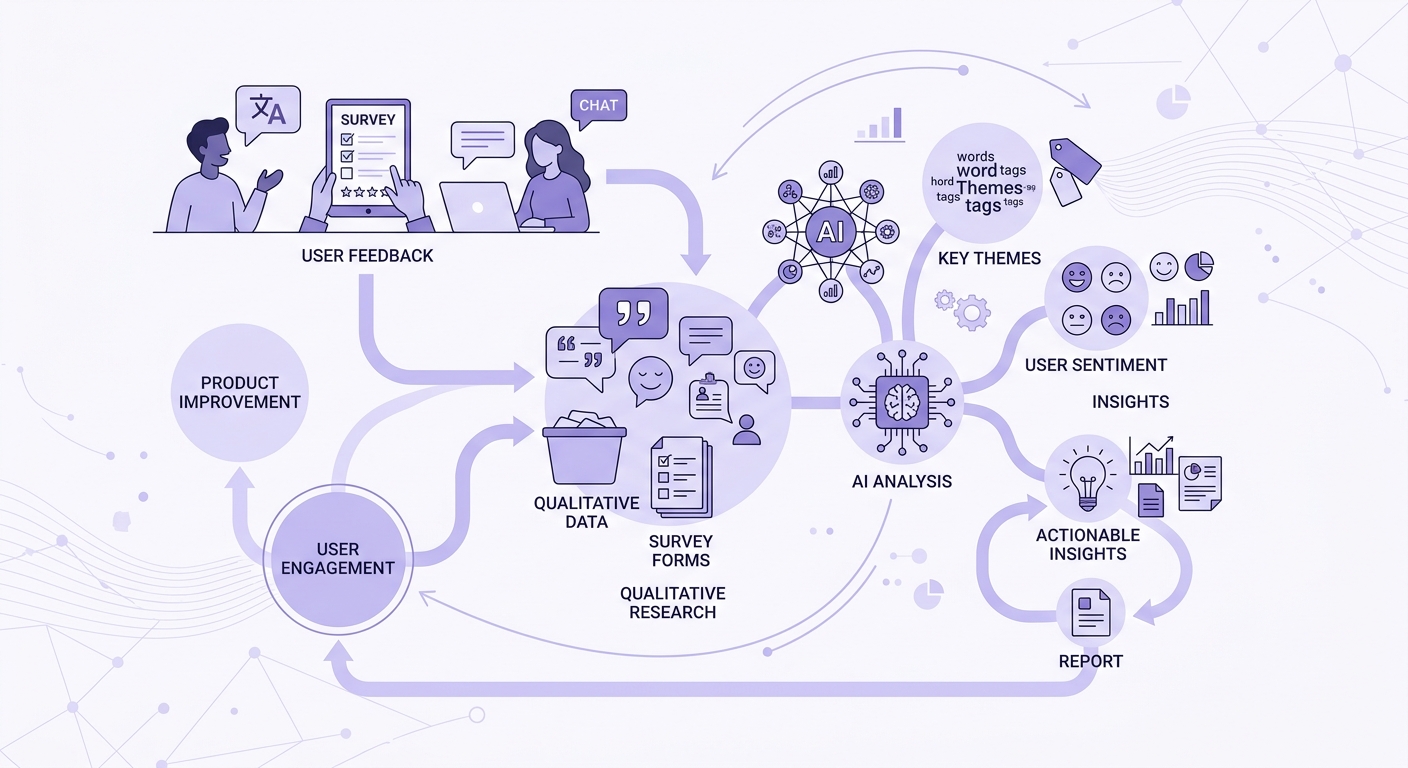

How Qualitative AI Transforms VoC Programs

Qualitative AI is not a single technology. It is a stack of capabilities that, combined, enable what was previously impossible: qualitative depth at quantitative scale.

AI-moderated interviews at scale. Instead of 20 interviews per quarter, imagine running 200 -- or 2,000. AI interviewers can conduct structured, semi-structured, or unstructured interviews with customers asynchronously, at any time, in any language. They follow discussion guides, probe for depth, and adapt to what the participant says. The quality gap between AI-moderated and human-moderated interviews is narrowing fast, especially for structured and semi-structured formats where discussion guide design matters more than interviewer charisma.

Automated thematic analysis. This is where the real leverage is. AI can process hundreds of interview transcripts and identify themes, patterns, and contradictions that would take a human team weeks to surface. Not keyword matching -- genuine thematic analysis that understands context, identifies latent themes, and maps relationships between concepts. The same rigor that a trained qualitative researcher applies to affinity mapping, but at a scale no human team can match.

Continuous qualitative listening. Traditional VoC is episodic: you run a study, get results, wait six months, run another study. Qualitative AI enables continuous discovery -- always-on interviews that feed a living understanding of your customer base. This aligns with the shift from project-based research to continuous discovery that leading product organizations are already making.

Cross-source synthesis. The most powerful VoC insights come from triangulating across data sources: interviews, support tickets, product analytics, sales call transcripts, social media mentions. AI can synthesize across these sources to build a unified qualitative picture that no single source could provide. The resulting insight density makes research repositories far more valuable.

Building the Qualitative-First VoC Stack

If you are redesigning your VoC program around qualitative AI, here is the architecture that works:

Layer 1: Continuous Capture. Run AI-moderated micro-interviews at key customer journey moments -- post-onboarding, post-support interaction, quarterly check-ins, pre-renewal. Keep them short (5-10 minutes) and focused. Volume compensates for brevity.

Layer 2: Real-Time Analysis. Every interview transcript feeds an automated analysis pipeline. Themes are extracted, sentiment is captured with context (not just positive/negative, but the story behind the emotion), and emerging patterns are flagged. This is sentiment analysis that actually preserves the qualitative narrative.

Layer 3: Strategic Synthesis. Weekly or monthly, aggregate the continuous stream into strategic insights. What themes are strengthening? What new concerns are emerging? How do the qualitative findings explain the quantitative trends? This is where stakeholder interview synthesis techniques apply at VoC scale.

Layer 4: Action Integration. Route insights to the teams that need them. Product teams get feature-level findings. CX teams get service recovery opportunities. Marketing gets messaging validation. Executive teams get the strategic narrative. The insights must flow to decisions, or the program is just expensive documentation.
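As a rough sketch of how the four layers might hand off to each other, consider the data shape below. It assumes the analysis step (Layer 2) has already tagged each interview with a theme and a category. The `Insight` record, the `ROUTES` mapping, and the sample quotes are all hypothetical and invented for illustration, not a reference to any specific product.

```python
from collections import Counter, defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Insight:
    journey_moment: str  # Layer 1: where the micro-interview happened
    theme: str           # Layer 2: theme assigned by the analysis step
    category: str        # Layer 2: kind of finding, used for routing
    quote: str           # supporting customer quote, kept for context


# Hypothetical category-to-team mapping; adjust to your own organization.
ROUTES = {
    "feature": "product",
    "service_recovery": "cx",
    "messaging": "marketing",
}


def synthesize(insights):
    """Layer 3: count how often each theme appears in the period."""
    return Counter(insight.theme for insight in insights)


def route(insights):
    """Layer 4: group insights by the team that should act on them."""
    queues = defaultdict(list)
    for insight in insights:
        team = ROUTES.get(insight.category, "unrouted")
        queues[team].append(insight)
    return dict(queues)


# Invented sample data for illustration only.
insights = [
    Insight("post-onboarding", "setup confusion", "feature",
            "I could not find the import step."),
    Insight("post-support", "slow escalation", "service_recovery",
            "It took three days to reach someone."),
    Insight("pre-renewal", "setup confusion", "feature",
            "New teammates still struggle to get started."),
]

theme_counts = synthesize(insights)
queues = route(insights)
print(theme_counts.most_common(1), sorted(queues))
```

Keeping the quote attached to every record is a deliberate choice in this sketch: the theme count tells a team what is strengthening, and the quote preserves the story behind it.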

Measuring Qualitative VoC Impact

The irony of qualitative VoC is that you still need to measure its impact quantitatively. Here are the metrics that matter:

Insight-to-action cycle time. How long from customer statement to product decision? Traditional VoC: 3-6 months. Qualitative AI-powered VoC: 1-2 weeks. This cycle time is the single most important metric to track.

Decision confidence. Survey product leaders before and after implementing qualitative VoC. Do they feel more confident in their roadmap decisions? Less reliant on gut instinct? This is subjective but meaningful.

Prediction accuracy. Qualitative VoC should help teams anticipate churn, identify expansion opportunities, and predict feature adoption. Track whether teams using qualitative insights make better predictions than those relying on quantitative dashboards alone. The evidence that qualitative exit interviews predict churn better than any survey score is already compelling.

Coverage breadth. What percentage of your customer segments have you heard from qualitatively in the last 90 days? Traditional programs struggle to get above 5 percent. AI-powered programs can realistically reach 20-40 percent.
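Two of these metrics are simple enough to compute directly. The sketch below assumes you log the date a customer made a statement, the date a decision was taken, and the segment and date of each interview. The function names, segments, and dates are hypothetical and chosen only for illustration.

```python
from datetime import date


def cycle_time_days(stated_on, decided_on):
    """Insight-to-action cycle time: days from customer statement to decision."""
    return (decided_on - stated_on).days


def coverage_breadth(all_segments, interviews, today, window_days=90):
    """Percent of segments with at least one interview in the last window_days."""
    heard = {
        segment
        for segment, interviewed_on in interviews
        if segment in all_segments
        and 0 <= (today - interviewed_on).days <= window_days
    }
    return 100 * len(heard) / len(all_segments)


# Invented sample data for illustration only.
segments = {"healthcare", "fintech", "retail", "education", "logistics"}
interviews = [
    ("healthcare", date(2025, 6, 1)),
    ("fintech", date(2025, 4, 15)),
    ("retail", date(2025, 1, 10)),  # falls outside the 90-day window
]
today = date(2025, 6, 30)

days = cycle_time_days(date(2025, 6, 2), date(2025, 6, 12))
coverage = coverage_breadth(segments, interviews, today)
print(days, coverage)
```

Decision confidence and prediction accuracy need survey and outcome data, so they are left out of this sketch.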

The Strategic Imperative

Organizations that figure out qualitative AI for VoC will have an unfair advantage. Not because the technology is secret -- it is not -- but because most organizations will resist the shift. They have invested millions in quantitative VoC infrastructure. Their executive dashboards are built around NPS and CSAT. Their incentive structures reward score improvements, not customer understanding.

The organizations that win will be the ones that recognize the difference between measuring customer sentiment and understanding customer experience. Surveys measure. Qualitative AI understands.

The transition does not require scrapping your existing VoC program. It requires layering qualitative depth on top of quantitative breadth, then gradually shifting resource allocation as the qualitative insights prove their value.

Start with one high-stakes segment: your most valuable customers, your highest-churn cohort, or your newest user segment. Run AI-moderated interviews alongside your existing surveys. Compare the quality and actionability of insights from each approach. Let the results make the case for broader adoption.

The VoC program of 2027 will not choose between quantitative and qualitative. It will use AI to make qualitative research as scalable as surveys, finally delivering on the promise that VoC programs have been making for two decades: actually understanding the voice of your customer.